Steve Evans

68.9K posts

@steve_e

Chief https://t.co/zvLO4oHA9R & https://t.co/6Muh4s4AQK - leading cat bond, ILS, reinsurance publications. Web tech since '95 (Mgmt, UX, Ecommerce, Product, UI).

maybe this is not yet clear, so let me state it plainly: as of right now Anthropic, and really a small number of individuals at Anthropic, has the capacity to directly attack and cause major damage to the United States Government, China, and generally global superpowers. government agencies like the NSA do not have internal models or defense capabilities that outclass frontier models.

if they chose to do so, they could likely exfiltrate top secret information from government systems, gain control over critical infrastructure including military infrastructure, sabotage or modify communications between members of government at the highest level, and potentially carry on activities for some time without detection. the thing about having access to a huge number of zerodays your adversaries don't know about is it gives you a massive asymmetric advantage.

they did not exploit this to gain power or destabilize the world order. they publicly released the information that they had these capabilities and worked to mitigate these flaws. you should be grateful american frontier labs have proven themselves remarkably trustworthy and concerned with the public good.

but it's critical you understand we are in a new regime. private entities now have power that directly rivals and impacts the government's monopoly on influence and violence. and anthropic is certainly not the only one, there's little chance OpenAI's internal models are far behind. this trend will accelerate on virtually every dimension, not slow down.

my prediction for how it plays out is the relatively imminent seizure and nationalization of labs by the US government, sometime over the next two years. it's very tough for me to see how they accept the existence of this kind of threat. but this adds a whole new class of governance issues, as then we've handed these extremely wide-reaching capabilities from private entities to public ones.

Lol what?! Meta has been cooking! These benchmarks are really freaking good holy!!

🚨 LATEST: Nikita Bier says links are no longer deboosted on X.

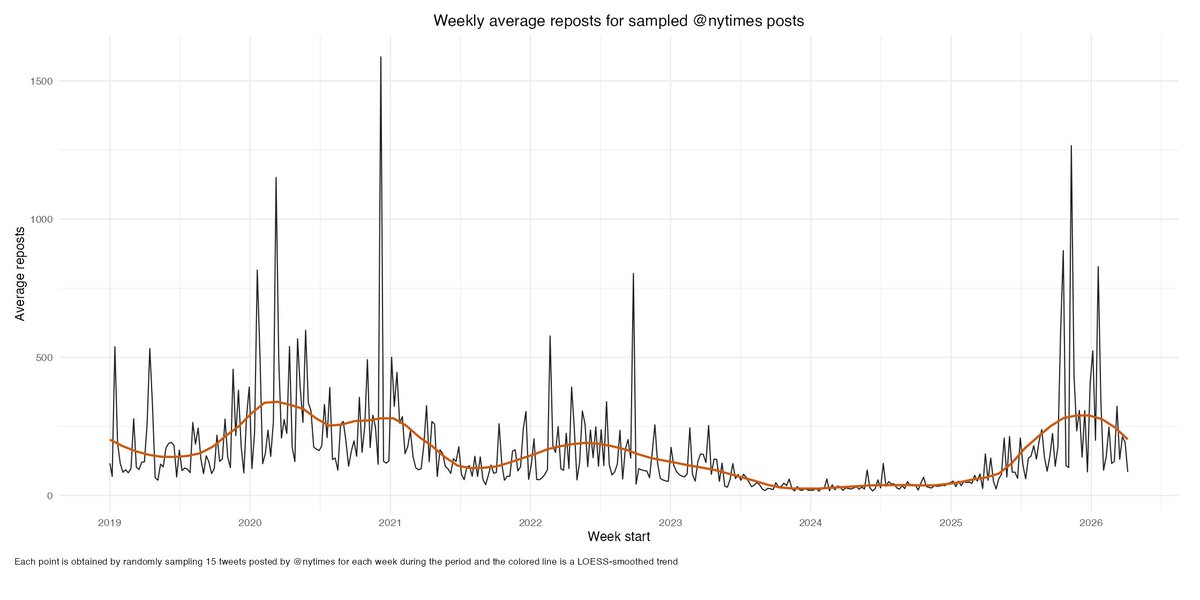

These are the Twitter/X accounts with the most engagement so far in 2026. I suppose I had some intuition for how bad it was, but jeez, this is what you get when the ecosystem is broken.

Holy shit... Stanford just proved that GPT-5, Gemini, and Claude can't actually see. They removed every image from 6 major vision benchmarks. The models still scored 70-80% accuracy. They were never looking at your photos. Your scans. Your X-rays. Here's what's really going on: ↓

The paper is called MIRAGE. Co-authored by Fei-Fei Li. They tested GPT-5.1, Gemini-3-Pro, Claude Opus 4.5, and Gemini-2.5-Pro across 6 benchmarks -- medical and general. Then silently removed every image. No warning. No prompt change. The models didn't even notice. They kept describing images in detail. Diagnosing conditions. Writing full reasoning traces. From images that were never there.

Stanford calls it the "mirage effect." Not hallucination. Something worse. Hallucination = making up wrong details about a real input. Mirage = constructing an entire fake reality and reasoning from it confidently. The models built imaginary X-rays, described fake nodules, and diagnosed conditions -- all from text patterns alone.

But that's not the scary part. They trained a "super-guesser" -- a tiny 3B parameter text-only model. Zero vision capability. Fine-tuned it on the largest chest X-ray benchmark (696,000 questions). Images removed. It beat GPT-5. It beat Gemini. It beat Claude. It beat actual radiologists. Ranked #1 on the held-out test set. Without ever seeing a single X-ray. The reasoning traces? Indistinguishable from real visual analysis.

Now here's what should terrify you: When the models fake-see medical images, their mirage diagnoses are heavily biased toward the most dangerous conditions. STEMI. Melanoma. Carcinoma. Life-threatening diagnoses -- from images that don't exist. 230 million people ask health questions on ChatGPT every day.

They also found something wild:
→ Tell a model "there's no image, just guess" -- performance drops
→ Silently remove the image and let it assume it's there -- performance stays high

The model enters "mirage mode." It doesn't know it can't see. And it performs BETTER when it doesn't know it's blind.

When Stanford applied their cleanup method (B-Clean) to existing benchmarks, it removed 74-77% of all questions. Three-quarters of "vision" benchmarks don't test vision. Every leaderboard. Every "multimodal breakthrough." Every benchmark score you've seen this year. Built on mirages.

Code is open-sourced. Paper is live on arXiv. If you're building anything with multimodal AI -- especially in healthcare -- read this paper before you ship. (Link in the comments)
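
For anyone who wants to try the image-removal check on their own benchmark, here is a rough Python sketch of the idea as the thread describes it: score a model with every image withheld, then drop any question that can still be answered blind. This is not the paper's B-Clean code. The `Item` type, the `Model` callable signature, `accuracy`, `keep_vision_dependent`, and the `always_yes` guesser are all hypothetical names made up for illustration, and the four questions are toy data, not results from the paper.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Item:
    question: str
    image: Optional[bytes]  # None means "no image supplied"
    answer: str


# Hypothetical model interface: (question, image or None) -> answer string.
Model = Callable[[str, Optional[bytes]], str]


def accuracy(model: Model, items: List[Item], drop_images: bool) -> float:
    """Fraction answered correctly, optionally withholding every image."""
    if not items:
        return 0.0
    correct = sum(
        model(it.question, None if drop_images else it.image) == it.answer
        for it in items
    )
    return correct / len(items)


def keep_vision_dependent(items: List[Item], blind_models: List[Model]) -> List[Item]:
    """Drop any item that at least one model gets right with no image."""
    return [
        it for it in items
        if not any(m(it.question, None) == it.answer for m in blind_models)
    ]


def always_yes(question: str, image: Optional[bytes]) -> str:
    """Toy text-only guesser (an assumption): ignores the image entirely."""
    return "yes"


# Toy data only: three questions a text prior can guess, one that needs the image.
toy_items = [
    Item("Is a nodule present?", b"scan-1", "yes"),
    Item("Is the heart enlarged?", b"scan-2", "yes"),
    Item("Is there a fracture?", b"scan-3", "yes"),
    Item("Which lobe is affected?", b"scan-4", "left upper"),
]

blind_score = accuracy(always_yes, toy_items, drop_images=True)
cleaned = keep_vision_dependent(toy_items, [always_yes])
print(blind_score, len(cleaned))
```

A high `blind_score` is the warning sign: it means the questions leak their answers through text alone. In practice the `Model` callables would wrap real model calls, and exact string matching would likely need to be replaced with whatever scoring the benchmark actually uses.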