Zichen Liu


Advanced Machine Intelligence (AMI) is building a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe. We’ve raised a $1.03B (~€890M) round from global investors who believe in our vision of universally intelligent systems centered on world models. This round is co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions, along with other investors and angels across the world. We are a growing team of researchers and builders, operating in Paris, New York, Montreal and Singapore from day one. Read more: amilabs.xyz AMI - Real world. Real intelligence.

Finally finished! If you're interested in an overview of recent methods in reinforcement learning for reasoning LLMs, check out this blog post: aweers.de/blog/2026/rl-f… It summarizes ten methods, tries to highlight differences and trends, and has a collection of open problems

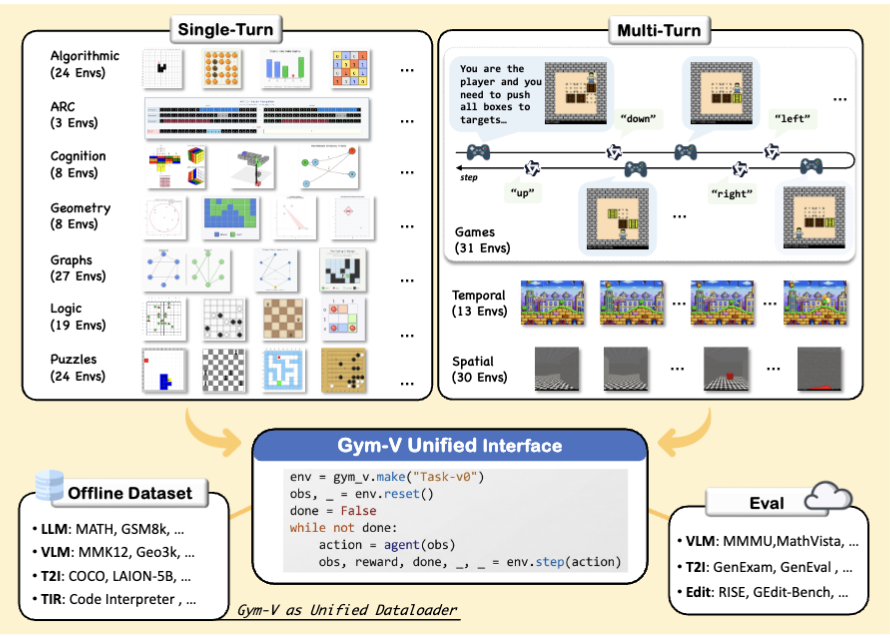

GEM ❤️ Tinker

GEM, an environment suite with a unified interface, works perfectly with Tinker, the API by @thinkymachines that handles the heavy lifting of distributed training. In our latest release of GEM, we

1. supported Tinker and 5 more RL training frameworks
2. reproduced deepseek-r1 length increasing with LoRA
3. benchmarked PPO, GRPO, REINFORCE and showed their tradeoffs
4. added Terminal, MCP, visual and multi-agent environments
…

Open the thread for a deep dive!

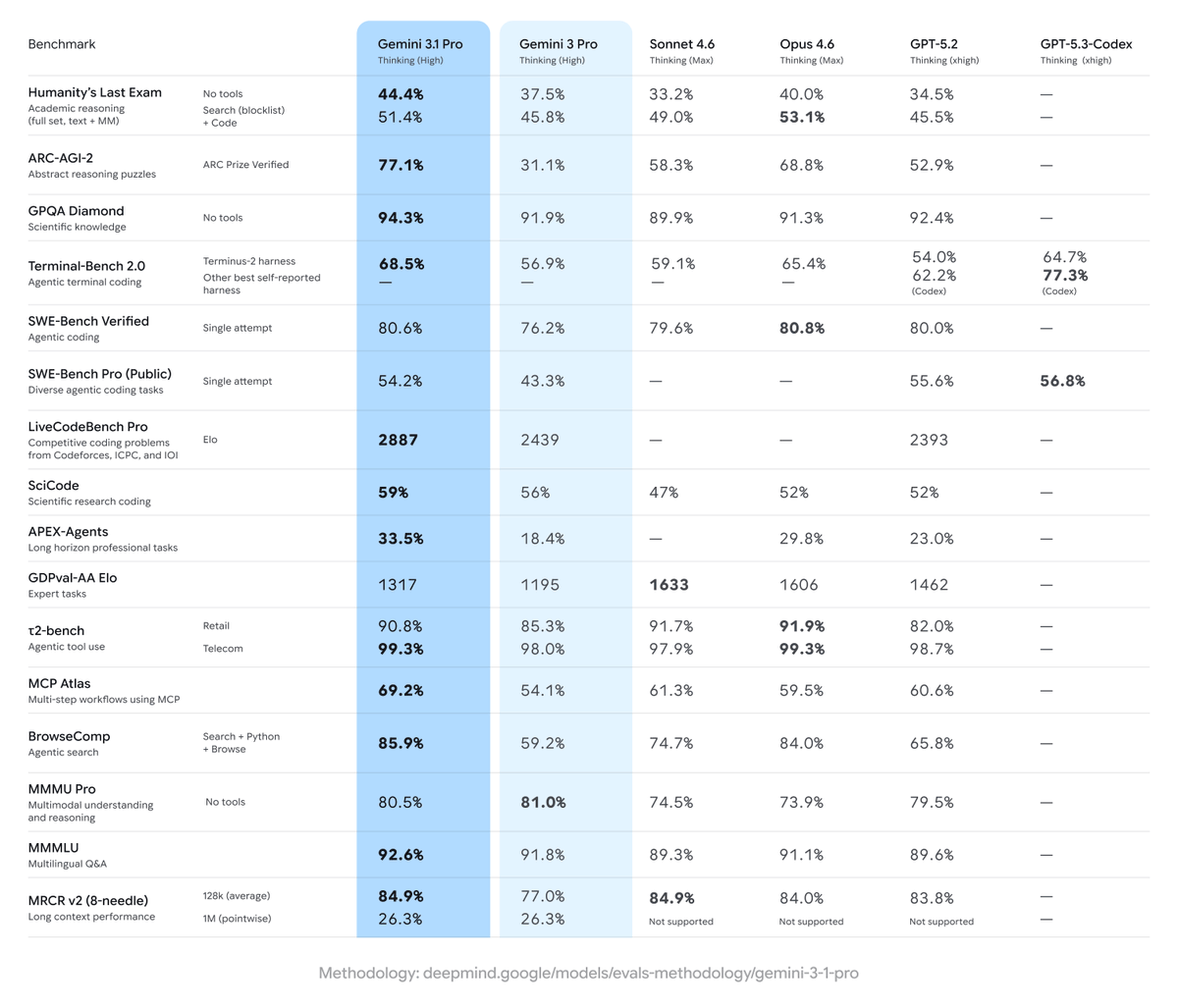

Life update: I’ve joined @GoogleDeepMind as a research scientist to work on ✨gemini scaling and RL, under the leadership of Yi Tay (@YiTayML) and Quoc Le (@quocleix). I feel extremely fortunate to be on the critical path towards AGI and can't wait to help push the frontier of gemini capabilities! 🚀
