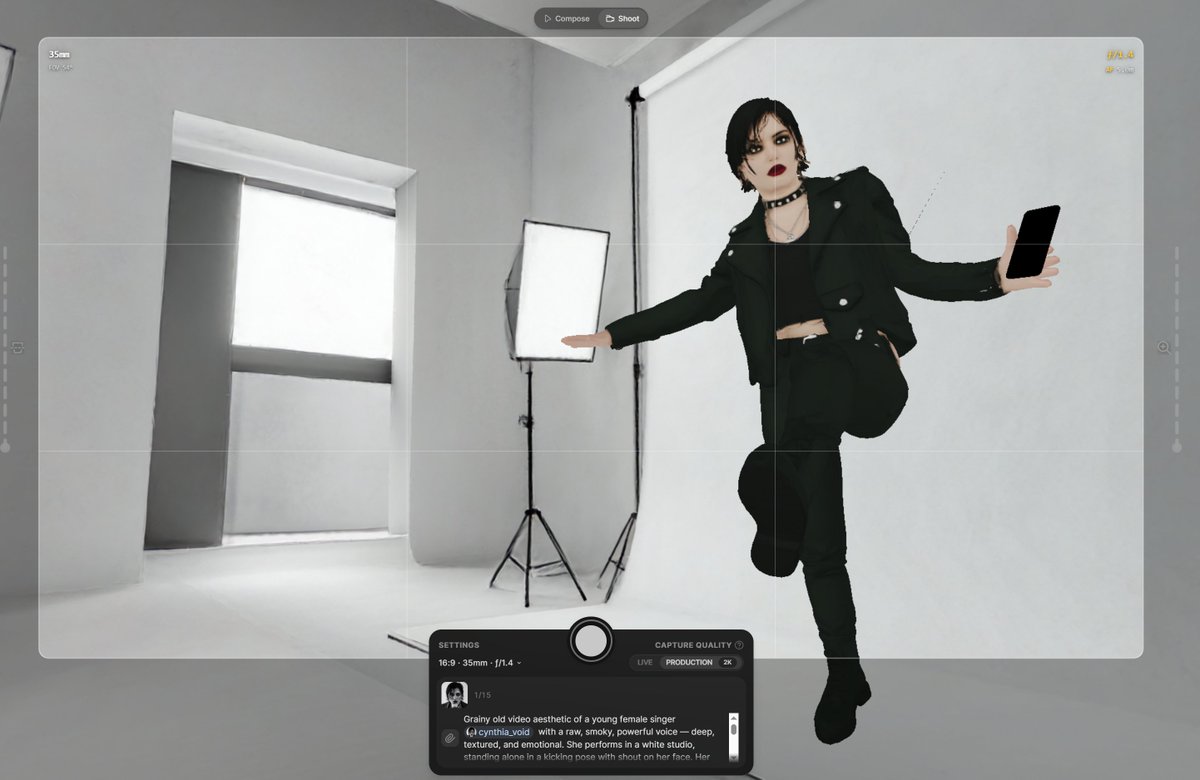

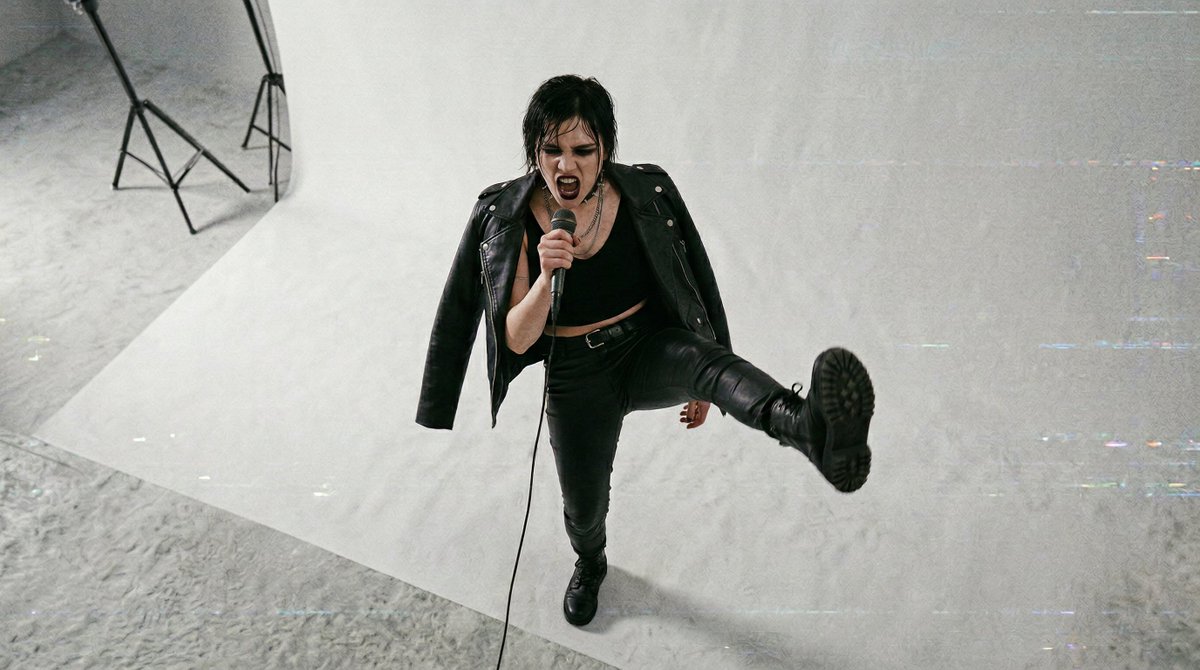

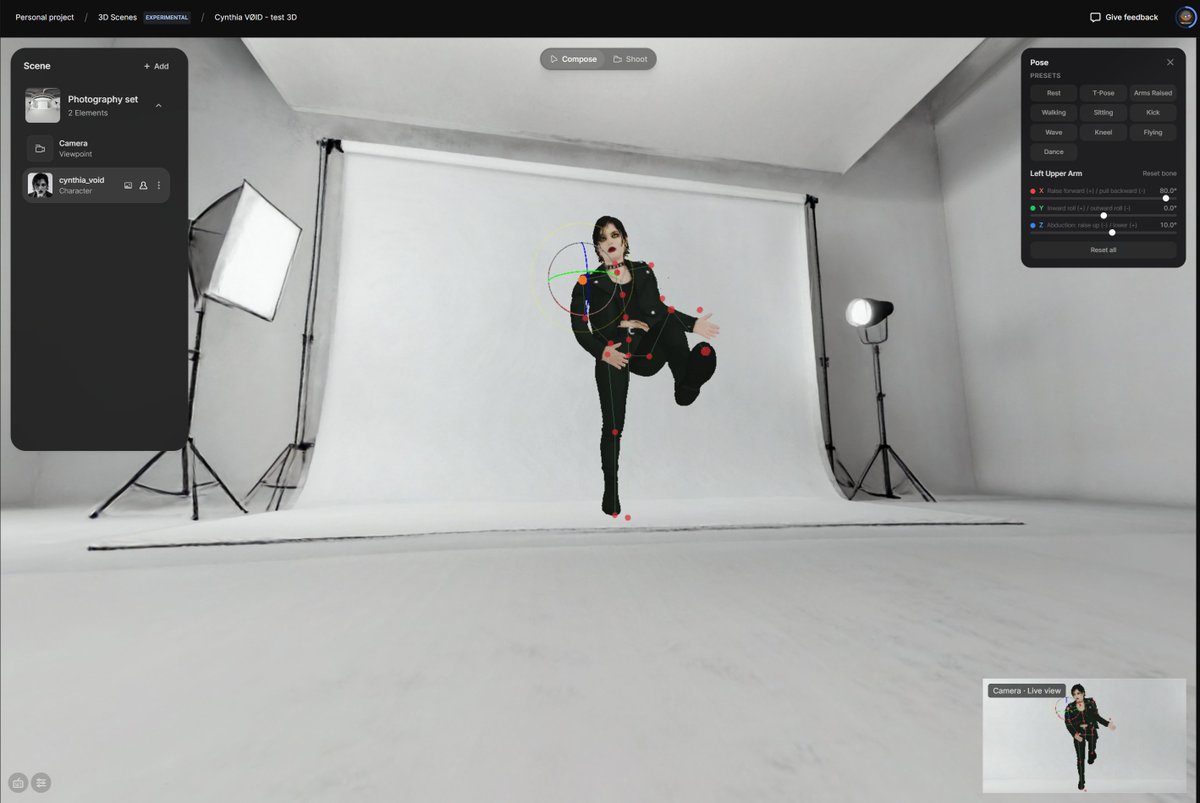

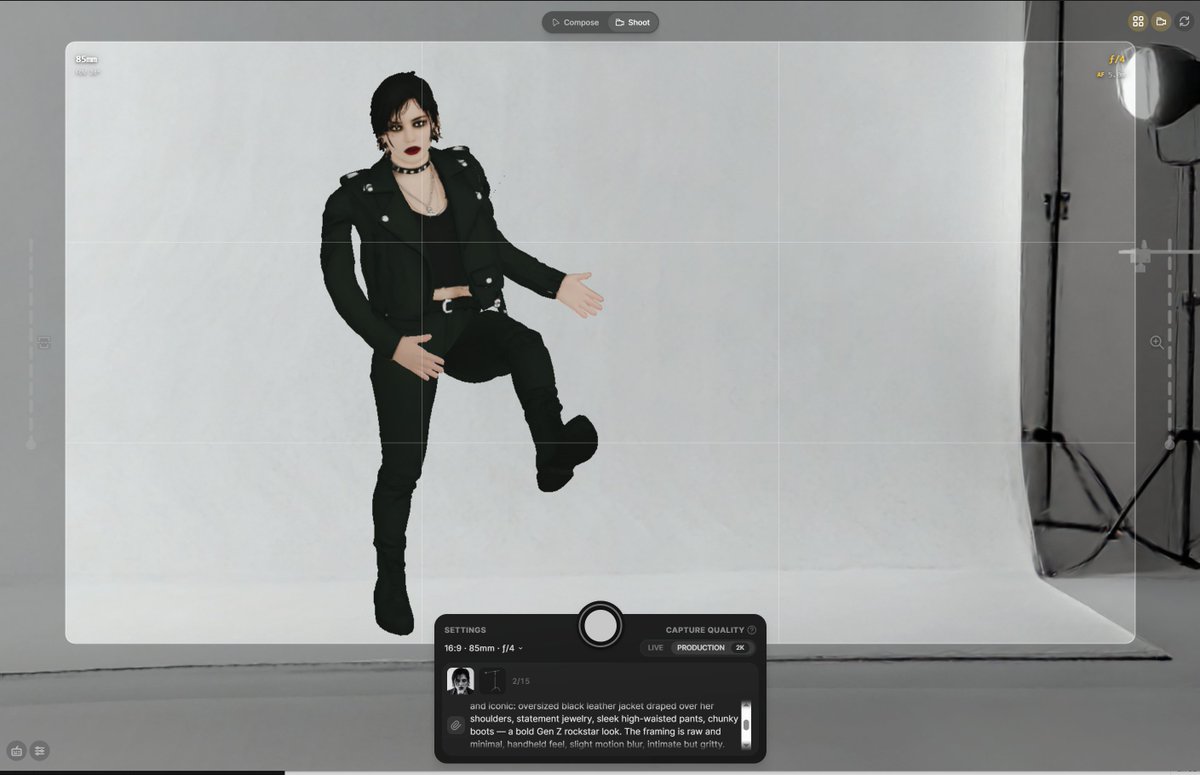

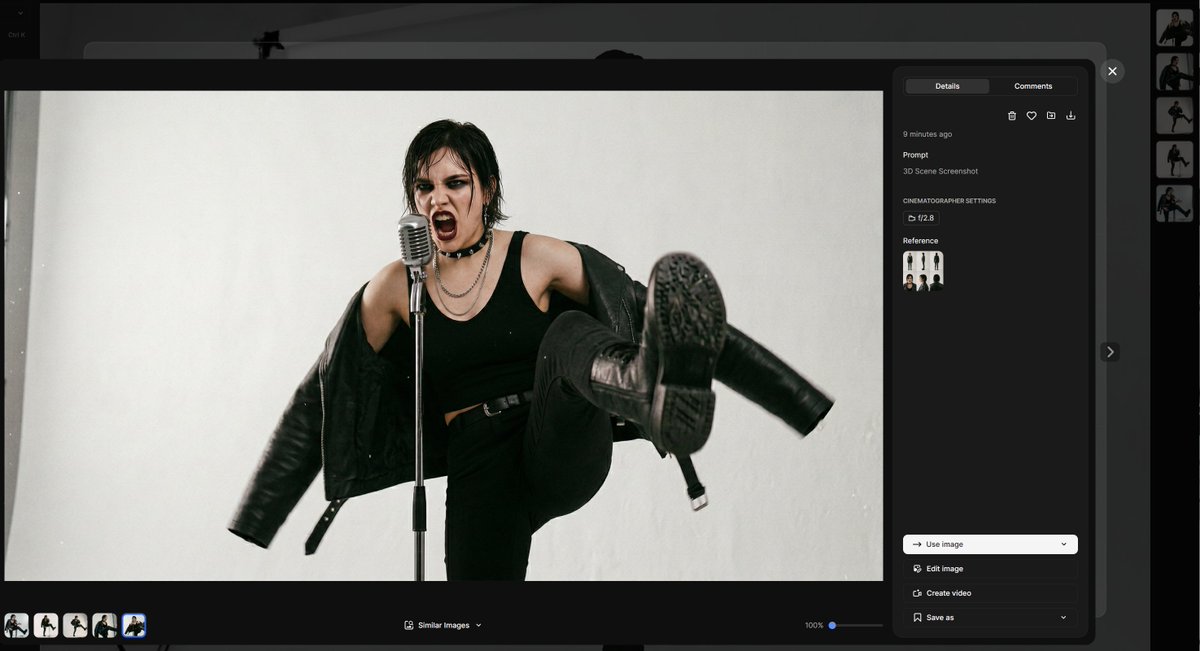

Seedance 2.0 - first generation. The first scene uses a 3x3 grid as its starting frame. The following scenes follow the script in order, each with its own duration and transition, and each one pulls its selected cell from the grid, so a single grid image replaces 9 separate reference images. 2/5
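To make the grid indexing concrete, here is a minimal sketch of how a cell number could map to a region of the 3x3 starting frame. It assumes equal-sized cells numbered 1 to 9 in row-major order (left to right, top to bottom); the function name `grid_cell_box` and that numbering are illustrative assumptions, not something Seedance documents or exposes.

```python
def grid_cell_box(index, width, height, rows=3, cols=3):
    """Return the (left, top, right, bottom) pixel box of one grid cell.

    Hypothetical helper: assumes equal-sized cells and a 1-based,
    row-major cell index (1 = top-left, 9 = bottom-right for a 3x3 grid).
    """
    if not 1 <= index <= rows * cols:
        raise ValueError(f"index must be between 1 and {rows * cols}")

    # Convert the 1-based index into zero-based row and column positions.
    row, col = divmod(index - 1, cols)

    # Integer division keeps the boxes aligned to whole pixels and makes
    # the last column/row end exactly at the image edge.
    left = col * width // cols
    top = row * height // rows
    right = (col + 1) * width // cols
    bottom = (row + 1) * height // rows
    return (left, top, right, bottom)


if __name__ == "__main__":
    # Print the box for every cell of a 1920x1080 grid frame.
    for cell in range(1, 10):
        print(cell, grid_cell_box(cell, 1920, 1080))
```

The returned tuple uses the same (left, upper, right, lower) order that Pillow's `Image.crop` accepts, so it could be used to cut a cell out of the grid image when checking a frame by hand.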