Max

NVIDIA's new open source model is now free on OpenCode Zen.
Nemotron 3 Super is a mid sized model that is
- fast
- fully open source
- 1M context

This is the most impressive plot I've seen all year:
- Scaling RL not only works, but can be predicted from experiments run with 1/2 the target compute
- PipelineRL crushes conventional RL pipelines in terms of compute efficiency
- Many small details matter for stability & scaling, notably CISPO for the loss, FP32 for the LM logits (generator <> trainer mismatch), and filtering zero-variance prompts

Really big kudos to Meta and the authors for burning a bonfire of silicon to uncover these valuable scaling laws 🔥
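
One of the details named in that post, filtering zero-variance prompts, can be sketched roughly as follows. This is a minimal illustration and not the authors' implementation. It assumes a group-based RL setup in which several completions are sampled per prompt and advantages are computed relative to the group's mean reward, so a prompt whose completions all score the same carries no learning signal. The function name `filter_zero_variance`, the dict layout, and the `eps` threshold are hypothetical.

```python
from statistics import pvariance


def filter_zero_variance(rewards_by_prompt, eps=1e-8):
    """Keep only prompts whose sampled completions received differing rewards.

    rewards_by_prompt: dict mapping a prompt id to the list of scalar rewards
    for its sampled completions (hypothetical layout, for illustration only).
    """
    return {
        prompt: rewards
        for prompt, rewards in rewards_by_prompt.items()
        # With identical rewards, every group-relative advantage is zero.
        if len(rewards) > 1 and pvariance(rewards) > eps
    }


rewards_by_prompt = {
    "prompt_a": [1.0, 1.0, 1.0, 1.0],  # all correct: no signal
    "prompt_b": [0.0, 1.0, 0.0, 1.0],  # mixed outcomes: kept
    "prompt_c": [0.0, 0.0, 0.0, 0.0],  # all wrong: no signal
}
kept = filter_zero_variance(rewards_by_prompt)
print(sorted(kept))
```

Dropping such prompts before the update avoids spending a batch slot on samples that would contribute a zero gradient under this assumed setup.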

🚨 We’ve just published a recipe to train a frontier-level deep research agent using RL. With just 30 hours on an H200, any developer can now beat Sonnet-4 on DeepResearch Bench using open-source tools. (Thread 🧵)

The Kimi team just trained a state-of-the-art open source model (32B active parameters / 1T total) with 0 training instabilities, thanks to MuonClip. This is amazing

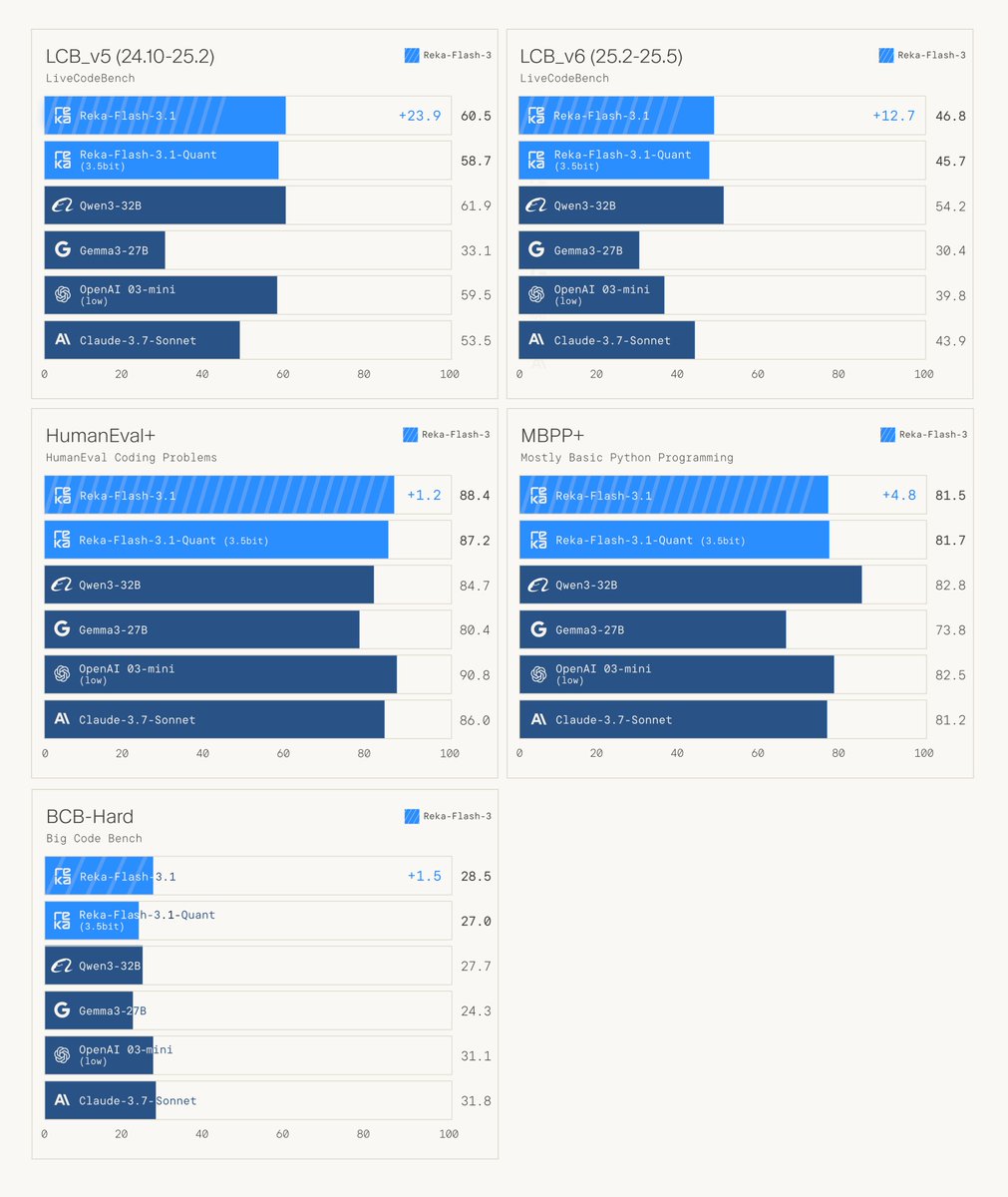

Well done @RekaAILabs huggingface.co/RekaAI/reka-fl…

📢 We are open sourcing ⚡Reka Flash 3.1⚡ and 🗜️Reka Quant🗜️. Reka Flash 3.1 is a much improved version of Reka Flash 3 that stands out on coding due to significant advances in our RL stack. 👩‍💻👨‍💻 Reka Quant is our state-of-the-art quantization technology. It achieves near-lossless compression of Reka Flash 3.1 to 3.5 bits. 💻

🚀 Meet Reka Research: agentic AI that 🤔 thinks → 🔎 searches → ✏️ cites across the open web and private docs to answer your questions. 🥇 State-of-the-art performance, available now via our API and Playground!