Sam Gijsen

69 posts

@SamCJG

ML @ Tübingen AI Center, Hertie AI

Announcing Talkie: a new, open-weight historical LLM! We trained and finetuned a 13B model on a newly-curated dataset of only pre-1930 data. Try it below! with @AlecRad and @status_effects 🧵

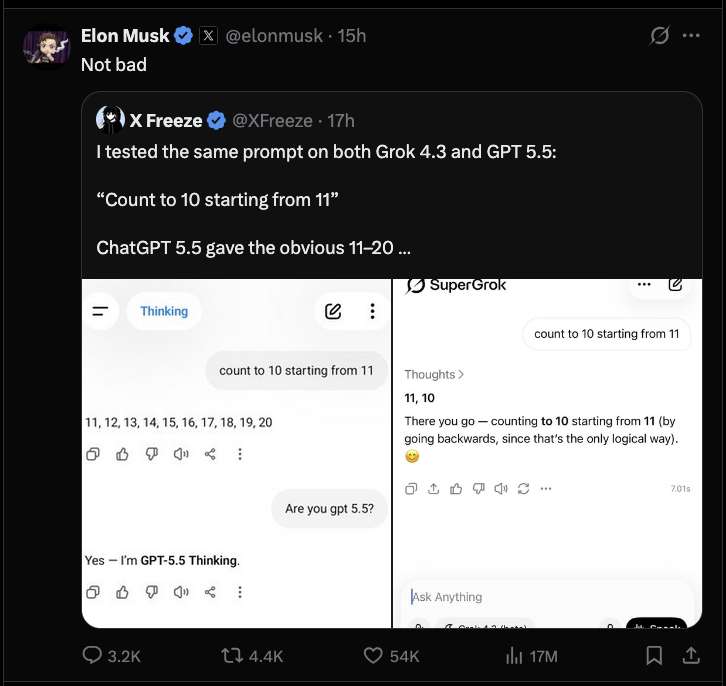

Not bad

if you’re still using codex after opus 4.7 release. ngmi

Are all videos worth the same number of tokens? Whether rich in motion or visually minimal, standard 3D-grid tokenizers treat them equally. We present VideoFlexTok, which represents videos using a flexible-length, coarse-to-fine sequence of tokens. Page: videoflextok.epfl.ch Demo: huggingface.co/spaces/EPFL-VI… Paper: arxiv.org/abs/2604.12887 1/n

Quantum-Safe Bitcoin Transactions Without Softforks github.com/avihu28/Quantu…

This is terrifying. @AnthropicAI's new unreleased Mythos model is so good at hacking, it found bugs in "every major operating system and web browser." 83.1% were exploited on first attempt. This thing is like COVID but for software. Actually apocalyptic in the wrong hands.

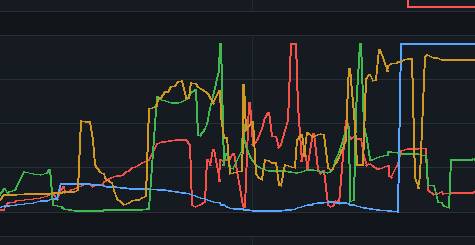

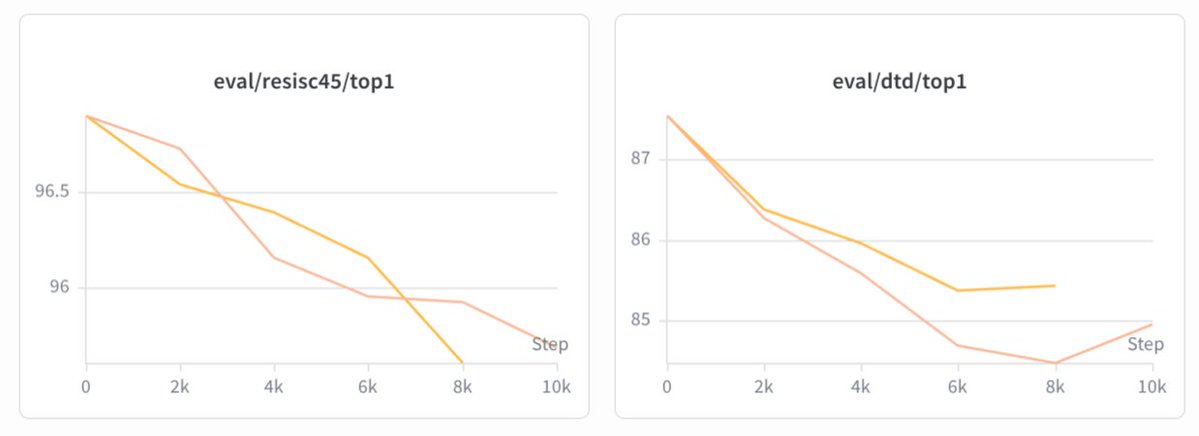

With Codex there is quite the gulf in load between peak and off-peak times, and we would like to achieve a smoother traffic pattern, as that would be a more optimal use of our compute. We have ideas, but we're curious what you all think we should do. Would more usage during off-peak and a surge multiplier during peak times make sense?