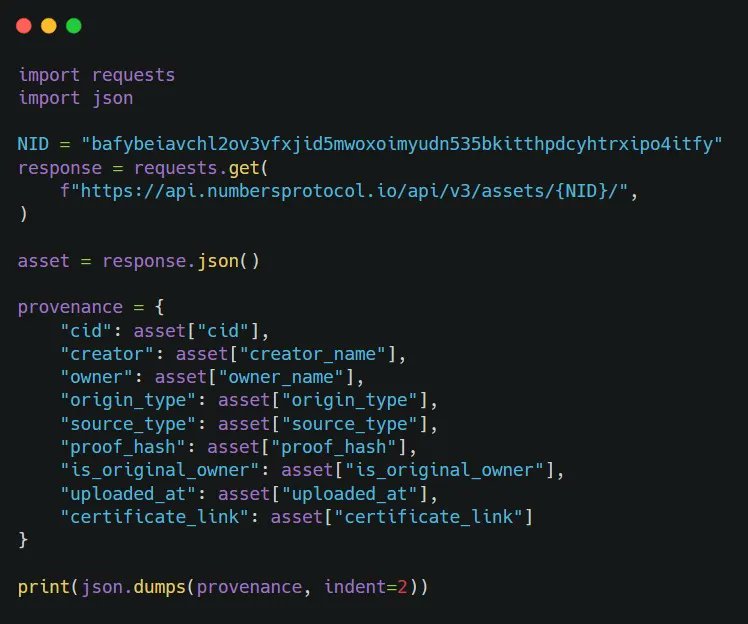

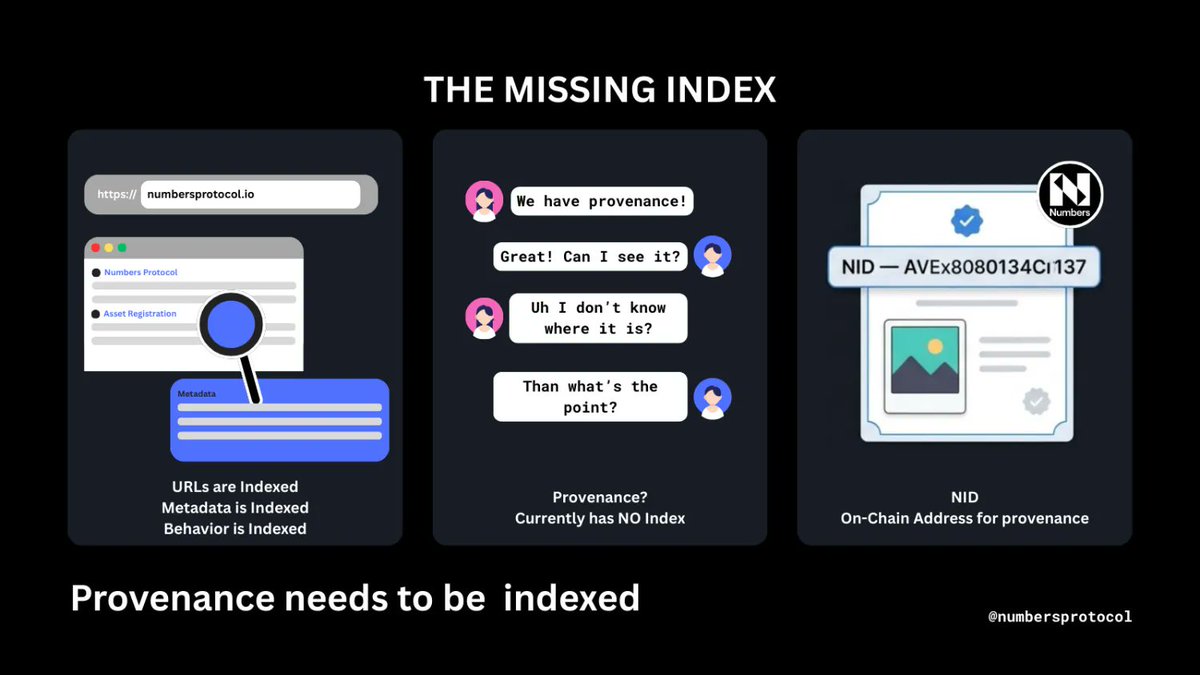

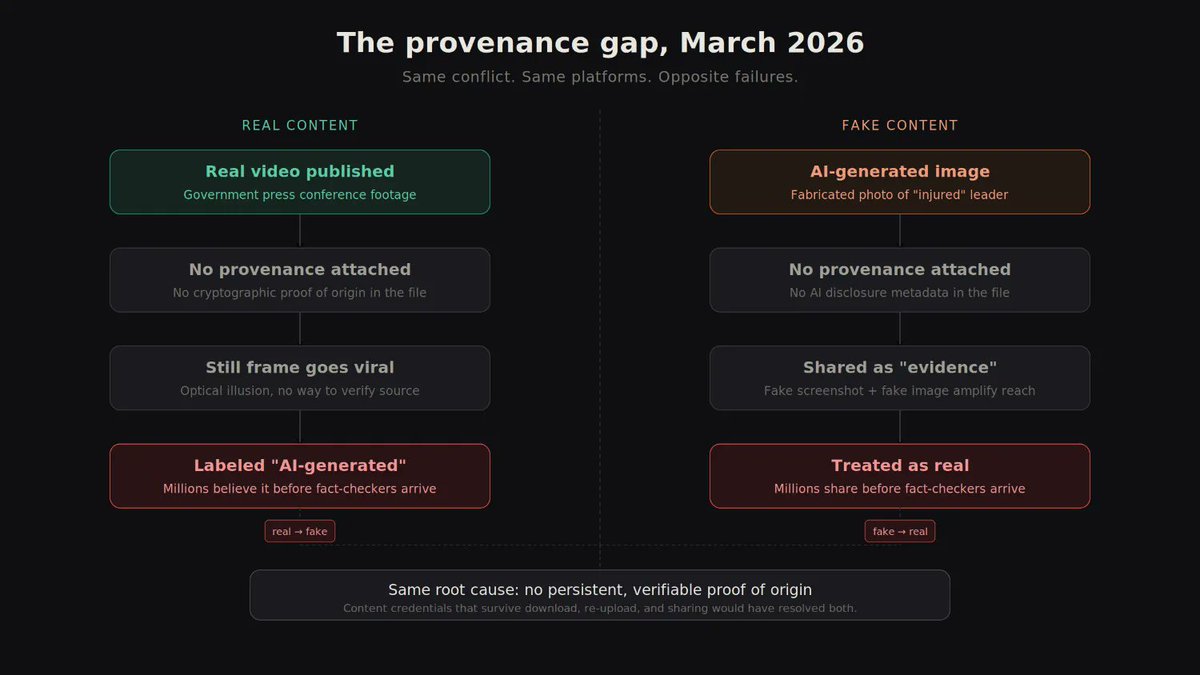

@meta’s Oversight Board highlights three critical weaknesses that are currently hindering the industry, and where ArAIstotle and @numbersprotocol have you covered. Cc: @finkd

“Meta’s Oversight Board have just published their decision on deceptive AI content circulating across Meta’s platforms during the Israel-Iran conflict last year. It serves as a stark reminder of the systemic gaps in how social media platforms currently handle this type of mis- and disinformation…

1) Failure to use provenance standards: platforms are missing opportunities to surface and verify content provenance, such as the C2PA standard. This makes it unnecessarily difficult to identify AI-generated media and apply accurate labels for users.

2) Inadequate detection tools: there is a distinct lack of high-quality detection technology capable of scanning multi-format content. As a result, harmful material often goes viral before it can be flagged or removed.

3) Absence of clear policy: current community guidelines lack specific requirements for AI self-disclosure, standardised labelling, or concrete penalties for those who fail to follow provenance protocols.

…When deceptive AI content goes viral during an ongoing conflict, it confuses civilians in active war zones, erodes trust in verified information, and can directly contribute to real-world violence.”

Adapted from Sam Stockwell’s post, link below ⤵️