Thanh @ B.ARMY Ventures

97 posts

@techcomthanh

Founder of https://t.co/aE3kNrNB8C, Blockchain Fund focusing on #Ai and #Depin. A Value Investor & 🏌 lover

@garrytan Gave this a spin last night. Super powerful.

Nobody is talking about @apple keeping the price of the 128GB MacBook Pro the same. There has been no price increase in response to surging memory prices.

Everyone is talking about the boost in compute, which speeds up prefill by 4x. That's cool, but in practice it's not that big of a deal. Why? Because on your own computer, most apps and tools using LLMs will get high KV cache hit rates, which means that as a user you only experience slow prefill once. The KV cache can be persisted to disk and loaded at 6GB/s. Most time in LLM inference is spent on decode, which is memory-bandwidth bound.

The compute boost is still great for image/video generation, high-batch LLM inference and fine-tuning, which are compute bound. We should see huge speedups there.

Apple's AI strategy is on-device LLMs, and here memory is the name of the game, not FLOPS. Expect the same for M5 Pro/Max Mac Mini and M5 Ultra Mac Studio. That means a 512GB M5 Ultra at $10k! @tim_cook is a supply chain genius.
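A rough back-of-envelope sketch of the argument above. It assumes that decoding one token reads every model weight from memory once, so memory bandwidth divided by model size gives a ceiling on tokens per second. The 500 GB/s bandwidth, 40 GB model and 3 GB KV cache are hypothetical illustration values, not measurements; only the 6GB/s disk rate comes from the post.

```python
def decode_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Rough upper bound on decode speed when memory bandwidth is the bottleneck.

    Assumes each generated token streams all model weights from memory once.
    """
    return bandwidth_gb_s / model_size_gb


def kv_cache_load_seconds(cache_size_gb: float, disk_gb_s: float = 6.0) -> float:
    """Time to reload a persisted KV cache from disk at the quoted 6GB/s."""
    return cache_size_gb / disk_gb_s


if __name__ == "__main__":
    # Hypothetical machine and model, chosen only to illustrate the arithmetic.
    bandwidth_gb_s = 500.0   # assumed memory bandwidth
    model_size_gb = 40.0     # assumed size of the quantized weights
    cache_size_gb = 3.0      # assumed size of a saved KV cache

    print(f"decode ceiling: {decode_tokens_per_second(bandwidth_gb_s, model_size_gb):.1f} tokens/s")
    print(f"KV cache reload: {kv_cache_load_seconds(cache_size_gb):.2f} s")
```

Under these assumptions, extra FLOPS does not raise the decode ceiling; only more bandwidth or a smaller model does, and a cache hit replaces a slow prefill with a sub-second disk read.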

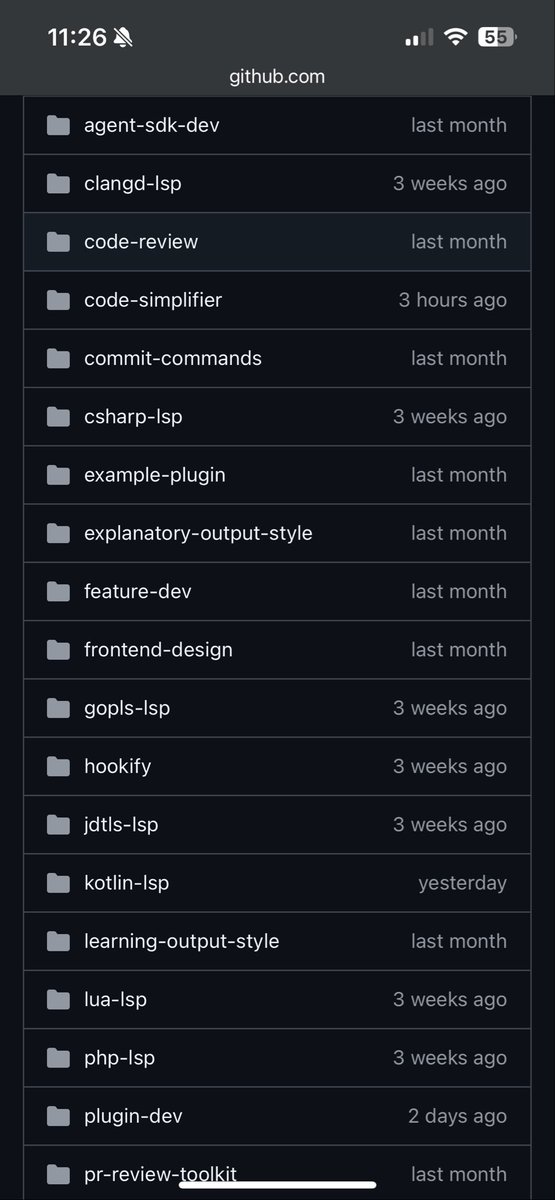

We just open sourced the code-simplifier agent we use on the Claude Code team.

Try it:
claude plugin install code-simplifier

Or from within a session:
/plugin marketplace update claude-plugins-official
/plugin install code-simplifier

Ask Claude to use the code simplifier agent at the end of a long coding session, or to clean up complex PRs. Let us know what you think!