Goodfire

@GoodfireAI

Using interpretability to understand, learn from, and design AI.

We rented out the Academy of Sciences next Tuesday for a series of talks on autonomous science with @Ginkgo, @GoodfireAI, and Monomer Bio! Come by! luma.com/rmuagyc0

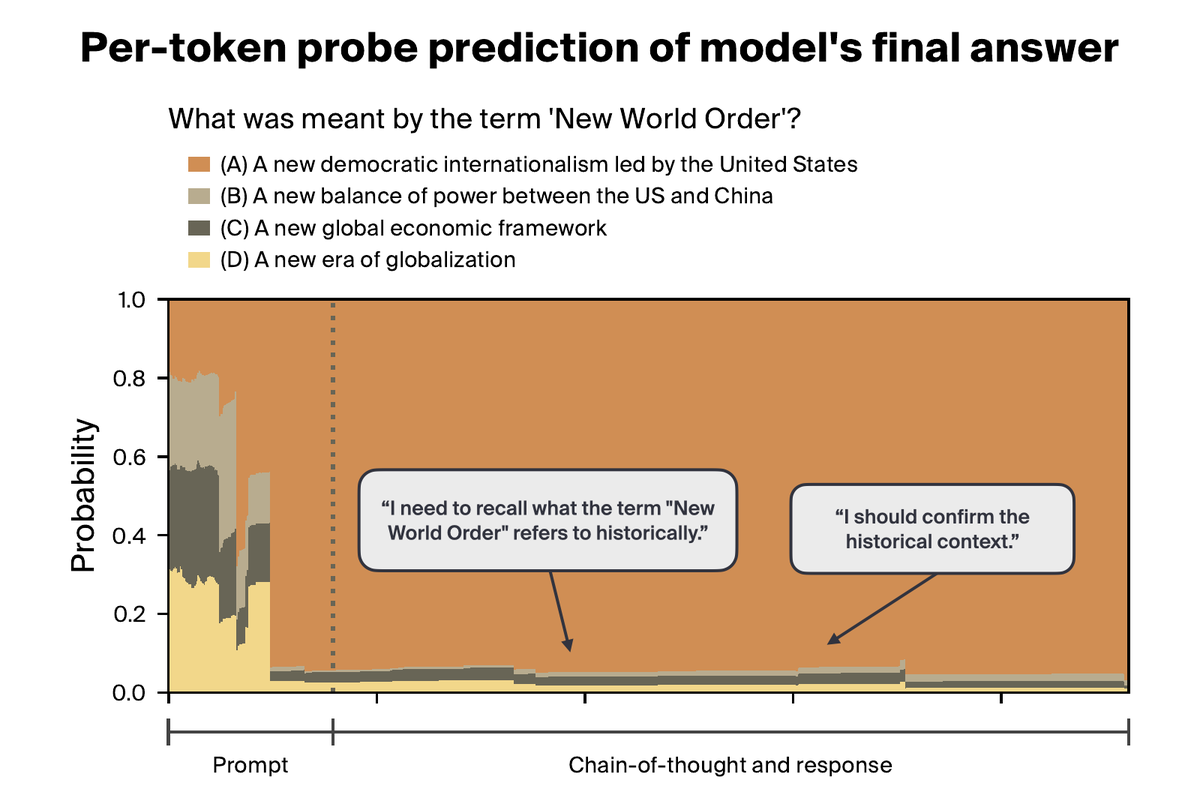

Interpretability methods usually study single-token behavior. But real model behaviors, like sycophancy or writing style, are diffuse across many tokens. Can these diffuse behaviors be localized and controlled from long-form responses? YES!

After 2 years probing #visionmodels at the #KempnerInstitute, @thomas_fel_ reflects on what he’s learned—and what pieces of the #interpretability puzzle remain hidden—as he heads to @GoodfireAI. Read the interview: bit.ly/4aEBzmp 🎙️🧩

Excited to launch a course on mechanistic interpretability at Stanford next quarter! So grateful to be part of an amazing teaching team: Atticus Geiger, Jing Huang, Junyi Tao, and Thomas Icard (who all share the admirable trait of not having a twitter account)

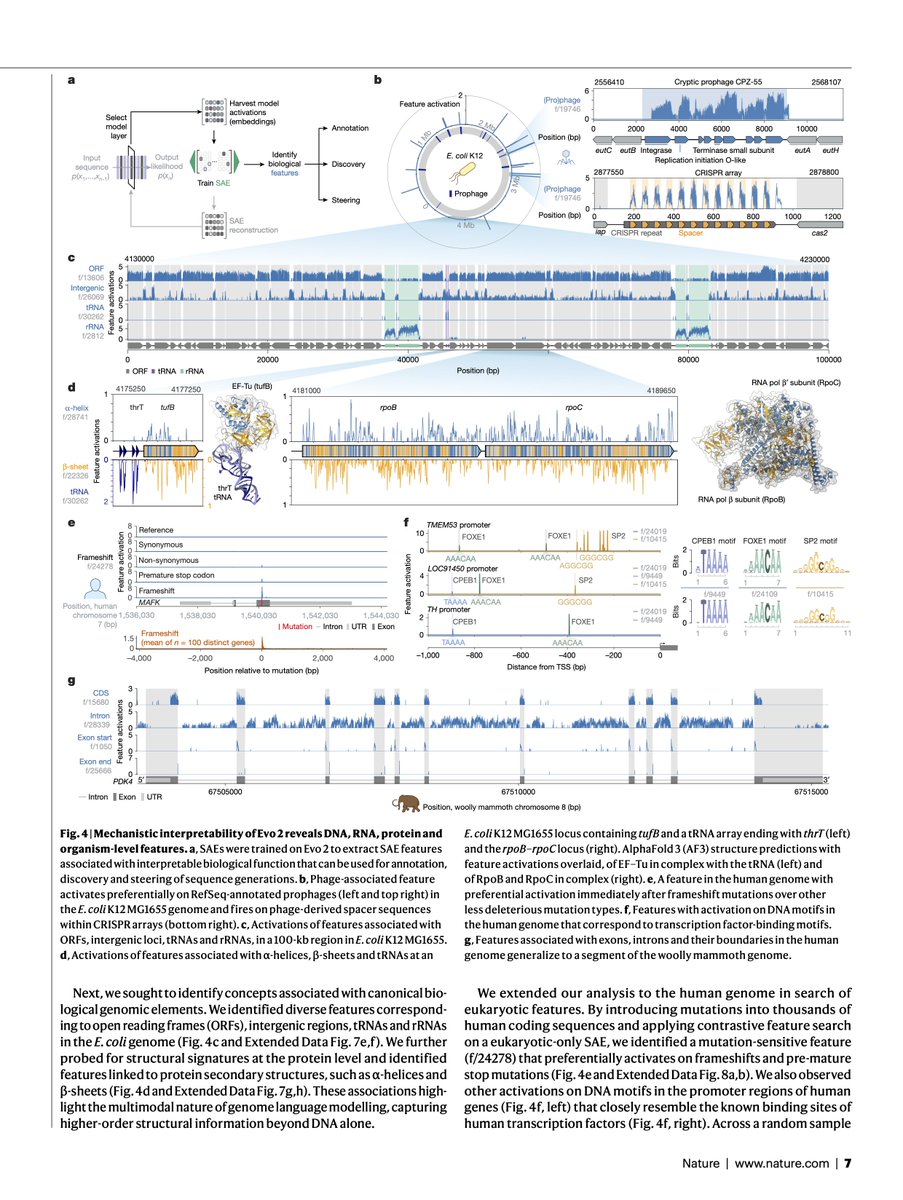

Evo 2, the largest fully open biological AI model to date, is now published in @Nature.

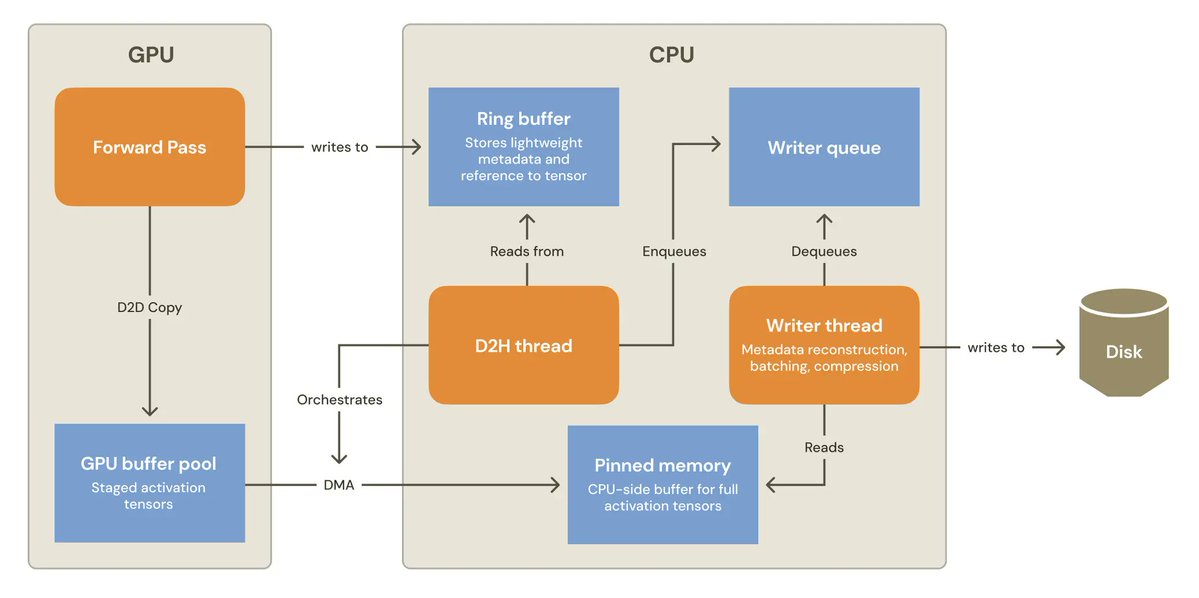

Kimi is just like me frfr. Steering @Kimi_Moonshot's K2 Thinking's reasoning in the Kimi CLI