StripSlashes

9.9K posts

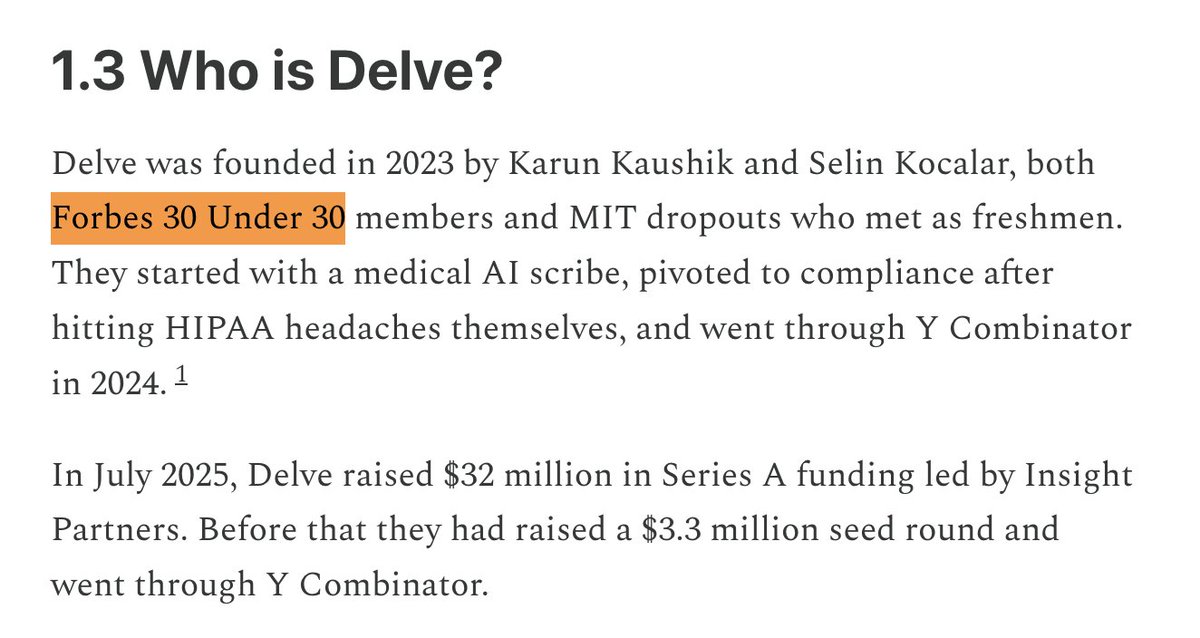

A detailed and brutal look at the tactics of buzzy AI compliance startup Delve "Delve built a machine designed to make clients complicit without their knowledge, to manufacture plausible deniability while producing exactly the opposite." substack.com/home/post/p-19…

Jensen Huang: "If that $500,000 engineer did not consume at least $250,000 worth of tokens, I am going to be deeply alarmed. This is no different than a chip designer who says 'I'm just going to use paper and pencil. I don't think I'm going to need any CAD tools.'"

all this build up to reveal the ugliest creature known to man

A detailed and brutal look at the tactics of buzzy AI compliance startup Delve "Delve built a machine designed to make clients complicit without their knowledge, to manufacture plausible deniability while producing exactly the opposite." substack.com/home/post/p-19…

Jensen Huang: "If that $500,000 engineer did not consume at least $250,000 worth of tokens, I am going to be deeply alarmed. This is no different than a chip designer who says 'I'm just going to use paper and pencil. I don't think I'm going to need any CAD tools.'"

OPENAI: GREG BROCKMAN TO TEMPORARILY OVERSEE PRODUCT REVAMP & DESKTOP APP CHANGES; FIDJI SIMO TO LEAD SALES FOR NEW PRODUCT

MiMo-V2-Pro & Omni & TTS is out. Our first full-stack model family built truly for the Agent era. I call this a quiet ambush — not because we planned it, but because the shift from Chat to Agent paradigm happened so fast, even we barely believed it. Somewhere in between was a process that was thrilling, painful, and fascinating all at once. The 1T base model started training months ago. The original goal was long-context reasoning efficiency. Hybrid Attention carries real innovation, without overreaching — and it turns out to be exactly the right foundation for the Agent era. 1M context window. MTP inference for ultra-low latency and cost. These architectural decisions weren't trendy. They were a structural advantage we built before we needed it. What changed everything was experiencing a complex agentic scaffold — what I'd call orchestrated Context — for the first time. I was shocked on day one. I tried to convince the team to use it. That didn't work. So I gave a hard mandate: anyone on MiMo Team with fewer than 100 conversations tomorrow can quit. It worked. Once the team's imagination was ignited by what agentic systems could do, that imagination converted directly into research velocity. People ask why we move so fast. I saw it firsthand building DeepSeek R1. My honest summary: — Backbone and Infra research has long cycles. You need strategic conviction a year before it pays off. — Posttrain agility is a different muscle: product intuition driving evaluation, iteration cycles compressed, paradigm shifts caught early. — And the constant: curiosity, sharp technical instinct, decisive execution, full commitment — and something that's easy to underestimate: a genuine love for the world you're building for. We will open-source — when the models are stable enough to deserve it. From Beijing, very late, not quite awake.