Basic SWE

66 posts

@basicswe

Software engineer • in the arena • 10+ years #FAANG • Views are my own

Yes! Flying mounts ruin games. Ground travel is so much more interesting than air travel. You have to navigate, you sometimes have to face danger. Air just skips everything and you fly in a straight line. When my friends and I were playing Ark I had to make a no-flying-mounts rule because the challenge of navigating the terrain and avoiding tough dinos is a huge part of the game and if you can just fly then you lose all that. I also loved that in the original Ark, the only map you got didn't show your position or direction. You had to navigate by landmarks and memory. Wish more people would make games with expansive worlds with no map and no fast travel. The game has to be designed for it, though -- like quests have to be designed so you aren't constantly going back and forth across the world, and landmarks have to be distinct enough for you to be able to navigate off of them.

This is Farzapedia. I had an LLM take 2,500 entries from my diary, Apple Notes, and some iMessage convos to create a personal Wikipedia for me. It made 400 detailed articles for my friends, my startups, research areas, and even my favorite animes and their impact on me, complete with backlinks. But this wiki was not built for me! I built it for my agent! The structure of the wiki files and how it's all backlinked is very easily crawlable by any agent and makes it a truly useful knowledge base. I can spin up Claude Code on the wiki and, starting at index.md (a catalog of all my articles), the agent does a really good job at drilling into the specific pages on my wiki it needs context on when I have a query. For example, when trying to cook up a new landing page I may ask: "I'm trying to design this landing page for a new idea I have. Please look into the images and films that inspired me recently and give me ideas for new copy and aesthetics". In my diary I kept track of everything: learnings, people, inspo, interesting links, images. So the agent reads my wiki and pulls up my "Philosophy" articles from notes on a Studio Ghibli documentary, "Competitor" articles with YC companies whose landing pages I screenshotted, and pics of 1970s Beatles merch I saved years ago. And it delivers a great answer. I built a similar system to this a year ago with RAG but it was ass. A knowledge base that lets an agent find what it needs via a file system it actually understands just works better. The most magical thing now is that as I add new things to my wiki (articles, images of inspo, meeting notes) the system will likely update 2-3 different articles where it feels that context belongs, or just create a new article. It's like this super genius librarian for your brain that's always filing stuff for you perfectly and also lets you easily query the knowledge for tasks useful to you (ex. design, product, writing, etc) and it never gets tired.
I might spend next week productizing this. If that's of interest to you, DM me + tell me your use case!
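A rough sketch of the "start at index.md and follow the links" idea, in Python. Everything here is an assumption for illustration, not the author's actual setup: the flat directory of `.md` files, the `[Title](page.md)` link format, and the names `crawl_wiki` and `backlinks` are all hypothetical.

```python
import re
from collections import deque
from pathlib import Path

# Assumed link format: standard markdown links to sibling .md files, e.g. [Title](page.md).
LINK_RE = re.compile(r"\[[^\]]*\]\(([^)#\s]+\.md)\)")


def crawl_wiki(root: Path, start: str = "index.md", max_pages: int = 50) -> dict[str, list[str]]:
    """Breadth-first walk from the index page; maps each visited page to its outgoing links."""
    graph: dict[str, list[str]] = {}
    queue = deque([start])
    while queue and len(graph) < max_pages:
        page = queue.popleft()
        path = root / page
        # Skip pages already visited and links that point at missing files.
        if page in graph or not path.is_file():
            continue
        links = LINK_RE.findall(path.read_text(encoding="utf-8"))
        graph[page] = links
        queue.extend(link for link in links if link not in graph)
    return graph


def backlinks(graph: dict[str, list[str]]) -> dict[str, list[str]]:
    """Invert the link graph: for each page, list the pages that link to it."""
    incoming: dict[str, set[str]] = {}
    for page, links in graph.items():
        for link in links:
            incoming.setdefault(link, set()).add(page)
    return {page: sorted(sources) for page, sources in incoming.items()}
```

Calling `crawl_wiki(Path("wiki"))` on a hypothetical `wiki/` folder gives the link graph an agent would effectively be walking, and `backlinks(...)` shows which articles point at a given page.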

Look at that mostly empty $3,800,000,000 light rail train! Now look at that $500,000 bus overtaking it and having more flexible options for riders. Oh well, it’s just taxpayer money. Doesn’t need to be spent responsibly.

Based on the leaked Claude Code source code, your CLAUDE.md file is re-injected on every single **turn** of the conversation.

My friend works with a company that is fully remote. Because of this, he is able to:
- Go to the barber at 9 AM on a Tuesday
- Go to the gym at 3:30 PM
- Start work earlier/later than normal to accommodate this schedule

He doesn't have to ask permission to schedule his life as needed. He doesn't need his team to tell him what they are doing every second of every day. He doesn't have to worry about whether or not his team can be trusted as adults. Hire right. Trust your team. Repeat. Work should add to your life, it shouldn't prevent you from living. Do you agree?

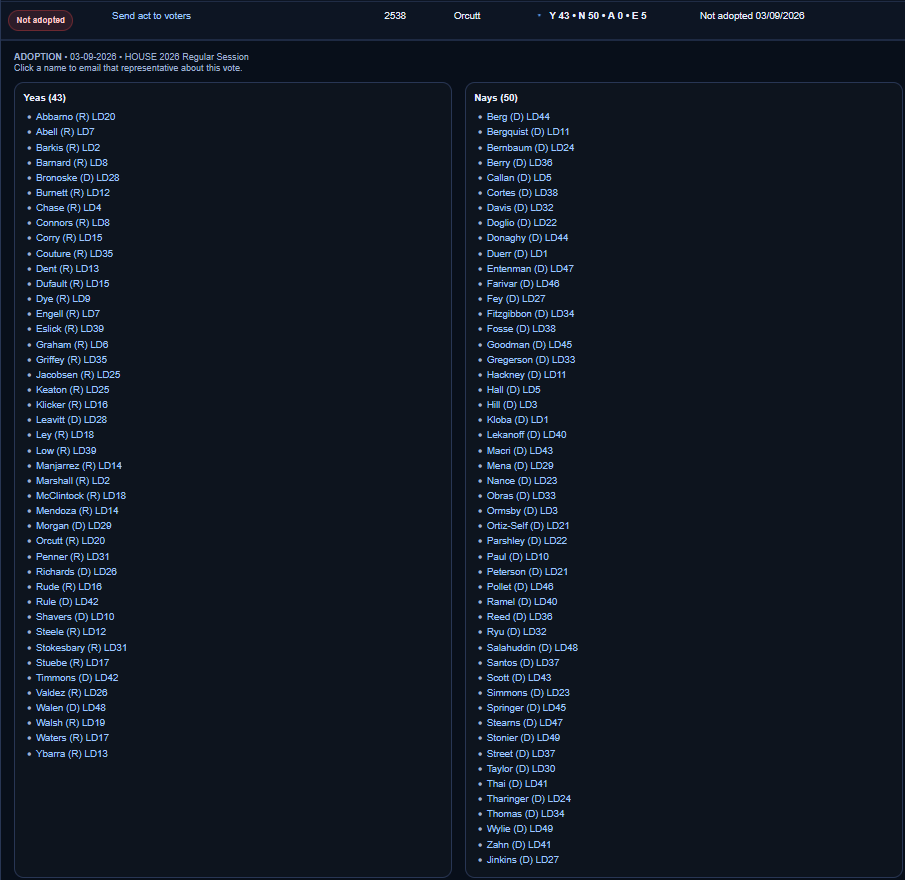

All the amendments offered on the floor. Only ~7 were adopted—and two of those were Republican ideas Democrats were willing to accept. Democrats refused to let voters decide on this massive tax shift. They refused to lock in ironclad protections keeping it to just millionaires permanently. And they shot down most Republican proposals for real relief to working families—like tax breaks on school supplies, diapers, and basic sanitation necessities. Don't gaslight us. We see it for what it is: a new income tax dressed up as fairness, with the revenue going wherever Democrats want it to go. Republicans brought forth solid amendments and valiantly fought for them for 24 hours on the House floor. Your party steamrolled the bill and called it good.

Added a message queue. Removed it 8 months later.

The addition:
- "We need to decouple these services"
- Added RabbitMQ between API and processor
- Async all the things
- Felt very architectural

The reality:
- 99% of messages processed in under 1 second
- Queue depth never exceeded 10
- Added failure mode: queue itself
- Added complexity: retry logic, dead letters
- Added latency: async overhead

The problems:
- Debugging required checking two systems
- Message ordering issues emerged
- New engineers confused by the indirection
- Sync would've been simpler and sufficient

The removal:
- Direct HTTP calls between services
- Circuit breaker for resilience
- Timeout for latency control
- Retry at caller level

Result: Simpler, faster, easier to debug. Queues solve real problems. But "decoupling" isn't automatically a problem. Add infrastructure for reasons, not resume.
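For a sense of what the "removal" side could look like, here is a minimal Python sketch of a direct HTTP call with a timeout, a small circuit breaker, and retry at the caller. It is not the poster's code; the class and function names, thresholds, and backoff values are all assumptions picked for illustration.

```python
import time
import urllib.request


class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and calls are being rejected."""


class CircuitBreaker:
    """Opens after max_failures consecutive failures; allows a trial call after reset_after seconds."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; skipping call")
            # Cool-down elapsed: let one trial call through (half-open).
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0
        return result


def with_retry(func, attempts=3, backoff=0.1, sleep=time.sleep):
    """Retry at the caller with exponential backoff; never retry while the circuit is open."""
    for attempt in range(attempts):
        try:
            return func()
        except CircuitOpenError:
            raise
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(backoff * 2 ** attempt)


def fetch(url, timeout=2.0):
    """Direct synchronous HTTP call; the timeout bounds how long the caller waits."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read()
```

Usage would be something like `with_retry(lambda: breaker.call(fetch, url))` with one shared `breaker = CircuitBreaker()` per downstream service, where `url` is whatever endpoint the processor exposes.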

If your program is too exciting, you've lost everybody. You want to keep it dull and robust. That's engineering. hanselminutes.com/1024

We’re starting to get a clearer sign of how vast the surface area of context engineering is going to be. To build AI agents, in theory, it should be as simple as having a super powerful model, giving it a set of tools, having a really good system prompt, and giving it access to data. Maybe at some point it really will be this simple. But in practice, to make agents that work today, you’re dealing with a delicate balance of what to give to the global agent vs. a subagent. What things to make agentic vs. just a deterministic tool call. How to handle the inherent limitations of the context window. You have to figure out how to retrieve the right data for the user’s task, and how much compute to throw at the problem. How to decide what to make fast, and suffer potential quality drops, vs. slow but maybe annoying. And endless other questions. So far there’s no one right answer for any of this, and there are meaningful tradeoffs for any given approach you take. And importantly, getting this right requires a deep understanding of the domain you’re solving the problem for. Handling this problem in AI coding is different from law, which is different from healthcare. This is why there’s so much opportunity for AI agent plays right now.

they won't tell you this in SRE school but the easiest way to fix a memory leak is to restart your pods on a schedule
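If you actually wanted to do this, one way is to have cron (or a Kubernetes CronJob) run a tiny script that triggers a rolling restart. A sketch in Python, assuming `kubectl` is on the PATH and already pointed at the right cluster; the function names and the `api` / `prod` values are made up for the example.

```python
import subprocess


def rollout_restart_cmd(deployment: str, namespace: str = "default") -> list[str]:
    """Build the kubectl command that triggers a rolling restart of a deployment."""
    return ["kubectl", "rollout", "restart", f"deployment/{deployment}", "--namespace", namespace]


def restart(deployment: str, namespace: str = "default", dry_run: bool = True) -> str:
    """Return the command line; only execute it when dry_run is False."""
    cmd = rollout_restart_cmd(deployment, namespace)
    if not dry_run:
        # check=True raises CalledProcessError if kubectl exits non-zero.
        subprocess.run(cmd, check=True)
    return " ".join(cmd)


if __name__ == "__main__":
    # Hypothetical deployment and namespace; schedule this script externally.
    print(restart("api", "prod"))
```

The default is a dry run, so running the script as-is only prints the command it would execute.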