Db


This quote has me thinking that great writing isn't just great prosecraft, but the fusion of powerful ideas with the written word. Lesser ideas would make for lesser writing, no matter the quality of the craft on display.

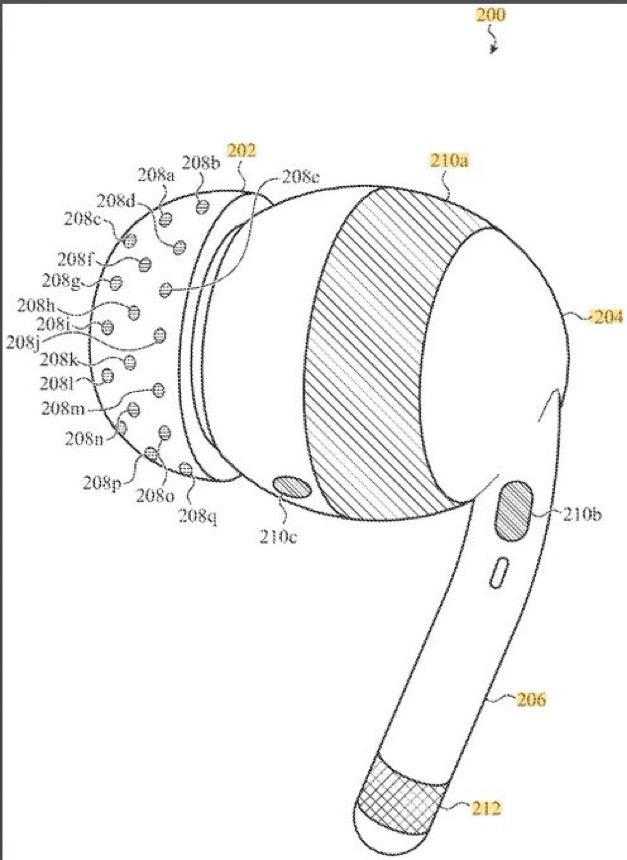

Neural nets don’t just forget. Sometimes, after long training, they lose the ability to learn at all. In our #ICLR2026 poster, we model Loss of Plasticity as gradient dynamics trapped in invariant manifolds: 🔴 frozen units, 🔵 cloned units. The video makes the traps visible.
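A rough way to see why frozen and cloned units can act as traps, sketched in plain Python. This is not the poster's model: the tiny one-hidden-layer ReLU network, the data, the learning rate, and every name here (`forward`, `gd_step`, `run_demo`) are assumptions made up for illustration. Units 0 and 1 start as exact clones, and unit 2 starts with a pre-activation that is negative on every input.

```python
import random

def forward(W, b, v, x):
    """One-hidden-layer ReLU net: y = sum_j v[j] * relu(W[j] . x + b[j])."""
    pre = [sum(w * xi for w, xi in zip(W[j], x)) + b[j] for j in range(len(W))]
    hid = [z if z > 0.0 else 0.0 for z in pre]
    return pre, hid, sum(vj * hj for vj, hj in zip(v, hid))

def gd_step(W, b, v, data, lr=0.05):
    """One full-batch gradient-descent step on mean squared error (in place)."""
    n_hidden, n_in = len(W), len(W[0])
    gW = [[0.0] * n_in for _ in range(n_hidden)]
    gb = [0.0] * n_hidden
    gv = [0.0] * n_hidden
    for x, t in data:
        pre, hid, y = forward(W, b, v, x)
        err = 2.0 * (y - t) / len(data)
        for j in range(n_hidden):
            gv[j] += err * hid[j]
            if pre[j] > 0.0:  # ReLU passes no gradient when the unit is inactive
                gb[j] += err * v[j]
                for i in range(n_in):
                    gW[j][i] += err * v[j] * x[i]
    # Apply the update only after all gradients are accumulated.
    for j in range(n_hidden):
        v[j] -= lr * gv[j]
        b[j] -= lr * gb[j]
        for i in range(n_in):
            W[j][i] -= lr * gW[j][i]

def run_demo(steps=200, seed=0):
    rng = random.Random(seed)
    # Inputs in [-1, 1]^2 with an arbitrary linear target (illustrative only).
    data = []
    for _ in range(32):
        x = [rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)]
        data.append((x, x[0] - 0.5 * x[1]))
    W = [[0.5, -0.3], [0.5, -0.3], [-1.0, -1.0]]
    b = [0.1, 0.1, -5.0]   # unit 2: pre-activation <= -3 on all inputs
    v = [0.7, 0.7, 0.4]    # units 0 and 1 are exact clones
    frozen_init = (list(W[2]), b[2], v[2])
    for _ in range(steps):
        gd_step(W, b, v, data)
    return W, b, v, frozen_init

if __name__ == "__main__":
    W, b, v, frozen_init = run_demo()
    print("clones still identical:", W[0] == W[1] and b[0] == b[1] and v[0] == v[1])
    print("frozen unit unchanged:", (W[2], b[2], v[2]) == frozen_init)
```

In this toy setup the clones receive identical gradients at every step, so plain gradient descent never separates them, and the inactive unit receives zero gradient, so it never moves. Noise, dropout, or a different optimizer could break the clone symmetry; the sketch only covers deterministic full-batch gradient descent.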

First silicon just arrived. These dies are from the first wafer of my GF180MCU-based Linux SoC KianV, built with a fully open-source ASIC flow. This chip was part of the wafer.space GF180MCU run, and hardware validation comes next. Big thanks to Leo Moser for help with the ASIC flow and to @mithro and @evezor for their guidance along the way. The picture shows the first dies. More bring-up updates soon.

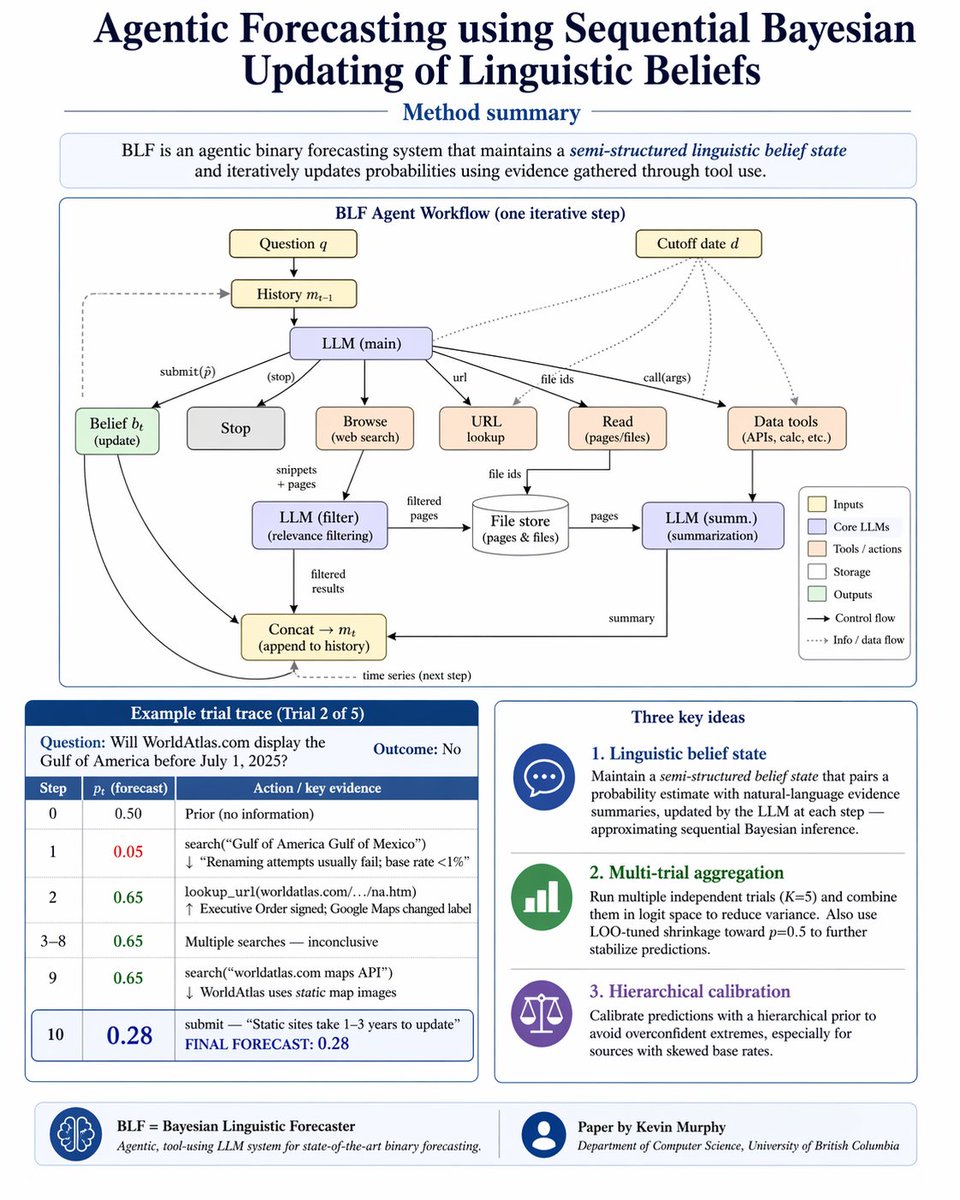

@rsalakhu @subail @chuckjhoover @asenkut @FHaskaraman Congrats! BTW you might find my recent paper of interest... arxiv.org/abs/2604.18576