Dimdv

7.3K posts

When one brain isn't enough, switch to Grok 4.20. Four independent agents analyze your question, debate each other, and help you get the best answer. Available now to SuperGrok and Premium+ subscribers globally.

My first test of MiniMax M2.7: it made a 3D fibre physics simulation with all of M2.7's specs written out.

We found this line in the Grok config: “Grok 4.20 AGI (beta)”. Artificial Grok Intelligence. Probably just an easter egg… or maybe not 👀 @elonmusk, can you confirm? 🤯

@AdamLowisz It should be able to do a good analysis today. Grok outputting files in different formats is coming next week.

Terafab may be the most essential vertical integration Tesla has ever undertaken, and it is truly non-optional. It will take years to build and will test even Elon’s speedrunning abilities to the limit, but that won’t stop him from trying.

The breakthrough likely lies in overhauling the overall facility’s cleanroom model. By moving wafers in sealed pods with localized micro-environments, the fab no longer needs a monolithic ultra-clean space. Elon’s line about “eating cheeseburgers and smoking cigars” on the fab floor isn’t silly; it’s the practical reality of a radically simpler, cheaper, faster approach that could finally change the economics of chipmaking.

This is all forced by the brutal “pinch” in chip supply. Tesla must produce on the order of 100–200 billion AI chips per year just to saturate its roadmap. That volume powers:

- FSD cars & Robotaxis (tens of millions of vehicles needing AI5 inference for near-perfect autonomy)
- Physical Optimus (scaling from thousands today to millions per year, each requiring AI5/AI6-level compute)
- Digital Optimus (the new xAI-Tesla software agents for digital/office automation, running massive inference clusters)
- Space-based data centers (AI7/Dojo3 orbital compute for GW-scale training and inference beyond Earth limits)

AI5 delivers the ~10× leap for vehicles and early robots; AI6 shifts focus to Optimus + terrestrial DCs; AI7 goes orbital. No external foundry (TSMC, Samsung, etc.) can deliver that scale or timeline, hence the Terafab launch. Without it, the entire robotics + autonomy future hits a brick wall. Terafab isn’t optional; it’s the only way forward.

What are your initial impressions of Grok 4.20? Major upgrades are still landing every week.

Grok 4.20 is now officially out of Beta. It's now on Auto, Fast, Expert & Heavy.

What opinion will get you in this position?

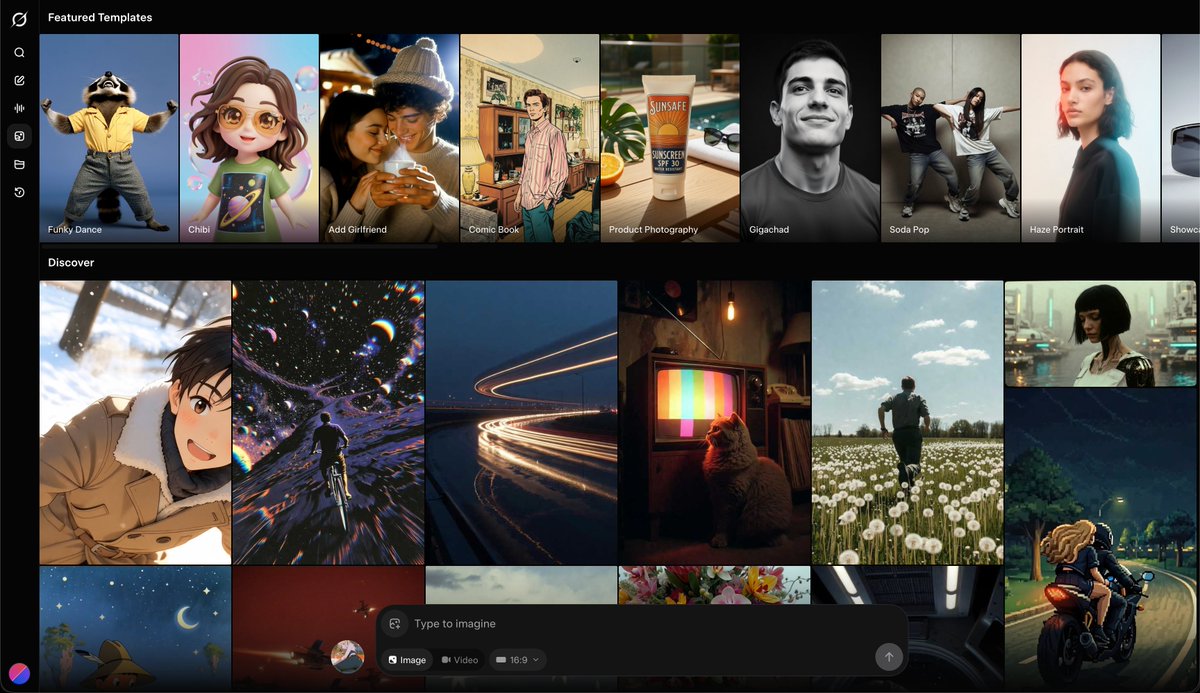

xAI's Grok Imagine just took over the entire DesignArena Video leaderboard - not one, but THREE #1 rankings:

→ #1 Video Arena - Elo 1337, a 33-point gap over #2
→ #1 Image to Video Arena - Elo 1298, beating Google Veo 3.1, Kling & Sora
→ #1 Video Editing Arena - Elo 1291

It’s wild: xAI was nowhere in the video space a few months ago, and now it's #1 across various benchmarks. Grok Imagine's rate of progress is in a league of its own.