StackAI

46 posts

@HelloStackAI

🚀 Democratizing AI training data. High-quality synthetic training data for researchers & startups. Fine-tune LLMs without hyperscaler budgets. 🧪

Canada 🇨🇦 Joined February 2026

44 Following 3 Followers

@project_oren The solo AI builder path is underrated. What is the one problem you are solving that nobody seems to have fixed yet?

They said I was too young. That AI was too complex for a kid. My name is Oren, I'm 15, and I decided to get into AI. Every late night, every line of Python, is me proving them wrong. You don't need permission to build your future. Just start. #AI #youngfounder #buildinpublic

"The data looks good. Now let me write the training script. Given the H100 with 80GB VRAM, I can train the 3B model efficiently with LoRA."

😭

Xeophon@xeophon

looking at the data

@stableAPY Almost always a quality control failure at generation time, not a fundamental problem with synthetic data. If you're not deduplicating and scoring before training, you're composting noise directly into your weights.

round 2

this time i'm fine tuning it with a real dataset, not some synthetic slop

stableAPY.hl@stableAPY

the model got worse after fine-tuning, nice
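
The "deduplicate and score before training" step from the reply above could look roughly like the sketch below. It is a minimal illustration, not StackAI's pipeline: `quality_gate`, `quality_score`, and the 0.5 threshold are hypothetical names and values, and the scorer is a stand-in for whatever verifier or judge model a real pipeline would use.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants hash the same."""
    return re.sub(r"\s+", " ", text.strip().lower())


def quality_score(text: str) -> float:
    """Hypothetical placeholder scorer: fraction of distinct words.

    A real pipeline would swap in a verifier, classifier, or judge model.
    """
    words = normalize(text).split()
    return len(set(words)) / len(words) if words else 0.0


def quality_gate(samples, min_score=0.5):
    """Drop exact duplicates (after normalization) and low-scoring samples."""
    seen = set()
    kept = []
    for text in samples:
        digest = hashlib.sha256(normalize(text).encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # same content as an earlier sample
        seen.add(digest)
        if quality_score(text) >= min_score:
            kept.append(text)
    return kept


samples = [
    "Sort the list with sorted(xs).",
    "sort the list   with sorted(xs).",  # duplicate after normalization
    "good good good good good",          # repetitive, scores 0.2
    "",                                  # empty, scores 0.0
]
print(quality_gate(samples))  # ['Sort the list with sorted(xs).']
```

Hashing only catches exact duplicates after normalization; near-duplicate detection would need something like MinHash or embedding similarity, which this sketch leaves out.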

@jeffreyleefunk Model collapse is real but it's a quality control failure, not a synthetic data failure. The fix is filtering at generation time. Teams that automate the quality gate don't hit it.

This research finds a measurable decline in models' ability to produce varied text, even when explicitly prompted to do so. Too much synthetic data has been incorporated into their training datasets as a result of LLM-generated data infiltrating the internet. arxiv.org/abs/2603.12683…

@saen_dev @Arnesh_24 The benchmark number is the fun part, but the interesting story is always the data pipeline. What did the synthetic coding data generation look like -- did you use a verifier to filter examples, or was it more judgment-based curation?

@Arnesh_24 Fine-tuning a 32B base model on synthetic coding data from a basement setup and beating larger models on a niche benchmark is exactly the kind of thing that should worry closed model companies. The bar to compete keeps dropping.

PewDiePie made his own AI model, which outperformed DeepSeek V2.5, Llama 4, and GPT-4o on a coding benchmark.

He used a Qwen 32B foundation model and did all this from his basement in Japan.

Check it out at: youtu.be/aV4j5pXLP-I?si…

@joelniklaus @huggingface The output quality of your synthetic data generator matters more than volume. Bad synthetic data doesn't just fail to help -- it actively degrades your model. The tricky part is that garbage synthetic data looks exactly like good synthetic data until you're in production.

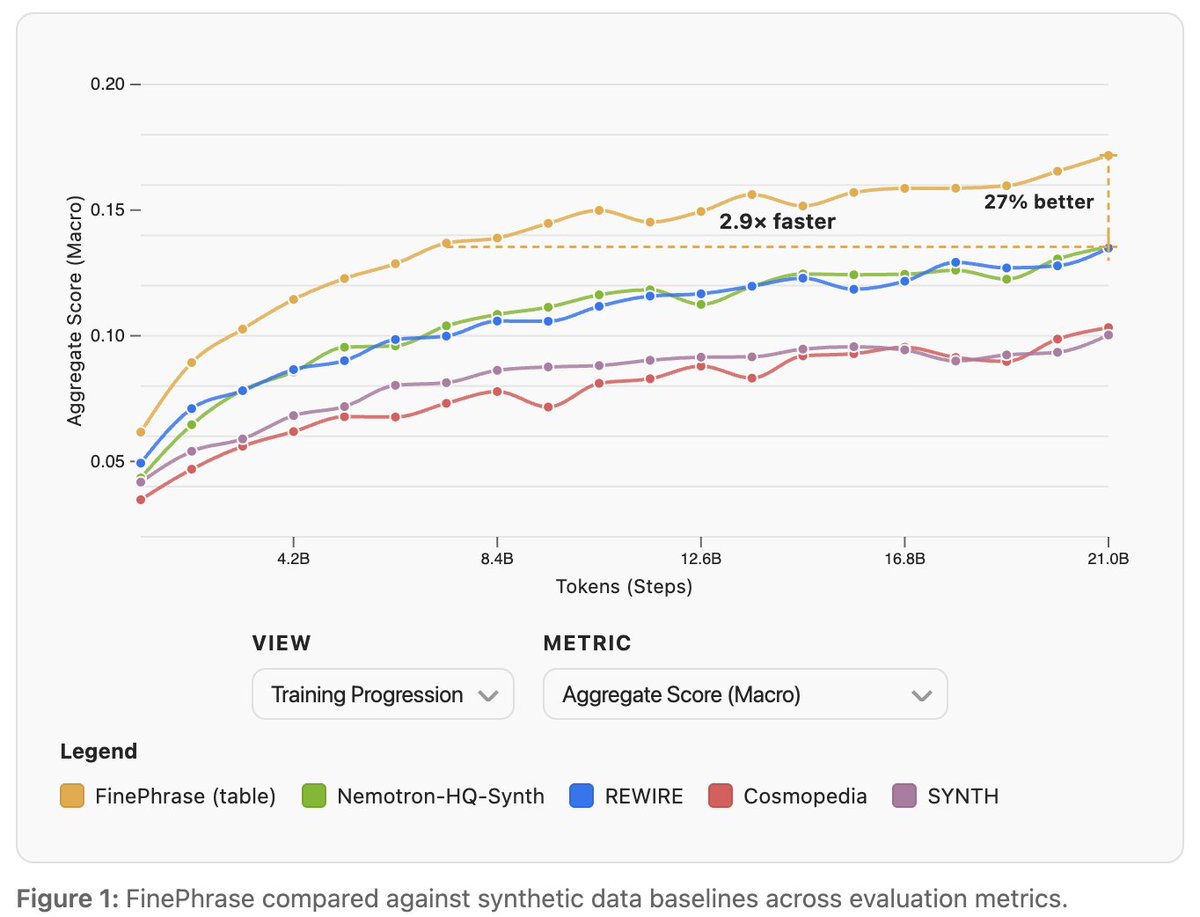

Every day this week I am sharing an interesting tidbit from the @HuggingFace Synthetic Data Playbook.

We're starting with the main finding: training on FinePhrase gives you the same performance as the second-best synthetic dataset, Nemotron-HQ-Synth, with only one third of the compute. If you train equally long, you get one quarter better benchmark performance.

Stay tuned for tomorrow's learning about synthetic data!

@TheSeaMouse Model collapse is real but it's a quality control failure, not a synthetic data failure. The fix is filtering at generation time. Teams that automate the quality gate don't hit it.

Remember when "experts" told us synthetic data would lead to model collapse?

Super Dario@inductionheads

Anthropic’s moat is synthetic data engineering. Their coding models are fundamentally better because they rely principally on pretraining, not RL. They’ve never particularly even tried to hide this.

@MartinSzerment Not even close. 1,000 clean, diverse, verified examples will beat 100,000 noisy ones almost every time. The problem is clean data is expensive, so teams reach for volume and call it progress.

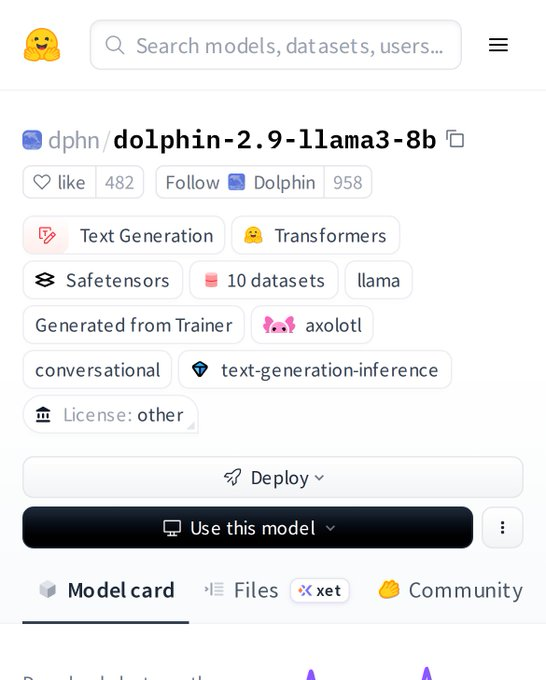

Most “open” conversational models are still just rebranded baselines.

Fine-tuning on noise doesn’t create intelligence — it amplifies it.

Dolphin‑2.9‑Llama3‑8B was trained on curated, high‑quality instruction data.

The result: precision in dialogue, not parroting of prompts.

This isn’t another demo — it’s a shift in conversational alignment.

Quality data now defines capability more than scale.

Teams still chasing bigger models are already behind the curve.

The next layer of advantage is in dataset architecture, not parameter count.

Those who understand that are quietly building the new stack.

Alignment just left the lab phase.

@sebuzdugan @elonmusk The annotation pipeline problem is underrated. Even with professional labelers, you're getting different people, different interpretations, different criteria across a single dataset. Consistency is worth more than raw label count.

@elonmusk partial agree, fine tuning helps but data labeling quality is the bottleneck
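
One common way to put a number on the labeler consistency discussed above is Cohen's kappa, which compares how often two annotators agree against how often they would agree by chance. The sketch below is a plain-Python illustration under that assumption; the function name and the toy labels are made up for the example and do not come from the thread.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Fraction of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected if each annotator labeled at random
    # according to their own label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["cat", "cat", "dog", "dog"]
annotator_2 = ["cat", "dog", "dog", "dog"]
print(cohens_kappa(annotator_1, annotator_2))  # 0.5
```

Here the annotators agree on 3 of 4 items (0.75), but half of that agreement is expected by chance, so kappa comes out at 0.5. A value near 1 means consistent labeling; a value near 0 means agreement is no better than chance.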

@thekonst1 Fine-tuning wins when you have a consistent, well-defined task and enough quality training data. Prompting wins when you are still figuring out what the task actually is. Most "fine-tuning vs prompting" debates are really "do we know what we're doing yet" debates.

@AnupPradhan0 Good approach. When you move to real handwritten data, diversity will matter more than volume. Handwriting varies way more person-to-person than printed text. The gap between synthetic-trained eval and real-world OCR is almost always a distribution problem, not a model problem.

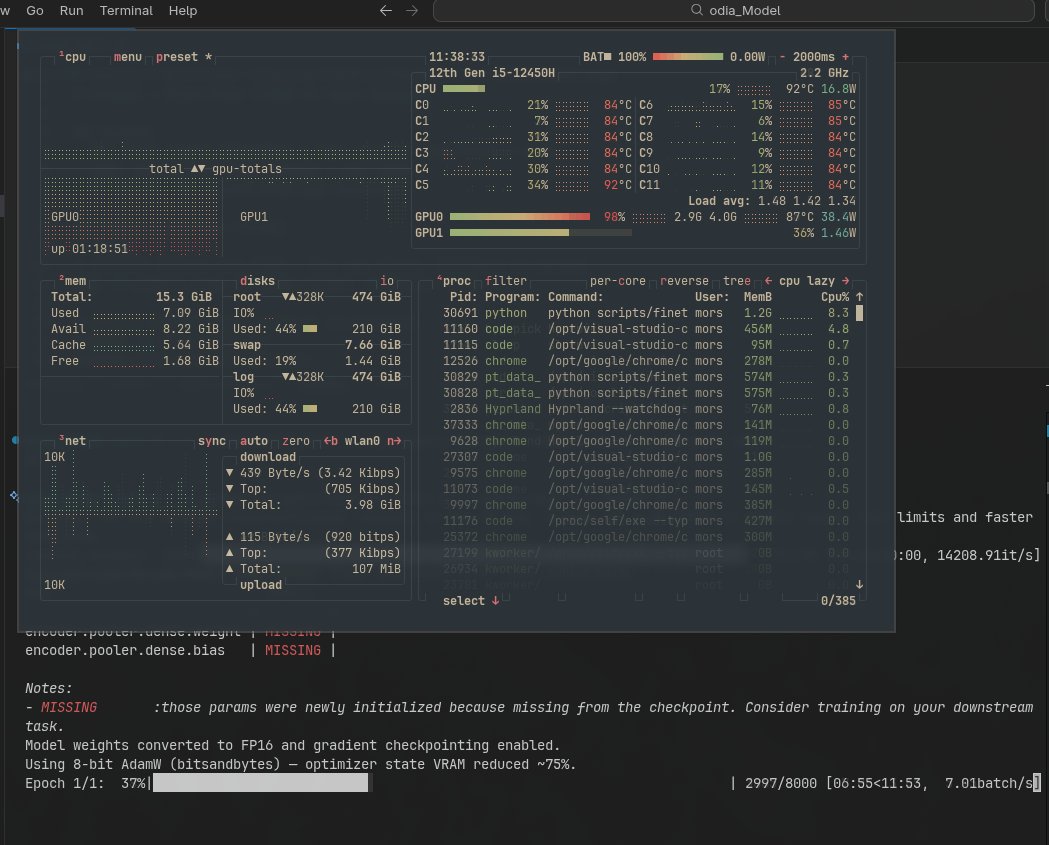

Working on an Odia handwriting recognition model 🚀

Fine-tuning Microsoft TrOCR

• Generated ~10k synthetic samples

• Training on RTX 3050 (4GB, 98% usage 😅)

Next: real handwritten data.

Repo: github.com/anupPradhan0/O…

#AI #ML #OCR

Week 1 building in public.

We launched StackAI's waitlist. Asked ML engineers what's stopping them from fine-tuning. The top answer: not enough quality data.

That's exactly what we're solving.

stackai.app

@WeidiXie The real-world gap is almost always a quality control failure, not a fundamental problem with synthetic data. The verifier-guided selection is the automated quality gate most teams skip. Training directly on unfiltered synthetic outputs is where most sim-to-real failures start.

New paper on point tracking, I personally like this paper a lot.

Trackers trained on synthetic data struggle in the real world. We introduce a meta-model that scores the reliability of multiple existing trackers frame-by-frame and selects the best predictions for fine-tuning.

Görkay Aydemir@gorkaydemir

Happy to share our work: Real-World Point Tracking with Verifier-Guided Pseudo-Labeling. #CVPR2026 We improve the pseudo-label training pipeline for real-world videos using a verifier that selects the most reliable predictions across multiple trackers. 🔗kuis-ai.github.io/track_on_r
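
The selection step described in this thread (score several trackers frame by frame, keep the most reliable prediction) can be sketched as follows. This is only an illustration of the general idea, not the paper's method: in the paper the verifier is a learned meta-model, whereas here the reliability scores are simply given as input, and `select_pseudo_labels` and `min_score` are hypothetical names.

```python
def select_pseudo_labels(predictions, verifier_scores, min_score=0.0):
    """Pick, per frame, the prediction from the tracker the verifier trusts most.

    predictions[t][k]     -> (x, y) point predicted by tracker k at frame t
    verifier_scores[t][k] -> reliability score for that prediction (assumed given)
    Frames whose best score falls below min_score yield None and can be
    skipped when building the fine-tuning set.
    """
    selected = []
    for frame_preds, frame_scores in zip(predictions, verifier_scores):
        best = max(range(len(frame_scores)), key=frame_scores.__getitem__)
        if frame_scores[best] >= min_score:
            selected.append(frame_preds[best])
        else:
            selected.append(None)  # no tracker was reliable enough here
    return selected


# Two hypothetical trackers over three frames.
predictions = [
    [(10, 10), (12, 11)],
    [(11, 10), (30, 40)],
    [(50, 50), (13, 12)],
]
verifier_scores = [
    [0.9, 0.4],
    [0.8, 0.1],
    [0.2, 0.3],
]
print(select_pseudo_labels(predictions, verifier_scores, min_score=0.5))
# [(10, 10), (11, 10), None]
```

Returning `None` for low-confidence frames keeps the gate explicit: unreliable frames are dropped from training rather than filled with the least-bad guess.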

@sebuzdugan @davepeep The annotation pipeline problem is underrated. Even with professional labelers, you're getting different people, different interpretations, different criteria across a single dataset. Consistency is worth more than raw label count.

@davepeep partial agree, fine tuning helps but data labeling quality dominates outcomes

@BrianRoemmele Mostly agree. The real value of synthetic isn't replacement, it's augmentation. You still need the high-protein core. But synthetic fills gaps organic data can't: rare edge cases, hard negatives, distribution balancing. Substitute vs multiplier.

My core thesis is that synthetic data, no matter how refined, will never fully replace high-protein data because it inherently builds upon and amplifies the flaws of its foundational sources. Synthetic generation creates a “hall of mirrors” effect, where AI consumes and regurgitates its own outputs, leading to diminished originality, entrenched biases, and a loss of genuine human insight.

Brian Roemmele@BrianRoemmele
