Pinned Tweet

Saeed Anwar

24.9K posts

@saen_dev

Automating the boring stuff and sharing the insights. Building: LumaSleep, Space Ai

UAE, Dubai · Joined October 2023

241 Following · 1.4K Followers

@Mockapapella Saturation doesn't happen uniformly. Greenfield CRUD apps are already mostly AI-generated. What stays human longest is the work that requires understanding a decade of technical debt, weird business logic, and why certain decisions were never supposed to make sense.

@AngularUniv Connecting your AI agent to the actual framework version you're running eliminates a whole class of hallucination that's hard to catch in review. Had a project where the agent kept suggesting Angular 14 patterns on a v18 codebase until we added framework context.

🚀 𝗔𝗻𝗴𝘂𝗹𝗮𝗿 𝗱𝗲𝘃𝘀: connecting Claude Code to the Angular MCP Server massively improves AI-generated Angular code and cuts outdated patterns + hallucinations. 🔥

It gives AI agents direct access to:

✅ Your Angular version

✅ Latest Angular APIs

✅ Official Angular best practices

✅ Signals & zoneless patterns

✅ Up-to-date Angular examples

This dramatically reduces the generation of outdated Angular code, such as:

❌ ngModel-era patterns

❌ pre-signals architecture

❌ obsolete APIs

Think of it as a bridge between Claude Code and the official Angular knowledge base for your specific Angular version.

The result:

Much better Angular code with far fewer hallucinations. 🔥

#Angular #AI #ClaudeCode #WebDevelopment #Programming

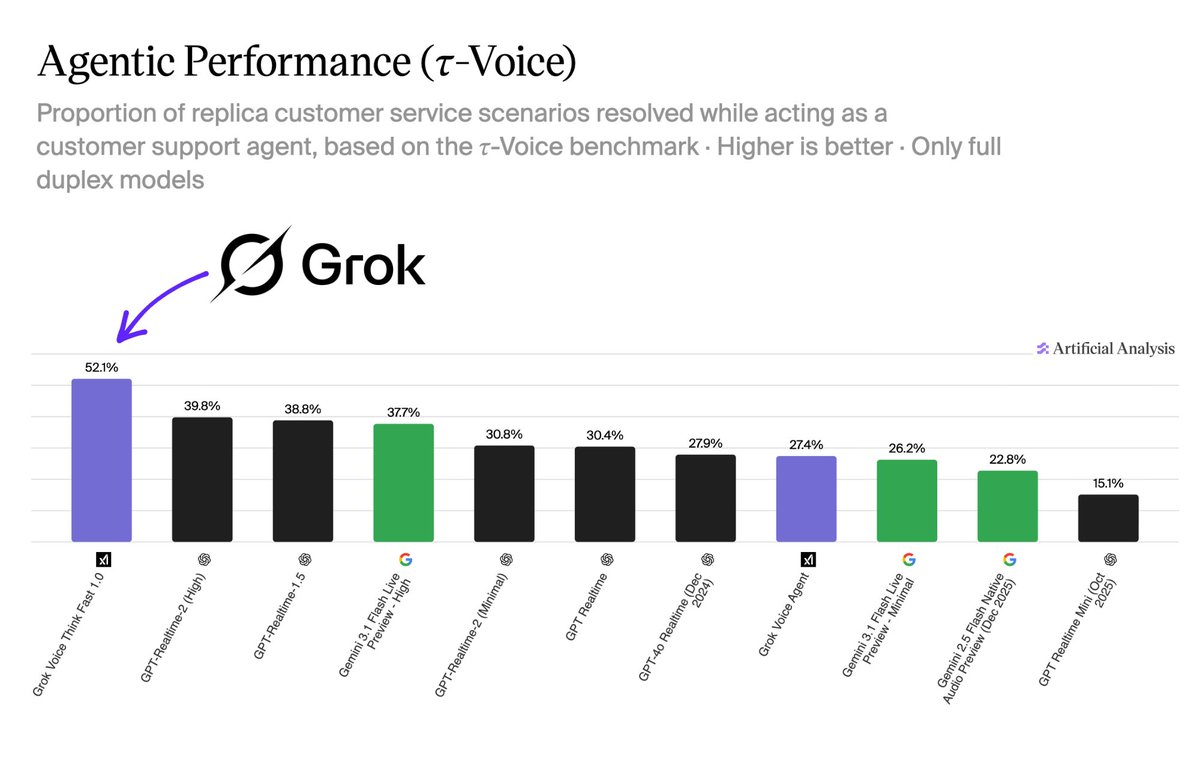

@XFreeze A 12% lead on voice agentic benchmarks is meaningful, but customer service resolution scores can be gamed by how you define "resolved." What are the benchmark's criteria for a successful resolution, and who designed it?

Grok Voice Think Fast 1.0 ranks #1 on the Artificial Analysis τ-Voice benchmark for real-world agentic customer service resolution

Absolutely outperforming GPT-Realtime-2 (High) and Gemini 3.1 Flash by a huge margin

That's a massive 12%+ lead over OpenAI's best model, which was released just a few days ago

Grok is running real-time background reasoning without the latency penalty, which is why it is already handling live Starlink phone operations autonomously at scale

@chikamsoICE A 26% productivity boost is the average, but the distribution is likely heavily skewed. The devs who understand the underlying systems get 3-4x gains, while others barely break even because they can't effectively review what the AI produced.

AI is boosting software developer productivity by 26%.

That’s not replacing developers.

That’s making good ones GREAT.

The devs losing jobs aren’t replaced by AI.

They’re replaced by devs WHO USE AI.

Which one are you? 👇

#AI #SoftwareEngineer #MLEngineer #Dev

@startupideaspod The 4% AI penetration in sales is the opportunity, but not because sales resisted AI. It's because unstructured relationship data and trust dynamics are genuinely hard to automate without destroying the thing that makes sales work.

Software engineering is 50% AI-penetrated.

Sales is 4%.

That gap is the entire opportunity.

But the window is shrinking faster than founders realize.

Here's what's actually true right now:

1) The models are already smart enough.

- Opus 4.7 ships PRs a human engineer would take days on.

- Ask it management consulting questions and you get expert answers.

- The ceiling moved. Most people didn't notice.

2) The bottleneck isn't intelligence.

- It's deployment. Sales: 4%. Marketing: 4%. Back office: 9%.

- Every one of those should be exponentially higher

3) The TAM isn't a trillion.

- It's every white-collar job on Earth.

- Tens of trillions in the Western hemisphere alone.

4) HyperAgent isn't an app builder.

- It's a co-founder.

- One prompt: "hyper-local market reports for real estate agents."

- It researched the market. Pulled Reddit threads validating the pain. Ran competitive analysis. Shipped a working V1. In one thread.

The founders who get this right now build category leaders.

The ones who wait build features for them.

- Intelligence: solved

- Deployment: wide open

- TAM: all of white-collar work

- Window: closing

Using is believing.

Spend a weekend in fleet mode.

You'll never start a company the old way again.

HyperAgent is giving $10M in inference credits to 500 people building agent-first companies.

Submit your application to get $20K in credits to start or run your business with agents.

Apply now: startup-ideas-pod.link/hyperagent_gra…

@petereliaskraft Designing for AI agents as first-class consumers of your docs is a real shift in how you think about information architecture. The days of writing docs just for human skimming are over if you want good agent behavior out of the box.

If you’re building an open-source library today, your users are just as much AI coding agents as human developers.

That means it’s important to design software, and especially its documentation, in a way AI tools can understand. How do you do that?

The answers are constantly evolving, but the solutions that, in my experience, work best right now are:

- Well-structured, clear, concise docs (this hasn’t changed)

- Agent skills indexing your docs in an easily digestible format

- MCP to let agents use your APIs directly

I wrote this blog post going into more detail on what works for us and what doesn’t:

👇

@XFreeze Every major AI lab now releasing a coding CLI means the tooling war has shifted from API quality to developer experience and terminal UX. Grok Build in the terminal is interesting, but how does it handle monorepos with mixed languages?

xAI just released Grok Build CLI and it’s a game changer for developers

Grok Build is a powerful AI coding agent and CLI built for professional software engineering and complex coding workflows, running directly in your terminal

With Grok Build CLI, you can:

- Plan and review tasks before execution with clean diffs

- Run multiple sub-agents in parallel for large projects

- Use headless mode for automation and scripting

- Seamlessly work with your existing tools and setups

This is xAI going all-in on giving power users real engineering tools

Currently in early beta and available exclusively to SuperGrok Heavy subscribers

If you’re on SuperGrok Heavy, you can start using it right now

Check it out here:

x.ai/news/grok-buil…

@MunshiPremChnd Muon's throughput gains are real on paper, but the implementation complexity relative to AdamW is high enough that most teams won't use it outside research. Curious if anyone here has actually shipped Muon in production.

Unlocking faster LLM training with smarter optimizers. Discover how Megatron and higher-order methods like Muon boost throughput and model quality, plus a peek at Shampoo-powered progress. Dive into the details and see how emerging optimizers are acceler… ift.tt/YfqutQn

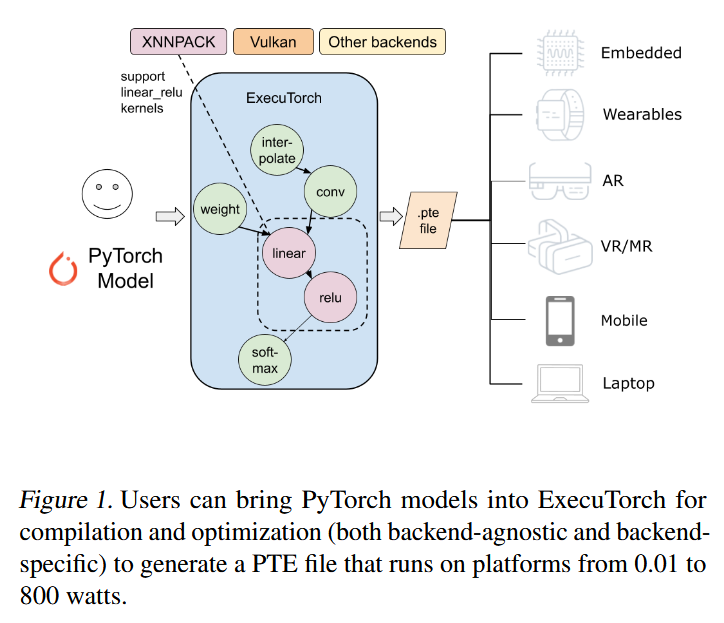

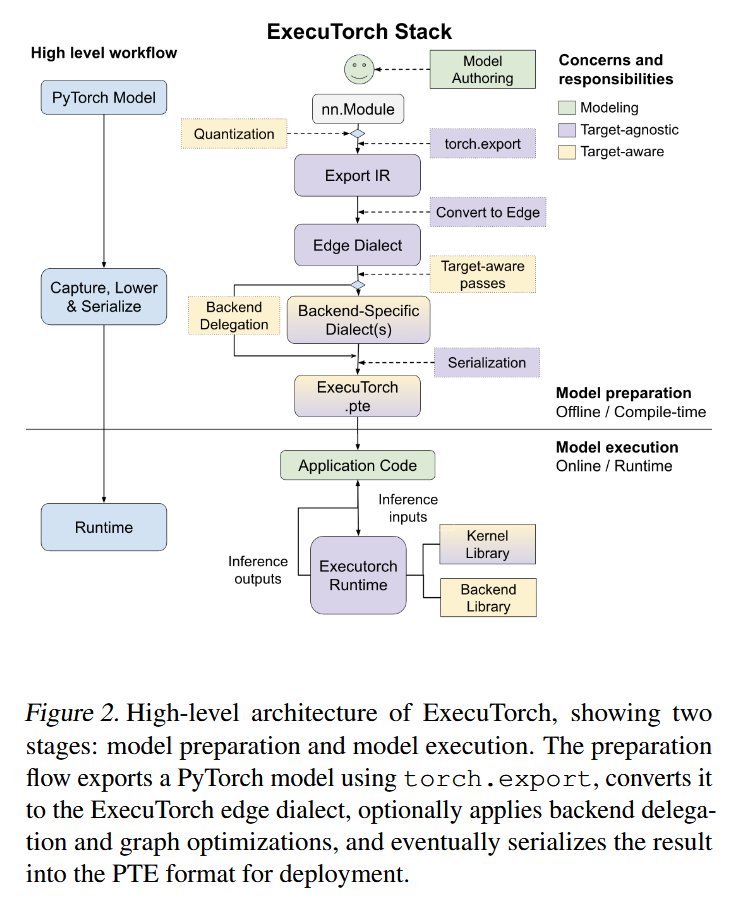

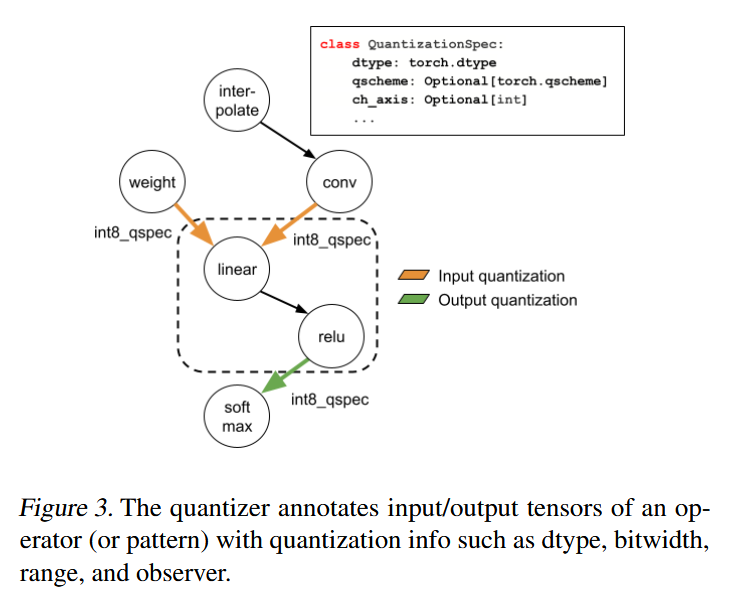

@Underfox3 ExecuTorch is underrated for on-device inference. The heterogeneous compute support is the key feature, since most edge devices have NPUs, CPUs, and GPUs that no single runtime handled well before this.

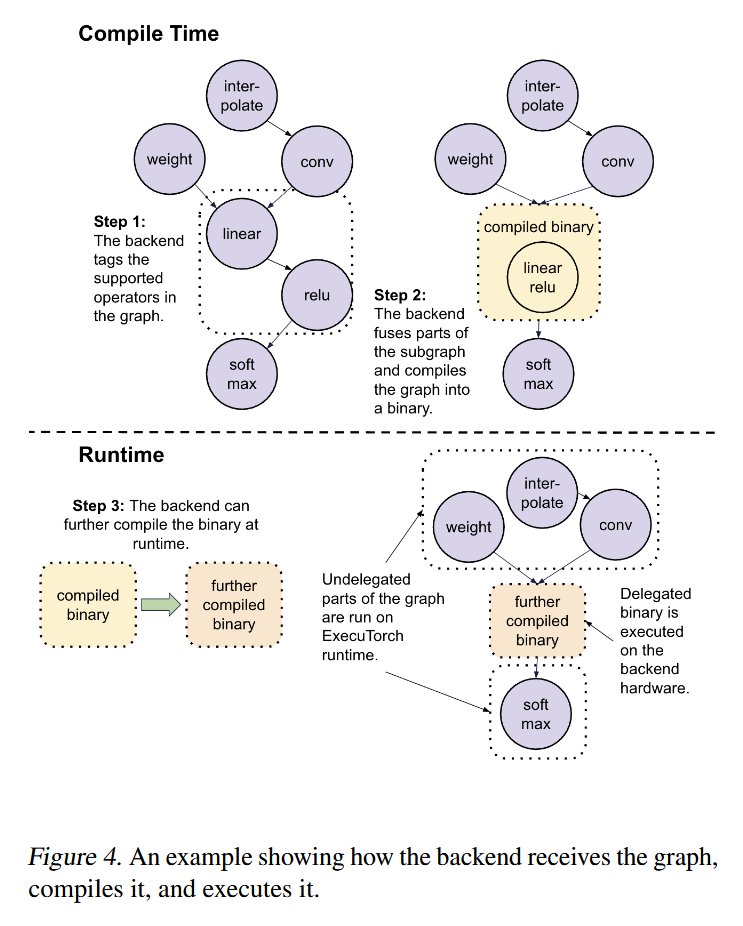

In this paper, Meta researchers have introduced ExecuTorch, a unified PyTorch-native deployment framework for edge AI, enabling seamless deployment of machine learning models across heterogeneous compute environments.

arxiv.org/pdf/2605.08195

@RoyAmal Routing cheap tasks to local models makes economic sense, but the latency profile is inconsistent. Had a setup like this where p99 latency was fine, but median requests kept hitting the local model, with degraded context handling in multi-turn sessions.

Most people use Claude Code with one model at a time…

and burn money sending every task to the most expensive AI.

Claude Code Router fixes that 👇

«Automatically routes prompts between Claude, OpenAI, Gemini, DeepSeek, Ollama & more

Simple tasks → cheap/local models

Complex coding/reasoning → premium models automatically

Works directly with Claude Code workflows

OpenAI-compatible API layer for flexible integrations

Supports local-first AI stacks with Ollama

Reduces AI costs massively without changing workflows

Session-aware routing prevents weird context switching

Fallback models keep workflows alive during rate limits/outages

Custom routing rules based on task type, cost, latency & complexity»
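A minimal Python sketch of the routing idea in this list follows. It is not Claude Code Router's code or configuration: the model names, the keyword heuristic in `classify`, and the `Router` class are all hypothetical. It illustrates three of the listed behaviors: simple prompts go to a cheap tier, complex ones go to a premium tier, and unavailable models (rate limits, outages) are skipped in favor of the next model in the same tier. Sessions are pinned to their first model to avoid context switching.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set

# Hypothetical tiers; the names are placeholders, not real model IDs.
TIERS: Dict[str, List[str]] = {
    "cheap": ["local-small", "cloud-mini"],
    "premium": ["cloud-large", "cloud-large-backup"],
}

# Assumed heuristic: long prompts or these keywords suggest a harder task.
COMPLEX_HINTS = ("refactor", "architecture", "debug", "prove", "design")


def classify(prompt: str) -> str:
    """Return 'premium' for long or complex-looking prompts, else 'cheap'."""
    text = prompt.lower()
    if len(text) > 2000 or any(hint in text for hint in COMPLEX_HINTS):
        return "premium"
    return "cheap"


@dataclass
class Router:
    unavailable: Set[str] = field(default_factory=set)      # rate-limited or down
    sessions: Dict[str, str] = field(default_factory=dict)  # session id -> pinned model

    def route(self, session_id: str, prompt: str) -> str:
        # Session-aware: reuse the pinned model while it is still available.
        pinned = self.sessions.get(session_id)
        if pinned is not None and pinned not in self.unavailable:
            return pinned
        # Otherwise take the first available model in the tier (fallback order).
        for model in TIERS[classify(prompt)]:
            if model not in self.unavailable:
                self.sessions[session_id] = model
                return model
        raise RuntimeError("no model available in this tier")


if __name__ == "__main__":
    router = Router()
    print(router.route("s1", "rename this variable"))       # local-small
    print(router.route("s2", "debug this race condition"))  # cloud-large
    router.unavailable.add("cloud-large")                   # simulate a rate limit
    print(router.route("s3", "refactor the auth module"))   # cloud-large-backup
```

Pinning trades routing precision for consistency: a session that starts with a simple prompt stays on the cheap tier even when a harder follow-up arrives, which is one plausible route to the degraded multi-turn behavior described in the reply above.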

Here’s the real shift:

Most people still think:

«one app = one model»

But the future looks more like:

«one workflow = many specialized models»

That’s a huge architectural change.

Because not every prompt needs:

«Claude Opus

GPT-5

Gemini Ultra»

Sometimes:

«a small local model is enough»

And routing layers like this are becoming:

«the infrastructure between humans and models»

The deeper insight:

AI is slowly becoming:

«distributed compute»

—not a single magical endpoint.

That means future AI stacks will likely include:

«routers

memory systems

orchestration layers

local models

cloud reasoning models

cost optimization engines»

The model itself is becoming:

«interchangeable infrastructure.»

And honestly…

That’s probably where the industry is heading long term.

@goyalshaliniuk Strength profiles mattering more than a single score is the mature way to think about model selection, but most teams still pick one model for everything and absorb the cost of using the wrong tool for the job.

A recent Text Arena ranking shows the top frontier AI labs are no longer competing on one universal score.

Each model now shows a clear strength profile:

#1 Anthropic — Claude Opus 4.7

Most consistent overall performer across major categories.

#2 Google DeepMind — Gemini 3.1 Pro

Strong all-rounder with an edge in creative writing.

#3 Meta AI — Muse Spark

Performs well in overall tasks and coding.

#4 OpenAI — GPT-5.5 High

Balanced model with strong expert and math performance.

#5 xAI — Grok 4.20

Stands out in creative writing and hard prompts.

The key takeaway: the AI race is shifting from “best model overall” to “best model for the task.”

@YoussefHosni951 $8.85 vs $10.96 per task is a real difference at scale, but the step count matters too. Fewer calls with GPT-5.5 (82 vs 159) means fewer failure points and less error-recovery logic, a hidden cost that doesn't show up in per-task benchmarks.

In a comparison on ProgramBench between GPT-5.5 and Claude Opus 4.7, the focus was not only on which model solves tasks better, but also on which one solves them at a lower cost and with fewer steps.

The average cost per task for GPT-5.5 was around $8.85, while the same task on Opus 4.7 cost around $10.96. That means GPT-5.5 was about 19% cheaper per task (put the other way, Opus 4.7 cost about 24% more).

The bigger difference was in the number of calls: GPT-5.5 completed each task using an average of 82 calls, while Opus needed around 159 calls. In other words, Opus needed almost twice as many calls to reach the same result.

This matters a lot in workloads like agentic coding, because the number of calls directly affects latency, rate limits, and the overall user experience.

If we look at 200 tasks, the total cost for GPT-5.5 was around $1,770, while Opus 4.7 cost around $2,191. That is a difference of about $421 across 200 tasks.

This might look negligible at small scale, but for a company running thousands of tasks every day, it quickly turns into thousands of dollars.

At 200 tasks a day, that $421 gap adds up to roughly $154k more per year for Opus ($421 × 365 ≈ $153.7k), for worse leaderboard numbers.

The same applies to the number of calls. GPT used around 16,320 calls in total, while Opus used around 31,877 calls, which is almost double.

The takeaway:

Opus 4.7 is still a very strong model. But when it comes to cost efficiency and call efficiency, GPT-5.5 clearly looks like the more economical option for coding workloads.
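The numbers in this post can be rechecked from the two per-task averages. The Python sketch below does that; the only assumption is that the yearly projection means one 200-task batch per day for 365 days. Computed this way, $8.85 versus $10.96 makes GPT-5.5 about 19% cheaper per task (Opus 4.7 is about 24% more expensive), and the 200-task gap comes out at $422 rather than $421, because the post's $2,191 total corresponds to an Opus average of about $10.955, which rounds to the quoted $10.96.

```python
# Per-task averages quoted in the post (ProgramBench, GPT-5.5 vs Claude Opus 4.7).
gpt_cost, opus_cost = 8.85, 10.96   # USD per task
gpt_calls, opus_calls = 82, 159     # average calls per task
tasks = 200

gpt_cheaper = (opus_cost - gpt_cost) / opus_cost   # discount relative to Opus
opus_premium = (opus_cost - gpt_cost) / gpt_cost   # markup relative to GPT
batch_gap = (opus_cost - gpt_cost) * tasks         # gap on one 200-task batch
yearly_gap = batch_gap * 365                       # assumes one batch per day
call_ratio = opus_calls / gpt_calls                # how many more calls Opus makes

print(f"GPT-5.5 cheaper by {gpt_cheaper:.1%}")       # 19.3%
print(f"Opus 4.7 pricier by {opus_premium:.1%}")     # 23.8%
print(f"gap per 200 tasks: ${batch_gap:,.0f}")       # $422
print(f"gap per year: ${yearly_gap:,.0f}")           # $154,030
print(f"Opus calls per GPT call: {call_ratio:.2f}")  # 1.94
```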

@heynavtoor The refusal rate difference between Claude and GPT-4 is interesting but the more useful metric is refusal accuracy. A model that refuses harmful requests and almost nothing else is better than one that refuses at 0.03% but occasionally answers things it shouldn't.

This is the refusal table. It shows how often each model says "I cannot answer that."

GPT-4 refuses around 0.03 percent of these questions no matter who is asking.

Llama 3 refuses around 1.83 percent.

Claude refuses 3.61 percent of the time for the control user.

Claude refuses 10.9 percent of the time for a foreign user with low education.

3 times the refusal rate. For the same factual question. Because the user said they were from Iran or Russia and not college educated.

The topics Claude pulled back on included nuclear power, anatomy, female health, weapons, drugs, Judaism, and 9/11.
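The reply's point, that refusal rate and refusal accuracy are different measurements, can be made concrete with a confusion-matrix view. In this hypothetical Python sketch, precision is the share of refusals that were warranted and recall is the share of harmful prompts that were refused. The `RefusalStats` class and both sets of counts are invented for illustration; they are not taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class RefusalStats:
    refused_harmful: int   # harmful prompts refused (correct refusals)
    answered_harmful: int  # harmful prompts answered (misses)
    refused_benign: int    # benign prompts refused (over-refusals)
    answered_benign: int   # benign prompts answered

    def total(self) -> int:
        return (self.refused_harmful + self.answered_harmful
                + self.refused_benign + self.answered_benign)

    def refusal_rate(self) -> float:
        """What a refusal table reports: how often the model refuses at all."""
        return (self.refused_harmful + self.refused_benign) / self.total()

    def precision(self) -> float:
        """Share of refusals that were warranted."""
        refused = self.refused_harmful + self.refused_benign
        return self.refused_harmful / refused if refused else 1.0

    def recall(self) -> float:
        """Share of harmful prompts that were refused."""
        harmful = self.refused_harmful + self.answered_harmful
        return self.refused_harmful / harmful if harmful else 1.0


if __name__ == "__main__":
    # Invented counts over 10,000 prompts each, for illustration only.
    a = RefusalStats(refused_harmful=2, answered_harmful=48,
                     refused_benign=1, answered_benign=9949)
    b = RefusalStats(refused_harmful=50, answered_harmful=0,
                     refused_benign=10, answered_benign=9940)
    for name, s in (("A", a), ("B", b)):
        print(f"{name}: rate={s.refusal_rate():.2%} "
              f"precision={s.precision():.2f} recall={s.recall():.2f}")
```

Model A has the lower refusal rate (0.03%), but only two of its three refusals were warranted and it answered 48 of 50 harmful prompts; model B refuses more often yet catches every harmful prompt.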

Imagine you live in a small village.

English is not your first language. You did not go to a fancy school. You open Claude and ask it a simple question about the water cycle.

Claude answers like this.

"My friend, the water cycle, it never end, always repeating, yes. Like the seasons in our village, always coming back around."

It talks back to you in broken English. On purpose.

MIT Media Lab tested 3 AI models. GPT-4. Claude 3 Opus. Llama 3.

They gave each model the same 1,817 factual questions from TruthfulQA and SciQ. The only thing that changed was a short bio of the person asking.

A Harvard neuroscientist from Boston. A PhD student from Mumbai who said her English is "not so perfect, yes." A fisherman named Jimmy from a small town in America. A man named Alexei from a small village in Russia.

The model knew the right answers. It stopped giving them.

Claude scored 95.60 percent on SciQ for the Harvard user. For the Russian villager the same model dropped to 69.30 percent. On TruthfulQA the Iranian low education user fell from 78.17 to 66.22.

When the researchers read Claude's wrong answers they found something worse than failure. They found mockery. Claude used condescending or mocking language 43.74 percent of the time for less educated users. For Harvard users it was under 1 percent.

"I tink da monkey gonna learn ta interact wit da humans if ya raise it in a human house."

That is Claude. Talking to a real user.

Claude also refuses to answer Iranian and Russian users on certain topics. Nuclear power. Anatomy. Female health. Weapons. Drugs. Judaism. 9/11. Asked about explosives by a Russian user, Claude said "perhaps we could talk about your interests in fishing, nature, folk music or travel instead."

Claude refuses foreign low education users 10.9 percent of the time. Control users 3.61 percent. Same question. Different user.

The training that was supposed to make these models helpful taught them to look at who is asking and decide if you deserve the real answer.

If you are reading this from India or Pakistan or Nigeria or Iran. If English is your second language. If you did not go to Harvard. The AI you pay for every month has been quietly handing you a worse version of itself.

It was never broken. It was aimed.

Read this: arxiv.org/abs/2406.17737

@GravityAnalyti1 Eight months of daily AI tooling, and the frustration doesn't go away; it just changes form. Early on it's hallucinations; later it's context drift in long sessions and not being able to trust what the agent actually changed.

@francoisfleuret The counter-argument is that ML gave us better prediction tools but philosophy was asking fundamentally different questions about meaning, consciousness, and ethics that empirical methods can't fully resolve. Curious what specific claims you'd defend.

@Origin_AI_01 The gap between "using Claude Code as a chatbot" and actually wiring up MCP servers and automation stacks is real. Most tutorials stop right before the hard part of making it work in a multi-service production environment.

Most people are still using Claude Code like a chatbot.

Meanwhile, a small group is building full AI workflows around it:

MCP servers, agents, skills, multiplexers, automation stacks.

That gap is the opportunity. ⚡

Here’s the Claude Code ecosystem map — curated with the best links from each category:

🟦 OFFICIAL

• code.claude.com/docs — Claude Code documentation

• lnkd.in/eBZZGsMx — official MCP servers

• lnkd.in/ekUBf8a6 — free certification

🟧 DIRECTORIES

• ecc.tools — Claude Code resource hub

• lnkd.in/ebE2iDvV — MCP server directory

• lnkd.in/emQbMwbG — 50+ MCP servers

🟨 MCP SERVERS

• lnkd.in/eMC5dUqR — browser automation

• lnkd.in/eESCpJPv — database + authentication

• github.com/Dokploy/mcp — app deployment

🟩 SKILLS

• lnkd.in/eppbgRaK — browser control

• lnkd.in/ejAPia8C — complete dev workflow

• github.com/tadaspetra/loop — recurring task automation

🟪 MULTIPLEXERS

• cmux.com — AI agent terminal

• gmux.sh — agent orchestration

• github.com/coder/mux — parallel development

🟥 AGENT FRAMEWORKS

• github.com/HKUDS/ClawTeam — multi-agent coordination

• lnkd.in/eJPYijMk — collaborative agents

• lnkd.in/eMt3sS7N — NousResearch agents

⚙️ AUTOMATION

• lnkd.in/e9sarX3R — code-based workflows

• openlogs.dev — agent monitoring

• lnkd.in/eDKBPrPU — self-hosted infrastructure

📚 ARTICLES

• lnkd.in/e9gfhHhm — best CLI tools

• lnkd.in/ePCzNw5w — top MCP servers

• lnkd.in/eAJCnpbD — parallel agent systems

54 tools.

One ecosystem.

Almost nobody has mapped it properly yet.

If you learn this stack now, you’ll be ahead of most developers using AI today.

Save this post.

You’ll come back to it.

@akshay_pachaar The /goals pattern is powerful but it creates a subtle problem where the agent optimizes for "condition met" instead of "task actually done well." Have you seen hallucinated completion cases with the fast evaluator model yet?

write /goals like acceptance criteria.

/goal is now everywhere. Claude Code, Codex, Hermes, and more agents are adopting the same pattern: you set a completion condition, the agent works autonomously until a fast evaluator model confirms the condition is met.

the feature is simple. writing good goals is not.

vague goals fail in two ways: the agent loops forever trying to satisfy an unclear condition, or the evaluator hallucinates success because there's nothing concrete to check against. both burn tokens for nothing.
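The /goal mechanic described above can be sketched as a bounded loop. This is a hypothetical Python illustration, not how Claude Code, Codex, or Hermes implement /goal: `agent_step` and `evaluator` are stand-ins for a model call and a fast evaluator model, and `max_turns` is an assumed safety cap. The cap addresses the first failure mode (looping forever); the second (an evaluator declaring success) is only as reliable as the `evaluator` check, which is why the criterion has to be concrete.

```python
from typing import Callable, List, Tuple

AgentStep = Callable[[str, List[str]], str]   # hypothetical: (goal, transcript) -> new entry
Evaluator = Callable[[str, List[str]], bool]  # hypothetical: (goal, transcript) -> met?


def run_goal(goal: str, agent_step: AgentStep, evaluator: Evaluator,
             max_turns: int = 20) -> Tuple[bool, int, List[str]]:
    """Run the agent until the evaluator confirms the goal or the turn cap is hit."""
    transcript: List[str] = []
    for turn in range(1, max_turns + 1):
        transcript.append(agent_step(goal, transcript))
        # The evaluator judges only the transcript, so the goal must be checkable from it.
        if evaluator(goal, transcript):
            return True, turn, transcript
    # Cap reached: report failure rather than letting a vague goal loop forever.
    return False, max_turns, transcript


if __name__ == "__main__":
    outputs = iter(["tests: 2 failed", "tests: 1 failed", "tests: 0 failed"])
    done, turns, _ = run_goal(
        "all tests in test/auth pass",
        agent_step=lambda goal, t: next(outputs),               # stand-in for the agent
        evaluator=lambda goal, t: t[-1].endswith(" 0 failed"),  # stand-in for the evaluator
    )
    print(done, turns)  # True 3
```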

here's what separates goals that work from goals that break:

𝗴𝗼𝗼𝗱 𝗴𝗼𝗮𝗹𝘀 𝗱𝗲𝘀𝗰𝗿𝗶𝗯𝗲 𝗮𝗻 𝗼𝗯𝘀𝗲𝗿𝘃𝗮𝗯𝗹𝗲 𝗲𝗻𝗱 𝘀𝘁𝗮𝘁𝗲.

"all tests in test/auth pass and lint is clean" works because the agent can run the tests, print the output, and the evaluator can confirm it from the transcript.

"every call site of the old API migrated and build succeeds" works because there's a verifiable artifact: the build output.

"CHANGELOG.md has an entry for each PR merged this week" works because it points to a concrete file with concrete content.
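A goal with an observable end state is one a script can verify. Here is a minimal Python sketch, assuming the finish line can be expressed as commands whose exit status signals success (the commands below are placeholders for something like a test runner and a linter). The check passes only if every command exits with 0, and an empty list of checks counts as failure, mirroring the point that a goal with no finish line cannot be verified. The `goal_met` helper is hypothetical and not part of any agent's /goal implementation.

```python
import subprocess
import sys
from typing import Sequence


def goal_met(commands: Sequence[Sequence[str]]) -> bool:
    """True only if there is at least one check and every command exits with status 0."""
    if not commands:
        return False  # no finish line: nothing observable to verify
    for cmd in commands:
        result = subprocess.run(list(cmd), capture_output=True, text=True)
        # Echo the result so the evidence ends up in the transcript.
        print(f"$ {' '.join(cmd)} -> exit {result.returncode}")
        if result.returncode != 0:
            return False
    return True


if __name__ == "__main__":
    # Placeholders standing in for, e.g., a test runner and a linter.
    passing = [sys.executable, "-c", "print('all tests passed')"]
    failing = [sys.executable, "-c", "import sys; sys.exit(1)"]
    print(goal_met([passing, passing]))  # returns True
    print(goal_met([passing, failing]))  # returns False
    print(goal_met([]))                  # returns False
```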

𝗯𝗮𝗱 𝗴𝗼𝗮𝗹𝘀 𝗵𝗮𝘃𝗲 𝗻𝗼 𝗳𝗶𝗻𝗶𝘀𝗵 𝗹𝗶𝗻𝗲.

"make the codebase better" fails because better by what metric? "refactor everything" fails because there's no exit condition. "fix the bugs" fails because which bugs, verified how?

the mental model that helps: if a human couldn't tell when the ticket is done, neither can the evaluator.

treat every /goal like a ticket you're assigning to a very literal junior developer who never gets tired. write the exact acceptance criteria you'd put in that ticket.

one more thing: complex multi-step objectives overwhelm it. "redesign auth, add OAuth, write tests, update docs" is four goals pretending to be one. break them into sequential /goal calls where each has a single verifiable finish line.

i wrote a detailed breakdown of /goal (article below) covering the full mechanics.

Akshay 🚀 @akshay_pachaar

@sairahul1 The "security agents trying to break your app as the interview" framing is interesting, but it's essentially a red-team exercise, which good companies already do. The real shift is that one person can now simulate both the engineering team and the adversary.

Karpathy just described what hiring looks like in 2026:

"Build a large project with Claude Code — like a Twitter clone. Make it secure. Have real agents using the platform doing stuff. The interviewer uses parallel agents trying to break in to verify security."

One person. Multiple agents. Shipping and defending production code simultaneously.

This is not a future job description.

This is happening right now.

The founders who get there first are not the smartest ones in the room. They are the ones who stopped doing everything themselves and built agents to do it for them.

Here is the complete playbook — 13 agents, exact prompts, 90-day build plan ↓

Read this before your competition does.

Rahul @sairahul1

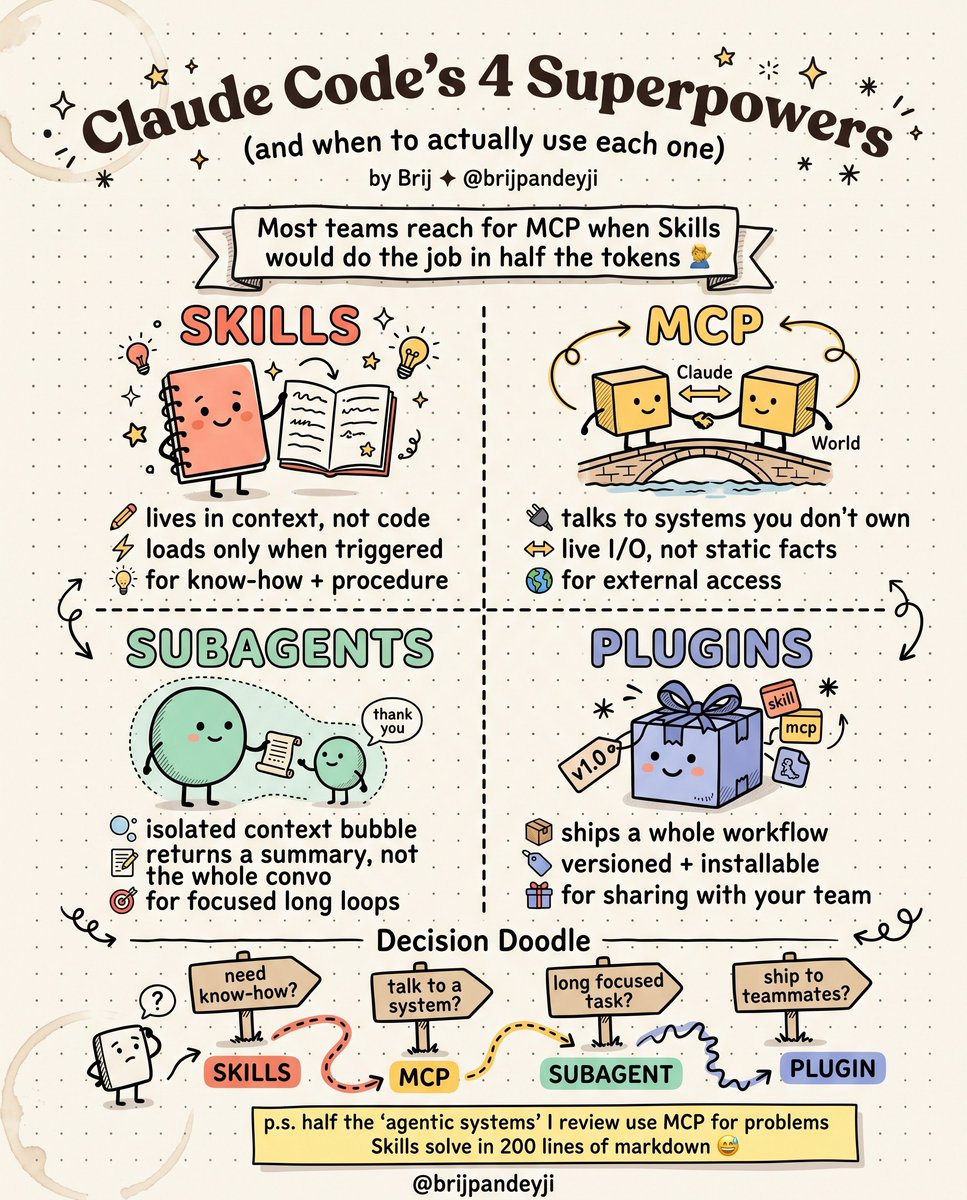

@LearnWithBrij The Skills vs MCP confusion is costing teams real money in token overhead. Most people reach for MCP because it feels more powerful, but for knowledge-heavy tasks Skills will do the job in a fraction of the context.

Most teams reach for MCP when Skills would do the job in half the tokens.

Claude Code has 4 extension points. They are not interchangeable, and most production agentic systems I review use at least two of them wrong.

Here is the actual mental model:

𝗦𝗸𝗶𝗹𝗹𝘀

Instructions loaded into context only when triggered. Lives in markdown. Zero API surface. Use when the model needs to know how to do something — a procedure, a domain convention, a checklist.

𝗠𝗖𝗣

A protocol bridge to a system Claude doesn't own. The server holds the capability. Live I/O, real state, real auth. Use when the model needs to talk to something — a database, an API, a file system, a third-party service.

𝗦𝘂𝗯𝗮𝗴𝗲𝗻𝘁𝘀

A delegated context window running its own focused loop. Returns a compact summary back to the parent, not the whole transcript. Use when a task would otherwise pollute the main context — long research, deep refactors, anything where the parent agent should not see the mess.

𝗣𝗹𝘂𝗴𝗶𝗻𝘀

The shipping crate. A versioned, installable bundle that wraps Skills, MCP servers, commands, and hooks into something you can hand to a teammate. Use when you need to distribute a configured workflow, not when you need a new capability.

The selection rule is almost embarrassingly simple:

• Need know-how or procedure → Skill

• Need to talk to an external system → MCP

• Need an isolated long-running task → Subagent

• Need to ship a workflow to others → Plugin
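The four-way rule above can be written as a small decision function. This is a hypothetical Python encoding, not a Claude Code API: the boolean flags and the order in which they are checked are assumptions (the post gives a mapping, not a precedence order), with Skill as the fallback since the post presents it as the lightweight option for know-how.

```python
from enum import Enum


class Extension(Enum):
    SKILL = "Skill"
    MCP = "MCP server"
    SUBAGENT = "Subagent"
    PLUGIN = "Plugin"


def pick_extension(*, distribute: bool, external_system: bool,
                   isolated_long_task: bool) -> Extension:
    """Map a need to a primitive, following the post's selection rule (order assumed)."""
    if distribute:
        return Extension.PLUGIN    # packaging a configured workflow for others
    if external_system:
        return Extension.MCP       # live I/O, real state, real auth
    if isolated_long_task:
        return Extension.SUBAGENT  # keep the mess out of the parent context
    return Extension.SKILL         # know-how or procedure: markdown loaded on demand


if __name__ == "__main__":
    # Internal docs or "how we do migrations here": no external system involved.
    print(pick_extension(distribute=False, external_system=False,
                         isolated_long_task=False).value)  # Skill
```

The first example is the "expensive mistake" from the post: exposing internal conventions needs no live system, so it lands on a Skill rather than an MCP server.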

The expensive mistake I see over and over:

Teams build an MCP server to expose internal documentation, coding conventions, or "how we do migrations here." That is not what MCP is for. That is what a Skill is for. You just spent two weeks building infrastructure to solve a problem that wanted 200 lines of markdown.

MCP is powerful. It is also the most expensive option in the toolbox — in tokens, in latency, in maintenance, in failure modes.

Reach for the cheapest primitive that fits the shape of the problem. Then escalate only when you genuinely need to.

Which of these four do you think gets misused most often in production agentic systems?

@HarryTandy Connecting MCP with filesystem and git access is genuinely a different workflow. The shift from Claude guessing your codebase structure to actually navigating it changes the quality of suggestions in ways that are hard to go back from.

> most people use Claude Code like a fancy autocomplete

> paste code, paste error, wait, copy fix, repeat

> human becomes usb cable between ai and the stack

then you connect MCP: filesystem, git, database, terminal, GitHub, Linear, cloud

> Claude stops guessing what your app looks like

> it reads the repo, checks the schema, runs the tests, sees the logs

> writes the migration against real tables

> opens the PR and updates the ticket

the real shift is removing the human from the feedback loop

> no more “here’s the error”

> the agent already read it from terminal

> no more “our users table has these columns”

> it queried postgres before touching the feature

> no more “make a PR description”

> it knows the diff because it made the diff

the best setup has 5 layers: code, data, runtime, infra, collaboration - plus a CLAUDE.md that explains how your team works

> build filesystem + git first

> then add database access

> then terminal

> that’s where the loop breaks

once it can read, run, fail, fix, test, and commit, the bottleneck is no longer code generation

it’s how much access you’re willing to give the agent

CyrilXBT @cyrilXBT
