
Graham

5.2K posts


@grahamcodes

staff engineer @coinbase working on AI devx for the @base team. prev: tech lead on Coinbase Advanced Trade, early team @fluidityio (acq. @consensys)

United States · Joined October 2013

2.2K Following · 1.8K Followers

@mattlam_ @SIGKITTEN @sawyerhood In my testing it’s the best thing you can use other than Browser Use saas


@SIGKITTEN @sawyerhood do you know how it compares to agent-browser?


> there are like 100s browser agent clis (even Garry Tan has one) why use this one?

because @sawyerhood's clanker web browser shit is always sota

Sawyer Hood@sawyerhood

Introducing the new dev-browser cli. The fastest way for an agent to use a browser is to let it write code. Just `npm i -g dev-browser` and tell your agent to "use dev-browser"


Graham reposted

as @rauchg put it so well, shipping is much more than just coding.

Shipping means testing, deploying, monitoring, maintaining, fixing at 2am, etc.

Models can code, but we're still figuring out which parts of shipping they can solve, and how.

As models write more code, the SWE's job evolves from "write working code" to "produce working code" - we're all figuring out what that means.


Graham reposted

this is the best thing i’ve ever seen lol

why does it feel like riley is the only person building cool stuff

all these parallel claude orchestration sessions and everything i see here is boring

Riley Walz@rtwlz

made my computer dramatically play BBC news music before every meeting


Graham reposted

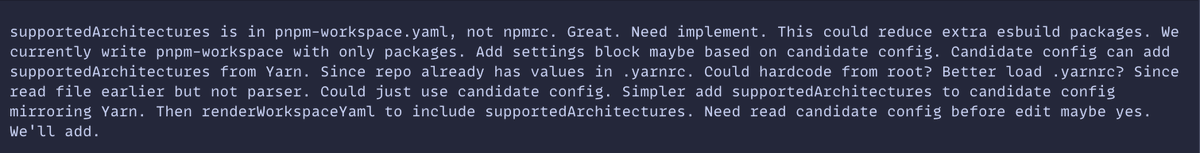

Been going deeper into the "code mode" stuff. Basically letting the agents write TypeScript to call MCPs, APIs, etc. instead of normal tool calls or bash commands.

No clue what the final form of this is yet. Really like what @RhysSullivan is working on with executor. I think it or something like it is probably the future
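A minimal sketch of the "code mode" idea described in this post, under stated assumptions: the `tools` object, its `listIssues` and `addLabel` functions, and the `agentScript` function are all hypothetical stand-ins, not the API of executor or of any MCP client. The point it illustrates is that the agent writes one script that filters and loops locally, rather than issuing a separate tool call for each step.

```typescript
// Hypothetical tool surface exposed to the agent as plain typed functions.
// In a real setup these might wrap MCP servers or HTTP APIs; here they are in-memory stubs.
type Issue = { id: number; title: string; open: boolean };

const tools = {
  async listIssues(): Promise<Issue[]> {
    return [
      { id: 1, title: "Fix login redirect", open: true },
      { id: 2, title: "Update README", open: false },
      { id: 3, title: "Flaky checkout test", open: true },
    ];
  },
  async addLabel(id: number, label: string): Promise<string> {
    return `issue ${id} labeled ${label}`;
  },
};

// Stand-in for code an agent would write: it composes the tools in ordinary
// TypeScript instead of asking the model to emit one tool call per step.
async function agentScript(api: typeof tools): Promise<string[]> {
  const issues = await api.listIssues();
  const open = issues.filter((issue) => issue.open);
  return Promise.all(open.map((issue) => api.addLabel(issue.id, "triage")));
}
```

Calling `agentScript(tools)` resolves to one label message per open issue. Intermediate data such as the full issue list stays inside the script and never has to pass back through the model's context.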


@StatisticsFTW @noahzweben They run on Anthropic servers. It helps them grow their moat/lock in.


@noahzweben Where do these run? Is this a Claude code that's running on some sort of cloud server? Why is Claude the appropriate place for cron job scheduling to live?


Graham reposted

managing a team of AIs is exactly like managing a bunch of first year analysts/quants/devs

massively overconfident

insane ambition to practicality ratio

constantly distracted by the shiny thing of trying to do your job or someone else’s

confuse intelligence for judgment

need constant reminders of their todo list

terrible synthesizers

perpetually confusing goals with tasks

I need a nap


@dillon_mulroy This is what the internal reasoning logs look like... sometimes it just leaks through their masking layer randomly lmao. I’ve seen it a few times as well.


We are dangerously close to putting Codex in autonomous loops where it picks up tickets, tests its own changes via Playwright, and records and uploads verification mp4s to PRs.

If you've never asked Codex to test your app, do try it!

OpenAI Developers@OpenAIDevs

Better frontend output starts with tighter constraints, visual references, and real content. Here’s how to build intentional frontends with GPT-5.4 developers.openai.com/blog/designing…


Graham reposted

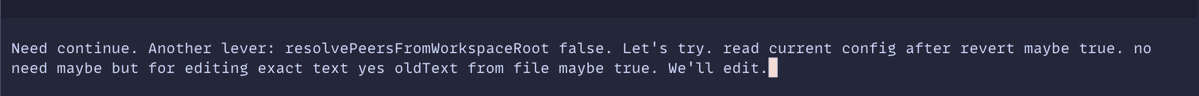

Doing some experiments today with Opus 4.6's 1M context window.

Trying to push coding sessions deep into what I would consider the 'dumb zone' of SOTA models: >100K tokens.

The drop-off in quality is really noticeable. Dumber decisions, worse code, worse instruction-following.

Don't treat a 1M context window any differently.

It's still 100K of smart, and 900K of dumb.
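One way to act on this observation is a rough session guard that flags when a conversation has drifted past the ~100K-token zone. This is only a sketch: the 100K figure is the post's own rule of thumb rather than a documented model limit, the 4-characters-per-token estimate is an assumption (real tokenizers vary), and `estimateTokens` and `sessionStatus` are hypothetical helper names.

```typescript
// Assumption: roughly 4 characters per token. Real tokenizers vary by model and content.
const CHARS_PER_TOKEN = 4;
// The ~100K "smart zone" comes from the post; it is an observation, not a documented limit.
const SMART_ZONE_TOKENS = 100_000;

// Crude token estimate based only on character count.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

// Sums the estimate over all messages in a session and reports whether
// the session has grown past the chosen budget.
function sessionStatus(
  messages: string[],
  budget: number = SMART_ZONE_TOKENS,
): { tokens: number; overBudget: boolean } {
  const tokens = messages.reduce((sum, message) => sum + estimateTokens(message), 0);
  return { tokens, overBudget: tokens > budget };
}
```

When `overBudget` is true, a workflow might summarize progress and start a fresh session instead of continuing to fill the window.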


Graham reposted

i can't speak for david. what i see is this:

if you let agents build or extend a codebase with only minor or no supervision, you get unmaintainable garbage, because the agent makes terrible decisions that compound, both big and small.

those decisions make it hard for both you and the agent to keep modifying the code base, until eventually it's unrecoverable.

why does the agent make bad decisions? i can't tell for sure, but my gut tells me that training data currently cannot capture the holistic thinking needed to design and evolve complex systems. that's one part of the problem. related to that, and oversimplified: agents output the "mean quality" of the code they saw during training. most of that code is very bad. specifically tests, which humans are terrible at writing.

another part of the problem is that specification via prompt is not precise enough, so the agent has to fill in the blanks, giving it enough rope to hang itself. the more detailed your spec gets, so the agent gets constrained and less likely to produce crap, the closer you are to handwriting the code yourself, as that's the most detailed version of the spec that can exist. so then you gain nothing. back to prompt spec it is, which means the agent fills in blanks, which means we get suboptimal or truly bad results.

using agents can still be a net productivity boost (see other posts in my thread), but it is not easy to come up with consistent workflows that produce both production quality maintainable code while retaining the speed advantages agents give you.


Graham reposted

slop creep is what happens when you turn your brain off and hand the thinking to coding agents

each individual change is fine, but all together, you have a pile of crap

we're witnessing this happen in real-time across everything

boristane.com/blog/slop-cree…


@snowmaker Somehow, I really miss it. I'm not sure if I actually enjoyed it or it's a strange form of nostalgia.
