rob a

29 posts

"Show me the critical path."

"Uh, right here. It’s the multiplier. It’s failing setup by 1.2 nanoseconds at 600 megahertz."

"A nanosecond and a half. An eternity. You’re missing the clock edge by a fucking eternity?"

"Well, the synthesizer isn’t packing the DSP slices efficiently, and the routing delay to the accumulator is—"

"Stop.

Do not blame the tools. The tool is a hammer. You are the carpenter. If the table wobbles, you don’t blame the hammer. You blame the incompetent carpenter who doesn't know how to drive a nail. Pull up the RTL... now.

Scroll down to the accumulator block. Line 450... read it to me, what does it say?"

"Ugh... always @(posedge clk) begin if (reset) acc <= 0; else acc <= acc + mult_result; end"

"Do you see it?"

"...See what?"

"He doesn’t see it. The grad student from MIT. He looks at a block of sequential logic and he doesn’t see the cancer he just planted in my silicon. Where is the pipeline stage for mult_result before it hits the adder?"

"Oh. I… I didn’t think I needed one there. The simulation showed the data arriving in time."

"Yes because the simulation is fucking Mario Kart. It’s a fantasy land where physics is a suggestion and wire delay doesn’t exist. We are building fucking hardware. The electrons move through copper traces at a fraction of the speed of light. Do you understand the difference between a video game and reality?"

"Ugh...Yes, Mr. Bubble. I just thought the logic depth wasn't that—"

"THE LOGIC DEPTH IS TWENTY-FOUR GATES DEEP!"

"You have a 32-bit multiplier feeding directly into a 64-bit adder in a single clock cycle at 600 megahertz! What do you think happens to the propagation delay? Do you think the electrons just teleport because you wrote a pretty line of code?"

"I—I can add a register."

"Where? Where do you add it? Show me on the floor plan. Look at this shit. It looks like a plate of spaghetti thrown against a wall by a toddler. You're starving the chip. Are you lazy? Or are you just stupid?"

"I’m not… I was trying to optimize for latency."

"You are burning resources like they’re free. You’re at 85% LUT utilization because you’re coding hardware like a Python script kiddie. Now erase the multiplier module."

"What?"

"Delete it. The whole file."

"But… that’s three weeks of work."

"It’s three weeks of garbage. Delete it."

"Now. You are going to rewrite it. You are going to manually instantiate the DSP48 primitives. No inference. You are going to pipeline it three stages deep. And you are going to floorplan those slices right next to the Block RAMs. Now start typing, dickhead...

And if I see another timing violation you won't just be off this project. I will make sure the only digital design you ever do again is programming the microwave at Wendy's."

*door slams*


@BillAckman Works for me too. I usually just go up to the card deck and say “Ace….may I meet you?”


@anderssandberg @AndyMasley we might in fact consider police as ideal to automate .. cheaper, bias-free safety w/o human cops in harm's way. of course, who watches the watchers and dark sci-fi notwithstanding, seems like the future


@AndyMasley There is a case for thinking carefully about jobs we may not want to automate (e.g. the police), but equally there are jobs we ethically should automate since providing more of the service cheaply is important (e.g. health care) even if incumbents do not benefit.


@togelius OP .. can you explain your assertions? considering the massive benefits...

we should avoid [AI, etc. ] if no ...new roles for humans

[must build AI w/o replacing humans] ... it is of paramount importance

Human agency... is vital and must be safeguarded at almost any cost


I was at an event on AI for science yesterday, a panel discussion here at NeurIPS. The panelists discussed how they plan to replace humans at all levels in the scientific process. So I stood up and protested that what they are doing is evil. Look around you, I said. The room is filled with researchers of various kinds, most of them young. They are here because they love research and want to contribute to advancing human knowledge. If you take the human out of the loop, meaning that humans no longer have any role in scientific research, you're depriving them of the activity they love and a key source of meaning in their lives. And we all want to do something meaningful. Why, I asked, do you want to take the opportunity to contribute to science away from us?

My question changed the course of the panel, and set the tone for the rest of the discussion. Afterwards, a number of attendees came up to me, either to thank me for putting what they felt into words, or to ask if I really meant what I said. So I thought I would return to the question here.

One of the panelists asked whether I would really prefer the joy of doing science to finding a cure for cancer and enabling immortality. I answered that we will eventually cure cancer and at some point probably be able to choose immortality. Science is already making great progress with humans at the helm. We'll get fusion power and space travel some day as well. Maybe cutting humans out of the loop could speed up this process, but I don't think it would be worth it. I think it is of crucial importance that we humans are in charge of our own progress. Expanding humanity's collective knowledge is, I think, the most meaningful thing we can do. If humans could not usefully contribute to science anymore, this would be a disaster. So, no. I do not think it worth it to find a cure for cancer faster if that means we can never do science again.

Many of those who came up to talk to me last night, those who asked me whether I was being serious or just trolling, thought that the premise was absurd. Of course there would always be room for humans in science. There will always be tasks only humans can do, insight only humans have, and so on. Therefore, we should welcome AI. Research is hard, and we need all the help we can get. I responded that I hoped they were right. That is, I truly hope there will always be parts of the research process which humans will be essential for. But what I was arguing against was not what we might call "weak science automation", where humans stay in the loop in important roles, but "strong science automation", where humans are redundant.

Others thought it was immature to argue about this, because full science automation is not on the horizon. Again, I hope they are right. But I see no harm in discussing it now. And I certainly don't think we need research on science automation to go any further.

Yet others remarked that this was a pointless argument. Science automation is coming whether we want it or not, and we'd better get used to it. The train is coming, and we can get on it or stand in its way. I think that is a remarkably cowardly argument. It is up to us as a society to decide how we use the technology we develop. It's not a train, it's a truck, and we'd better grab the steering wheel.

One of the panelists made a chess analogy, arguing that lots of people play chess even though computers are now much better than humans at chess. So we might engage in science as a kind of hobby, even though the real science is done by computers. We would be playing around far from the frontier, perhaps filling in the blanks that AI systems don't care about. That was, to put it mildly, not a satisfying answer. While I love games, I certainly do not consider game-playing as meaningful as advancing human knowledge. Thanks, but no thanks.

Overall, though, it was striking that most of those I talked to thanked me for raising the point, as I articulated worries that they already had. One of them remarked that if you work on automating science and are not even a little bit worried about the end goal, you are a psychopath. I would add that another possibility is that you don't really believe in what you are doing.

Some might ask why I make this argument about science and not, for example, about visual art, music, or game design. That's because yesterday's event was about AI for science. But I think the same argument applies to all domains of human creative and intellectual expression. Making human intellectual or creative work redundant is something we should avoid when we can, and we should absolutely avoid it if there are no equally meaningful new roles for humans to transition into.

You could further argue that working on cutting humans out of meaningful creative work such as scientific research is incredibly egoistic. You get the intellectual satisfaction of inventing new AI methods, but the next generation don't get a chance to contribute. Why do you want to rob your children (academic and biological) of the chance to engage in the most meaningful activity in the world?

So what do I believe in, given that I am an AI researcher who actively works on the kind of AI methods used for automating science? I believe that AI tools that help us be more productive and creative are great, but that AI tools that replace us are bad. I love science, and I am afraid of a future where we are pushed back into the dark ages because we can no longer contribute to science. Human agency, including in creative processes, is vital and must be safeguarded at almost any cost.

I don't exactly know how to steer AI development and AI usage so that we get new tools but are not replaced. But I know that it is of paramount importance.


The reason government programs are so inefficient is that, unlike a commercial company, the feedback loop for improvement is broken, because they have a state-mandated monopoly and can’t go out of business if customers are unhappy.

No matter how bad the service is at your DMV (sorry to pick on DMVs), you still have to use your DMV, because it’s a monopoly.


rob a reposted

@Kathy083107 @FLCons These are not abnormal conditions in Canada. Planes land safely all the time in similar conditions.


@FLCons I can't believe the airport even allowed planes to land on those runway conditions. Does not make sense, and you would think an area like Toronto would know better.


@FLCons Could commenters say if they are pilots or just making shit up? OP has to wade through an ocean of nonsense.... I'm a GA pilot, but here to help. Pilots decide if safe to land unless airport closed. Land faster if gusty crosswind. Windshear can induce hard landings.


@willjam465 @MrStand_Fast @BillAckman NVG = night vision goggles. not just looking out the window(s)


@MrStand_Fast @BillAckman I am curious; honest question from someone just trying to understand. Can you elaborate on your comment about looking at the world through toilet paper tubes. There is a fair amount of glass on this helicopter which would seem to provide good field of vision out.


Someone who has piloted a Blackhawk please explain how this is possible.

Raylan Givens@JewishWarrior13

A new, clearer video of the collision between a helicopter and a passenger plane in Washington, D.C., has been released. Via: @visegrad24


@MrStand_Fast @KeenanPeachy @BillAckman Fixed wing pilot here, fly occasionally in FRZ. Wow Matt you are so patient with the jaw-dropping idiocy in some comments. Bravo on that, and thank you for your service.


They can be flown safely, and are flown safely almost every day. I'm confident the investigation will prove out what happened, but it's much more likely that a series of human errors lined up in the worst way to cause this.

Our military has to train within the DC region for a variety of missions, and in crowded airspace, there are always risks.

I mourn the loss of life, and hope that analysis helps provide better risk mitigation in the future, but it's highly unlikely there was any malicious intent here.


@ChainPatrol @3blue1brown This is pretty impressive. Explain the situation, take full accountability, not just say we are accountable but actually explain what you mean by it (we've all had exes say "explain what you're apologizing for")... Describe a meaningful, actionable approach to resolving etc... GG!


This YouTube video has been restored and we have shared a post-mortem report with @3blue1brown directly, which explains in detail what caused this false positive to occur and what steps are being taken to avoid this error in the future.

At ChainPatrol, we use a combination of human reviewers, advanced LLM scanning, image recognition, and proprietary models to detect brand impersonation and malicious actors targeting organizations.

We have purposefully built an auditable system, to ensure that automation does not cause harm. We found that this video was never reported in our system, and our system did not flag it. This false positive was due to human error, not bots or AI. One of our analysts, responsible for submitting takedowns, accidentally copied the wrong link while reporting a malicious video.

Our current false positive rate is 0.053%. We are dedicated to reaching a 0% false positive rate across both automation and human processes.

To ensure this issue does not repeat, here are the fixes we are putting in place, immediately:

We are assessing and re-training all analysts.

We are implementing PagerDuty Alerts when a Copyright Counter Notification is received for faster response.

We are implementing a system to detect when our analysts add links into takedown input fields that are not on the blocklist.

Lastly, we would like to emphasize: @Arbitrum did not have any involvement in this takedown. At ChainPatrol, we act independently, on behalf of our clients, who partner with us in an effort to protect their communities from malicious activity, like phishing.

We treat every account, big or small, fairly. Every piece of content we block can be disputed on our search page or by emailing support@chainpatrol.io. These disputes immediately trigger PagerDuty alerts across 24/7 available staff and our team of Founders.


I learned yesterday the video I made in 2017 explaining how Bitcoin works was taken down, and my channel received a copyright strike (despite it being 100% my own content).

The request seems to have been issued by a company chainpatrol, on behalf of Arbitrum, whose website says they "makes use of advanced LLM scanning" for "Brand Protection for Leading Web3 Companies"

I could be wrong, but it sounds like there's a decent chance this means some bot managed to convince YouTube's bots that some re-upload of that video (of which there has been an incessant onslaught) was the original, and successfully issue the takedown and copyright strike request.

It's naturally a little worrying that it should be possible to use these tools to issue fake takedown requests, considering that it only takes 3 to delete an entire channel.


With a little bit of audio processing, I think I have figured out where that strange Starliner sound came from.

Gene@SpaceBasedFox

Starliner crew reports hearing strange "sonar like noises" emanating from their craft. This is the real audio of it:


@dieworkwear Rock star @dieworkwear

I've got so many nice clothes just wasting away in the closet, don't need for work and guess I'm not spending time with such a classy crowd 🤣

You're absolutely inspiring me to invent a reason, or maybe no reason at all, to get them back out on parade


Kids never get it wrong. And even when they style clothes in a slightly off way, it only looks more awesome. But here is a guide on how adults can match patterns. 🧵

Marc Istook@MarcIstook

I need the menswear guy to teach my kid how to mix patterns @dieworkwear


@brendenslab @Aella_Girl I thought it was just so "made for TV"... Paul's speech re Chani vs. Irulan cut for the film....so just another usual pissed off girl, lame protagonist, etc.


@Aella_Girl i mean…in the books Paul basically gives a speech on how he’s still gonna be monogamous for her…

but go off i guess


@ClaytonArnall @Aella_Girl Their connection is straight out of the book. Or at least from his side, visions of her, their future together, etc. Other annoying stuff that was done to their relationship for the movie was definitely not in the book.


@Aella_Girl I agree, why does every good movie need a side love story? Dune does it in such an obnoxious way, too. The only two characters even in that age range are, of course, attracted to each other, and not just that, but are soul mates backed by visions.


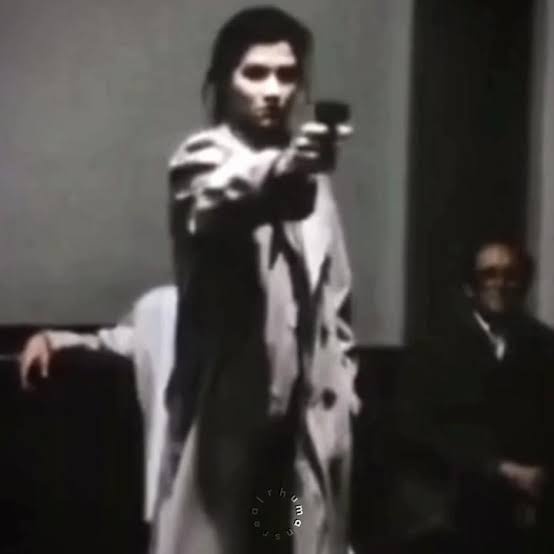

In 1981, Marianne Bachmeier shot Klaus Grabowski, the murderer of her 7-year-old daughter. Grabowski was a 35-year-old butcher who had previously sexually assaulted 2 girls.

Bachmeier pulled out a Beretta during his trial and emptied 7 bullets into Grabowski's body, killing him instantly.

Marianne was sentenced for murder to 6 years in prison, of which she served 3 and was released for good behavior.
