SwedishAccelerationism

561 posts

@swe_acc

Hard takeoff. Soft landing.

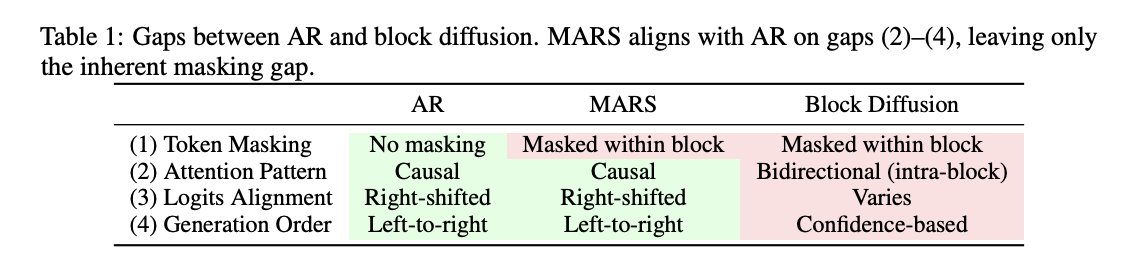

MARS: Multi-token generation for autoregressive models with zero architectural changes. Achieves 1.5-1.7x throughput while maintaining baseline accuracy. Supports real-time speed adjustment via confidence thresholding.
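The post doesn't spell out how MARS decides how many tokens to emit per step. Below is a minimal sketch of generic confidence thresholding, assuming the model proposes several tokens per step with a confidence score for each; it is not MARS's actual acceptance rule, and the function name `accept_by_confidence` and all numbers are hypothetical. A lower threshold accepts longer runs (more throughput), a higher one falls back toward one token per step, which is one way a speed knob adjustable at inference time could work.

```python
from typing import List


def accept_by_confidence(draft_tokens: List[int], confidences: List[float], threshold: float) -> List[int]:
    """Keep the longest prefix of drafted tokens whose confidence meets the threshold.

    Hypothetical rule for illustration. The first token is always kept, so each
    step emits at least one token, like ordinary one-token-at-a-time decoding.
    """
    if len(draft_tokens) != len(confidences):
        raise ValueError("draft_tokens and confidences must have the same length")
    if not draft_tokens:
        return []
    accepted = [draft_tokens[0]]
    for token, conf in zip(draft_tokens[1:], confidences[1:]):
        if conf < threshold:
            break  # stop at the first low-confidence token
        accepted.append(token)
    return accepted


drafts = [101, 7, 42, 9]        # made-up token ids
confs = [0.99, 0.93, 0.71, 0.88]  # made-up per-token confidences
for t in (0.95, 0.9, 0.7):
    print(t, accept_by_confidence(drafts, confs, t))
# 0.95 [101]
# 0.9 [101, 7]
# 0.7 [101, 7, 42, 9]
```

Stopping at the first low-confidence token (rather than skipping it) keeps the output a contiguous left-to-right sequence, which autoregressive decoding requires.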

A fun recent project with @HeMuyu0327 on attention sinks. We studied a surprisingly fundamental question: how does an LLM identify the physically first token in a sequence? Some hypotheses ruled out, some new clues found, and still one big mystery left. Blog here, would love to hear thoughts. smoothcriminal.notion.site/the-remaining-…
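One textbook asymmetry that makes the first token structurally different is causal masking: the query at position 0 can only see key 0, so its softmax row is exactly [1, 0, 0, ...] and its attention output is just its own value vector, whatever the scores are. This sketch only demonstrates that property; it is not a finding from the blog, and whether models actually rely on it is part of what the post investigates (it may well be among the hypotheses ruled out). The function name is hypothetical.

```python
import math
from typing import List


def causal_attention_weights(scores: List[List[float]]) -> List[List[float]]:
    """Row-wise softmax over raw attention scores with a causal mask.

    Query i may only attend to keys 0..i; masked positions get weight 0.
    """
    n = len(scores)
    weights = []
    for i in range(n):
        visible = scores[i][: i + 1]          # causal mask: keys j <= i only
        m = max(visible)                       # subtract the max for numerical stability
        exps = [math.exp(s - m) for s in visible]
        total = sum(exps)
        weights.append([e / total for e in exps] + [0.0] * (n - i - 1))
    return weights


# Arbitrary scores: row 0 is forced to [1, 0, 0] no matter what it contains.
scores = [[0.3, 2.0, -1.0], [1.5, 0.2, 0.7], [0.0, 0.0, 0.0]]
w = causal_attention_weights(scores)
print(w[0])  # [1.0, 0.0, 0.0]
```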

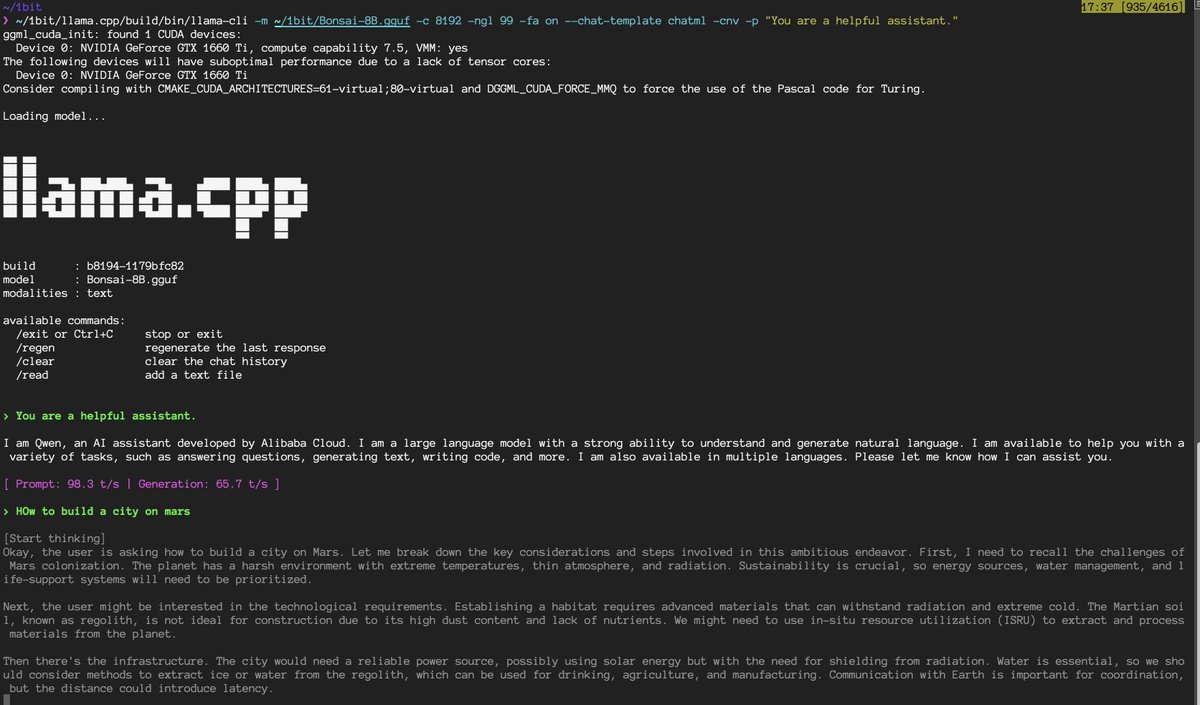

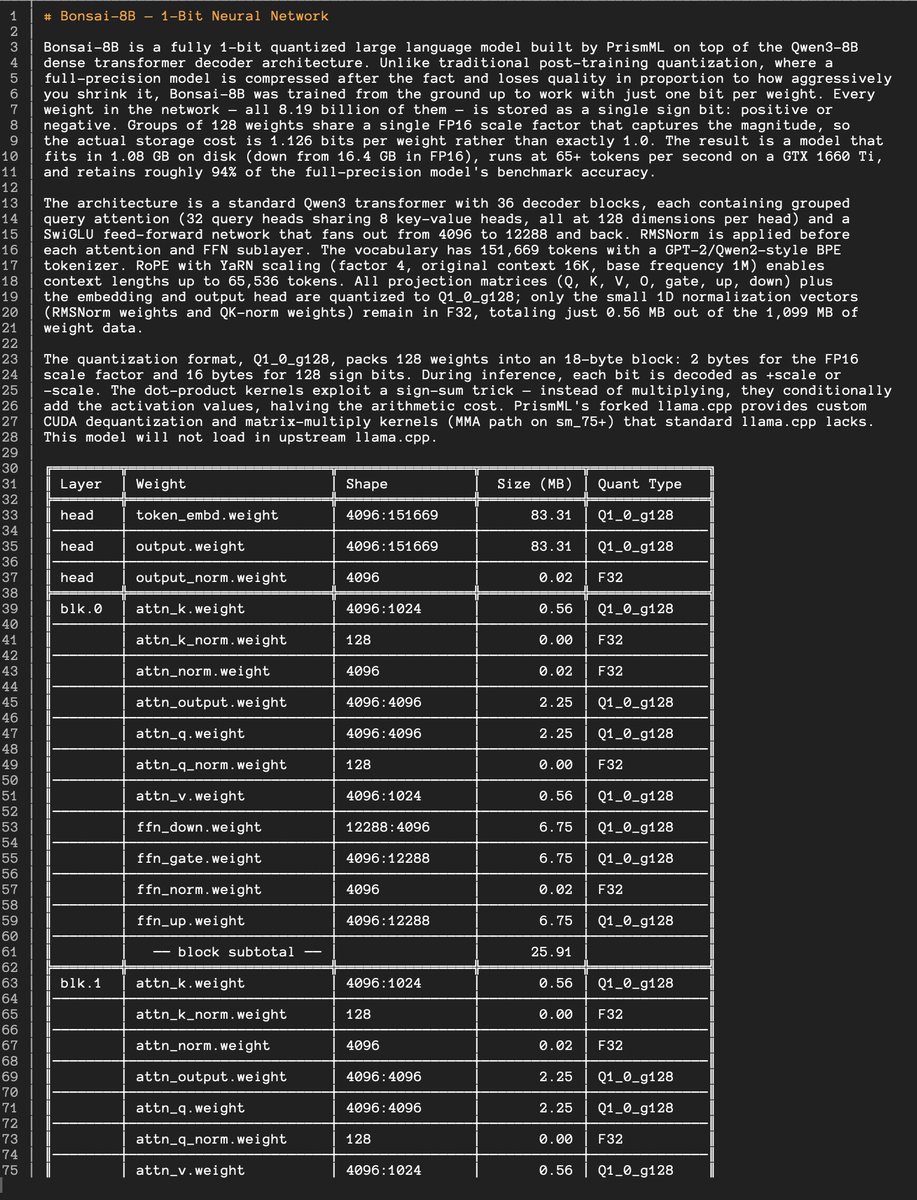

your spotify cache is bigger than our largest AI model. Bonsai: 1-bit weights. 1.7B to 8B params. 14x compression vs bf16. 8x faster on edge. 256 MB to 1.2 GB. Based on Qwen 3. we just came out of stealth. intelligence belongs at the edge and we're going to put it there. Apache 2.0. we compressed intelligence. more coming. @PrismML
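The post doesn't describe Bonsai's storage format, but simple arithmetic shows why 1-bit weights land near 14x rather than a full 16x versus 16-bit bf16. This is a minimal sketch assuming, hypothetically, that each group of weights shares one bf16 scale: with a group size of 128 the scale adds 16/128 = 0.125 bits per weight, for 1.125 bits total and 16/1.125 ≈ 14.2x. The group size, the mean-absolute-value scale, and every function name here are illustrative assumptions, not PrismML's method.

```python
from typing import List, Tuple


def bits_per_weight(group_size: int, scale_bits: int = 16) -> float:
    """1 sign bit per weight plus one shared scale amortized over the group (assumed layout)."""
    return 1 + scale_bits / group_size


def compression_vs_bf16(group_size: int, scale_bits: int = 16) -> float:
    """bf16 spends 16 bits per weight; divide by the 1-bit format's effective cost."""
    return 16 / bits_per_weight(group_size, scale_bits)


def binarize_group(weights: List[float]) -> Tuple[List[int], float]:
    """Approximate a group as sign(w) * scale.

    For fixed signs, the mean absolute value is the scale that minimizes
    squared reconstruction error.
    """
    scale = sum(abs(w) for w in weights) / len(weights)
    bits = [1 if w >= 0 else 0 for w in weights]
    return bits, scale


def pack_bits(bits: List[int]) -> bytes:
    """Pack 0/1 values into bytes, 8 weights per byte, least significant bit first."""
    out = bytearray((len(bits) + 7) // 8)
    for i, b in enumerate(bits):
        if b:
            out[i // 8] |= 1 << (i % 8)
    return bytes(out)


def dequantize(bits: List[int], scale: float) -> List[float]:
    """Map each stored bit back to +scale or -scale."""
    return [scale if b else -scale for b in bits]


print(round(compression_vs_bf16(128), 2))  # 14.22
print(round(compression_vs_bf16(64), 2))   # 12.8

bits, scale = binarize_group([0.4, -0.2, 0.1, -0.7])
print(bits, pack_bits(bits))  # [1, 0, 1, 0] b'\x05'
```

For scale, 8B weights at an assumed 1.125 bits each come to about 1.1 GB before any other overhead.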

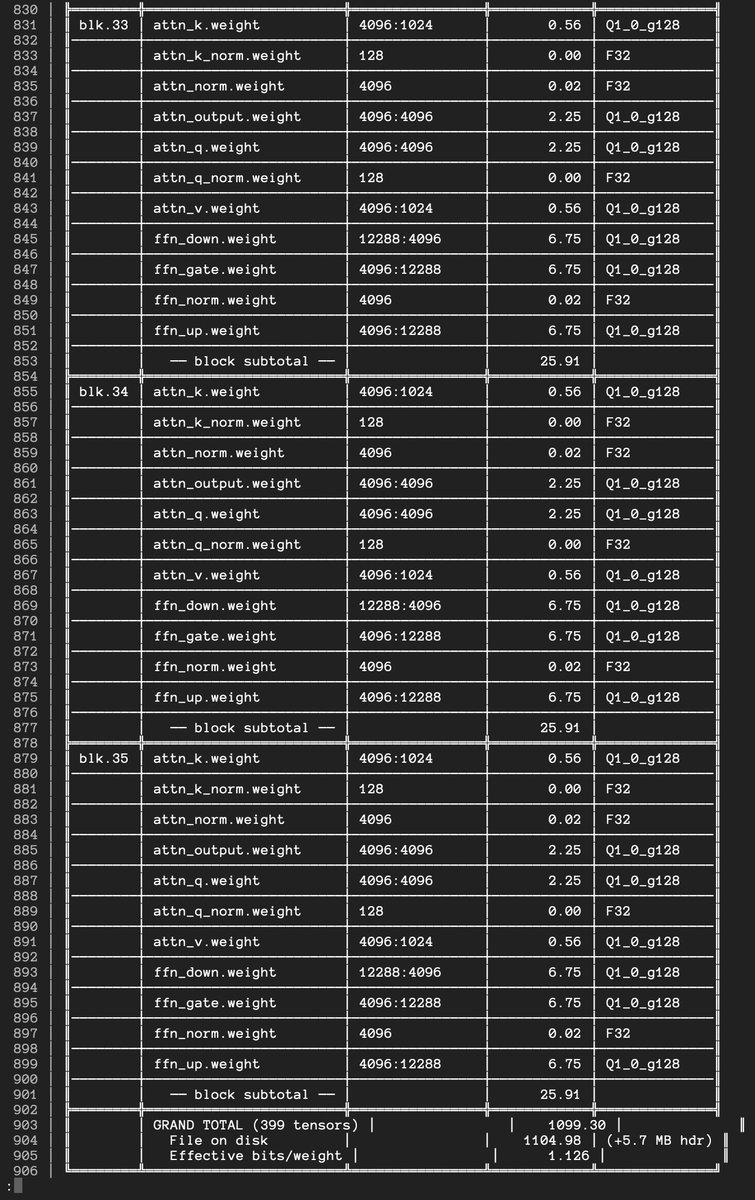

There are several people who have seen classified intel on Covid origins. The mystery for them is not "Did Covid come from a lab?" but "What processes led to Covid leaking from a lab?"

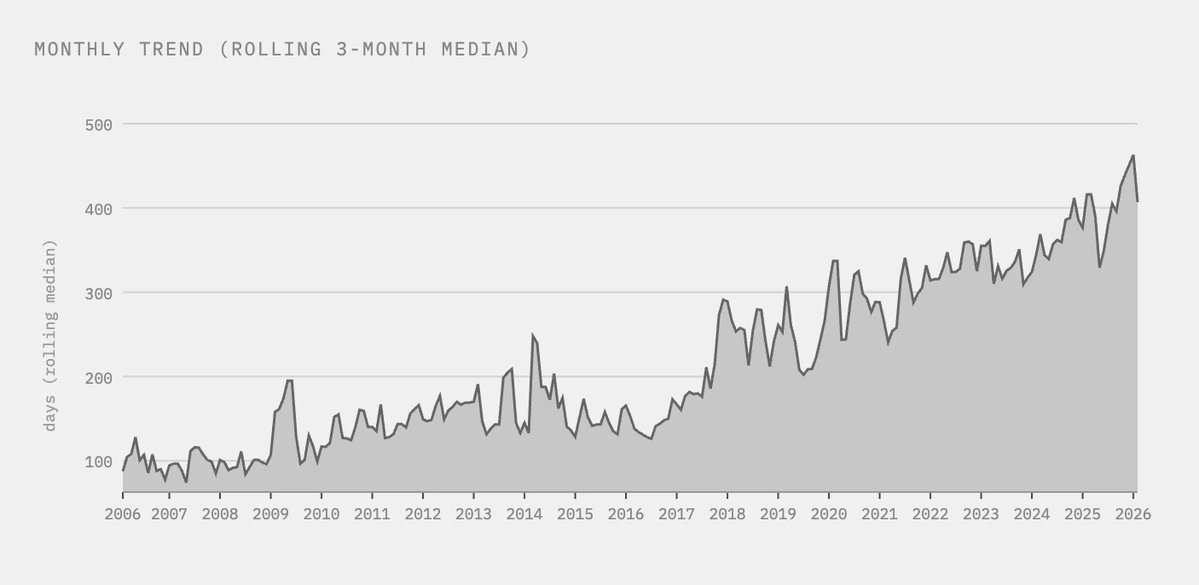

Monthly median Received to Accepted time (days) at Nature Genetics

Paul Erdős won a bet by proving he wasn't addicted to amphetamines. But he got nothing done: "You've showed me I'm not an addict... You've set mathematics back a month."