Tsukuyomi

17.8K posts

@0xtsk

I am straight out of @aiapocalypto manga, opinions are my own. My current Timeline: 2030 prev doomgpt

🎤 The stage is set and the spotlight’s on Dylan Patel, Founder, CEO & Chief Analyst at SemiAnalysis, a #PyTorchCon keynote speaker! Join the minds moving #OpenSource #AI & ML forward. 📍 San Francisco | 📅 October 22–23 👀 Keynotes: hubs.la/Q03GmG220 🎟️ Register: hubs.la/Q03GmFXC0

Super happy to sign a partnership with @ESCP_bs, the oldest business school in the world, to give full access to Hugging Face to all 11,000 students and faculty! It's particularly meaningful for me as I was a student there 15 years ago: Dean Laulusa was part of my admission jury, taught me as a professor & recommended me for my first internship. I also started my first startup in the basement of the school with @BlueFactory_ by @MaevaTordo! AI is going to change education so can't wait to see what ESCP students and faculty will build!

7 LLM generation parameters, clearly explained (with visuals):

BAAI just released InfoSeek: a breakthrough in LLM Deep Research! It's a new data synthesis framework that trains smaller models to outperform much larger ones on complex reasoning benchmarks.

Wondering how to evaluate your Retrieval-Augmented Generation (RAG) system? amzn.to/3JJkdd2 Start by measuring precision and recall for retrieval to check how well it sources relevant info. Then, assess the quality of its generated responses based on relevance, factual accuracy, and fluency. Don’t forget to A/B test with different datasets and track improvements over time! #AI #MachineLearning #RAG #Evaluation #AIResearch #NaturalLanguageProcessing
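
The retrieval half of that advice can be sketched in a few lines. This is a minimal illustration, not a full evaluation harness: the function name `precision_recall_at_k`, the document IDs, and the cutoff `k` are all hypothetical, and it assumes you already have a hand-labeled set of relevant documents per query.

```python
def precision_recall_at_k(retrieved, relevant, k):
    """Compute precision@k and recall@k for a single query.

    retrieved: ranked list of document IDs returned by the retriever
    relevant:  set of document IDs labeled relevant for the query
    """
    top_k = retrieved[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    # Precision: what fraction of the returned documents are relevant.
    precision = hits / len(top_k) if top_k else 0.0
    # Recall: what fraction of the relevant documents were returned.
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical example data for one query.
retrieved = ["d3", "d7", "d1", "d9", "d4"]
relevant = {"d1", "d3", "d5"}

p, r = precision_recall_at_k(retrieved, relevant, k=3)
print(f"precision@3={p:.2f} recall@3={r:.2f}")
```

Averaging these two numbers over a set of labeled queries gives a baseline you can track across A/B runs.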

Who will maintain the future of open source? Here are 6 ways you can help new contributors grow into future leaders—and keep the open source community going for generations to come.👇 github.blog/open-source/ma…

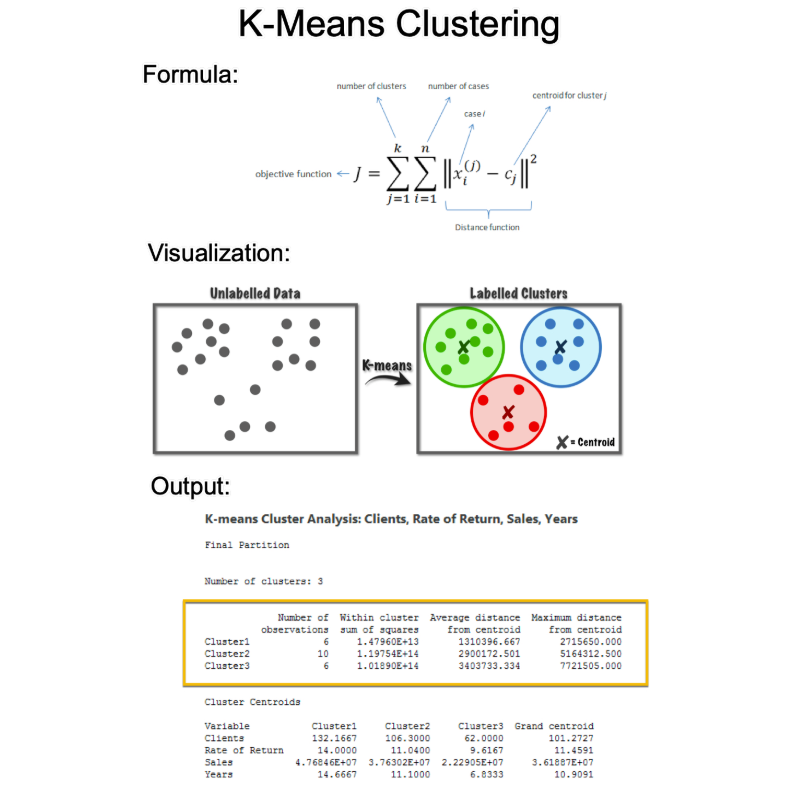

K-means is an essential algorithm for Data Science. But it's confusing for beginners. Let me demolish your confusion:

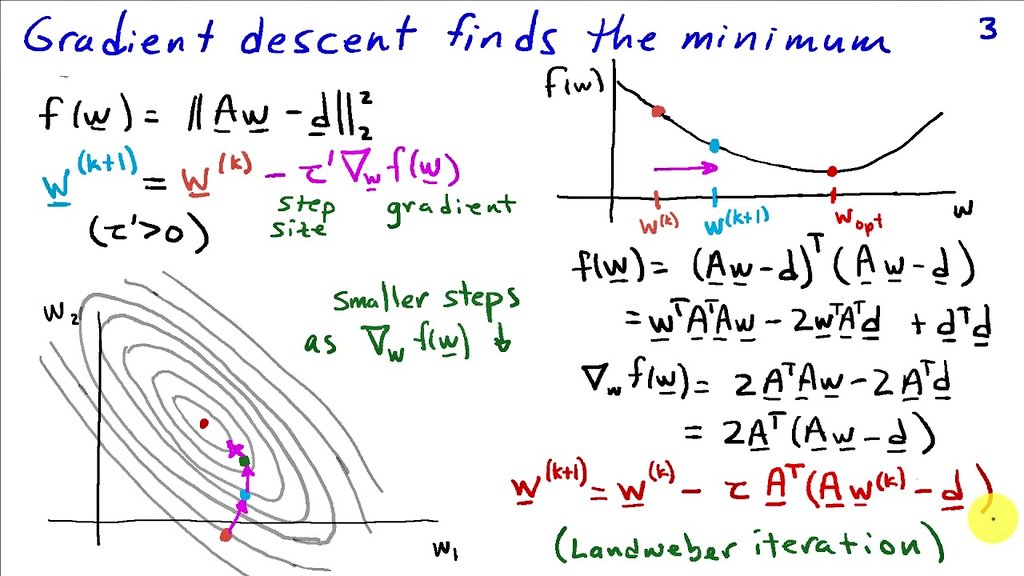

Gradient descent is an iterative optimization algorithm used to find a (local) minimum of a function. It works by taking repeated steps in the opposite direction of the gradient at the current point; the gradient points in the direction of steepest ascent, so stepping against it moves downhill. In statistics, it's used for maximum likelihood estimation and linear regression. In ML, it's fundamental to training neural networks and other models by minimizing the loss function.
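
A minimal sketch of that update rule on a one-dimensional function. The function `gradient_descent`, the learning rate, the step count, and the test function f(x) = (x - 3)^2 are illustrative choices, not anything from a specific library.

```python
def gradient_descent(grad, x0, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient, starting from x0."""
    x = x0
    for _ in range(steps):
        # Move opposite to the gradient, scaled by the learning rate.
        x = x - learning_rate * grad(x)
    return x


# Illustrative function: f(x) = (x - 3)^2, whose gradient is 2 * (x - 3).
# Its minimum is at x = 3.
x_min = gradient_descent(lambda x: 2 * (x - 3), x0=0.0)
print(x_min)
```

With this learning rate each step shrinks the distance to the minimum by a constant factor; a rate that is too large would overshoot and diverge instead.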