Adam Zweiger

@AdamZweiger

Working on learning | @MIT_CSAIL

We are sharing an early preview of our ongoing SWE-1.6 training run. It significantly improves upon SWE-1.5 while being post-trained on the same pre-trained model, and it runs just as fast, at 950 tok/s. On SWE-Bench Pro, it exceeds top open-source models. The preview model still exhibits some undesirable behaviors, like overthinking and excessive self-verification, which we aim to address. We are rolling out early access to a small subset of users in Windsurf.

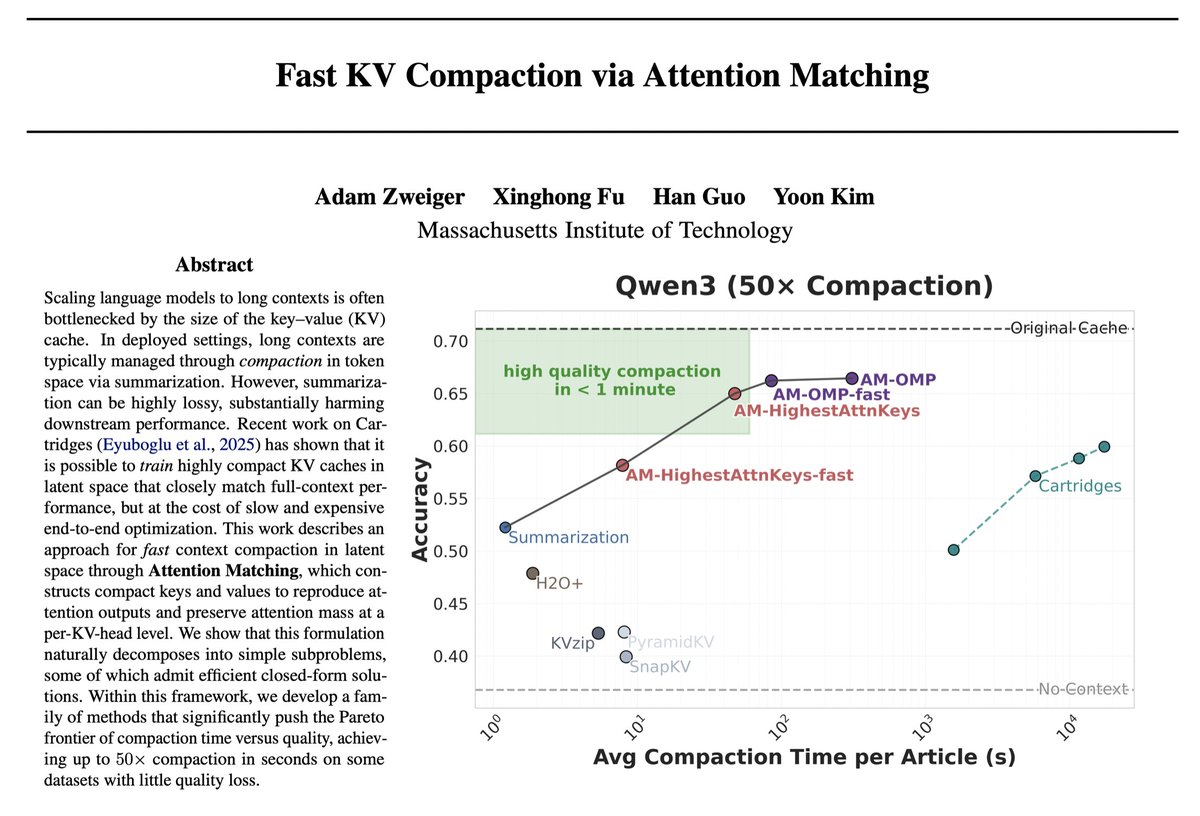

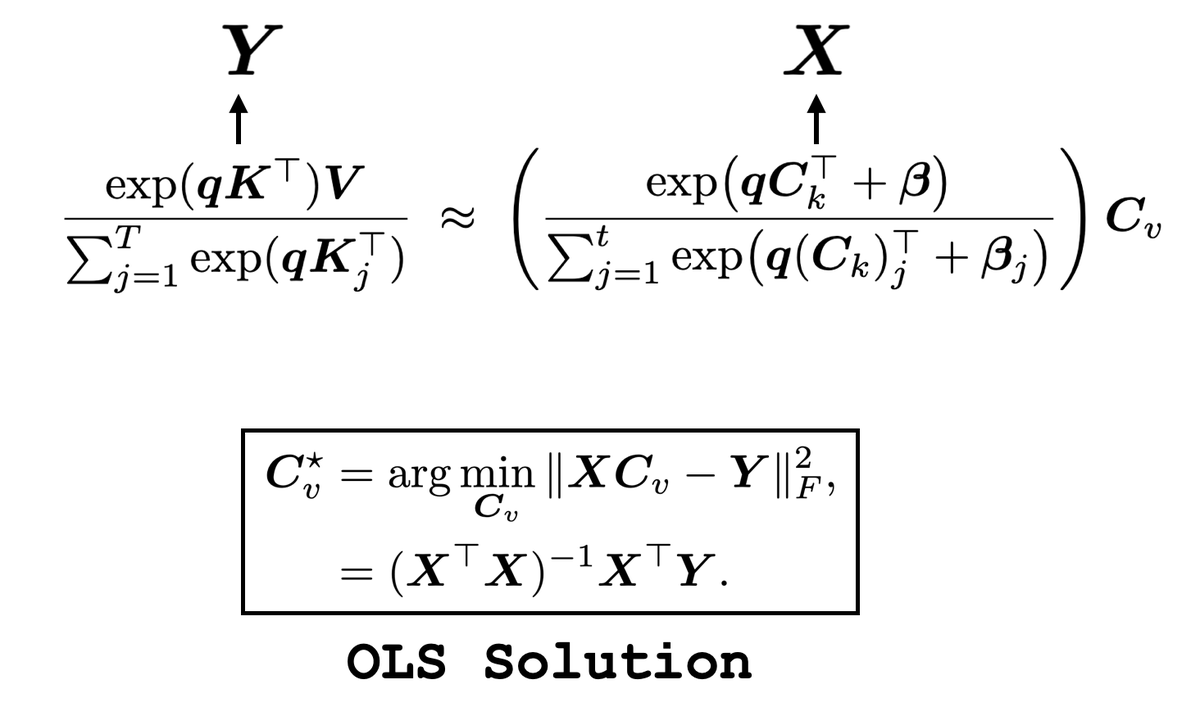

We introduce a new approach for fast and high-quality context compaction in latent space. Attention Matching (AM) achieves 50× compaction in seconds with little performance loss, substantially outperforming summarization and other baselines.

RL for reasoning often relies on verifiers, which are great for math but tricky for creative writing or open-ended research. Meet RARO: a new paradigm that teaches LLMs to reason via adversarial games instead of verification. No verifiers. No environments. Just demonstrations. 🧵👇