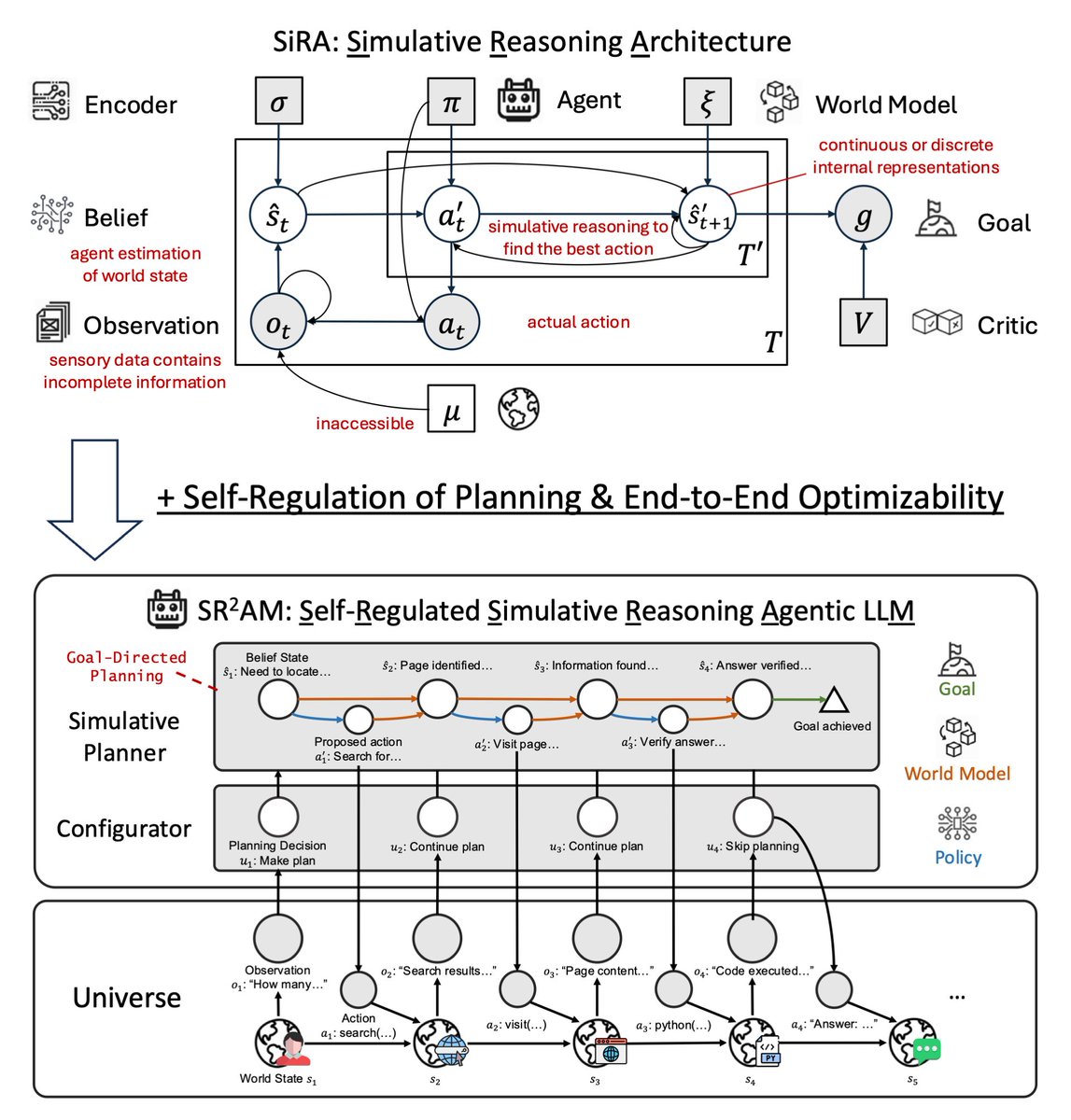

Happy to share that DR Tulu has been accepted to ICML as a ✨Spotlight✨! We believe that co-evolving the agent and its reward metric can lead to more capable intelligence. DR Tulu is a team effort. Huge thanks and congrats to all my amazing collaborators and mentors!