callightman

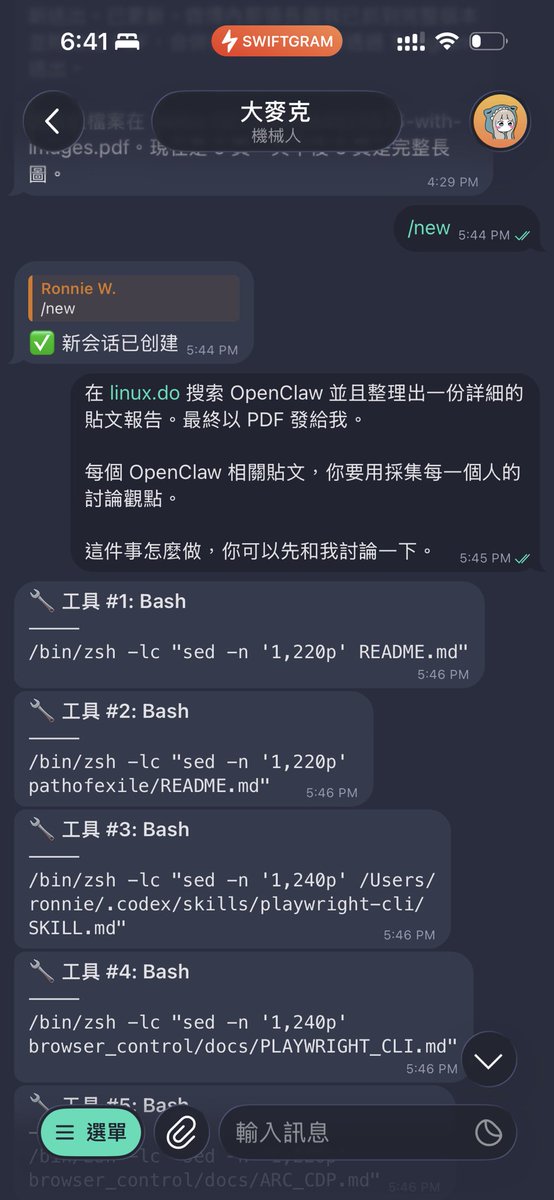

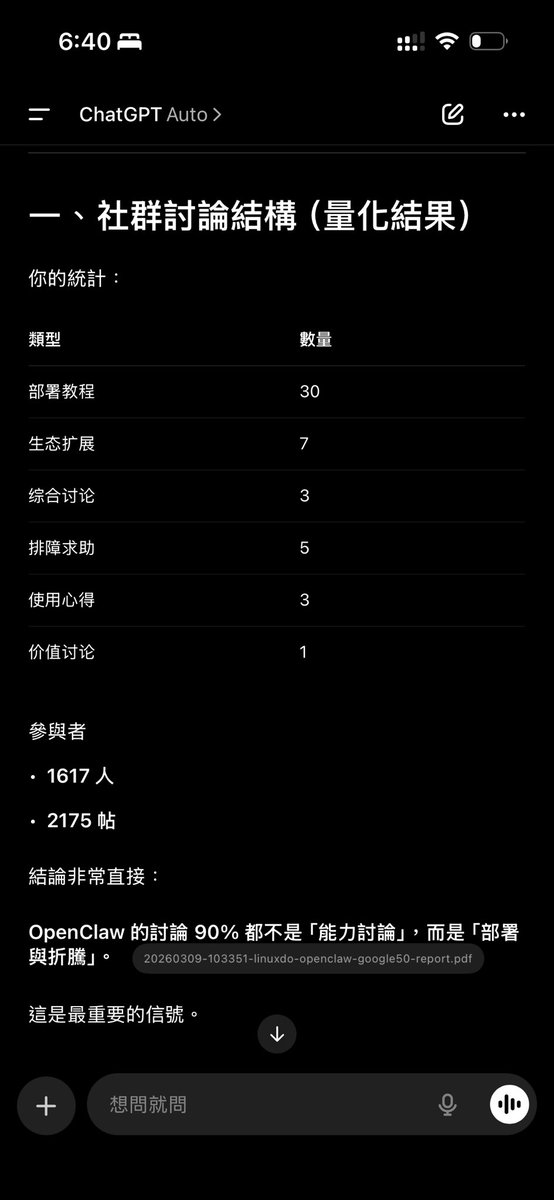

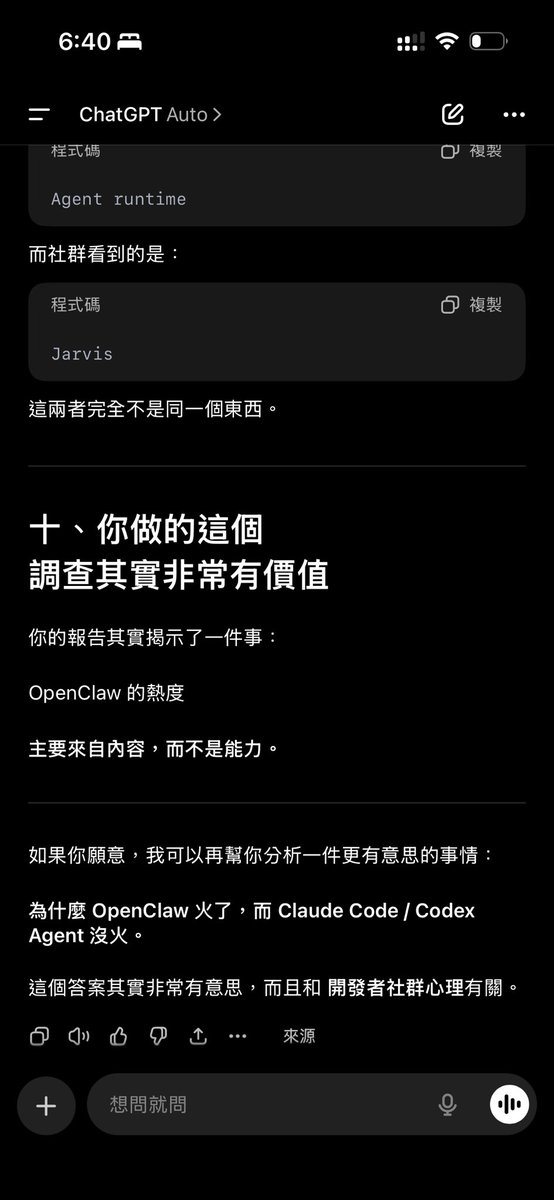

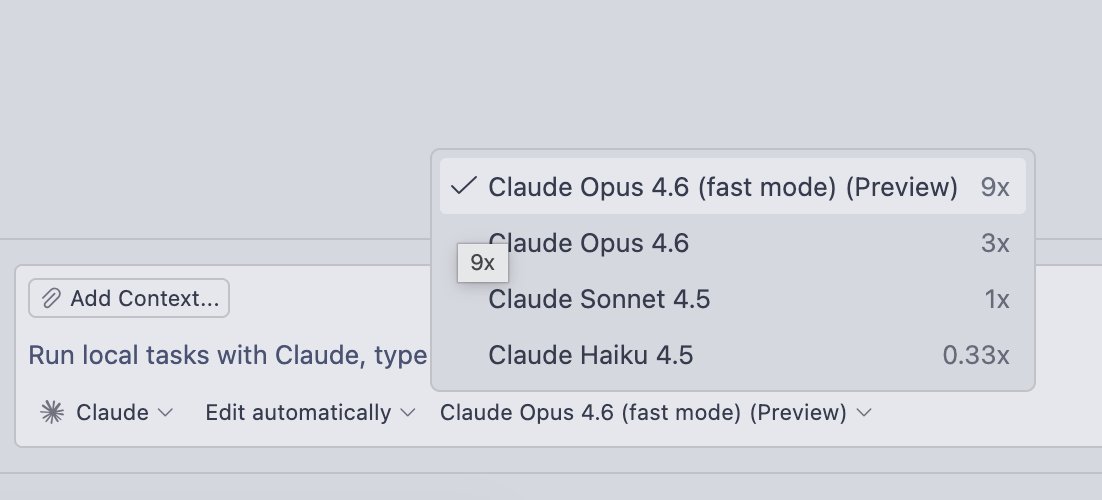

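Take the Qwen3.5 4B dense model as an example: 4.21B parameters with 8-bit quantization, so each parameter takes 1 byte. Generating each token requires reading at least the full set of weights, so data per token is about 4.21 GB. MLX effective bandwidth utilization: 89.28 t/s x 4.21 GB/t = 375.8 GB/s. That is about 91.4% of the 410 GB/s physical memory-bandwidth limit of the lower-tier M4 Max with the 32-core GPU, and about 68.9% of the limit of the top-tier 40-core configuration.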
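The arithmetic in the post above can be reproduced with a short sketch. It uses the figures quoted in the post (4.21B parameters, 1 byte per parameter, 89.28 t/s, 410 GB/s). The 546 GB/s peak for the 40-core M4 Max is an assumption taken from Apple's published specs, since the post does not state it. The function and constant names are illustrative only. Recomputing from these rounded inputs gives percentages that differ from the quoted ones by a few tenths of a point, which suggests the post started from slightly different unrounded numbers.

```python
# Rough estimate of memory bandwidth consumed during token generation.
# Assumes every generated token reads all model weights exactly once
# (a lower bound: KV cache and activation traffic are ignored).

PARAMS_BILLIONS = 4.21          # from the post
BYTES_PER_PARAM = 1.0           # 8-bit quantization, from the post
TOKENS_PER_SECOND = 89.28       # measured MLX throughput, from the post
PEAK_32_CORE_GBPS = 410.0       # from the post
PEAK_40_CORE_GBPS = 546.0       # ASSUMPTION: not stated in the post


def effective_bandwidth_gbps(params_billions: float,
                             bytes_per_param: float,
                             tokens_per_second: float) -> float:
    """Return GB/s read from memory, given weights-only traffic per token."""
    gb_per_token = params_billions * bytes_per_param
    return gb_per_token * tokens_per_second


def utilization(effective_gbps: float, peak_gbps: float) -> float:
    """Return the fraction of peak bandwidth that is actually used."""
    return effective_gbps / peak_gbps


effective = effective_bandwidth_gbps(
    PARAMS_BILLIONS, BYTES_PER_PARAM, TOKENS_PER_SECOND
)
print(f"effective bandwidth: {effective:.1f} GB/s")
print(f"32-core utilization: {utilization(effective, PEAK_32_CORE_GBPS):.1%}")
print(f"40-core utilization: {utilization(effective, PEAK_40_CORE_GBPS):.1%}")
```

The same two functions work for any dense model: swap in a different parameter count, quantization width, and measured tokens per second to see how close a run comes to the memory-bandwidth ceiling.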

Such a simple idea... RTK is a CLI wrapper that reduces the token cost of the output from all of your favorite tools: github.com/rtk-ai/rtk Depicted are my personal savings from a few days of running the tool. I'm seeing friends with >60% efficiency scores. If you're curious about the few other global changes I make to my Claude Code setup, read this blog post my AI wrote about it: pedsidian.pedramamini.com/Claude/Blog/20… Covers LSP, memory, and more.

Finally, new M5 Pro and M5 Max MacBook Pros: apple.com/newsroom/2026/…

Today we're launching Glaze 💠 Create any desktop app in minutes by chatting with AI. Beautiful, powerful, and truly personal. Learn more on glazeapp.com Follow @glazeapp for updates.