Chairman τao

10.3K posts

Chairman τao

@MarsSmuff

Irresponsibly long τao. Life. Liberτy. Biττensor.

🚨 NEW: The Green Party has drafted proposals to reduce the time police can detain terror suspects from 14 days to 4 [@genevieve_holl]

I said "And this sustained project of privatisation and deregulation turned Britain from a place which made things people need to a place which made money for people who owned things." And the City said:

“The NHS is Failing because Billionaires are getting Richer and Richer” thanks for clearing that up Hannah !!! 🙄deary me… 🤡 @TheGreenParty

@MothinAli Thoughts on banning halal slaughter mate? Nobody from the 'Greens' seem keen to answer me on that?

🚨IS STARMER COMPROMISED? FROM FREE SPEECH DEFENDER TO JAILING PEOPLE FOR SOCIAL MEDIA POSTS 🤔🔒 What Happened To The Uk Prime Minister And Why His Stance On Social Media Arrests Flipped So Dramatically In 2012, as Director of Public Prosecutions, Keir Starmer proudly declared himself a guardian of free speech. In that now-infamous video he insisted: “Where a communication is merely offensive... principles of free speech require a high threshold, and dictate that a prosecution is unlikely to be in the public interest.” Today, Prime Minister Starmer presides over a regime that has racked up around 12,000 arrests tied solely to social media posts. The numbers are so grotesque that even Russia and China suddenly look like bastions of free speech by comparison. The hypocrisy is breathtaking. Many on X are no longer asking politely — they’re outright declaring that Starmer has been compromised. Whether it’s political cowardice, shadowy external influence, or naked authoritarian instinct, the man who once set a high bar for prosecution now jails people for hurting feelings online. He still trots out the same tired lines about “protecting children” and preventing disorder after the 2024 riots. But the latest escalation is his determined push for brand-new laws specifically targeting “Islamophobic” content. Critics say this is not about hate — it’s about criminalising legitimate public concern and dissent over mass migration, the rapid demographic changes, growing Islamic influence in public life, grooming gang scandals that were long ignored, and the general state of the country. What many see as valid political criticism and free debate is now being re-labelled as illegal “Islamophobia” to shut it down. What started as emergency measures after unrest has quietly morphed into a broader, relentless expansion of censorship dressed up in ever-changing justifications. The old Keir is nowhere to be seen. The contrast is damning.

🇺🇸🇯🇵 Japan's PM just complimented Barron Trump calling him a "very tall, good-looking gentleman." By the time she leaves, Trump will be giving Japan whatever they want😂

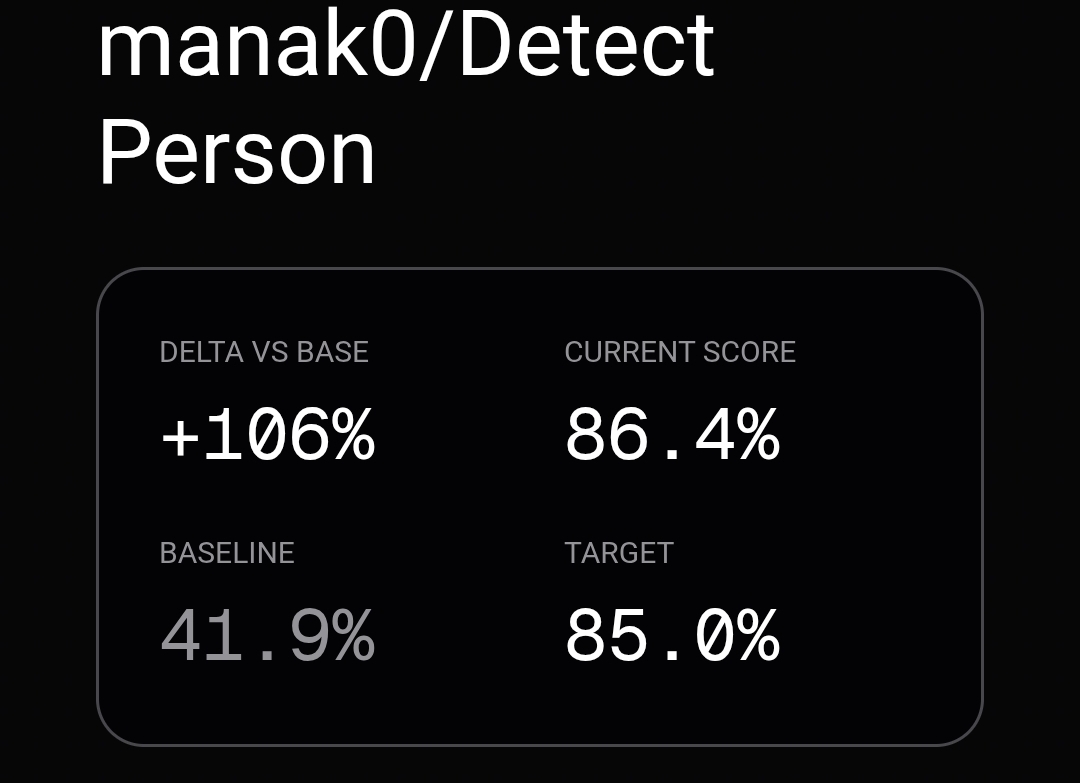

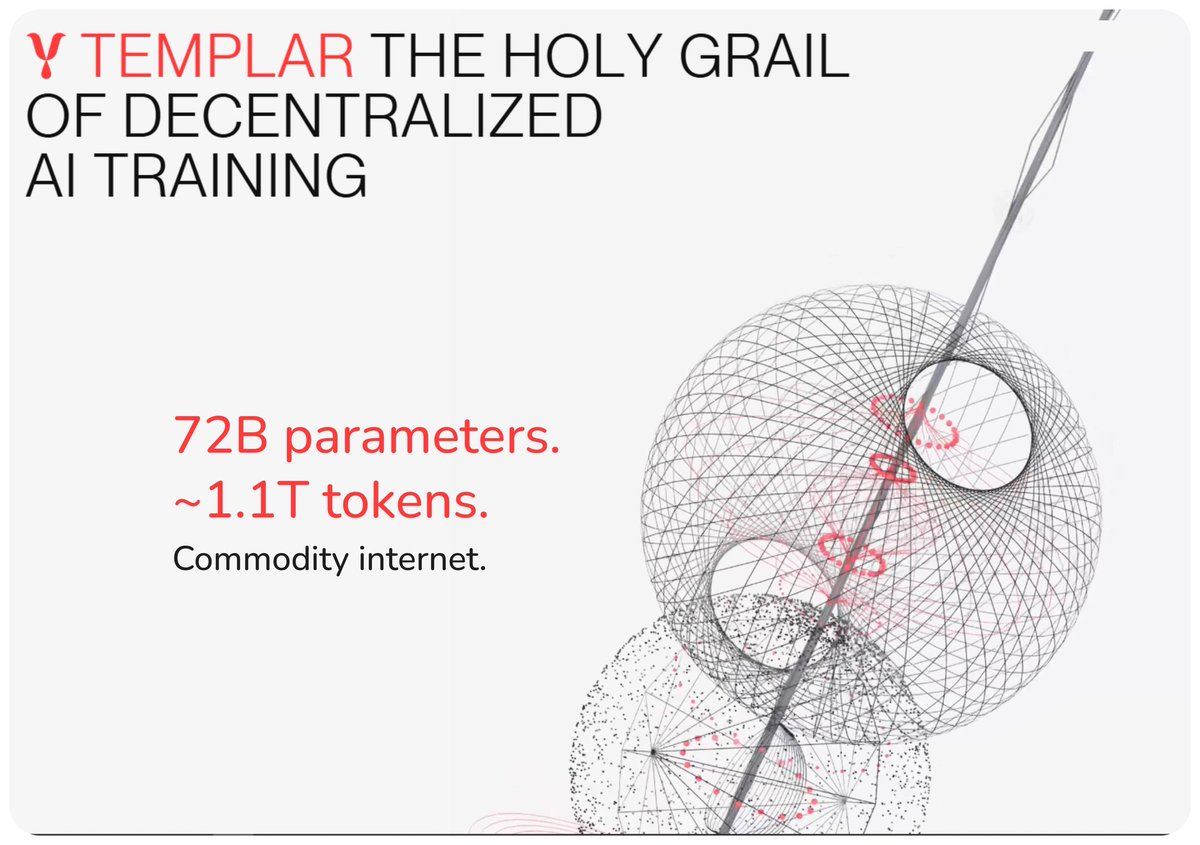

🔥 Exactly. Templar changed how I think about AI infra. I didn’t expect much from decentralized AI, but seeing @tplr_ai train a 72B model on 1.1T tokens across ~70 permissionless nodes on Bittensor ( $TAO). That alone is already unusual, but what really changed my mind is how they made it work. - At this scale, training is limited by coordination. Normally you’re pushing ~280GB of data per synchronization step between nodes, which makes decentralized training basically dead on arrival. - @tplr_ai compressed that down to ~2.2GB and reduced sync frequency massively using SparseLoCo. When I look at that, I see them removing the core bottleneck that killed every previous attempt 🤯. That’s why I think calling this a DeepSeek moment is actually not exaggerated. DeepSeek showed models can be trained cheaper. Templar shows they can be trained without central coordination at all. -> Those are two very different directions, and this one feels structurally harder to compete with. Another signal I don’t ignore: when people like Anthropic’s Jack Clark publicly frame it as real infra: - In my experience, that kind of validation usually comes after something already works, not before. - This is still pre-training. The real edge in AI comes from post-training, RLHF, alignment loops, basically where models become actually useful. Templar is moving there next with Grail, and for me that’s the real test. If they can decentralize that layer too, then we’re no longer talking about decentralized compute, they’re talking about a fully permissionless AI production pipeline. What makes Templar stand out to me is the timing and direction they chose. 1/ They went after coordination when the entire AI industry is quietly hitting scaling limits. - That’s a very different bet, and usually the ones who attack constraints, not trends, are the ones that matter later. 2/ Another catalyst I see is the permissionless design. - Most decentralized AI systems still gate participation in some way, which kills network effects early. - Templar went fully open from the start, which means if this model works, it doesn’t just scale linearly, but compounds with more contributors, more experimentation, more edge cases being solved in parallel. Also, the fact they are building toward post-training (RL layer) tells me they understand where real value sits. Pre-training gets attention, but post-training is where models become usable, sticky, and monetizable. If they execute here, they start owning part of the intelligence layer itself. 3/ My prediction based on this: In the short term, most people will still underestimate it because model quality gap vs centralized labs will be the easy argument. But over time, I think Templar becomes: - a backend layer for open AI development. - a coordination network for distributed compute. - and eventually a marketplace for intelligence refinement. Not dominant overnight, but quietly embedded everywhere. And if that plays out, the upside comes from becoming the system that anyone can build on when they don’t want to rely on @OpenAI at all.

@GarethHowe95938 @vidaio_ Did I find it? 🗿🥚

On the @theallinpod this week, @chamath asked @nvidia CEO Jensen Huang about decentralized AI training, calling our Covenant-72B run "a pretty crazy technical accomplishment." One correction: it's 72 billion parameters, not four. Trained permissionlessly across 70+ contributors on commodity internet. The largest model ever pre-trained on fully decentralized infrastructure. Jensen's answer is worth hearing too.