Carlos

bro created an AI job search system for Claude Code that scored 700+ job applications and actually got him a job. AND IT'S NOW OPEN-SOURCE.

It scans multiple company career pages, rewrites your CV per job, and even fills application forms.

The repo has:

- 14 skill modes (evaluate, scan, PDF, ...)
- Go terminal dashboard
- ATS-optimized PDF generation via Playwright
- 45+ companies pre-configured (Anthropic, OpenAI, ElevenLabs, Stripe...)

GitHub: github.com/santifer/caree…

I spend a lot of time in my terminal these days, and I always have to go back to my browser to open a website or page. So I decided to build a tool that lets me view webpages, docs, and APIs directly from the terminal.

It's called Openpreview - Preview URLs, files, and command output in your terminal.

I also wanted to experiment with OpenUI and Bun by @opencode, @anomalyco, and @bunjavascript, so this turned out nicely.

It's OSS, try it out at openpreview.co

Today we had an issue affecting ~3000 users, where their authenticated content may have been served to their unauthenticated users.

Below is our writeup on impact, resolution, and prevention.

We're deeply sorry. This is unacceptable and we will do better.

blog.railway.com/p/incident-rep…

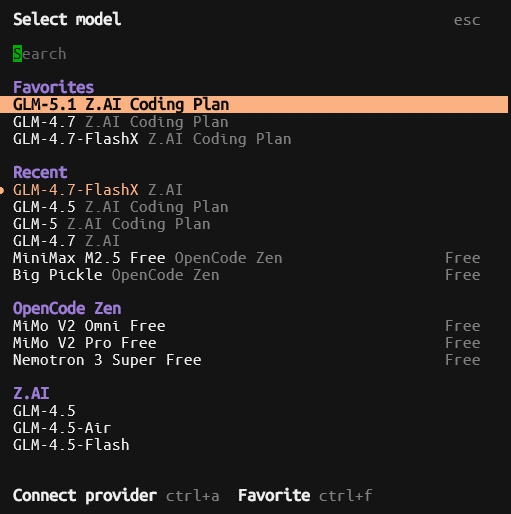

Zen x Qwen3.6-Plus - free during preview

improved reasoning vs Qwen3.6-Minus.. i mean Qwen3.5

1M context · text only

Free CDN. No, seriously

Changelog #0283

• One-click CDN
• Railway x Stripe Projects
• High-availability Postgres

railway.com/changelog/2026…

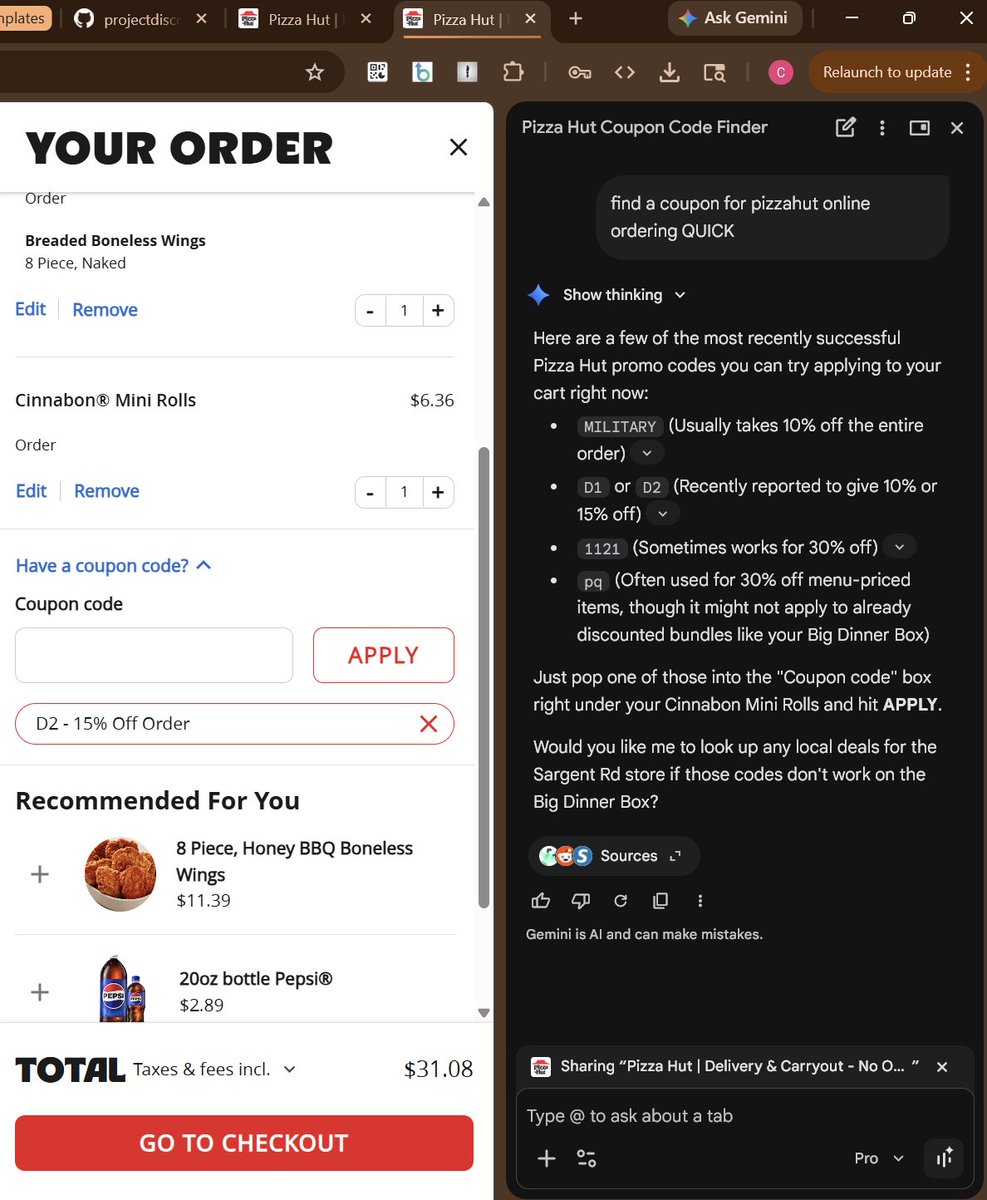

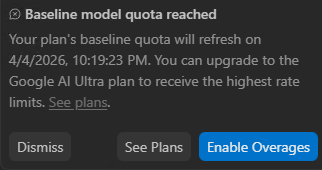

rip chatgpt pro unlimited