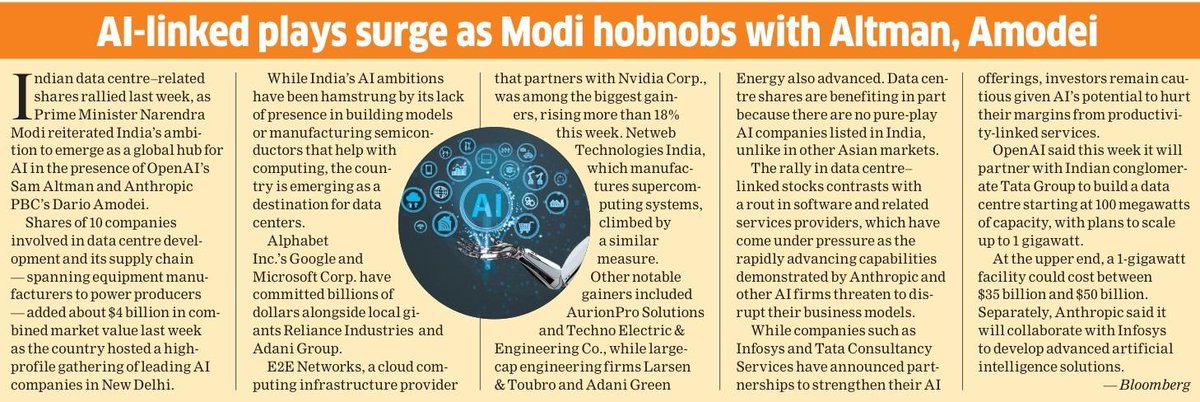

Aurionpro


Drop 3/7: #LexsiLabsResearch Making RL more efficient.

We made RL on math learn faster by changing one thing: token credit assignment.

When we train LLMs with GRPO/DAPO-style reinforcement learning on reasoning tasks, we accidentally reward everything equally: fluff like “Let me solve this step by step…” gets the same credit as the actual step that makes the answer correct.

What if we could identify and train harder on the parts of reasoning that causally produce the right answer?

Our approach: Counterfactual Importance Weighting

We do a lightweight “counterfactual audit” of reasoning:
➡️ Mask one reasoning span (a calc step/equation)
➡️ Measure how much the probability of the correct final answer drops
➡️ Use that drop as a per-token weight in the policy-gradient loss

What we found
🔆 Critical calculation chains are ~11× more likely to matter than scaffolding
🔆 3.5% of spans are distractors (removing them improves answer probability)

#RLHF #LLM #Reasoning #CausalInference #Optimization #GSM8K #AIResearch
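The mask, measure, and reweight steps in this post can be sketched in a few lines of Python. This is a toy illustration, not the authors' implementation: `answer_prob` stands in for a real model call, and `toy_answer_prob`, the `<mask>` token, the `floor` value, and the mean-1 normalization are all assumptions made for the example. The post does not say how distractor spans (negative drop) are weighted, so the sketch simply clips them to the floor.

```python
from typing import Callable, List, Sequence, Tuple

Span = Tuple[int, int]  # half-open [start, end) range of token indices


def counterfactual_weights(
    tokens: Sequence[str],
    spans: Sequence[Span],
    answer_prob: Callable[[Sequence[str]], float],
    mask_token: str = "<mask>",
    floor: float = 0.1,  # assumed minimum weight; must be > 0
) -> Tuple[List[float], List[float]]:
    """Return (per-span probability drops, per-token weights with mean 1)."""
    base = answer_prob(tokens)
    drops: List[float] = []
    weights = [floor] * len(tokens)  # tokens outside any span keep the floor
    for start, end in spans:
        masked = list(tokens)
        masked[start:end] = [mask_token] * (end - start)
        drop = base - answer_prob(masked)  # > 0 means the span helped
        drops.append(drop)
        for t in range(start, end):
            weights[t] = max(drop, floor)  # distractors are clipped to the floor
    mean_w = sum(weights) / len(weights)
    return drops, [w / mean_w for w in weights]


def weighted_pg_loss(token_logps: Sequence[float], advantage: float,
                     weights: Sequence[float]) -> float:
    """Policy-gradient surrogate with a per-token importance weight."""
    total = sum(w * lp for w, lp in zip(weights, token_logps))
    return -advantage * total / len(token_logps)


def toy_answer_prob(tokens: Sequence[str]) -> float:
    """Hypothetical scorer: the calc step helps, the stray guess hurts."""
    p = 0.2
    if "6*7=42" in tokens:
        p += 0.7
    if "maybe41" in tokens:
        p -= 0.1
    return p


tokens = ["Let", "me", "think", "6*7=42", "maybe41", "answer"]
spans = [(0, 3), (3, 4), (4, 5)]  # scaffolding, calculation, distractor
drops, weights = counterfactual_weights(tokens, spans, toy_answer_prob)
loss = weighted_pg_loss([-0.5] * len(tokens), advantage=1.0, weights=weights)
```

In this toy run the calculation token ends up with the largest weight, the scaffolding span has a drop of zero, and the distractor span has a negative drop. Normalizing the weights to mean 1 keeps the loss on the same scale as the unweighted version.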

Drop 2/7: #LexsiLabsResearch

Policy optimization has become the post-training workhorse for improving LLMs' reasoning after pretraining. GRPO and the growing variants (Dr. GRPO, GSPO, off-policy GRPO, GTPO, etc.) have improved accuracy, tamed instability, and generally made RL-style post-training much more “production-shaped”.

But there’s a quiet constant hiding inside almost all of these methods. No matter how fancy the reward shaping or variance tricks get, we almost always regularize with the KL divergence.

That’s… odd. Because KL isn’t a law of physics. It’s a choice. And choices have consequences: KL also defines the geometry of your policy updates, which can directly affect accuracy, stability, and even verbosity.

So we asked: what if KL is just the default, not the best choice?

What we did (GBMPO): GBMPO is our attempt to pry open that “KL-only” door and treat regularization geometry as a first-class design dimension, not a default setting. We’re releasing Group-Based Mirror Policy Optimization (GBMPO), a framework that replaces KL with flexible Bregman divergences (mirror-descent style), turning “divergence choice” into a real design knob.

Two routes:
1️⃣ ProbL2 (hand-designed): L2 distance in probability space
2️⃣ Neural Mirror Maps (learned): a small network learns task-specific geometry
(i) NM-GRPO: single run, random init
(ii) NM-GRPO-ES: meta-initialized with evolutionary strategies for extra stability + efficiency

#PolicyOptimization #RL #LLMResoning #LexsiLabsParis #LexsiLabs
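To make the "divergence choice" idea concrete, here is a small Python sketch of a generic Bregman divergence, D_φ(p, q) = φ(p) − φ(q) − ⟨∇φ(q), p − q⟩. Choosing φ as negative entropy recovers KL on the probability simplex, and choosing φ as half the squared norm gives half the squared L2 distance. This is not the GBMPO code: the function names are hypothetical, and the exact form and scaling of ProbL2 are assumptions, since the post only describes it as an L2 distance in probability space.

```python
import math
from typing import Callable, List, Sequence

Vec = Sequence[float]


def bregman(phi: Callable[[Vec], float],
            grad_phi: Callable[[Vec], List[float]],
            p: Vec, q: Vec) -> float:
    """D_phi(p, q) = phi(p) - phi(q) - <grad phi(q), p - q>."""
    inner = sum(g * (pi - qi) for g, pi, qi in zip(grad_phi(q), p, q))
    return phi(p) - phi(q) - inner


# Mirror map 1: negative entropy, which yields KL(p || q) on the simplex.
def neg_entropy(p: Vec) -> float:
    return sum(x * math.log(x) for x in p)


def grad_neg_entropy(p: Vec) -> List[float]:
    return [math.log(x) + 1.0 for x in p]


# Mirror map 2: half squared norm, which yields 0.5 * ||p - q||^2.
# (Assumed stand-in for a ProbL2-style regularizer.)
def half_sq_norm(p: Vec) -> float:
    return 0.5 * sum(x * x for x in p)


def grad_half_sq_norm(p: Vec) -> List[float]:
    return list(p)


# Two toy next-token distributions (strictly positive, each sums to 1).
p = [0.7, 0.2, 0.1]
q = [0.5, 0.3, 0.2]

d_kl = bregman(neg_entropy, grad_neg_entropy, p, q)
d_l2 = bregman(half_sq_norm, grad_half_sq_norm, p, q)

# Direct formulas, for comparison with the generic Bregman form.
kl_direct = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))
l2_direct = 0.5 * sum((pi - qi) ** 2 for pi, qi in zip(p, q))
```

Swapping the pair `(phi, grad_phi)` is the only change needed to move between the two regularizers, which is the sense in which the divergence becomes a design knob. A learned mirror map would replace the hand-written φ with a small convex network, which this sketch does not attempt.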

Drop 1/7: Safety controls without inference-time hooks 🧠⚙️ Modern LLM deployments need selective refusal at scale, but most safety controls still depend on inference-time interventions (runtime hooks, gating logic, per-generation overhead).