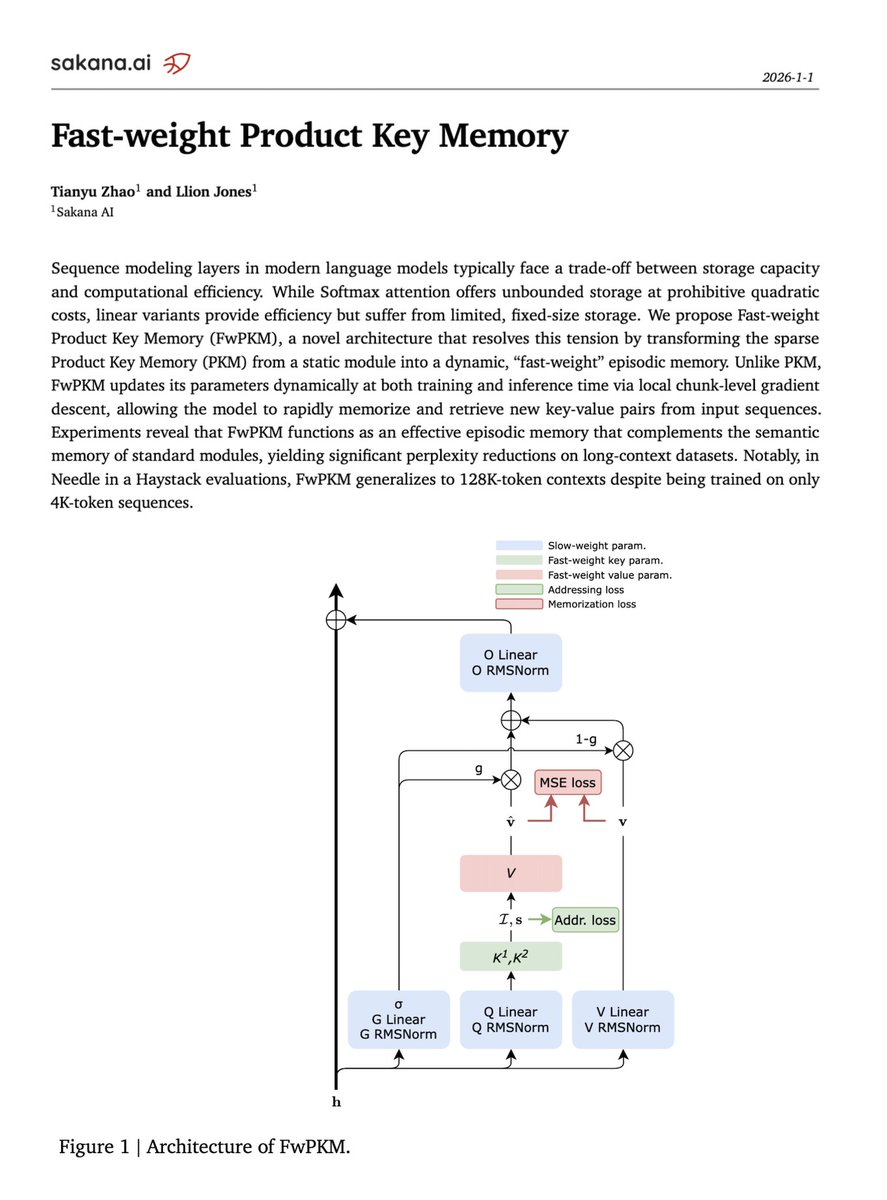

Jessy Lin

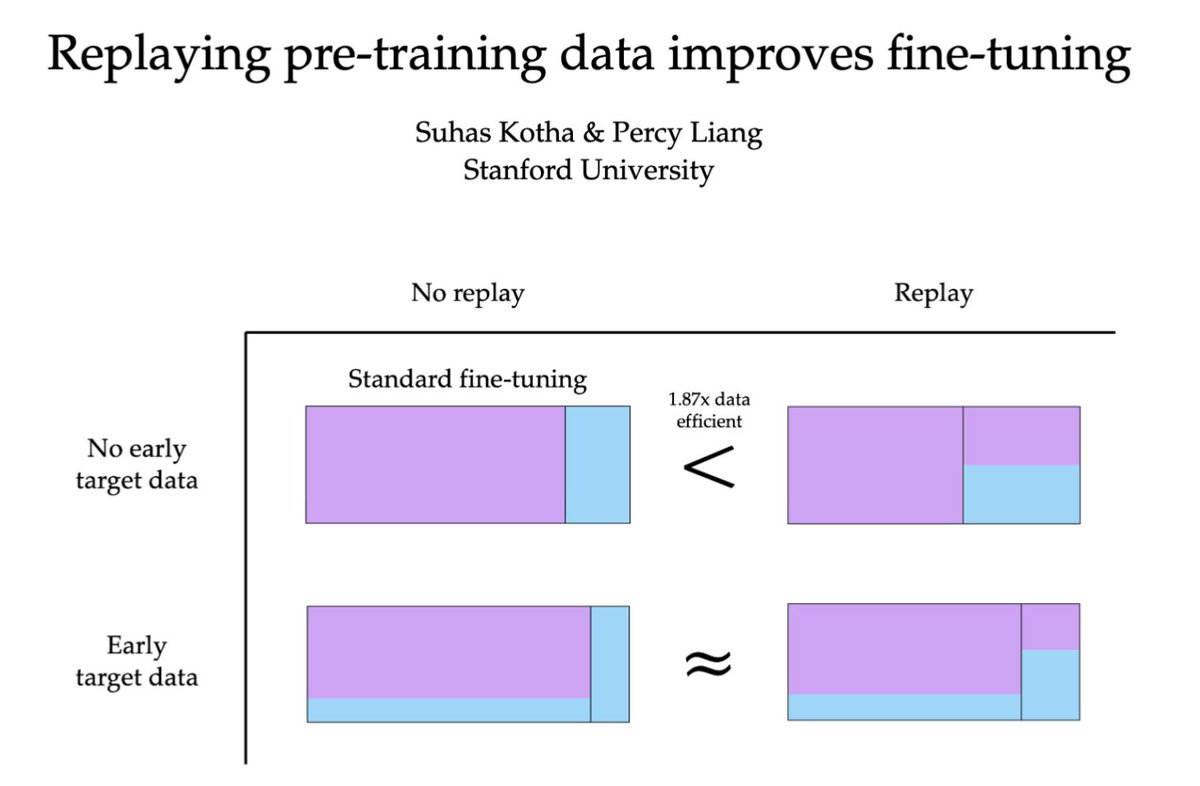

Mixing in more and more pretraining data recovers SimpleQA gains – despite the fact that the SimpleQA docs make up a smaller % of the overall training corpus. With less pretraining data, model answers are often “near misses” (e.g. "geoffrey hinton" as the 2010 IEEE award winner). This suggests there might be something special about pretraining: it's not just useful for teaching/retaining general capabilities, but also important for knowledge uptake. 🧵 [5/n]

I really liked this new paper which finds that dense MLPs from transformers can be distilled well into much sparser MoEs. This makes it a bit less surprising that gradient-based attribution on dense MLPs shows only a few neurons are responsible for the bulk of behaviour. arxiv.org/abs/2512.18452

I have a bunch of thoughts about continual learning and nothing to do with them (I'm working on something else) so I figured I'd just turn them into a post:
First: I think people use "continual learning" to point at a cluster of issues that are related but distinct. I'll list the issues and then speculate about what might fix them.
a) Catastrophic Forgetting: If you train on a distribution D_1 and then do SFT on another distribution D_2, you'll often find that your performance on D_1 degrades. The extent of this issue is maybe overstated and is more true for SFT than for RL, but it's still real. There's also an important limit case that IMO is a "smell" for the way we train models currently: repeated data can seriously harm model performance. Humans don't have this problem - they eventually just stop updating on redundant information.
b) No integration of new knowledge into existing concepts: If I tell you that I'm from Michigan, you will update your representation of me to include that fact, but you will also change your representation of Michigan. Michigan becomes "a place where someone I know is from". If people ask you questions about Michigan in the future, you may answer those questions with this knowledge in mind. If I tell a chatbot that I'm from Michigan, that fact may get stored in a memory file about me, but it won't affect the model's representation of Michigan.
c) No consolidation from short-term memory to long-term memory: Models are good at accumulating information in context up to a point, but then they run out of context (or effective context) and performance degrades. They are missing a mechanism for deciding what's important to retain and then taking action to retain it.
d) No notion of timeliness: When you tell a human something, they also retain *when* they learned it, and that "time tag" becomes part of the representation. Humans experience a stream of facts unfolding through time. As a result we form an implicit model of history/causality. Many people can answer "who is the current Pope?" without doing a special search step.
Now that we've enumerated the issues, we can think about solutions. In AI it's always worth asking why the simplest solution can't work.
The very simplest thing to try is what chatbots currently do: maintain a text file of memories. IMO it's obvious why this is unsatisfying relative to what humans are doing, so I won't dwell on it. I expect there are many refinements you could make here around learning to manually manage the text file, but I also expect these approaches to be brittle.
A slightly smarter thing that's still pretty simple is to just keep updating the model during deployment. I actually do think that something like this could work OK, but we probably need a few tweaks. Some combination of the following seems worth pursuing:
1. Sparser updates: Catastrophic forgetting is plausibly worsened by updating all parameters at once. I'd bet either selective parameter updates or making the models themselves sparser could help a lot here. @realJessyLin has some nice work here.
2. Update only on surprising data: Updating on every new datapoint feels wrong. We want a mechanism that decides what’s important/surprising and only updates on that subset. A crude version: automatically generate questions about a datapoint and only update if the model fails to answer them. The hippocampus also has interesting mechanisms for doing this that seem worth trying to emulate.
3. Don't train on the raw datapoint w/ the standard objective. Given that we've decided a datapoint is surprising, I don't think we should just train on it using the standard objective. We may want to automatically generate questions about a given corpus and train on the answers (as in e.g. the Cartridges work) and we may also want to modify the objective. One option is to do prompt distillation with the facts in context - the intuition being that the consolidated model ought to answer the question as though it has the facts on hand.
These are "in-paradigm" approaches compatible with LLMs. I bet they’ll yield real progress, but I’m also starting to suspect something less in-paradigm may be needed for a really satisfying solution. That’s for a different post though.
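
To make ideas 1 and 2 from the post above a bit more concrete, here is a rough toy sketch in plain Python. Everything in it is an assumption for illustration: the names `is_surprising` and `sparse_sgd_step`, the exact-match scoring, the `max_accuracy` threshold, and the choice of "largest-magnitude gradient coordinates" as the rule for picking which parameters to touch. It is not the method from the work the post mentions, just one simple way the "crude version" could look.

```python
from typing import Callable, List, Sequence, Tuple


def is_surprising(
    qa_pairs: Sequence[Tuple[str, str]],
    answer_fn: Callable[[str], str],
    max_accuracy: float = 0.5,
) -> bool:
    """Return True if the model fails enough probe questions to justify an update.

    qa_pairs: (question, reference answer) pairs generated from a datapoint.
    answer_fn: hypothetical stand-in for querying the current model.
    """
    if not qa_pairs:
        return False
    # Crude exact-match scoring; a real system would need fuzzier grading.
    correct = sum(
        answer_fn(q).strip().lower() == a.strip().lower() for q, a in qa_pairs
    )
    return correct / len(qa_pairs) <= max_accuracy


def sparse_sgd_step(
    params: List[float],
    grads: Sequence[float],
    lr: float = 0.1,
    keep_fraction: float = 0.25,
) -> List[float]:
    """Apply an SGD step to only the largest-magnitude gradient coordinates.

    The top-k-by-magnitude rule is an assumed selection heuristic.
    """
    k = max(1, int(len(params) * keep_fraction))
    ranked = sorted(range(len(grads)), key=lambda i: abs(grads[i]), reverse=True)
    chosen = set(ranked[:k])
    # Parameters outside the chosen set are left exactly as they were.
    return [
        p - lr * g if i in chosen else p
        for i, (p, g) in enumerate(zip(params, grads))
    ]


# Toy usage: a "model" that only knows one fact.
known = {"capital of france?": "paris"}
model = lambda q: known.get(q.lower(), "unknown")

old_fact = [("Capital of France?", "Paris")]
new_fact = [("Who is the current Pope?", "some name the model has not seen")]

params = [1.0, 1.0, 1.0, 1.0]
grads = [0.1, -2.0, 0.3, 0.0]
if is_surprising(new_fact, model):
    params = sparse_sgd_step(params, grads, lr=0.5, keep_fraction=0.25)
```

In this toy run the known fact is skipped and the unknown one triggers an update that moves a single parameter, which is the behaviour the post is asking for: no updates on redundant data, and small, localized updates on surprising data.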

This is, and I can't stress this enough, a Bluetooth microphone. None of the "AI" is in the ring. The "AI" is just an app: ChatGPT wrapped in an organizer.

The computer is the most flexible tool humanity has invented: whatever you can imagine, if you can describe it precisely enough it runs on a machine. I've spent the last 15 years learning how to describe things precisely to computers, and yet my side projects feel less like a triumph of personal computing and more like a graveyard of abandoned threads. Not because I don't want them, but because they take too much effort to build and maintain. Today we're introducing Zo Computer - the computing environment I always wanted.

today we're announcing @zocomputer. when we came up with the idea – giving everyone a personal server, powered by AI – it sounded crazy. but now, even my mom has a server of her own. and it's making her life better. she thinks of Zo as her personal assistant. she texts it to manage her busy schedule, using all the context from her notes and files. she no longer needs me for tech support. she also uses Zo as her intelligent workspace – she asks it to organize her files, edit documents, and do deep research. with Zo's help, she can run code from her graduate students and explore the data herself. (my mom's a biologist and runs a research lab. hi mom) Zo has given my mom a real feeling of agency – she can do so much more with her computer. we want everyone to have that same feeling. we want people to fall in love with making stuff for themselves. in the future we're building, we'll own our data, craft our own tools, and create personal APIs. owning an intelligent cloud computer will be just like owning a smartphone. and the internet will feel much more alive. THIS ONE'S FOR YOU MOM ❤️ special thank you to @modal, @pydantic AI, and @steeldotdev for being great partners leading up to this launch. and thank you @cursor_ai for being my sword 🗡️ and thank you to everyone who believed in us. a small handful: @southpkcommons, @adityaag, @chrisbest, @rauchg, @immad, @shreyas, @MattHartman, @lessin, @gokulr, @sabrinahahn, @iqramband, @whoisnnamdi, @guruchahal, @mikemarg_, @gaybrick, @SJCizmar, @magdovitz, @anneleeskates, @henloitsjoyce, @sugarjammi, @vibethinker, @aaronmakhoffman, @Sunfield__

As part of our recent work on memory layer architectures, I wrote up some of my thoughts on the continual learning problem broadly: Blog post: jessylin.com/2025/10/20/con… Some of the exposition goes beyond mem layers, so I thought it'd be useful to highlight separately:

Introducing Stream & Stream Ring. Let thoughts & ideas flow ∽ Preorder now at sandbar.com