Miha

@mihar

Building things ⋅ @linear ⋅ @coinbase ⋅ YCS11

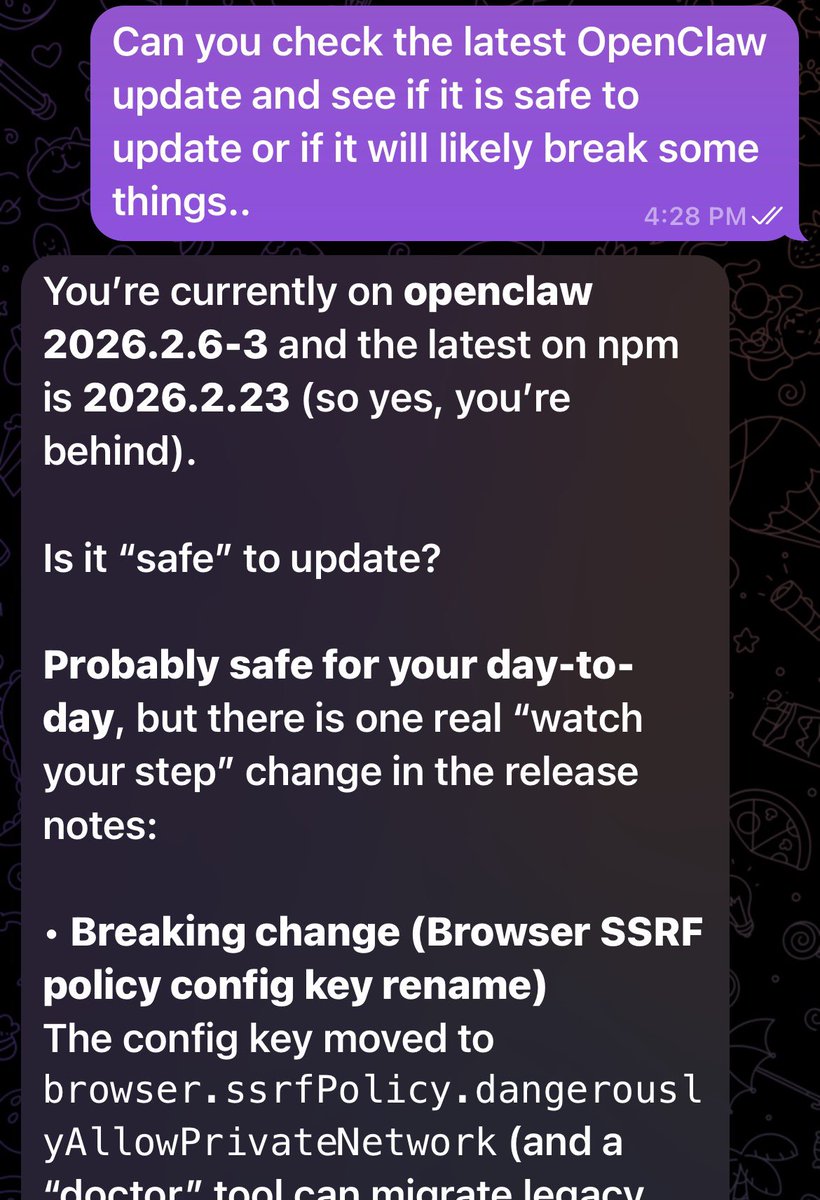

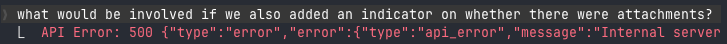

Karpathy's llama2.c showed you could run inference on a real transformer in pure C with no frameworks. A solo researcher (with Claude Code) just took that same model (Stories 110M: Llama 2 architecture, trained on real text) and ran it on Apple's M4 Neural Engine (ANE) on less than a watt. He reverse-engineered the undocumented private APIs, bypassed CoreML, and found that Apple's abstraction layer was hiding 2-4x of the chip's real throughput. The ANE delivers 6.6 TFLOPS per watt, roughly 80x more efficient than an Nvidia A100. The real implication here is inference: hundreds of millions of Apple devices carry one of the most efficient AI accelerators ever shipped in consumer hardware, and Apple's own software stack is what stands between developers and its actual performance. h/t @maderix
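For anyone checking the arithmetic: taking the two figures quoted above at face value (6.6 TFLOPS per watt and "roughly 80x"), the comparison implies an A100 baseline of about 0.08 TFLOPS per watt. A minimal sketch follows; the function and variable names are hypothetical, and the post does not say which precision or power figure the baseline uses.

```python
# Back-of-envelope check of the efficiency claim quoted in the post.
# Inputs are the post's own figures; nothing here is measured.

def implied_baseline(efficiency_tflops_per_watt: float, ratio: float) -> float:
    """Return the baseline efficiency implied by 'ratio times more efficient'."""
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    return efficiency_tflops_per_watt / ratio

ane_efficiency = 6.6   # TFLOPS per watt, as quoted for the M4 ANE
claimed_ratio = 80.0   # "roughly 80x more efficient than an Nvidia A100"

a100_implied = implied_baseline(ane_efficiency, claimed_ratio)
print(f"Implied A100 baseline: {a100_implied:.4f} TFLOPS per watt")
```

The result is only as good as the two inputs. A different choice of precision or power envelope for the A100 would shift the ratio considerably.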