MIT NLP

@nlp_mit

NLP Group at @MIT_CSAIL! PIs: @yoonrkim @jacobandreas @lateinteraction @pliang279 @david_sontag, Jim Glass, @roger_p_levy

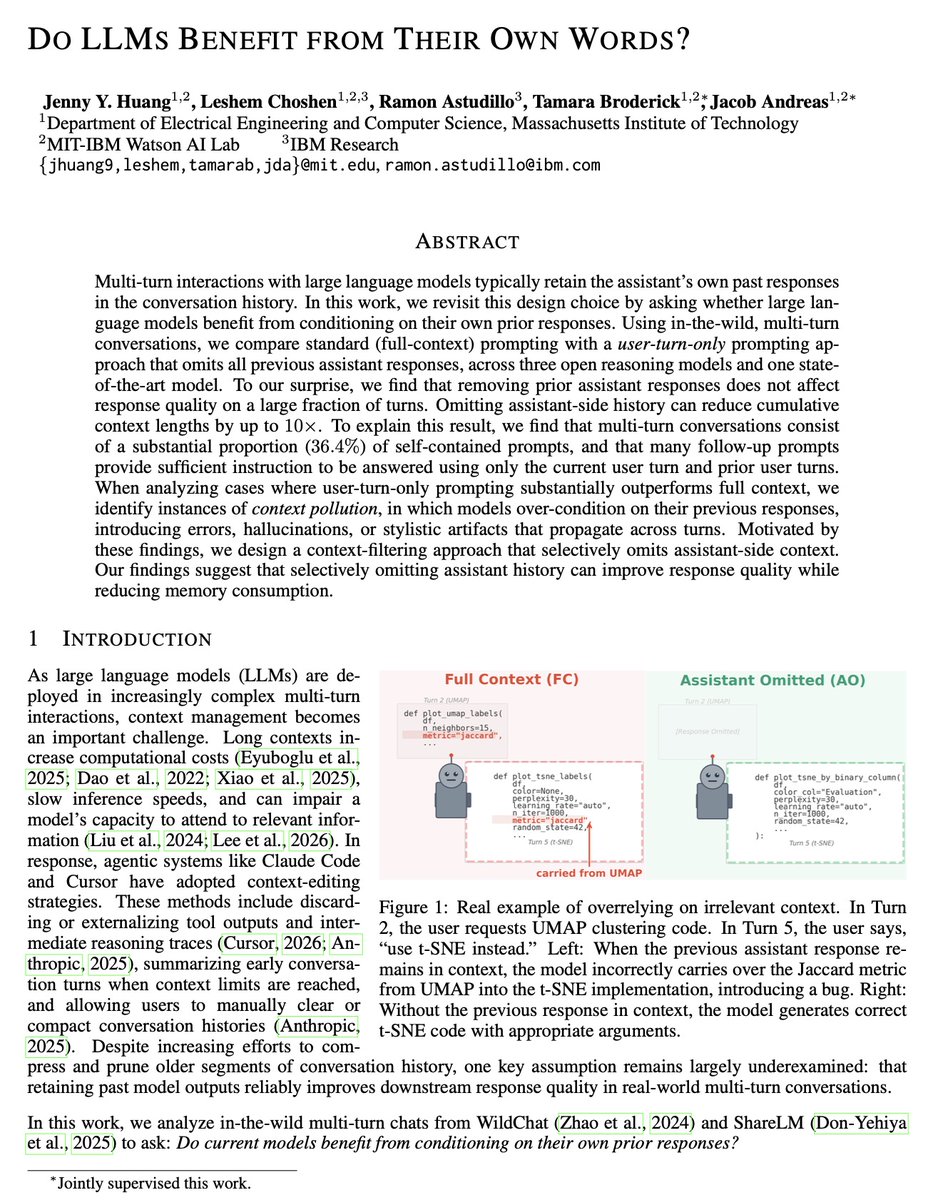

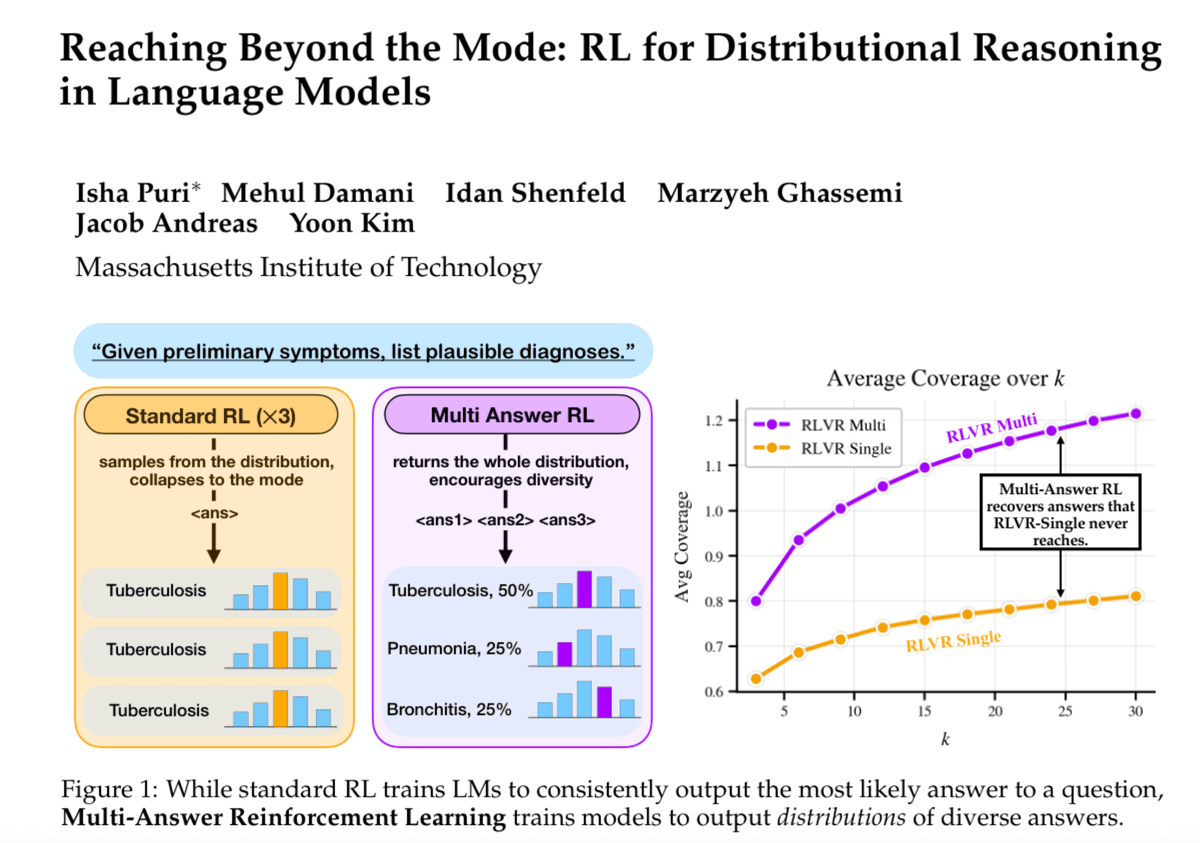

Ask ChatGPT several times where's best to go for spring break? It recommends Barcelona almost every time. This isn't a fluke. RL training rewards one best answer, so the model learns to commit to one mode and repeat it. Meet Multi-Answer RL: a simple RL method that trains LMs to reason through and output a distribution of answers in a single generation. [1/N]

New paper: It's time to optimize for 🔁self-consistency 🔁 We’ve pushed LLMs to the limits of available data, yet failures like sycophancy and factual inconsistency persist. We argue these stem from the same assumption: that behavior can be specified one I/O pair at a time. 🧵

🚀 Launching Every Eval Ever: Toward a Common Language for AI Eval Reporting 🚀 A shared schema + crowdsourced repository so we can finally compare evals across frameworks and stop rerunning everything from scratch 🔧 A tale of broken AI evals 🧵👇 evalevalai.com/projects/every…

We introduce a new approach for fast and high-quality context compaction in latent space. Attention Matching (AM) achieves 50× compaction in seconds with little performance loss, substantially outperforming summarization and other baselines.

QoQ-Med: Building Multimodal Clinical Foundation Models with Domain-Aware GRPO Training "we introduce QoQ-Med-7B/32B, the first open generalist clinical foundation model that jointly reasons across medical images, time-series signals, and text reports. QoQ-Med is trained with Domain-aware Relative Policy Optimization (DRPO), a novel reinforcement-learning objective that hierarchically scales normalized rewards according to domain rarity and modality difficulty, mitigating performance imbalance caused by skewed clinical data distributions. Trained on 2.61 million instruction tuning pairs spanning 9 clinical domains, we show that DRPO training boosts diagnostic performance by 43% in macro-F1 on average across all visual domains as compared to other critic-free training methods like GRPO."

Robust reward models are critical for alignment/inference-time algos, auto eval, etc. (e.g. to prevent reward hacking which could render alignment ineffective). ⚠️ But we found that SOTA RMs are brittle 🫧 and easily flip predictions when the inputs are slightly transformed 🍃 🧵