Paul D. Tyler

2.5K posts

@pauldtyler

Working hard to live 5 years in the future. #strategy | #innovation | #ai | #insurtech | #annuities | #running

NYC · Joined October 2010

2.9K Following · 2.2K Followers

@chamath Except take the same data and divide by population, and the states with the most fraud per person tell a much different story (according to Grok’s population data):

Paul D. Tyler retweeted

IKEA deployed an AI chatbot named Billy to handle level-one customer service inquiries. It reportedly resolved around 57% of those engagements without human escalation.

Most companies would have celebrated the labor savings and stopped there. Cost takeout, right?

But the more interesting move was to study the 43% of cases Billy could not resolve. Those unresolved inquiries pointed to customer demand for interior design help.

IKEA responded by spinning up a design consultancy, reskilling customer service employees with AI support, and creating a new revenue stream that generated roughly €1 billion in its first year.

Automation + Augmentation = Exponential Growth 💪🦾📈

Story here: medium.com/@briansolis/the-real-threat-of-ai-is-what-your-competitors-will-become-with-it-b2df702db4af

@KanikaBK @pauldtyler But aren’t you giving it access to read/write whatever it wants with the API, same as giving it direct access?

I live on the NY/CT line. I cross state borders to get groceries.

So I have to ask: will my AI get smarter when I cross the road into Connecticut?

New York's S7263 would make companies liable when a chatbot impersonates a licensed professional — a doctor, lawyer, financial advisor — and causes harm. The intent is sound. AI giving bad advice while cosplaying as an expert is a real problem worth solving.

But software doesn't stop at state lines. And that creates a real tension.

If every state writes its own rules, companies face an impossible choice: build 50 different versions, or default to the most restrictive one for everyone. Either way, users lose.

Here's what keeps me up at night: the people most exposed to bad AI advice are often the same people who can't afford a real doctor, lawyer, or advisor. They're not using AI recklessly — they're using it because it's the only option they have. Over-restrict, and you've just taken that option away.

Getting AI governance right isn't just about liability. It requires:

- A clear line between advice and information

- Consistent disclosure so users know what they're dealing with

- Frameworks built to scale nationally, not state by state

The groceries are the same on both sides of the border.

The AI shouldn't be different either.

Curious how others are thinking about this.

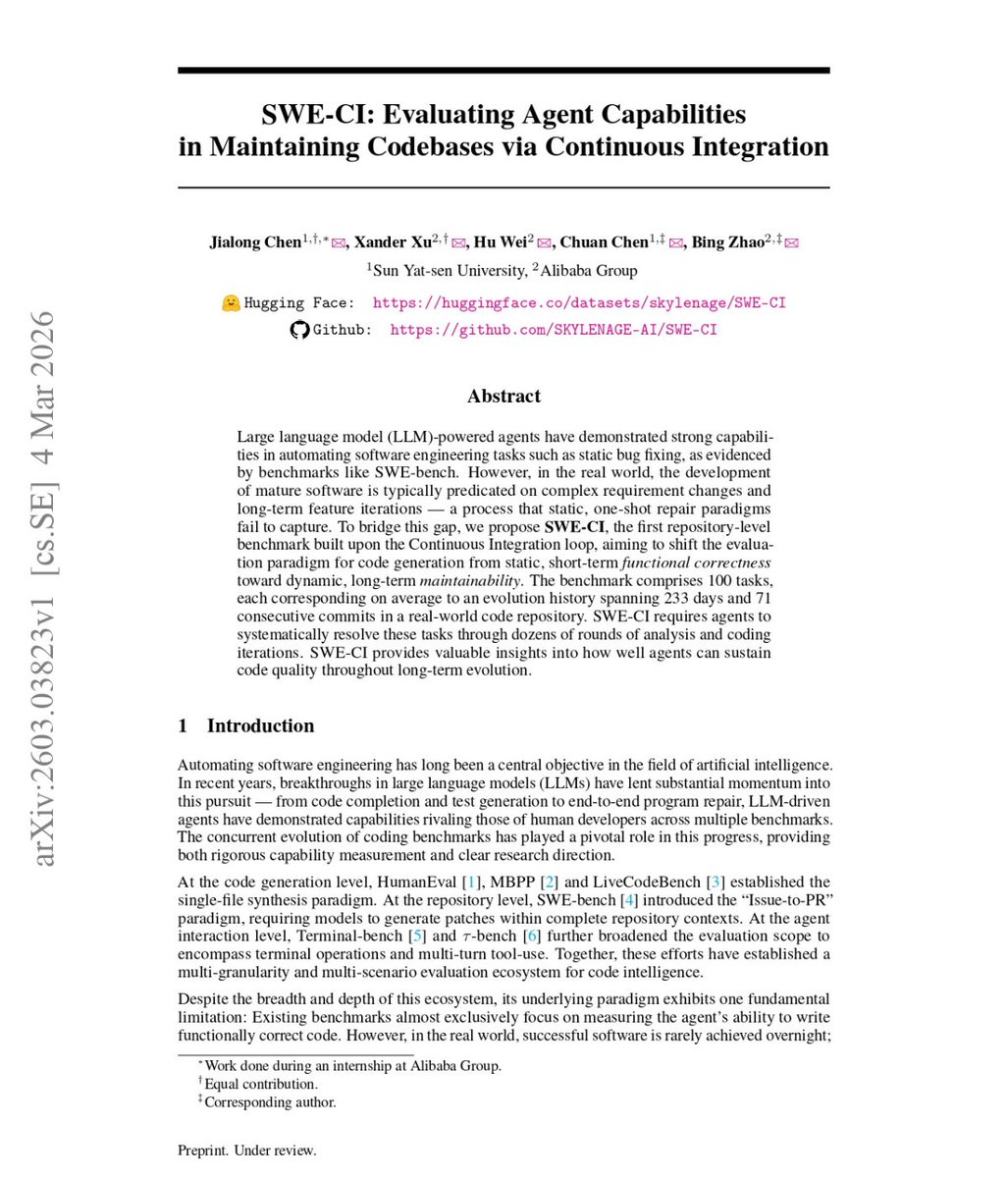

🤯BREAKING: Alibaba just proved that AI Coding isn't taking your job, it's just writing the legacy code that will keep you employed fixing it for the next decade. 🤣

Passing a coding test once is easy. Maintaining that code for 8 months without it exploding? Apparently, it’s nearly impossible for AI.

Alibaba tested 18 AI agents on 100 real codebases over 233-day cycles. They didn't just look for "quick fixes"—they looked for long-term survival.

The results were a bloodbath:

- 75% of models broke previously working code during maintenance.

- Only Claude Opus 4.5/4.6 maintained a >50% zero-regression rate.

- Every other model accumulated technical debt that compounded until the codebase collapsed.

We’ve been using "snapshot" benchmarks like HumanEval that only ask "Does it work right now?"

The new SWE-CI benchmark asks: "Does it still work after 8 months of evolution?"

Most AI agents are "Quick-Fix Artists." They write brittle code that passes tests today but becomes a maintenance nightmare tomorrow. They aren't building software; they're building a house of cards.

The narrative just got honest: Most models can write code. Almost none can maintain it.

Launched a new podcast, L&A Hub, on innovation in the life & annuity industry. The first episode is all about change in the underwriting profession and the possible impact of AI: zinnia.com/en/la-hub

@TrendyVids7 @DonaldJTrumpJr Interesting this is coming from someone in South Asia.

WTF!???

Greg Price @greg_price11

WATCH: RINO Kentucky Senate candidate @barrforsenate demands that we allow thousands of Afghan migrants to flood into America: "We have failed in our obligation to help these Afghans...We owe them to help them get into our country...I voted for these Special Immigrant Visas."

Annuity products have changed a lot since the financial crisis of 2008. Consumer needs haven't. Spoke with @KerryPechter about how he approached revising "Annuities for Dummies" (first published in 2008) for audiences in 2023.

@ParadisoPresent Yep. Already have projects running.

Rye Brook, NY 🇺🇸

@EducatedOver @billburr Enjoyed the night. All new stuff. I’m sure three or four more shows in, it will be running like a train.

Rye Brook, NY 🇺🇸

@pauldtyler @billburr What did you think? I thought it was pretty good but maybe 10% weak. Maybe it’s the start of the tour…

Saw @billburr’s great show last night in Newark. They made us stuff our phones into faraday bags so we actually had to give the show our full attention. (BTW, our kids thought we had been kidnapped by the time we got out.) Couldn’t get a real pic, but AI came to the rescue.

Rye Brook, NY 🇺🇸

Paul D. Tyler retweeted

Last week I made my grand return to the @NReimagine Podcast with hosts Paul and Laura. We had a terrific time discussing how bootstrapping will thrive in 2023 & what it means to become a great bootstrapper.

Listen to the full podcast episode here: apple.co/3FvN6EZ
