Pedro Cuenca

@pcuenq

ML Engineer at 🤗 Hugging Face | Co-founder at LateNiteSoft (Camera+). I love AI and photography.

Humanity's Last Hackathon is NOW OPEN for registration. This is not a normal hackathon. You will be judged on the context, not the code! Use Codex @OpenAIDevs to build and optimize models for local inference (kernels on Max metal). Submit through @GPU_MODE. Climb the leaderboard. Top performers qualify for the final battle. Launches May 4th. Registration is live now.

Today we’re releasing Laguna XS.2, Poolside’s first open-weight model. It’s a 33B total / 3B active MoE model built for agentic coding and long-horizon tasks. Trained fully in-house on our own stack. Runs on a single GPU. Released under Apache 2.0. Links 👇 Weights: huggingface.co/poolside/Lagun… API: platform.poolside.ai Blog: poolside.ai/blog/laguna-a-…

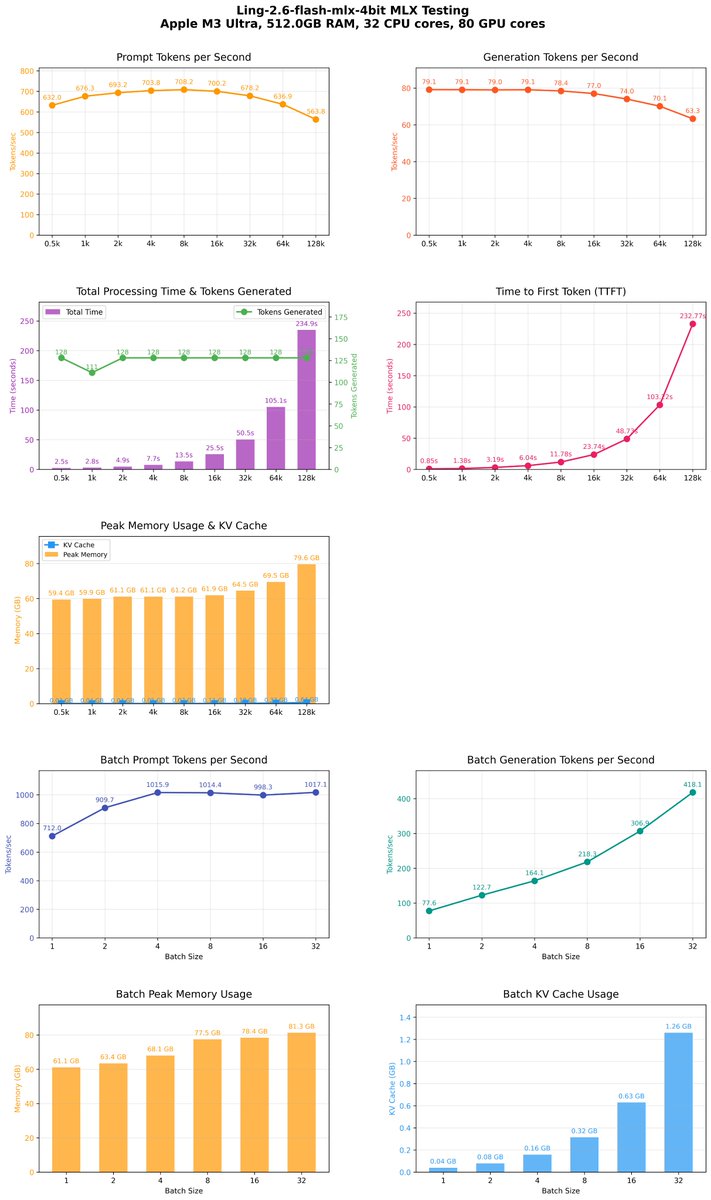

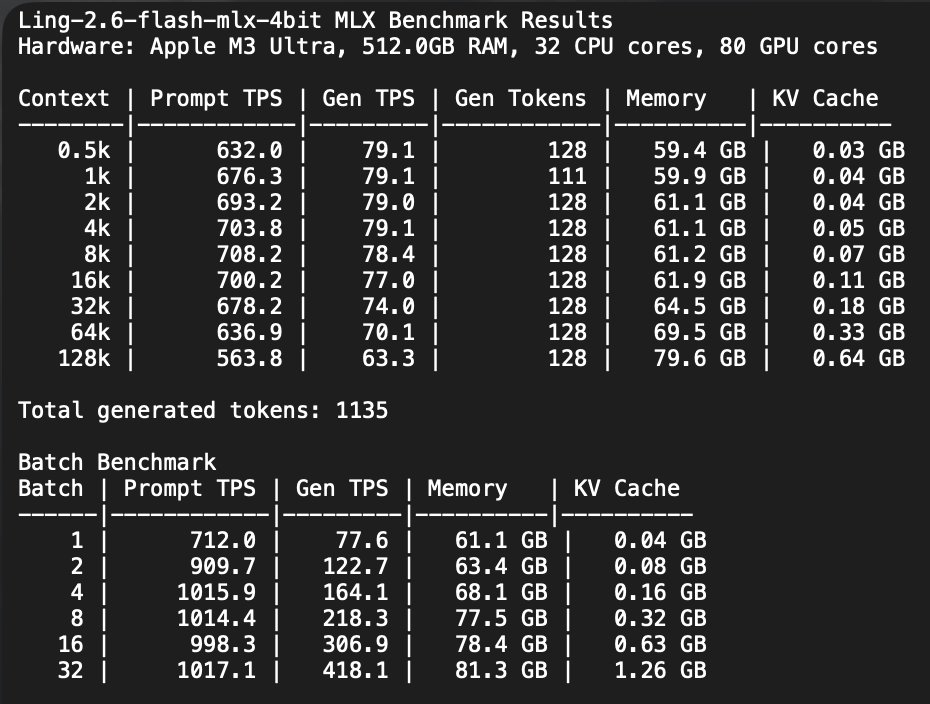

MLX DeepSeek-V4-Flash-2bit-DQ: MLX 4K context issue solved! Benchmark results on Apple M5 Max, 128.0GB RAM, 18 CPU cores, 40 GPU cores. A comparison of M3 Ultra vs M5 Max, including batch performance, will follow shortly.

| Context | pp | tg (t/s) | mem (GB) | kv (GB) |
|---|---|---|---|---|
| 0.5k | 446 | 42 | 97.8 | 0.02 |
| 1k | 578 | 42 | 98.1 | 0.02 |
| 2k | 622 | 40 | 99.2 | 0.03 |
| 4k | 570 | 37 | 100.7 | 0.04 |
| 8k | 513 | 37 | 101.4 | 0.06 |
| 16k | 390 | 37 | 102.7 | 0.12 |
| 32k | 343 | 36 | 104.5 | 0.23 |
| 64k | 297 | 34 | 109.4 | 0.45 |

This is using this PR from @0xClandestine 🔥 It's faster than yesterday! I bet it's using matmul in hardware much more. github.com/Blaizzy/mlx-lm…