📢 Announcing 𝗦𝗣𝗢𝗧: 𝗦𝗰𝗮𝗹𝗶𝗻𝗴 𝗣𝗼𝘀𝘁-𝗧𝗿𝗮𝗶𝗻𝗶𝗻𝗴 𝗳𝗼𝗿 𝗟𝗟𝗠𝘀 Workshop at #ICLR2026 (@iclr_conf) 🚀 🚨 We invite work on the principles of post-training scaling, bridging algorithms, data & systems 📅 Papers due Feb 5, 2026 🌐 spoticlr.github.io 🧵(1/5)

Rishabh Tiwari


@rish2k1

CS PhD @UCBerkeley | Ex-Deepmind, FAIR | Research area: Efficient LLM reasoning, scaling RL


Can LLMs Self-Verify? Much better than you'd expect.
LLMs are increasingly used as parallel reasoners, sampling many solutions at once. Choosing the right answer is the real bottleneck. We show that pairwise self-verification is a powerful primitive.
Introducing V1, a framework that unifies generation and self-verification:
💡 Pairwise self-verification beats pointwise scoring, improving test-time scaling
💡 V1-Infer: efficient tournament-style ranking that improves self-verification
💡 V1-PairRL: RL training in which generation and verification co-evolve to develop better self-verifiers
🧵👇

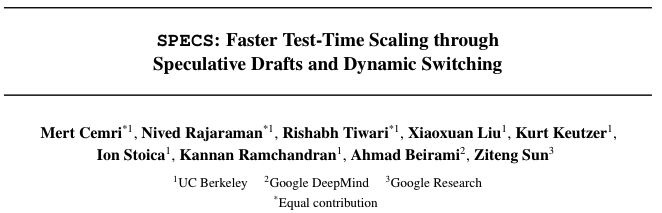
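As a rough illustration of tournament-style selection with a pairwise judge, here is a minimal single-elimination sketch. It assumes a hypothetical `prefers_first(a, b)` callable standing in for an LLM asked which of two candidate solutions is better; V1-Infer's actual tournament schedule is not described here and may differ. With n candidates, a knockout bracket needs only n - 1 pairwise calls.

```python
import random
from typing import Callable, List, Sequence


def pairwise_tournament(
    candidates: Sequence[str],
    prefers_first: Callable[[str, str], bool],  # hypothetical pairwise judge
    seed: int = 0,
) -> str:
    """Single-elimination tournament: return the candidate that wins every match it plays."""
    if not candidates:
        raise ValueError("need at least one candidate")
    rng = random.Random(seed)
    pool: List[str] = list(candidates)
    rng.shuffle(pool)  # random bracket so the input order does not decide pairings
    while len(pool) > 1:
        next_round: List[str] = []
        # Compare neighbours pairwise; the winner advances
        for i in range(0, len(pool) - 1, 2):
            a, b = pool[i], pool[i + 1]
            next_round.append(a if prefers_first(a, b) else b)
        if len(pool) % 2 == 1:
            next_round.append(pool[-1])  # odd candidate out gets a bye
        pool = next_round
    return pool[0]
```

With a toy judge that prefers the answer closer to 42, `pairwise_tournament(["40", "17", "42", "100", "45"], judge)` returns `"42"` after four comparisons. A real LLM judge is not guaranteed to be transitive, so a single bracket can be sensitive to pairings; repeated or Swiss-style rounds are a common way to reduce that noise.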

Introducing SPECS (SPECulative test time Scaling), a test-time scaling (TTS) algorithm with a Pareto-frontier latency/accuracy trade-off. Scaling test-time compute improves LLM reasoning but adds latency. Prior work optimizes TTS accuracy as a function of FLOPs; we further reduce latency by addressing the memory bottleneck of LLM inference through speculative drafts. See a breakdown of the method below. (1/n) 🧵 👇
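The tweet does not spell out the algorithm, so the following is only a generic draft-then-verify loop in the spirit of speculative drafting, not SPECS itself. At each reasoning step a cheap draft model proposes several candidate steps and the large model only scores them, which takes a parallel forward pass over already-written tokens instead of slow token-by-token decoding; if no draft clears a threshold, the large model writes the step itself. `draft_step`, `target_score`, `target_step`, the threshold, and the `<eos>` marker are all hypothetical placeholders.

```python
from typing import Callable, List


def speculative_step_search(
    prompt: str,
    draft_step: Callable[[str, int], str],      # hypothetical: small model proposes the i-th candidate step
    target_score: Callable[[str, str], float],  # hypothetical: large model scores (prefix, step)
    target_step: Callable[[str], str],          # hypothetical: large model writes a step itself
    num_drafts: int = 4,
    threshold: float = 0.5,                     # hypothetical acceptance threshold
    max_steps: int = 8,
    stop: str = "<eos>",
) -> List[str]:
    steps: List[str] = []
    prefix = prompt
    for _ in range(max_steps):
        # Cheap model generates several candidate next steps
        drafts = [draft_step(prefix, i) for i in range(num_drafts)]
        # Expensive model only scores the drafts (no autoregressive decoding)
        scores = [target_score(prefix, d) for d in drafts]
        best = max(range(num_drafts), key=lambda i: scores[i])
        # Accept the best draft, or fall back to the large model when none is good enough
        step = drafts[best] if scores[best] >= threshold else target_step(prefix)
        steps.append(step)
        prefix += step
        if step.endswith(stop):
            break
    return steps
```

The latency win in such a scheme depends on how often drafts are accepted: every fallback costs a full decode by the large model.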

🚀SonicMoE🚀: a blazingly fast MoE implementation optimized for NVIDIA Hopper GPUs. SonicMoE reduces activation memory by 45% and is 1.86x faster on H100 than the previous SOTA😃 Paper: arxiv.org/abs/2512.14080 Work with @MayankMish98, @XinleC295, @istoica05, @tri_dao