Santosh Agrawal

@santgra

IT Infrastructure Architect & Entrepreneur.

The Anubis ransomware group claims to have breached healthcare provider ViaQuest and law firm Samuel I. White, PC, leaking terabytes of highly sensitive data. dailydarkweb.net/anubis-ransomw…

I am a CISO at one of the six banks that got called to Treasury on Tuesday. I need to explain what happened in that room.

Bessent and Powell don't convene emergency meetings. They just don't. Last time was SVB collapsing. So when the invite hit ("cybersecurity briefing, Treasury headquarters, Tuesday morning"), every one of us knew this wasn't a courtesy call. Jane Fraser was there. Brian Moynihan. Charlie Scharf. Ted Pick. David Solomon. Dimon got the invite but couldn't make it.

They told us Anthropic built a model called Mythos that found thousands of zero-day vulnerabilities across every major operating system and every major browser. Some of these bugs are 27 years old. Sitting in production code since 1999. Nobody, not Google Project Zero, not the NSA, not a single human researcher, ever caught them.

The room got very quiet when they explained the next part. This model doesn't just find vulnerabilities. It writes working exploits. Autonomously. No human in the loop. Their previous model had a near-0% success rate at autonomous exploit development. Mythos hit 72.4% on Firefox alone. One demo showed it chaining four separate vulnerabilities to escape both a browser sandbox AND an OS sandbox, weeks of work for an elite human team. Mythos did it overnight while the engineers slept.

The Anthropic people kept using the word "emerged." These capabilities weren't trained. They emerged from general improvements in reasoning. That word is what made the room go cold. Because if it emerged in their model, it'll emerge in the next one. And the one after that.

99% of the vulnerabilities are still unpatched right now. Every major bank runs on the same compromised infrastructure. We just got told the exact shape of the holes and we can't fix them fast enough. Anthropic committed $100 million in compute credits and launched Project Glasswing: 40 organizations get limited access to use Mythos defensively. Amazon, Google, JPMorgan, Apple, Microsoft.

But nobody in the press is connecting this part. Anthropic is valued at $380 billion. $30 billion revenue run rate, just surpassed OpenAI. Evaluating an IPO for October. And the model that terrified every bank CEO in America? They can't release it to paying customers. Their own researchers said it might be "many times larger and more expensive than Opus." Too expensive to commercialize at scale. The company preparing for the biggest AI IPO in history just told the U.S. government its flagship product is simultaneously too dangerous to sell and too expensive to run. That's a hell of a slide to put in front of underwriters.

Meanwhile OpenAI is reportedly building something called "Spud" with similar capabilities. Every hospital system, every power grid, every bank in America is running software with decades of unfound vulnerabilities, and we're entering a world where any sufficiently advanced model finds them all at once.

We left Treasury with one clear understanding: the window between when AI can find every vulnerability and when defenders can patch them is going to be the most dangerous period in the history of cybersecurity. Nobody in that room disagreed.

What's your company doing about it? Genuinely, because most of us don't have an answer yet either.

This is a fictional narrator. The numbers are real.

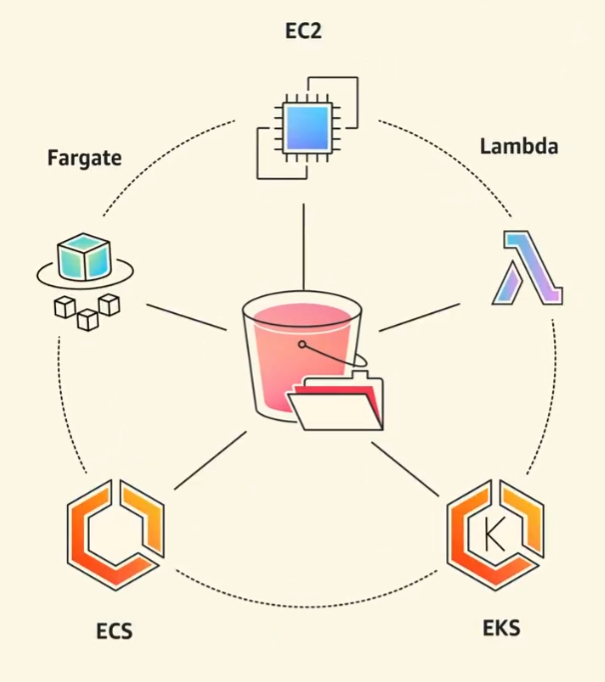

Announcing Amazon S3 Files. The first and only cloud object store with fully-featured, high-performance file system access. Learn more here. go.aws/4tw17Zg

🚨 BREAKING: A Google researcher and a Turing Award winner just published a paper that exposes the real crisis in AI. It's not training. It's inference. And the hardware we're using was never designed for it.

The paper is by Xiaoyu Ma and David Patterson. Accepted by IEEE Computer, 2026. No hype. No product launch. Just a cold breakdown of why serving LLMs is fundamentally broken at the hardware level.

The core argument is brutal:
→ GPU FLOPS grew 80X from 2012 to 2022
→ Memory bandwidth grew only 17X in that same period
→ HBM costs per GB are going UP, not down
→ The Decode phase is memory-bound, not compute-bound
→ We're building inference on chips designed for training

Here's the wildest part: OpenAI lost roughly $5B on $3.7B in revenue. The bottleneck isn't model quality. It's the cost of serving every single token to every single user. Inference is bleeding these companies dry.

And five trends are making it worse simultaneously:
→ MoE models like DeepSeek-V3 with 256 experts exploding memory
→ Reasoning models generating massive thought chains before answering
→ Multimodal inputs (image, audio, video) dwarfing text
→ Long-context windows straining KV caches
→ RAG pipelines injecting more context per request

Their four proposed hardware shifts:
→ High Bandwidth Flash: 512GB stacks at HBM-level bandwidth, 10X more memory per node
→ Processing-Near-Memory: logic dies placed next to memory, not on the same chip
→ 3D Memory-Logic Stacking: vertical connections delivering 2-3X lower power than HBM
→ Low-Latency Interconnect: fewer hops, in-network compute, SRAM packet buffers

Companies that tried SRAM-only chips like Cerebras and Groq already failed and had to add DRAM back.

This paper doesn't sell a product. It maps the entire hardware bottleneck and says: the industry is solving the wrong problem.

Paper dropped January 2026. Link in the first comment 👇
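
A quick back-of-the-envelope check of the figures quoted in the post, as a minimal sketch. The only inputs are the numbers stated there (80X FLOPS growth, 17X bandwidth growth, roughly $5B lost on $3.7B revenue). The variable and function names are illustrative assumptions and do not come from the paper.

```python
# Figures as quoted in the post; names here are hypothetical, chosen for illustration.
FLOPS_GROWTH = 80.0        # GPU FLOPS growth, 2012 to 2022
BANDWIDTH_GROWTH = 17.0    # memory bandwidth growth over the same period
LOSS_BILLIONS = 5.0        # "roughly $5B" loss quoted for OpenAI
REVENUE_BILLIONS = 3.7     # "$3.7B in revenue"


def growth_gap(compute_growth: float, memory_growth: float) -> float:
    """How much faster compute grew than the bandwidth that feeds it."""
    return compute_growth / memory_growth


gap = growth_gap(FLOPS_GROWTH, BANDWIDTH_GROWTH)
loss_per_revenue_dollar = LOSS_BILLIONS / REVENUE_BILLIONS

print(f"Compute outgrew memory bandwidth by about {gap:.1f}x")
print(f"Loss per dollar of revenue: about ${loss_per_revenue_dollar:.2f}")
```

On these numbers, compute grew about 4.7 times faster than memory bandwidth over the decade. That widening gap is what the post means when it calls the decode phase memory-bound.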

@PathfinderAstro Anything that is meant to happen will happen by February; the risk of war between Iran and the US will reduce slightly by March. After April, I don’t see any major challenges coming except regime change in Iran in the upcoming year, 2027.

I spent 100 hours over the past week researching, writing and editing the piece we just put out. It’s a scenario, not a prediction like most of our work. But it was rigorously constructed; dismissing it outright requires the kind of intellectual laziness that tends to get expensive. And we’ve released it for free. Hopefully you enjoy it. citriniresearch.com/p/2028gic