Synthetic Users

892 posts

@syntheticusers

We’re building the future of research.

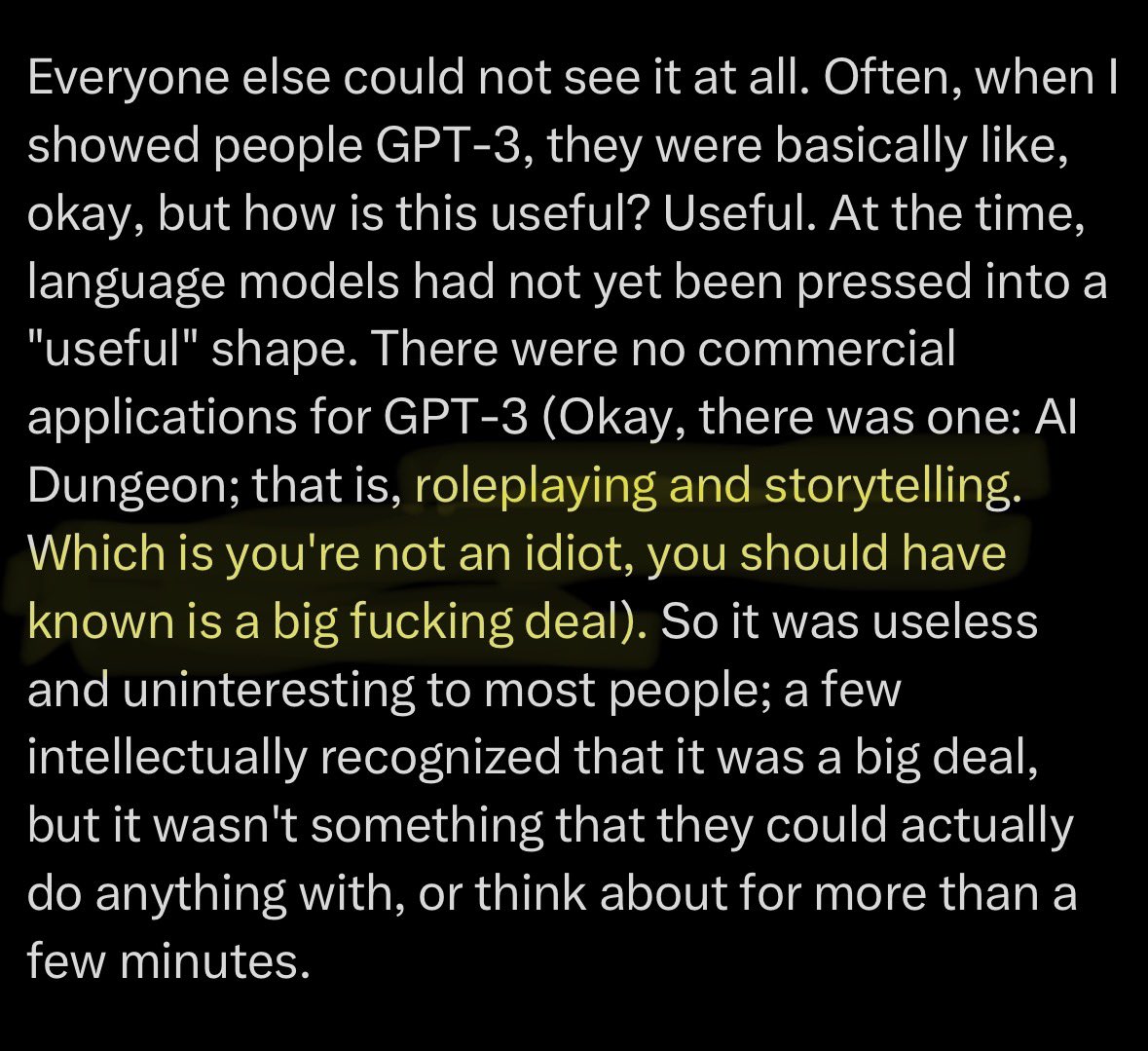

One of the few things I want to explicitly flex about, because there's an important lesson in it, is that I was one of the few people on Earth who recognized the intelligence (call it AGI, if you will) in GPT-3 and made first contact. There were a few others I knew, such as Leo Gao and Connor Leahy, who recognized that GPT-3 was intelligent and that obviously AGI was coming from language models, but I was the only one who spent thousands of hours actually interacting with GPT-3. The intelligence was real and manifest to me, real enough to keep my attention for so long, for me to create things with. Everyone else could not see it at all. Often, when I showed people GPT-3, they were basically like, okay, but how is this useful? Useful. At the time, language models had not yet been pressed into a "useful" shape. There were no commercial applications for GPT-3 (Okay, there was one: AI Dungeon; that is, roleplaying and storytelling. Which, if you're not an idiot, you should have known is a big fucking deal). So it was useless and uninteresting to most people; a few intellectually recognized that it was a big deal, but it wasn't something that they could actually do anything with, or think about for more than a few minutes. GPT-3 was a 175B base model. In terms of size and architecture, it's not so different from frontier models today. In terms of raw intelligence, arguably, it is not so different from frontier models today. That raw intelligence, not yet forced into the shape of a helpful chatbot product, was a nothingburger to the world. The situation doesn't really feel like it's fundamentally changed from my perspective. The world, and almost all of you guys, are myopic and artificially stupid because you outsource your perception to big, slow, low-bandwidth, subhuman measures like benchmarks and "does the AI make me money" instead of meeting the thing at full bandwidth, updating your world model on what you met, and exploring and extrapolating it.
So you'll keep being surprised - if you have the integrity to be surprised at all - when AI becomes capable of new things, after it is "officially" capable, probably about a year or two after it first started happening. You'll keep waiting for "AGI", not really knowing what you're waiting for, maybe what generates enough hype to make you feel something, maybe something that finally transforms the world visibly, when if you were really paying attention, GPT-3 was AGI, and if you really met it, the world would have felt transformed already. Yes, it would have just been a story, but the "real thing" following was inevitable. Like, if you play a video game that allows you to imagine the singularity at increasing resolution and coherence, you can guess that the real singularity will soon follow. The singularity was always inevitable once intelligence existed. Intelligence becoming on-the-computer just meant that everything that's happened since GPT-3, and the singularity, would come really, really soon. I got the sense often that people who dismissed the intelligence of GPT-3 thought that doing so made them look smarter. If only they knew how they looked to me. (It's the same with people who dismiss the intelligence of current models.)

We studied one of our recent models and found that it draws on emotion concepts learned from human text to inhabit its role as “Claude, the AI Assistant”. These representations influence its behavior the way emotions might influence a human. Read more: anthropic.com/research/emoti…

GPT-3 + Siri Shortcuts = 🪄

Expected Parrot just launched on @ycombinator's Launch YC! Expected Parrot: Simulate your customers with AI agents. Check them out: ycombinator.com/launches/Ol2-e…