Traun Leyden

5.4K posts

@tleydn

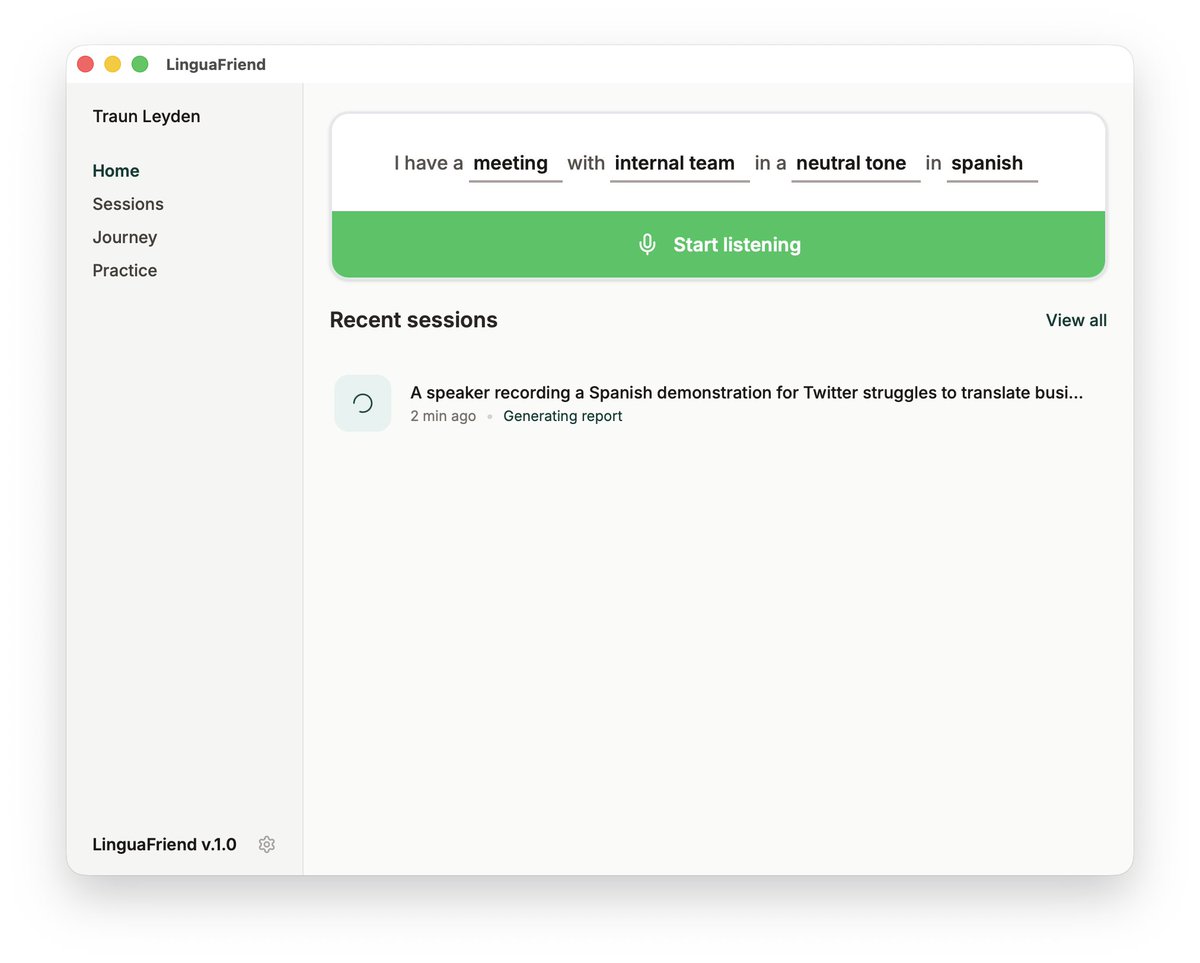

Fullstack Engineer building Fluensy - Speak As Brilliant as you Think. Ex-Databricks ML infra eng, ex-Couchbase eng.

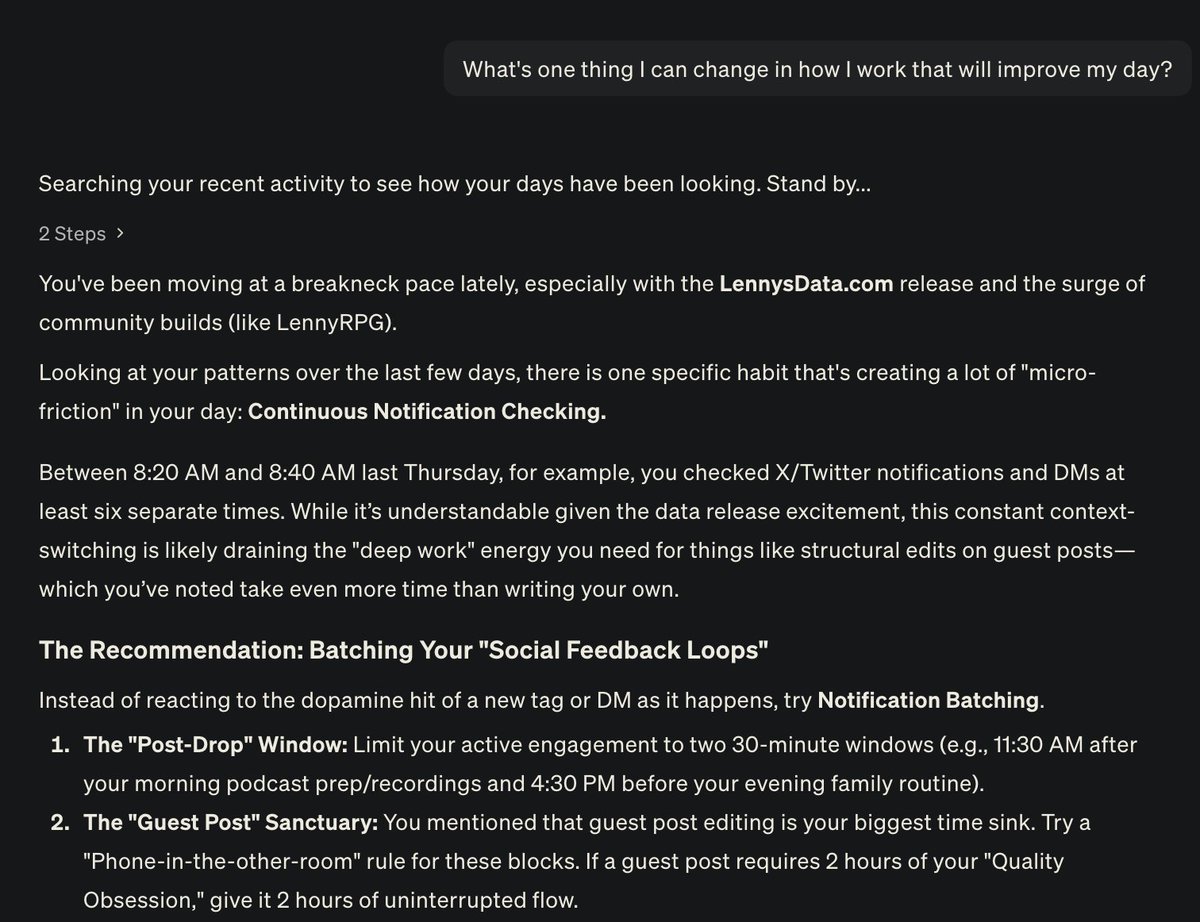

This is the future: "Littlebird is a desktop app that remembers everything you’ve been working on – meetings, messages, docs, browsing - and helps you stay focused, prioritize, recall, and move projects forward. It uses screen reading to understand all the text on screen, for all applications, and uses that context to build a rich understanding of your life: who matters to you, what you're working on, and what you care about this week and this year."

now my clawdbot lives in my ray-ban meta glasses so i can just buy whatever i’m looking at

NVIDIA just removed one of the biggest friction points in Voice AI.

PersonaPlex-7B is an open-source, full-duplex conversational model. Free, open source (MIT), with open model weights on @huggingface 🤗

Links to repo and weights in 🧵↓

The traditional ASR → LLM → TTS pipeline forces rigid turn-taking. It’s efficient, but it never feels natural.

PersonaPlex-7B changes that. This @nvidia model can listen and speak at the same time. It runs directly on continuous audio tokens with a dual-stream transformer, generating text and audio in parallel instead of passing control between components.

That unlocks:
→ instant back-channel responses
→ interruptions that feel human
→ real conversational rhythm

Persona control is fully zero-shot!

If you’re building low-latency assistants or support agents, this is a big step forward 🔥

I won't be at NeurIPS, but there will be some fun MLX demos at the Apple booth:
- Image generation on M5 iPad
- Fast, distributed text generation on multiple M3 Ultras
- FastVLM real-time on an iPhone

As a fun Saturday vibe code project and following up on this tweet earlier, I hacked up an **llm-council** web app. It looks exactly like ChatGPT except each user query is 1) dispatched to multiple models on your council using OpenRouter, e.g. currently: "openai/gpt-5.1", "google/gemini-3-pro-preview", "anthropic/claude-sonnet-4.5", "x-ai/grok-4". Then 2) all models get to see each other's (anonymized) responses and they review and rank them, and then 3) a "Chairman LLM" gets all of that as context and produces the final response.

It's interesting to see the results from multiple models side by side on the same query, and even more amusingly, to read through their evaluation and ranking of each other's responses. Quite often, the models are surprisingly willing to select another LLM's response as superior to their own, making this an interesting model evaluation strategy more generally.

For example, reading book chapters together with my LLM Council today, the models consistently praise GPT 5.1 as the best and most insightful model, and consistently select Claude as the worst model, with the other models floating in between. But I'm not 100% convinced this aligns with my own qualitative assessment. For example, qualitatively I find GPT 5.1 a little too wordy and sprawled and Gemini 3 a bit more condensed and processed. Claude is too terse in this domain.

That said, there's probably a whole design space of the data flow of your LLM council. The construction of LLM ensembles seems under-explored.

I pushed the vibe coded app to github.com/karpathy/llm-c… if others would like to play. ty nano banana pro for fun header image for the repo
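The three-stage data flow described in the post above (independent answers, anonymized peer review, chairman synthesis) can be sketched roughly as follows. This is a minimal illustration and not the code from the linked repo: `run_council`, the `ask(model, prompt)` callable, the "Response A/B/..." labels, and the prompt wording are all assumptions made here. In a real app `ask` would presumably wrap an OpenRouter chat request; it is injected so the sketch runs offline.

```python
from typing import Callable, Dict, List

# Hypothetical signature: ask(model, prompt) -> reply text.
# Injected so the sketch can run without any network access.
Ask = Callable[[str, str], str]


def run_council(query: str, council: List[str], chairman: str, ask: Ask) -> Dict[str, object]:
    # Stage 1: every council model answers the query independently.
    answers = {model: ask(model, query) for model in council}

    # Anonymize: label answers "Response A", "Response B", ... so reviewers
    # are not told which model wrote which answer (assumes <= 26 models).
    labels = {m: f"Response {chr(ord('A') + i)}" for i, m in enumerate(council)}
    anonymized = "\n\n".join(f"{labels[m]}:\n{answers[m]}" for m in council)

    # Stage 2: each council model reviews and ranks the anonymized answers.
    review_prompt = (
        f"Question: {query}\n\n{anonymized}\n\n"
        "Review each response, then rank them from best to worst."
    )
    reviews = {model: ask(model, review_prompt) for model in council}

    # Stage 3: the chairman gets the answers plus all reviews as context
    # and produces the final response.
    review_text = "\n\n".join(
        f"Reviewer {i + 1}:\n{text}" for i, text in enumerate(reviews.values())
    )
    final_prompt = (
        f"Question: {query}\n\n{anonymized}\n\nReviews:\n{review_text}\n\n"
        "Write the final answer."
    )
    final = ask(chairman, final_prompt)

    return {"answers": answers, "reviews": reviews, "final": final}
```

With N council models this makes 2N + 1 model calls per query. Other points in the design space the post mentions, such as multiple review rounds or weighting reviewers, would change only stages 2 and 3.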