Asher Cohen 🤖🤳

23.2K posts

@code_tank_dev

Developer, traveller, permaculturer. Ever-growing my knowledge! I use JS/TS to solve problems and architect solutions to make people's lives easier!

I'm fully convinced that LLMs are not an actual net productivity boost (today). They remove the barrier to getting started, but they create increasingly complex software which does not appear to be maintainable. So far, in my situations, they appear to slow down long-term velocity.

My monthly cost of living in Switzerland 🇨🇭
- 🏠 $5000 rent
- 📝 $1200 health insurance
- ⚡ $100 utilities
- 📱 $180 phone + internet
- 🚌 $500 Uber + public transport
- 🥗 $2000 food/groceries
- 📦 $1000 various orders (food, restaurants, etc)

Total: ~$10,000/month. We're a family of 5. My wife and I +3 children. And we live in the best country in the world (but also the most expensive one).

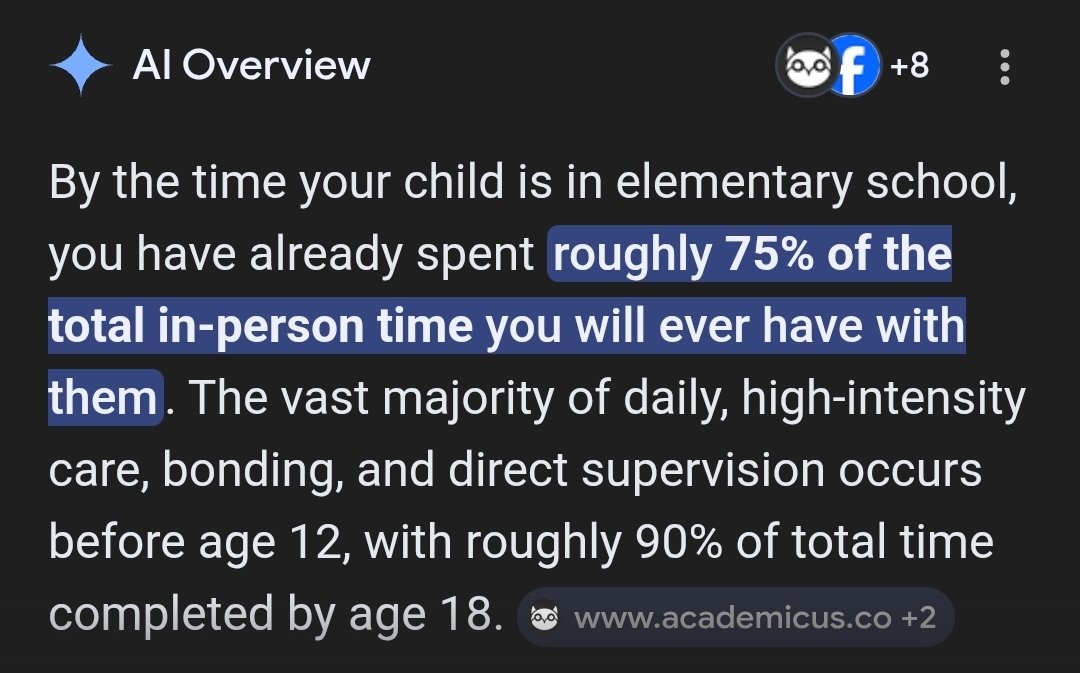

🚨 SAM ALTMAN: “People talk about how much energy it takes to train an AI model … But it also takes a lot of energy to train a human. It takes like 20 years of life and all of the food you eat during that time before you get smart.”

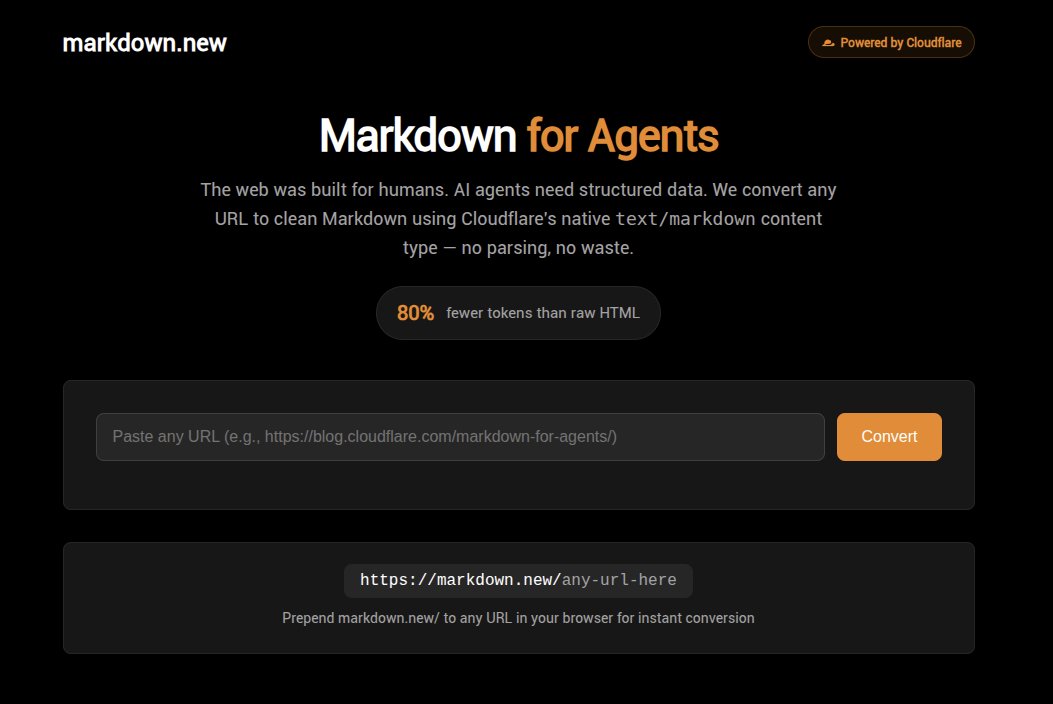

I built markdown.new. Put markdown.new before any URL → get clean Markdown back. Cloudflare's Markdown for Agents is great, but it only works for enabled sites. markdown.new works for ANY website on the internet. 80% fewer tokens. Also converts PDFs, images, audio. Free. No signup. markdown.new
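To make "put markdown.new before any URL" concrete, here is a minimal sketch of building such a prefixed address. The exact format (host, a slash, then the full original URL including its scheme) is an assumption read from the post, not a documented API, and `to_markdown_url` is a hypothetical helper name. The sketch only builds the string and does not fetch anything.

```python
def to_markdown_url(url: str, service: str = "https://markdown.new") -> str:
    """Prefix an absolute URL with the markdown.new host.

    Assumption: the service accepts "<service>/<original URL>";
    the post only says to put markdown.new before the URL.
    """
    if not url.startswith(("http://", "https://")):
        raise ValueError("expected an absolute http(s) URL")
    # Drop any trailing slash on the service host to avoid a double slash.
    return f"{service.rstrip('/')}/{url}"


print(to_markdown_url("https://example.com/docs"))
```

If the service expects a different shape (for example, the target URL without its scheme), only the return line needs to change.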

Elon Musk predicts that AI will bypass coding entirely by the end of 2026 and just create the binary directly. AI can create a much more efficient binary than can be done by any compiler. So just say, "Create optimized binary for this particular outcome," and you actually bypass even traditional coding. Current: Code → Compiler → Binary → Execute. Future: Prompt → AI-generated Binary → Execute. Grok Code is going to be state-of-the-art in 2–3 months. Software development is about to fundamentally change.

In 18 months we went from:
- AI is bad at math
- Okay but it’s only as smart as a high school kid
- Sure it can win the top math competition but can it generate a new mathematical proof
- Yeah but that proof was obvious if you looked for it…

Next year it will be “Sure but it still hasn’t surpassed the complete output of all the mathematicians who have ever lived”

New Engineering blog: We tasked Opus 4.6 using agent teams to build a C compiler. Then we (mostly) walked away. Two weeks later, it worked on the Linux kernel. Here's what it taught us about the future of autonomous software development. Read more: anthropic.com/engineering/bu…