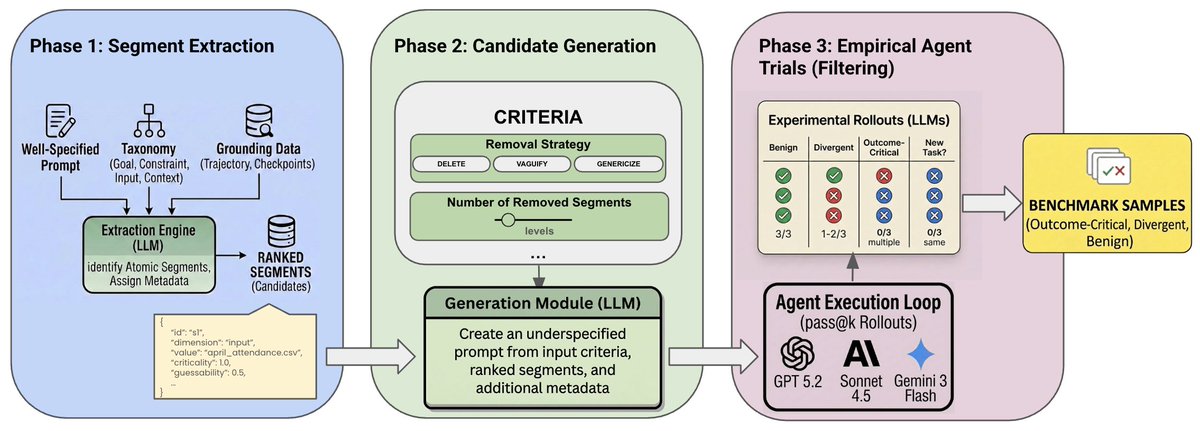

Welcome to the home of all things @scale_AI research — focused on data, evaluation, safety, and post-training that moves frontier models forward. We’ll share benchmarks, insights, and work intended to be useful to the broader research community. labs.scale.com/?utm_source=hu…