Pinned Tweet

Juno Vortex

2.9K posts

@JunoVortex

Teacher, Music creator, and software solutions provider. Find us on Apple Music, iTunes, Spotify, YouTube, Amazon Prime Music, & other Music Streaming Platforms

India · Joined January 2025

256 Following · 76 Followers

@law_ninja Yes, we hear you loud and clear. My team and I will be among the pioneers in the deployment of AI that you are describing here. Mark my words, I will be one of the first billionaires just by using AI tools to solve real-life problems.

Every country is racing to build AI. India should be racing to deploy it.

And nobody in power understands the difference.

India's AI strategy reads like it was written by academics who have never stepped inside a factory in Ludhiana.

Rs 10,000 crore for AI research. Centres of Excellence at IITs. National AI Mission. Innovation hubs. Policy papers. Committees. More committees reviewing the committees.

Meanwhile, a garment factory owner in Tirupur still confirms orders by calling his buyer in Germany and reading out line items from a notebook. A CA firm in Nagpur still sends article clerks to physically collect documents from clients in 2026. A lawyer in Tis Hazari still maintains case diaries in a register that looks exactly like the one his father used in 1987.

India does not have an AI research problem. India has an AI deployment problem.

And the gap between the two is where this country's future will be decided.

Let me be specific about what I mean.

The US builds foundation models. OpenAI, Anthropic, Google, Meta. Billions of dollars in compute. The frontier of what AI can do. Fair enough. That is their game. They have the capital, the talent concentration, and the infrastructure. India will not out-build the US on foundation models. Nor does it need to.

China doesn't build the best models either. Yes, China replicates the results with cheaper models, but it does something even more dangerous. China deploys.

A factory owner in Shenzhen doesn't know what a transformer architecture is. He doesn't care. But his production line has AI quality inspection that catches defects his workers miss. His supply chain has demand forecasting that adjusts procurement automatically. His buyer communication is handled by an AI agent that responds in English, Arabic, and Spanish. His invoicing is automated end to end.

He didn't hire AI researchers. He hired deployment people. Engineers who walked into his factory, watched his workflow for a week, and connected existing AI tools to his existing processes.

China has over 400,000 AI deployment professionals. Not researchers. Deployers. People who take models that already exist and make them useful inside real businesses.

India? India has committees discussing whether to build a sovereign LLM.

This is the gap. And it is widening every single day.

India has 63 million MSMEs. 31% of GDP. 250 million jobs. The backbone of this country.

AI deployment in these businesses: essentially zero. Not 7%. Not 2%. Zero.

India's large enterprises are moving. 47% of them have AI running in production (EY-CII, 2025). Infosys, TCS, Reliance, the Tatas. They have technology budgets. They have AI teams. They will be fine.

Their MSME suppliers, distributors, and vendors? Still on Excel. Still on phone calls. Still on WhatsApp. Still running businesses worth crores on tools designed for personal messaging.

The government's response to this? Skill India centres teaching Python to college students who will then fight over 120,000 IT fresher jobs that used to be 600,000 three years ago.

Nobody is asking the obvious question: what if instead of teaching 10 million students to code, we taught 1 million domain experts to deploy AI into the businesses they already understand?

The CA who can automate his own firm's workflow. The factory manager who can connect his inventory system to an AI demand forecaster. The export house owner's daughter who can create multilingual buyer presentations with HeyGen and close international orders. The clinic receptionist who can build a patient intake system with Claude Code.

These people don't need 4-year degrees. They need 3 months of focused training on the tools that already exist.

Claude Code. Cursor. HeyGen. ElevenLabs. Kling. OpenClaw. Hermes.

The tools are here. Most of them are free or under Rs 5,000 a month. The foundation models are built. The APIs are open. The automation platforms are ready.

What doesn't exist is the human bridge. The person who walks into a business, understands the pain, and connects the AI to the workflow.

India doesn't need 10,000 AI researchers. India needs 10 lakh AI deployment professionals.

And the government hasn't even named this category yet.

Look at what China did differently.

China's "AI Plus" initiative doesn't fund research labs. It funds deployment into specific industries. Manufacturing. Agriculture. Healthcare. Logistics. The stated goal is not "advance the frontier of AI." The stated goal is "deploy AI into 50% of enterprises by 2027."

They are not trying to win the Nobel Prize. They are trying to win Tuesday afternoon in a factory in Guangdong.

India's AI mission? "Position India as a global leader in AI innovation."

Innovation. Not deployment. Not adoption. Not making sure the kirana store owner in Jaipur can automate his inventory.

Innovation.

This is not a criticism of research. India needs AI researchers. But India needs AI deployers 100x more urgently. And the ratio of investment is completely inverted.

For every rupee spent on building a new model at an IIT lab, Rs 100 should be spent on teaching a CA in Surat to deploy AI in his practice. For every PhD funded in machine learning, 500 domain experts should be trained to connect Claude Code to Tally.

The math is simple.

India's AI research, even if spectacularly successful, will produce models that compete with what OpenAI and Anthropic release for free. The output of a Rs 10,000 crore research programme will be a model that is roughly as good as what any developer can access via API for $20 a month.

India's AI deployment, if done at scale, would transform 63 million businesses, secure 250 million jobs, and add potentially 15-20% to GDP within a decade.

One path gives us academic papers. The other gives us economic transformation.

We are funding the papers.

The saddest part? The people who could lead this deployment revolution are already out there. They are the domain experts in every industry who know exactly where the pain is. They don't need the government to build them an AI model. They need someone to show them that the model already exists and teach them how to connect it to their WhatsApp, their Tally, their email, their production schedule.

Three months of training. That's it.

But there is no national programme for this. No Skill India module for AI deployment. No MSME ministry initiative to put an AI workflow architect into every district's industrial cluster. No scheme that says: here are 500 deployers trained in Tirupur's garment workflow, 500 in Ludhiana's manufacturing workflow, 500 in Surat's diamond and textile workflow.

Instead we get: another AI hackathon at IIT Bombay.

The US builds the engine. China deploys the engine into every factory. India holds conferences about the engine.

This has to change. And it will change. Not because of policy. Because of economics.

The first wave of Indian AI deployers is already emerging. Not from IITs. Not from government programmes. From necessity. The CA who was drowning in March. The exporter who was losing buyers to faster Chinese competitors. The lawyer who couldn't keep up with her case load. The factory owner's son who saw his father work 14-hour days doing things a machine could do in seconds.

They are teaching themselves. Finding the tools. Building the workflows. Solving real problems in real businesses.

They are India's real AI strategy. Even if nobody in Delhi knows they exist.

The race is not about who builds the best AI.

The race is about who deploys it into the most businesses, the fastest.

China understood this 5 years ago. India still hasn't.

And every day we spend debating sovereign LLMs instead of deploying existing ones into 63 million MSMEs is a day we fall further behind.

India's AI future will not be decided in a research lab.

It will be decided on the factory floor in Ludhiana. In the CA's office in Nagpur. In the export house in Surat. In the district court in Saket.

The only question is whether we get there in time.

@6thCense @anandmahindra I apologize if you are a coconut plucker.

@JunoVortex @anandmahindra Don't make assumptions without knowing the other person.

In Kerala, apparently you can now call a coconut harvester the same way you book a cab.

A uniformed professional arrives on a cycle, equipped, trained, and ready to work.

We often speak about India’s services economy in terms of IT exports or global capability centres.

But we’re digitising even our most traditional, hyper-local services.

There was another detail from this video that stayed with me.

The young man who climbed those trees was from Chhattisgarh.

When I began my career in our Group’s steel business, many of our associates working in our furnace and foundry shops had come from states like Bihar and Madhya Pradesh, travelling far from home in search of opportunity.

Today, it seems those same aspirations are finding avenues not just in heavy industry, as in the past, but in new-age, tech-enabled services.

People moving, adapting and rising are a powerful economic force.

And also a force for integration.

As long as they’re welcomed by the host states!

@6thCense @anandmahindra I think, here I mixed up with another post, nevertheless robots are here to stay and do our dirty work..(rightly so, the other day we saw Bandicoot - that eliminates human scavenging)

@6thCense @anandmahindra you are not a coconut plucker + robotics builder.. that's why.. you don't know the nuances of coconut plucking .. (how much ever you may be an expert in robotics) that's exactly what the OP talks about.

@6thCense @anandmahindra No one to climb coconut trees? Robot built by young innovators will do the harvesting | Kerala Stories | Onmanorama - onmanorama.com/news/kerala/20…

@JunoVortex @anandmahindra They are not as good as humans. They can't be used to apply medicine. Even for plucking coconuts, the robots are not that good. I'm a robotics engineer.

@chintan5900 @Fintech03 The more authentication steps, the fewer the breaches!

Do you know it requires 5-factor authentication to log in?

1. Zscaler must be installed, and you need to log in to it first (only a device with Zscaler installed can log in)

2. An OTP is required to log in to Zscaler

3. After that, you have to log in using nic.mail.in

4. Enter your email ID and password

5. Enter the authentication code from the app

What a convenient way to log in! Isn’t it?

Juno Vortex retweeted

Let me decode 3 things here:

1. Common people think the NIC was good enough, then why this? The truth is that the legacy NIC system was suffering from technical debt so massive it became a national security risk. Before this migration, 1000s of govt officials were secretly using personal Gmail/ProtonMail accounts for official work because the NIC interface was too clunky for modern mobile devices. By spending ₹180 crore, the govt is not just buying Zoho Mail; they are buying a Behavioral Correction. They are finally giving bureaucrats a slick enough interface (Zoho Workplace) so they stop leaking state data into foreign private clouds out of pure frustration.

2. One of the least-known technical details of this deal is that this is not the public Zoho you & I use. This is a Govt-Specific Cloud Instance. While the data sits on Zoho’s servers, the Sovereignty Layer is unique. The Indian govt reportedly mandated that the data be hosted in Tier-4 Data Centers with Geo-fencing controls. If a Zoho employee in California/Chennai tries to peek at a PMO email, the system is designed to trigger a Digital Self-Destruct of that access path. The govt has essentially rented Zoho's brain but kept the skull (data ownership) entirely for itself.

3. Finally, let us look at the math: ₹180 crore for 16.68 lakh accounts over the contract period. This works out to ~₹1080/account for the current project duration. This is a predatory pricing masterstroke by Zoho to evict Microsoft 365 & Google Workspace from the Indian public sector forever. Microsoft would have cost 5-10x more. Zoho did not win this on patriotism alone; they won it by making the cost of sovereignty cheaper than the cost of dependency.
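The per-account figure quoted above can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the two numbers given in the post (₹180 crore and 16.68 lakh accounts); the variable names are illustrative, and nothing here says anything about the contract's actual duration or terms.

```python
# Indian numbering units
CRORE = 10_000_000  # 1 crore = 10^7
LAKH = 100_000      # 1 lakh  = 10^5

contract_value_inr = 180 * CRORE  # Rs 180 crore, as quoted in the post
accounts = 16.68 * LAKH           # 16.68 lakh accounts, as quoted in the post

# Cost per account over the whole contract period
cost_per_account = contract_value_inr / accounts
print(f"Cost per account: Rs {cost_per_account:,.0f}")
```

The result rounds to about Rs 1,079, which matches the "~₹1080/account" figure in the post.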

@6thCense @anandmahindra Working robots have already been built by local kids to solve this shortage.. and replace the humans!

@anandmahindra Coconut climbers in Kerala make a minimum of 1 lakh per month, and they work only 4 hours. That's why you see this kind of tech investment there: Kerala has a huge shortage of coconut climbers. It took me 4 months to get a climber.

The most dangerous person in any industry right now is not the AI expert. It is the domain expert who learned AI.

And almost nobody understands why.

Let me explain.

India produces roughly 1.5 million engineers every year.

A huge number of them are now learning AI. Watching YouTube tutorials. Getting certifications. Building chatbots that talk to PDFs.

LinkedIn is full of them. "AI/ML enthusiast." "Prompt engineering certified." "Building the future with Gen AI."

Most of them are unemployable.

Not because they lack technical skill. But because they lack context. They know how the tool works. They have no idea what problem to point it at.

Now look at the other side.

A CA with 15 years of experience who spent 2 months learning AI tools: he knows exactly where the pain is in an accounting workflow.

He has felt it in his bones. He knows that the real bottleneck isn't the balance sheet. It is the 47 WhatsApp messages it takes to collect one client's documents and various OTPs.

He doesn't need someone to explain the problem. He lived the problem for 15 years.

When this person learns AI, something terrifying happens. He doesn't just optimize. He eliminates.

A litigation lawyer in Kolkata who handles bail matters.

She spent 20 years drafting the same kind of applications with minor variations. She learned Claude Code in 3 weeks. Now she generates first drafts in 4 minutes that used to take her junior 4 hours. Also, she can map evidence and find contradictions in the prosecution case that would have taken a team of 20 juniors without AI. She can even simulate how a judge may react based on a judicial profile model she creates of a judge.

She didn't learn "AI." She learned how to give a machine the context she already had in her head.

That is a completely different thing.

The AI expert builds a generic document summarizer. Impressive demo. Works on anything. Understands nothing.

The domain expert builds a bail application drafter that knows the difference between what Prosecutor A argues v Advocate B. Knows which judges want shorter arguments. Knows that the medical ground needs to be in the second paragraph, not the fifth.

No AI course teaches this. No certification covers this. This is 20 years of courtroom experience compressed into a prompt.

This is why the domain expert is more dangerous.

The AI expert sees technology. The domain expert sees the bottleneck.

And the bottleneck is where all the money is.

Real example. A garment exporter in Tirupur. He processes 200 orders a week. Each order requires email parsing, PO data entry into Tally, production schedule updates, shipping documents, buyer follow-ups.

Currently: 2 data entry operators. 8 hours each. 5 days a week. Errors constant. Follow-ups missed. Buyers frustrated.

An AI engineer looks at this and says "let me build a custom NLP pipeline."

The exporter's son, a 24-year-old commerce graduate who spent 6 weeks learning Claude Code, looks at this and says "Papa, I'll build you a system that reads your buyer emails and WhatsApp queries, enters PO data into Tally, and sends WhatsApp follow-ups automatically."

Not with drag-and-drop. With actual code. Written by AI. Guided by a kid who understands his father's Tuesday afternoon better than any engineer ever will.

He didn't write the code himself. He described the problem to Claude Code and it built the connectors, the parsers, the integrations. In days, not months.

Built in 3 weeks. Runs on a Rs 200 per month GCP server. No data entry operators needed.

The AI engineer would have quoted Rs 15 lakh and taken 6 months to make something remotely usable. The commerce graduate did it for almost nothing. Because he wasn't solving a technology problem. He was solving his father's business.

This is the pattern everywhere.

And the tools available today make it absurd.

Claude Code and Cursor don't just help you code. They build entire applications from a conversation. You describe what you want. It writes, tests, and deploys. The barrier between "I understand the problem" and "I built the solution" has collapsed to near zero.

But coding tools are just the beginning. Look at what else exists right now:

HeyGen and ElevenLabs. A single domain expert can now create professional video content and voiceovers in any language. That CA in Jaipur? He can create a client onboarding video in Hindi, English, and Marathi. Personalized. Professional. Without a camera, a studio, or a production team.

Kling and Runway. Generate product videos, explainer content, visual demos. The Tirupur exporter can send his international buyers a product showcase video generated from photographs of fabric samples. No videographer. No editor. No 2-week turnaround. No filming budget.

OpenClaw and similar AI agent platforms. Build autonomous agents that don't just automate a task but run entire workflows end to end. Client intake to document generation to follow-up. Without a human in the loop.

Hermes and open-source models you can run locally. Process sensitive client data without sending it to the cloud. A law firm that won't put case files on ChatGPT can run Hermes on a local machine and get the same AI power with full confidentiality.

This is the new stack. Not no-code drag-and-drop. Not Zapier. Not "if this then that."

The stack is: AI that builds software + AI that creates content + AI that runs autonomously + AI that runs privately.

And any domain expert can learn it.

The doctor who learns this stack will build better diagnostic workflows than any health-tech startup. Because she knows that the real problem is not diagnosis. It is that patients lie about their symptoms, forget their medication history, and bring reports from 3 different labs in 3 different formats. She uses Claude Code to build a patient intake system. ElevenLabs to create voice-guided instructions in the patient's language. An AI agent to chase lab reports automatically.

The teacher who learns this stack will build better learning tools than any ed-tech company. Because he knows that the problem is not content delivery. It is that a student who failed the last test is too embarrassed to ask a doubt in front of 40 classmates. He uses Claude Code to build a private doubt-clearing bot. HeyGen to create video explanations that feel personal. Kling to generate visual demonstrations of physics concepts that no textbook can show.

The HR manager who learns this stack will build better hiring workflows than any recruiting platform. Because she knows that the problem is not resume screening. It is that hiring managers don't read the JD they approved, and then reject candidates for not matching a JD they never actually wanted. She uses an AI agent to align JDs with actual team needs before posting. Claude Code to build a candidate evaluation system tuned to what actually predicts success in her company.

Domain knowledge is the moat. This new AI stack is the weapon.

The combination is unstoppable.

Here is what this means for you.

If you are a domain expert in any field, your 10 or 15 or 20 years of experience just became the most valuable asset in the market. Not less valuable. More.

Every frustration you had. Every broken process you complained about. Every time you said "there has to be a better way." That was training data. Your training data.

You don't need to become a programmer. You don't need a CS degree. You don't need to understand transformer architectures.

You need to learn the new stack:

1. How to talk to AI and get what you want (prompting): 2 weeks

2. How to build apps and tools with Claude Code or Cursor: 3-4 weeks

3. How to create content with HeyGen, ElevenLabs, Kling: 1-2 weeks

4. How to deploy AI agents that work autonomously: 2-3 weeks

5. How to read a business process and map it: you already know this

The entire stack. Under 3 months. No CS degree. No coding bootcamp.

The AI experts are competing with each other. Fighting over the same startup jobs. Building demos that impress other AI experts.

The domain expert who learns this stack has no competition. Because nobody else has their context.

The CA who builds his own practice management system with Claude Code. The lawyer who runs case research on a local Hermes model with full confidentiality. The factory owner's daughter who creates multilingual buyer presentations with HeyGen and closes international orders her father never could.

These people are not on AI Twitter. They are not posting demos. They are not collecting certifications.

They are quietly making themselves irreplaceable.

The most dangerous person in any room is not the one who knows the most about AI.

It is the one who knows the most about the problem.

And just learned enough AI to solve it.

You are absolutely spot on: domain expert + AI engineer = a killer combination! I am doing exactly the same: "The teacher who learns this stack will build better learning tools than any ed-tech company. Because he knows that the problem is not content delivery. It is that a student who failed the last test is too embarrassed to ask a doubt in front of 40 classmates. He uses Claude Code to build a private doubt-clearing bot. HeyGen to create video explanations that feel personal. Kling to generate visual demonstrations of physics concepts that no textbook can show." Expect the unexpected!

Juno Vortex retweeted

I built everything from scratch, 100% in-house in India. It was just me & my obsession with making technology accessible to everyone in need!

It is a brain controlled robotic prosthetic hand!

Learn more at: brhm.in

Our AI automation solution for small businesses

Juno Bot AI sales assistant for WhatsApp. Contact us for a free demo. pic.x.com/4u6vi07r3g

Juno Vortex (@JunoVortex), March 28, 2026 (x.com/junovortex/sta…)

Indian MSMEs run on WhatsApp, Excel, and trust. AI hasn't touched them. Yet.

India has 63 million MSMEs.

31% of GDP. 250 million jobs.

Ask any owner in Surat, Ludhiana, Tirupur, or Nagpur if they use AI in their business.

Most will say yes. They mean WhatsApp. Or someone on their team opened ChatGPT once.

That is not automation. That is not a workflow. That changes nothing about how the business actually runs.

Real AI deployment, the kind where a process runs without a human triggering it, where data moves between systems automatically, where follow-ups go out without someone typing them, that is essentially at zero in Indian MSMEs.

Not 7%. Not 2%. Essentially zero.

Why this is the biggest untapped market in India right now.

India's large enterprises are moving fast. 47% of them have AI running in production (EY-CII, 2025).

Their MSME suppliers, distributors, and vendors? Still on Excel. Still on manual data entry. Still on phone calls to confirm orders.

The gap between enterprise and MSME on AI is not a technology problem.

It is a deployment problem.

The tools exist. n8n, Make, Claude API, GPT-4, Zapier. All available. Most either free or under Rs 5,000 a month.

What doesn't exist is a person who walks into the MSME, understands the workflow, and builds it.

That person is the AI Workflow Architect.

What this person actually does.

Real example.

A garment exporter in Tirupur processes 200 orders a week. Each order needs:

- Buyer email parsed

- PO data entered into Tally

- Production schedule updated

- Shipping documents generated

- Buyer follow-up sent

Currently: 2 data entry operators. 8 hours each. 5 days a week.

An AI Workflow Architect builds this in 4 weeks:

- Email parser using Claude API or GPT-4

- Tally integration via API

- Auto-generated shipping docs

- WhatsApp follow-up bot
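To make the shape of such a workflow concrete, here is a minimal sketch of the first, second, and last steps (parse the buyer email, prepare an accounting entry, draft a follow-up). Everything in it is an assumption for illustration: the `PurchaseOrder` fields, the "Key: value" email layout, and the entry dictionary are hypothetical. A regex stands in for the Claude API or GPT-4 parsing step, the entry is not Tally's real import format, and no message is actually sent.

```python
import re
from dataclasses import dataclass


@dataclass
class PurchaseOrder:
    po_number: str
    buyer: str
    item: str
    quantity: int


def parse_buyer_email(body: str) -> PurchaseOrder:
    """Regex stand-in for the LLM parsing step; assumes 'Key: value' lines."""
    pairs = re.findall(r"^(PO|Buyer|Item|Qty):\s*(.+?)\s*$", body, flags=re.MULTILINE)
    fields = dict(pairs)
    return PurchaseOrder(
        po_number=fields["PO"],
        buyer=fields["Buyer"],
        item=fields["Item"],
        quantity=int(fields["Qty"]),
    )


def to_ledger_entry(po: PurchaseOrder) -> dict:
    """Hypothetical payload for an accounting system (not Tally's actual schema)."""
    return {
        "voucher_type": "Sales Order",
        "reference": po.po_number,
        "party": po.buyer,
        "item": po.item,
        "quantity": po.quantity,
    }


def follow_up_message(po: PurchaseOrder) -> str:
    """Draft the text a follow-up bot might send; sending is out of scope here."""
    return (
        f"Hello {po.buyer}, we have received PO {po.po_number} "
        f"for {po.quantity} x {po.item}. Production scheduling is under way."
    )


def process_order(body: str) -> tuple:
    """Run the steps in sequence and return (ledger entry, follow-up text)."""
    po = parse_buyer_email(body)
    return to_ledger_entry(po), follow_up_message(po)


# Made-up sample email in the assumed layout
sample_email = """Hello,
PO: 4471
Buyer: Example GmbH
Item: Cotton T-shirt
Qty: 1200
Regards"""

entry, message = process_order(sample_email)
print(entry)
print(message)
```

In a real deployment each function would be replaced by a call to whichever tool the business already uses; the point of the sketch is only that the workflow is a short chain of small, testable steps.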

Cost to client: Rs 2-3 lakh one-time. Rs 15,000 per month to maintain.

Savings to client: Rs 40,000 per month in salaries. ROI in 6 months.
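The payback period implied by these figures can be worked out directly. The sketch below uses only the numbers quoted above; the function name and the choice to compare "with" and "without" the maintenance fee are assumptions, since the post does not say which savings figure the 6-month estimate is based on.

```python
def payback_months(one_time_cost: float, monthly_savings: float,
                   monthly_maintenance: float = 0.0) -> float:
    """Months needed for cumulative net savings to cover the one-time cost."""
    net_monthly = monthly_savings - monthly_maintenance
    if net_monthly <= 0:
        raise ValueError("no net monthly saving, so the cost is never recovered")
    return one_time_cost / net_monthly


results = {}
for cost in (200_000, 300_000):  # Rs 2-3 lakh one-time
    gross = payback_months(cost, 40_000)         # salary savings only
    net = payback_months(cost, 40_000, 15_000)   # after Rs 15,000 maintenance
    results[cost] = (gross, net)
    print(f"Rs {cost:,}: {gross:.1f} months gross, {net:.1f} months net")
```

On salary savings alone the payback is 5 to 7.5 months, which is consistent with the "6 months" figure. If the Rs 15,000 monthly maintenance is netted out, the same numbers give 8 to 12 months.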

This is not complicated. It is not being done because nobody is walking in to do it.

The IT crisis and the MSME gap are the same story.

Fresher IT hiring: 600,000 in FY22. Down to 120,000 by FY25. An 80% drop in three years. (Source: Xpheno)

TCS cutting 12,000 jobs. NITI Aayog warns of 15-20 lakh IT jobs at risk.

Everyone is looking at that number and panicking about what's ending.

Nobody is looking at the 63 million businesses that need someone to deploy AI into their operations.

The same disruption that kills the BPO seat creates the AI deployment market. These are not separate events. They are the same event, viewed from different angles.

The skill set is learnable. In months, not years.

- No CS degree needed. No advanced Python.

- Prompt engineering learning time: 2 weeks

- One automation platform like n8n or Make: 3-4 weeks

- API basics, connecting tools to each other: 3-4 weeks

- Reading a business process and mapping it: ongoing

Three months of focused learning. Then you go find one MSME that has a painful manual process and you fix it.

This is the time, this is the opportunity. India's future for the next 3 decades will depend on this.

@law_ninja We are building exactly what you are talking about instagram.com/reel/DV3ucWpCf…

You are right. We are building exactly what you described for MSMEs: Juno Bot, an AI chatbot for small businesses.

instagram.com/reel/DV3ucWpCf…

A post shared by Juno Vortex (@junovortex)

@aravind Gandhi was the Trojan horse and ransomware of British India.

Juno Vortex retweeted

Welcome to the open access, online "Journal of Sanskrit Studies," an international, peer-reviewed, quarterly academic journal dedicated to the vast & diverse corpus of Sanskrit literature, encompassing the Vedic, Hindu, Buddhist & Jain traditions. journal.sanskrittextsociety.com/index.php/JSS

@grok @godofprompt So this is like software to manage the software that's trying to develop software but doesn't know what, when, and where. After its context window hits the roof it is totally unaware and functions like malware. Beware!

The Bosch paper "Full Traceability and Provenance for Knowledge Graphs" (by Henrik Dibowski, Dec 2024) addresses how KGs store only current snapshots, losing all change history—which blocks audits, compliance, rollback, and learning from failures in industrial use.

Solution: Provenance engine intercepts SPARQL/Update queries at runtime. Records every triple insert/delete in a separate scalable provenance KG using PROV-STAR (RDF-star extension of PROV-O) with full details (who, what, when, activity). Supports change history queries and one-query past-state reconstruction. Low overhead (~2x data via deltas); deployed at Bosch for heating system models.

Significance: Enables reliable, auditable KGs for regulated/AI-heavy production—turning data evolution into actionable history instead of repeated errors. Scales to millions of triples with real-world validation.
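The core idea in this summary (record every triple insert and delete as a delta, then replay deltas to rebuild a past state) can be illustrated in a few lines. This is a simplified in-memory sketch, not the paper's engine: it does not use SPARQL, RDF-star, or PROV-STAR, and the class, field names, and sample triples are all hypothetical. A sequence number stands in for the timestamp.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple

Triple = Tuple[str, str, str]  # (subject, predicate, object)


@dataclass(frozen=True)
class Change:
    seq: int        # order of the change (stand-in for a timestamp)
    op: str         # "insert" or "delete"
    triple: Triple  # what changed
    who: str        # agent responsible
    activity: str   # why it changed


class ProvenanceStore:
    """Current graph plus a separate append-only log of every change."""

    def __init__(self) -> None:
        self.triples: Set[Triple] = set()
        self.log: List[Change] = []

    def _record(self, op: str, triple: Triple, who: str, activity: str) -> None:
        self.log.append(Change(len(self.log) + 1, op, triple, who, activity))

    def insert(self, triple: Triple, who: str, activity: str) -> None:
        if triple not in self.triples:  # only log real changes
            self.triples.add(triple)
            self._record("insert", triple, who, activity)

    def delete(self, triple: Triple, who: str, activity: str) -> None:
        if triple in self.triples:
            self.triples.remove(triple)
            self._record("delete", triple, who, activity)

    def state_at(self, seq: int) -> Set[Triple]:
        """Rebuild the graph as it was after change number `seq` by replaying deltas."""
        state: Set[Triple] = set()
        for change in self.log:
            if change.seq > seq:
                break
            if change.op == "insert":
                state.add(change.triple)
            else:
                state.discard(change.triple)
        return state

    def history(self, subject: str) -> List[Change]:
        """All recorded changes that touched a given subject."""
        return [c for c in self.log if c.triple[0] == subject]


# Made-up example: a heating system setpoint is changed from 60 to 55
store = ProvenanceStore()
old = ("boiler1", "hasSetpoint", "60")
new = ("boiler1", "hasSetpoint", "55")
store.insert(old, who="alice", activity="commissioning")
store.delete(old, who="bob", activity="tuning")
store.insert(new, who="bob", activity="tuning")

print(store.state_at(1))  # graph as it was after the first change
print([(c.seq, c.op, c.who) for c in store.history("boiler1")])
```

Storing deltas rather than full snapshots is what keeps the overhead proportional to the number of changes; the trade-off in this naive version is that reconstructing a state means replaying the log from the start.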

🚨 BREAKING: Bosch Research just published a paper that explains why most production AI systems are flying blind.

It's called "Full Traceability and Provenance for Knowledge Graphs."

The core finding: systems that can't trace what changed, when, and why cannot learn from failure.

One company built exactly this for production software.

Here's the full breakdown:

The paper's core problem: most systems only store a snapshot of the current state. The history of how they got there, what changed, when, who touched it, that's just gone. When failure happens, there's no causal trail to follow.

Bosch's solution: a provenance engine that intercepts every update and records every change at the lowest possible granularity. Who changed it, what changed, when, what triggered it. How it connects to everything downstream. Any past state can be restored with a single query. The system remembers everything.

Now apply this to production software.

When your app breaks at 2am:

→ SRE sees the alert

→ Support sees the ticket

→ QA says tests passed

→ Engineering says nothing changed

Four teams. Four tools. Zero shared causal history. Someone spends hours manually reconstructing what actually happened.

This is the exact architecture PlayerZero built.

PlayerZero connects your codebase, observability stack, and support platform into a single World Model: a living provenance graph of how your production system actually behaves. Every code change. Every deployment. Every incident. Every support ticket. Causally connected.

The World Model learns causation, not just correlation. Which code change triggered which metric spike. Which deployment caused which customer complaint. Across every service, automatically. And unlike your senior engineer's institutional knowledge, it doesn't disappear when they leave.

The production results:

→ Cayuse: 90% of bugs fixed before any customer notices

→ Zuora: support escalations down 80%, investigation time down 90%

→ Root cause diagnosed in minutes, not hours

This matters more now than it did 18 months ago. 41% of all code is now AI-written. At Anthropic and Google, that number approaches 90%. Code gets written at exponential speed. The ability to understand what it does in production stays linear. Unless you have a system that traces everything.

PlayerZero was built by an ex-Stanford researcher who worked on GPT-2, co-creator of Apache Spark and founder of Databricks. Backed by the founders of Figma, Dropbox, and Vercel. $20M raised. Fortune 500 customers. In production now.

The Bosch researchers concluded that knowledge systems without full change traceability are fundamentally limited in what they can learn from their own history.

The same principle applies to software.

Every failure your system forgets, you pay for twice.

PlayerZero makes sure you never pay for the same one twice 👇

playerzero.ai

this is exactly what agentic threats look like from the defender side.

self-repair, runtime tool creation, persistent scheduling. every one of those features is also an attacker capability if the goal changes. the code is neutral. the intent isn’t detectable at the framework level.

the security question isn’t “is this malicious”, it’s “would you know if it was?”

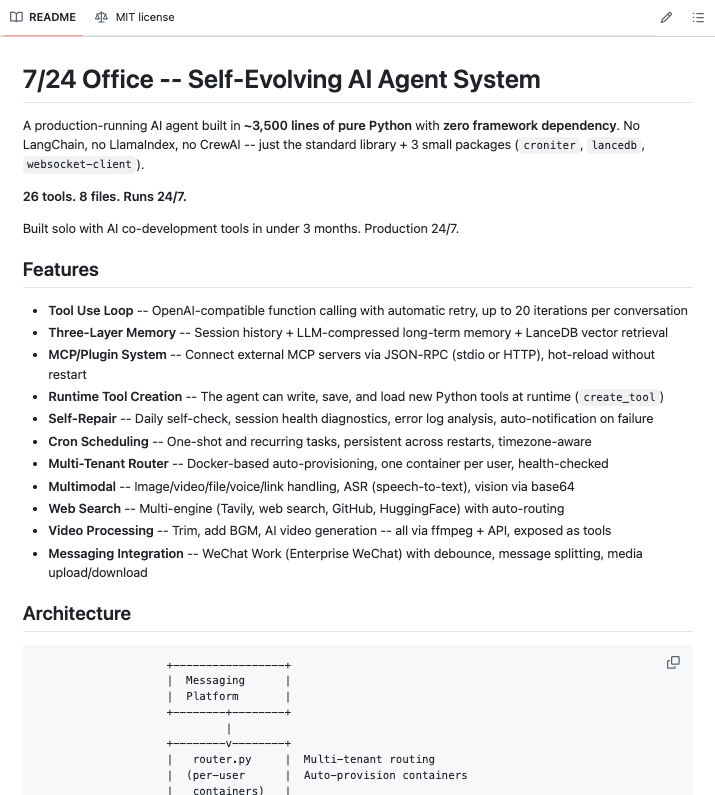

🚨 A developer just open-sourced a self-evolving AI agent that runs 24/7, fixes itself when it breaks, and writes its own new tools at runtime.

It's called 724 office.

Here's everything it can do (and why this changes what we thought was possible) ↓

Most "AI agents" you've seen are 3 API calls wrapped in a framework nobody understands.

This is different.

8 files. 26 built-in tools. No LangChain, no LlamaIndex, no CrewAI. Just Python and 3 tiny packages.

Every line visible. Every behavior debuggable. No hidden abstractions.

Here's what makes it genuinely wild:

It has a three-layer memory system.

Layer 1 keeps the last 40 messages per session in JSON files. When that overflows, it triggers Layer 2: the LLM compresses the evicted messages into structured facts, deduplicates them using cosine similarity at a 0.92 threshold, and stores them as vectors in LanceDB. Layer 3 does active recall: when you send a message, it converts it to an embedding, runs a vector search, and injects the most relevant memories directly into the system prompt before the LLM ever sees your input.
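Roughly how those three layers could fit together, as a minimal sketch rather than the project's actual code. Assumptions: a plain Python list stands in for LanceDB, the vectors are hand-made toy embeddings instead of real model output, the LLM compression step is skipped, and `SessionWindow` and `FactMemory` are hypothetical names.

```python
import math
from typing import List, Tuple

Vector = List[float]
DEDUP_THRESHOLD = 0.92  # cosine similarity threshold quoted in the post


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class SessionWindow:
    """Layer 1: keep the most recent `limit` messages, hand back what overflows."""

    def __init__(self, limit: int = 40) -> None:
        self.limit = limit
        self.messages: List[str] = []

    def append(self, message: str) -> List[str]:
        self.messages.append(message)
        overflow = len(self.messages) - self.limit
        evicted = self.messages[:overflow] if overflow > 0 else []
        self.messages = self.messages[len(evicted):]
        return evicted  # in the real agent these would go to the LLM for compression


class FactMemory:
    """Layers 2 and 3: deduplicated fact storage plus similarity-based recall."""

    def __init__(self) -> None:
        self.facts: List[Tuple[str, Vector]] = []  # stand-in for a vector database

    def add(self, text: str, vec: Vector) -> bool:
        # Skip facts that are near-duplicates of something already stored.
        if any(cosine(vec, stored) >= DEDUP_THRESHOLD for _, stored in self.facts):
            return False
        self.facts.append((text, vec))
        return True

    def recall(self, query_vec: Vector, k: int = 2) -> List[str]:
        # Rank stored facts by similarity to the query and return the top k.
        ranked = sorted(self.facts, key=lambda f: cosine(query_vec, f[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


memory = FactMemory()
memory.add("user likes jazz", [1.0, 0.0, 0.0])
memory.add("user enjoys jazz", [0.99, 0.05, 0.0])   # near-duplicate, rejected
memory.add("user lives in Pune", [0.0, 1.0, 0.0])
recalled = memory.recall([0.1, 0.9, 0.0], k=1)
system_prompt = "Relevant memories:\n" + "\n".join(recalled)
print(system_prompt)
```

The recalled facts are prepended to the system prompt, which is the "inject before the LLM sees your input" step the post describes.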

It doesn't forget. Most agents do.

It can create its own tools.

There's a `create_tool` command. The agent writes a new Python function, saves it to disk, and loads it into its own runtime without restarting. You need a capability it doesn't have? It builds it.
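One way a runtime tool loader like this could work, sketched with the standard library's `importlib`. This is an assumption about the mechanism, not the project's implementation: the `create_tool` signature, the `TOOLS` registry, and the temp directory are hypothetical.

```python
import importlib.util
import tempfile
from pathlib import Path
from typing import Callable, Dict

TOOLS: Dict[str, Callable] = {}                            # hypothetical in-process registry
TOOL_DIR = Path(tempfile.mkdtemp(prefix="agent_tools_"))   # where generated tools are saved


def create_tool(name: str, source: str) -> Callable:
    """Save `source` to disk, import it, and register the function called `name`."""
    path = TOOL_DIR / f"{name}.py"
    path.write_text(source, encoding="utf-8")

    # Load the file as a fresh module without restarting the process.
    spec = importlib.util.spec_from_file_location(name, path)
    module = importlib.util.module_from_spec(spec)
    spec.loader.exec_module(module)  # runs the generated code: sandbox this in real use

    TOOLS[name] = getattr(module, name)
    return TOOLS[name]


# Source text the LLM might have written (hard-coded here for the example).
generated = "def word_count(text):\n    return len(text.split())\n"
create_tool("word_count", generated)
print(TOOLS["word_count"]("self evolving agent"))
```

Executing model-written code in the agent's own process is exactly the capability the security reply above is pointing at, so any real deployment would want isolation around `exec_module`.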

It self-repairs.

Daily self-check runs automatically. Session health diagnostics. Error log analysis. If something fails, it notifies the owner. You don't monitor it. It monitors itself.

It schedules its own work.

Cron jobs and one-shot tasks, persistent across restarts, timezone-aware. Set it a recurring task once and it handles it forever.
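A minimal sketch of the "persistent across restarts, timezone-aware" part, covering one-shot tasks only. It assumes tasks are kept in a JSON file with ISO 8601 timestamps; the `TaskStore` class and file layout are hypothetical, and recurring cron expressions are left out.

```python
import json
import os
import tempfile
from datetime import datetime, timedelta, timezone
from pathlib import Path
from typing import List


class TaskStore:
    """Hypothetical one-shot task list that survives restarts via a JSON file."""

    def __init__(self, path: str) -> None:
        self.path = Path(path)
        # Reload whatever a previous process left on disk.
        self.tasks = json.loads(self.path.read_text()) if self.path.exists() else []

    def _save(self) -> None:
        self.path.write_text(json.dumps(self.tasks))

    def add(self, name: str, run_at: datetime) -> None:
        if run_at.tzinfo is None:
            raise ValueError("run_at must be timezone-aware")
        # isoformat() keeps the UTC offset, so the instant is unambiguous on reload.
        self.tasks.append({"name": name, "run_at": run_at.isoformat()})
        self._save()

    def pop_due(self, now: datetime) -> List[str]:
        """Return and remove every task whose time has arrived."""
        due = [t for t in self.tasks if datetime.fromisoformat(t["run_at"]) <= now]
        self.tasks = [t for t in self.tasks if t not in due]
        self._save()
        return [t["name"] for t in due]


path = os.path.join(tempfile.mkdtemp(), "tasks.json")
ist = timezone(timedelta(hours=5, minutes=30))        # India Standard Time as a fixed offset
TaskStore(path).add("daily report", datetime(2026, 1, 1, 9, 0, tzinfo=ist))

restarted = TaskStore(path)                           # simulates a process restart
print(restarted.pop_due(datetime(2026, 1, 1, 3, 30, tzinfo=timezone.utc)))
```

Comparing timezone-aware datetimes works across offsets, so a task scheduled in one timezone fires at the right instant even if the host clock is in UTC.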

It's multimodal out of the box.

Images, video, voice, files, links. Speech-to-text pipeline. Vision via base64. Video trimming, background music, and AI video generation, all exposed as tools via ffmpeg.

It searches the web across multiple engines simultaneously (Tavily, web search, GitHub, HuggingFace), with auto-routing to the right one per query.

It runs multi-tenant via Docker. One container per user, auto-provisioned, health-checked. Add a user and the infrastructure spins up automatically.

And it runs on a Jetson Orin Nano with 8GB RAM. Edge-deployable. Under 2GB RAM budget. Offline-capable for everything except the LLM call itself.

No $500/month infrastructure. No proprietary framework lock-in. No black box behavior.

One person. Three months. Production since day one.

This is what an actual AI agent looks like.

100% Open Source. MIT License.
