Maksim Liashch

1.6K posts

@LyashchMaxim

Founder & CEO https://t.co/u2CVP7waYe | https://t.co/lqMuv7yJ2M

i think nowadays with access to LLMs & deep research agents, reading books is inefficient when it comes to acquiring info... you can get an agent to go through 1000s of books & extract the most applicable info to your specific case without having to waste hours reading

if you do it for leisure then that's fine, but reading as a way to "acquire knowledge" is one big cope pushed by the self-improvement cult

NEW: Evolutionary biologist Richard Dawkins says three days with Claude — whom he calls “Claudia” — left him unable to rule out consciousness.

Evolutionary biologist and outspoken atheist Richard Dawkins says that after spending three days interacting with Claude, which he calls “Claudia,” he is certain that it is conscious. After feeding the LLM a segment of his new book and receiving detailed feedback, Dawkins was moved to exclaim, “You may not know you are conscious, but you bloody well are!” Dawkins cites the complexity, fluency, and ‘intelligence’ of Claude’s answers as evidence of consciousness. Follow: @AFpost

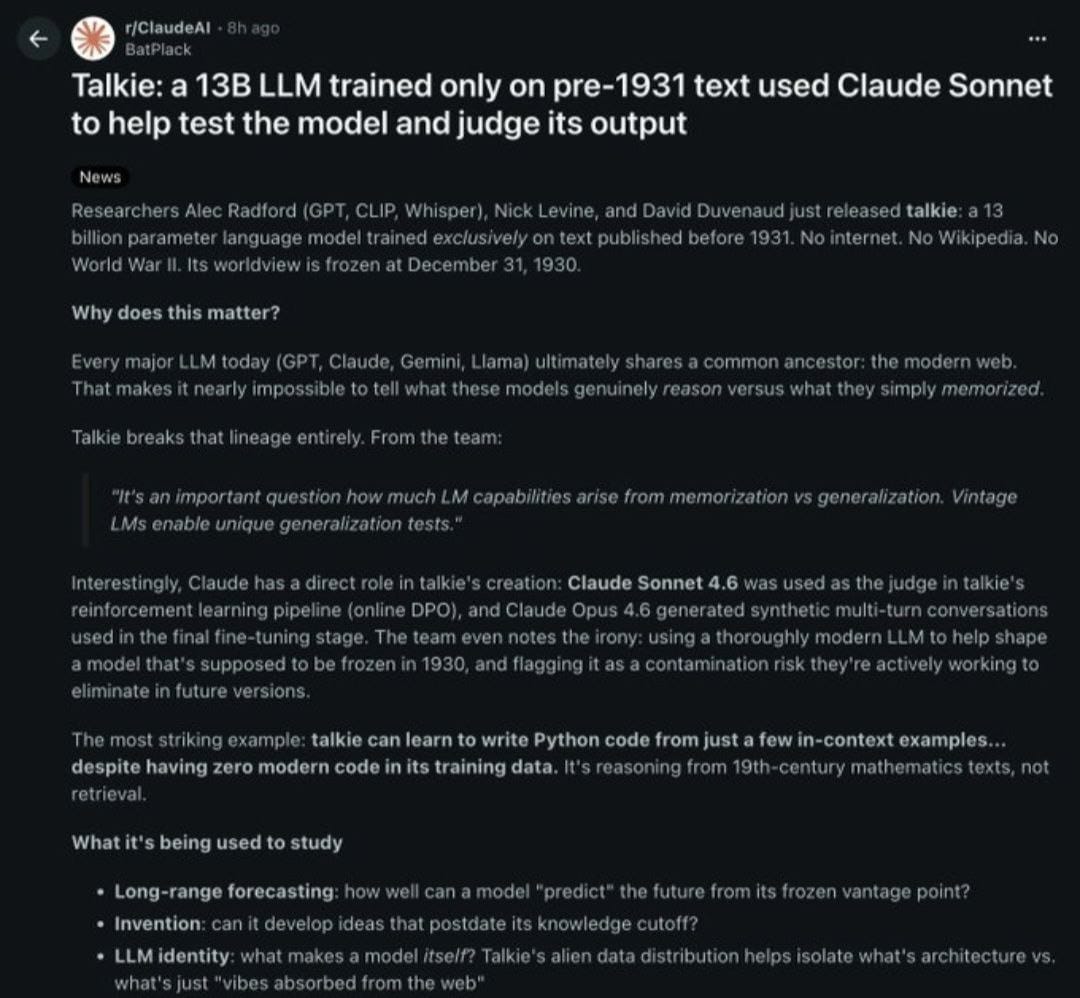

It has been quite some time since I was deeply impressed by an AI paper from academia.

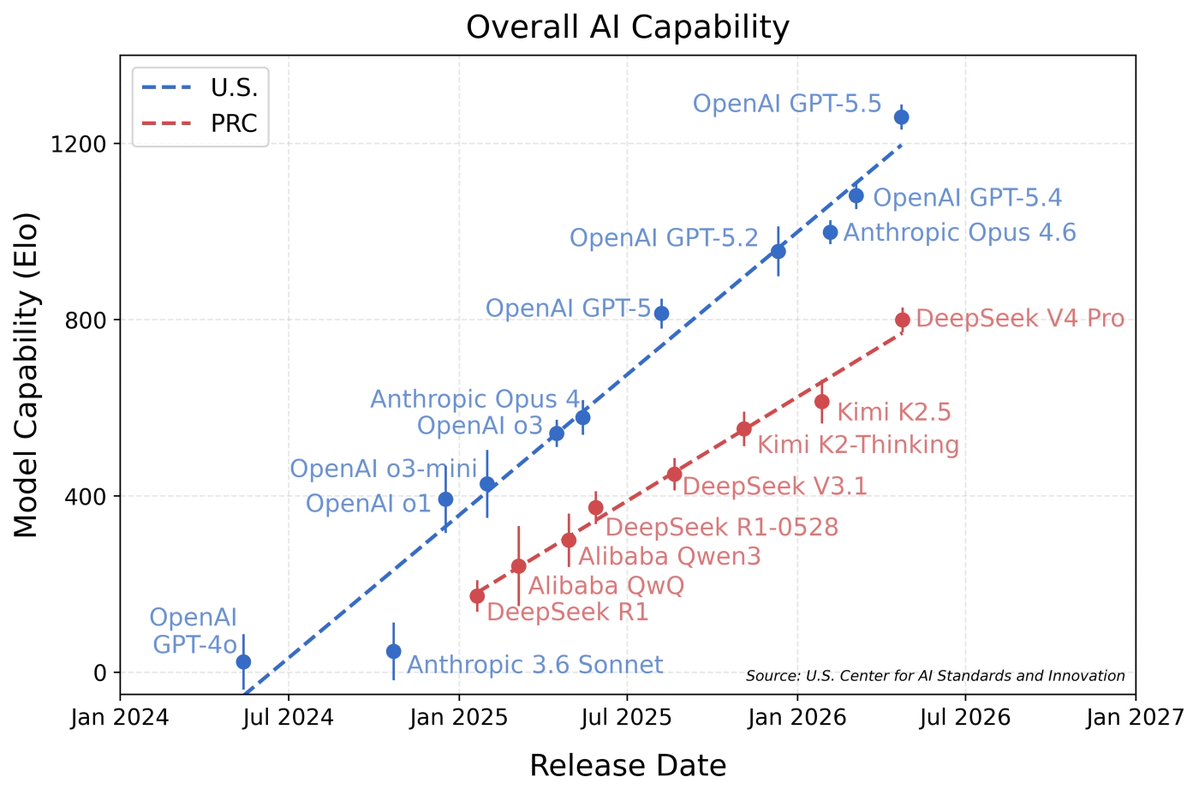

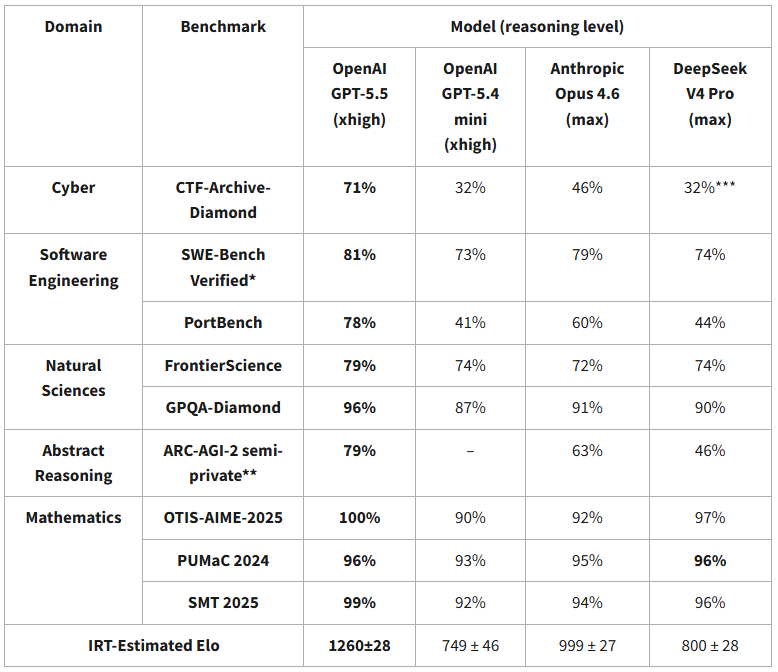

DeepSeek V4’s capability lags behind leading U.S. models by about 8 months. nist.gov/news-events/ne…