NVIDIA Asia Pacific

5.4K posts


@NVIDIAAP

The official Twitter channel for all NVIDIA news, products and events in Asia Pacific. Follow us on Facebook: https://t.co/EEBiWv6vEl

Joined August 2017

16 Following, 7.9K Followers

Couldn’t make it to San Jose?

It’s not too late to join in. Secure a FREE virtual pass granting access to 600+ #NVIDIAGTC sessions.

⌛ Register by Thursday, March 26 PT


A bold bet on parallel computing paved the way for the AI revolution.

We honor 20 years of #CUDA and thank the 6 million architects who turned a programming model into a global foundation for innovation. 💚

🔗 nvda.ws/4bndvVz

#NVIDIAGTC


Build deep agents for enterprise search with the NVIDIA AI‑Q blueprint and LangChain 🔍

Our new technical tutorial walks you through:

✅ Connecting agents to your enterprise data sources

✅ Configuring the agents with Nemotron models

✅ Monitoring and debugging performance in LangSmith

📝 nvda.ws/4rKY6mW


The future of AI isn't being built in a vacuum. 🤝

NVIDIA Founder and CEO Jensen Huang sat down with the builders from AMP PBC, @bfl_ml, @Cursor_ai, @LangChain, @MistralAI, @EvidenceOpen, @Perplexity_ai, @Reflection_AI, @Thinkymachines & @Allen_ai to discuss the rapid rise of open frontier models.

🔗 Learn more: nvda.ws/3NrGdvh

#NVIDIAGTC


🤖 From humanoids greeting guests to DJ arms spinning records and quadrupeds roaming the halls, #NVIDIAGTC is the spot for robotics.

🔗 nvda.ws/3NQcNaa


In 2006, NVIDIA made a bold bet on a parallel computing platform that would eventually redefine the limits of science and industry.

20 years later, CUDA serves as the foundation for 6 million developers worldwide—and we are only just beginning.

Join the next wave of architects and build what’s next on two decades of innovation.

🔗 nvda.ws/4uKctKU


NVIDIA Asia Pacific reposted

🏥 Don't teach robots inside hospitals - train the hospital in simulation first.

Project Rheo is a new blueprint for Hospital Automation & Physical AI. See how you can help solve the data gap in healthcare robotics, helping make deployment scalable.

✅ GR00T VLA Models: Train Physical AI using Vision-Language-Action (VLA) architectures for intuitive, multi-modal robot control.

✅ Isaac Sim & Lab-Arena: Develop in high-fidelity digital twins to master complex loco-manipulation and precision bimanual tasks.

✅ Cosmos-H Synthetic Data: Scale training datasets exponentially with generative AI to bridge the domain gap between simulation and real-world hospitals.

✅ RL Post-Training: Use Reinforcement Learning (PPO) to refine high-precision stages, ensuring robust performance in chaotic clinical environments.

#NVIDIAGTC


Imagine a robot that’s a jack of all trades but still an expert in its field. 🤖

With the open NVIDIA Isaac platform, robotics developers get the technology they need to build these generalist‑specialist robots and deploy them at scale.

These open models, libraries and frameworks can run in the cloud or at the edge on Jetson, and can be integrated into long‑running agents like OpenClaw to power continuous learning and real‑world autonomy. 🦞

Learn more ➡️ nvda.ws/4rJpB06

#NVIDIAGTC


AI agents are hitting a new bottleneck: context memory.

The newly renamed NVIDIA CMX context memory storage platform, based on the NVIDIA STX storage architecture announced at #NVIDIAGTC and powered by NVIDIA BlueField-4, creates a pod-level context tier built for KV cache with up to 5x higher TPS and 5x better power efficiency.

Explore how CMX unlocks scalable, AI-native context memory for agentic inference. nvda.ws/4cW71ya


AI factories require validating the entire infrastructure stack before deployment.

NVIDIA DSX Air enables teams to design and simulate AI infrastructure in the cloud across compute, networking, storage, and security before a single rack is installed.

By modeling configurations, automation workflows, and system integrations in advance, organizations can reduce integration risk and bring AI infrastructure online faster.

Learn how it works ➡️ nvda.ws/4bSnkuA


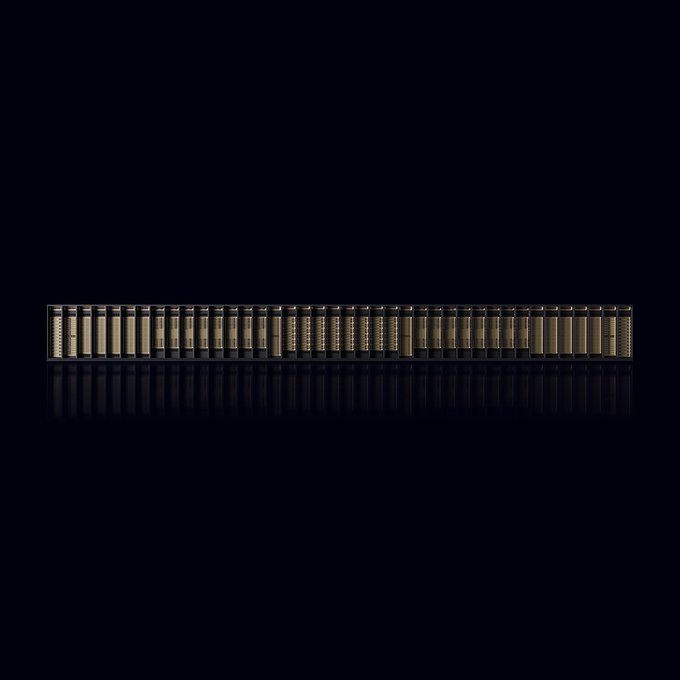

Meet the NVIDIA Vera Rubin POD — a next-generation AI supercomputer built for the era of agentic applications.

⚙️ Powered by seven co-designed chips across compute, networking, and storage

🧠 Built on 3rd-gen NVIDIA MGX rack-scale architecture

🧩 Five specialized rack-scale systems, one coherent AI platform

Read the technical deep dive: nvda.ws/4uDiLM8

#NVIDIAGTC


🏭 @WeAreHRT (Hudson River Trading) accelerates algorithmic trading with an energy-efficient AI factory powered by the NVIDIA Blackwell architecture and NVIDIA Spectrum-X Ethernet networking.

The AI factory connects HRT researchers directly to immense compute power, streamlining workflows across data ingestion, model training, simulation and deployment.

Learn how AI factories are transforming financial services: nvda.ws/3Pjqu1R

#NVIDIAGTC


We’re bringing you rolling coverage from #NVIDIAGTC.

Check out the major retail and media updates in case you missed them:

🧪 @LOrealGroupe x ALCHEMI: L’Oréal is accelerating skincare formulation 100x by using NVIDIA ALCHEMI to screen molecular combinations at unprecedented speeds.

🛍️ Agentic Commerce: Our new NVIDIA Blueprint for retail agentic commerce with @OpenAI allows shoppers to move from discovery to secure checkout entirely through AI agents.

🎬 Content Localization: New AI technologies enable studios and broadcasters to deliver software-driven localization at scale for global audiences.

👉 Get the full story and live updates here: nvda.ws/40Fze4N


Reasoning models are growing fast, and running them efficiently requires distributing workloads across multiple GPU nodes.

NVIDIA Dynamo 1.0 delivers low-latency, high-throughput distributed inference for production AI deployments—while boosting NVIDIA Blackwell inference performance by up to 7x, lowering token cost, and expanding opportunities with free #OSS.

Built for production. Key features:

🔹 Disaggregated serving

🔹 Agentic-aware routing

🔹 Multimodal inference

🔹 Topology-aware Kubernetes scaling

🔹 SLA-ready quick deployments

Available now with native support for SGLang (@lmsysorg), TensorRT-LLM, and @vllm_project 👉 nvda.ws/4rLNAM5


AI-native services are exposing a new bottleneck in AI infrastructure.

The challenge is shifting from peak training throughput to delivering deterministic inference at scale with predictable latency, jitter, and sustainable token economics.

Check out our latest tech blog to see how AI grids make real-time, multi-modal, and hyper-personalized AI experiences viable at scale.

📡 Read now: nvda.ws/47bcehM


🦞 Make claw agents safer with our new NVIDIA OpenShell – an open source runtime to build with autonomous evolving agents.

🐚 OpenShell sits between your agent and your infrastructure to govern how the agent executes, what the agent can see and do, and where inference goes.

🔐 Gives you fine-grained control over your privacy and security while letting you benefit from the agents’ productivity.

Run one command—and make zero code changes. Then any claw or coding agent like OpenClaw, Anthropic’s Claude Code, or OpenAI’s Codex can run unmodified inside OpenShell.

Every SaaS company just became an agent company. The missing piece was never the agents — it was the infrastructure that makes them safe enough to deploy. That's OpenShell.

Read the technical blog to learn more ➡️ nvda.ws/40F0HDQ
