Pinned Tweet

Eric Anderson

2.8K posts

@putersdcat

$TSLA, $MSFT. 🇺🇸 living in 🇩🇪 TESLA 3, Y, S. husband and father, posts are 69% /s 😎

Munich, Bavaria · Joined November 2014

1.6K Following · 420 Followers

Trump just signed off on NVIDIA's plan to divert advanced chips to China.

That'll drive prices of laptops and smartphones even higher – and help China overtake us in AI.

Big Tech and China win. The rest of us lose.

Bloomberg @business

Nvidia CEO Jensen Huang said the company is firing up manufacturing of H200 AI accelerators for customers in China, a sign of progress in the chipmaker’s effort to reenter the vital market bloomberg.com/news/articles/…

On the AI3 Retrofit Discussion…

I still have one HW3 vehicle and two HW4; they are all in the EU, so I'm just fucked either way, no fault of Tesla. From my own deep research and as a heavy technologist, I can say with 100% certainty that Tesla is not dragging its feet on this to screw anyone over.

A retrofit for AI5 is pure fiction, as it's not clear if it's gone to the "tape-out" phase, so we know nothing about the physical die size and power requirements. As for dropping AI4 into an AI3 car, the immediate issues are that the AI4 chip is more power-hungry than the AI3 and thus needs more power than is currently available at the AI3 location, and it puts out more heat at full tilt than the AI3. All of this means it's not something where they can just take AI4 compute and drop it in: first, a physical form factor change to the PCBA hosting the NPUs would be needed; then, most likely, a different, somehow less power-hungry or de-rated AI4 SoC configuration would be required. It would be a huge engineering cost and also pull from a custom silicon supply chain that already has demand outstripping supply.

So am I saying we're all fucked? Well, no, I don't think so. From my own deep dives into what little information is publicly known about the Tesla FSD multi-net end-to-end architecture, the core bottleneck holding back the AI3 is not entirely peak TOPS but more the DRAM memory quantity and speed. With that said, I'm still confident that a "v14-lite" model can be and will be distilled/back-ported to AI3 and may not even need a camera upgrade to reach current v14-level performance, even with reading hand gestures.

I don't have any inside knowledge, but I go hard with AI, and Tesla's models are already insanely optimized, quantized, etc., but it's all based on the world's best and brightest meat nets. That is to say, with AI swarm intelligence applied to the problem, many little optimizations will be found, even down to the way the DRAM can be utilized differently than it is today for further gains.
I'm confident Tesla will make good on this, but there is no easy button.

@elonmusk

@elonmusk @LiveSquawk Elon, AI3 vehicle owners have supported the Tesla mission longer than anyone else. Could you please direct Tesla to prioritize announcing hardware retrofits for AI3 car computers with AI4/AI5 computers? Especially for FSD owners, even at a price.

Macrohard or Digital Optimus is a joint xAI-Tesla project, coming as part of Tesla’s investment agreement with xAI.

Grok is the master conductor/navigator with deep understanding of the world to direct digital Optimus, which is processing and actioning the past 5 secs of real-time computer screen video and keyboard/mouse actions. Grok is like a much more advanced and sophisticated version of turn-by-turn navigation software.

You can think of it as Digital Optimus AI being System 1 (instinctive part of the mind) and Grok being System 2. (thinking part of the mind).

This will run very competitively on the super low cost Tesla AI4 ($650) paired with relatively frugal use of the much more expensive xAI Nvidia hardware. And it will be the only real-time smart AI system. This is a big deal.

In principle, it is capable of emulating the function of entire companies. That is why the program is called MACROHARD, a funny reference to Microsoft.

No other company can yet do this.

@heygurisingh I have run it on a 7th-gen i7 in a Surface Book 2. It's not easy to get the dependencies compiled right, but once you do, it's usable. I started to build an educational game for my kids where BitNet can be used for simple NPC dialog, all offline.

Holy shit... Microsoft open sourced an inference framework that runs a 100B parameter LLM on a single CPU.

It's called BitNet. And it does what was supposed to be impossible.

No GPU. No cloud. No $10K hardware setup. Just your laptop running a 100-billion parameter model at human reading speed.

Here's how it works:

Every other LLM stores weights in 32-bit or 16-bit floats.

BitNet uses 1.58 bits.

Weights are ternary: just -1, 0, or +1. That's it. No floats. No expensive matrix math. Pure integer operations your CPU was already built for.
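To make the ternary idea concrete, here is a minimal pure-Python sketch. It assumes an "absmean" scheme (divide by the mean absolute weight, round, clip to -1/0/+1) with a single scale per matrix; the function names `absmean_ternary` and `ternary_matvec` are hypothetical and this is not the actual bitnet.cpp kernel code. It also shows where "1.58 bits" comes from: three possible values carry log2(3) bits of information.

```python
import math
import random


def absmean_ternary(weights, eps=1e-8):
    """Quantize a matrix (list of rows) to {-1, 0, +1} plus one float scale.

    Assumption: one scale per matrix, equal to the mean absolute weight.
    """
    flat = [abs(w) for row in weights for w in row]
    scale = sum(flat) / len(flat) + eps
    q = [[max(-1, min(1, round(w / scale))) for w in row] for row in weights]
    return q, scale


def ternary_matvec(q, scale, x):
    """Matrix-vector product using only adds and subtracts per weight.

    The single multiply by `scale` happens once per output element.
    """
    out = []
    for row in q:
        acc = 0.0
        for qi, xi in zip(row, x):
            if qi == 1:
                acc += xi
            elif qi == -1:
                acc -= xi
            # qi == 0 contributes nothing, so it is skipped entirely
        out.append(acc * scale)
    return out


random.seed(0)
W = [[random.gauss(0, 1) for _ in range(8)] for _ in range(4)]
x = [random.gauss(0, 1) for _ in range(8)]

q, scale = absmean_ternary(W)
y = ternary_matvec(q, scale, x)

# Information content of a three-valued weight
bits_per_weight = math.log2(3)
print(round(bits_per_weight, 3))  # 1.585
```

The sketch keeps each ternary weight in a full Python integer for readability; a real implementation would pack several weights per byte to get anywhere near the memory savings quoted in the post.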

The result:

- 100B model runs on a single CPU at 5-7 tokens/second

- 2.37x to 6.17x faster than llama.cpp on x86

- 82% lower energy consumption on x86 CPUs

- 1.37x to 5.07x speedup on ARM (your MacBook)

- Memory drops by 16-32x vs full-precision models

The wildest part:

Accuracy barely moves.

BitNet b1.58 2B4T, their flagship model, was trained on 4 trillion tokens and benchmarks competitively against full-precision models of the same size. The quantization isn't destroying quality. It's just removing the bloat.

What this actually means:

- Run AI completely offline. Your data never leaves your machine

- Deploy LLMs on phones, IoT devices, edge hardware

- No more cloud API bills for inference

- AI in regions with no reliable internet

The model supports ARM and x86. Works on your MacBook, your Linux box, your Windows machine.

27.4K GitHub stars. 2.2K forks. Built by Microsoft Research.

100% Open Source. MIT License.

@ogre_codes @mrkylefield This is the most popular 2-seater, and it actually comes with 2 steering wheels! Cybercab needs to get with it.

Generally speaking *cabs* have 2 useful seats, the front row being reserved for the driver. There are a few mini-van cabs, but they are less common in fleets. Most Uber drivers only allow passengers in the back seat as well.

So "Less than 1% of the market" is incorrect.

Whole Mars Catalog @wholemars

You’re forgetting that the iPhone will have no keyboard, a form factor that makes up less than 1% of the smartphone market. So demand will be limited.

@visegrad24 The B-52s have now entered the theater. That’s when the heavy ordnance phase begins. Anyone who thinks the IRGC has a chance of outlasting the US doesn’t understand modern warfare.

Model 3 with 2 children and 2 adults inside plunges 40 meters off a cliff; injuries were initially described as moderate.

CALFIRE: "Nothing short of a miracle!"

@Tesla safety is on another level!

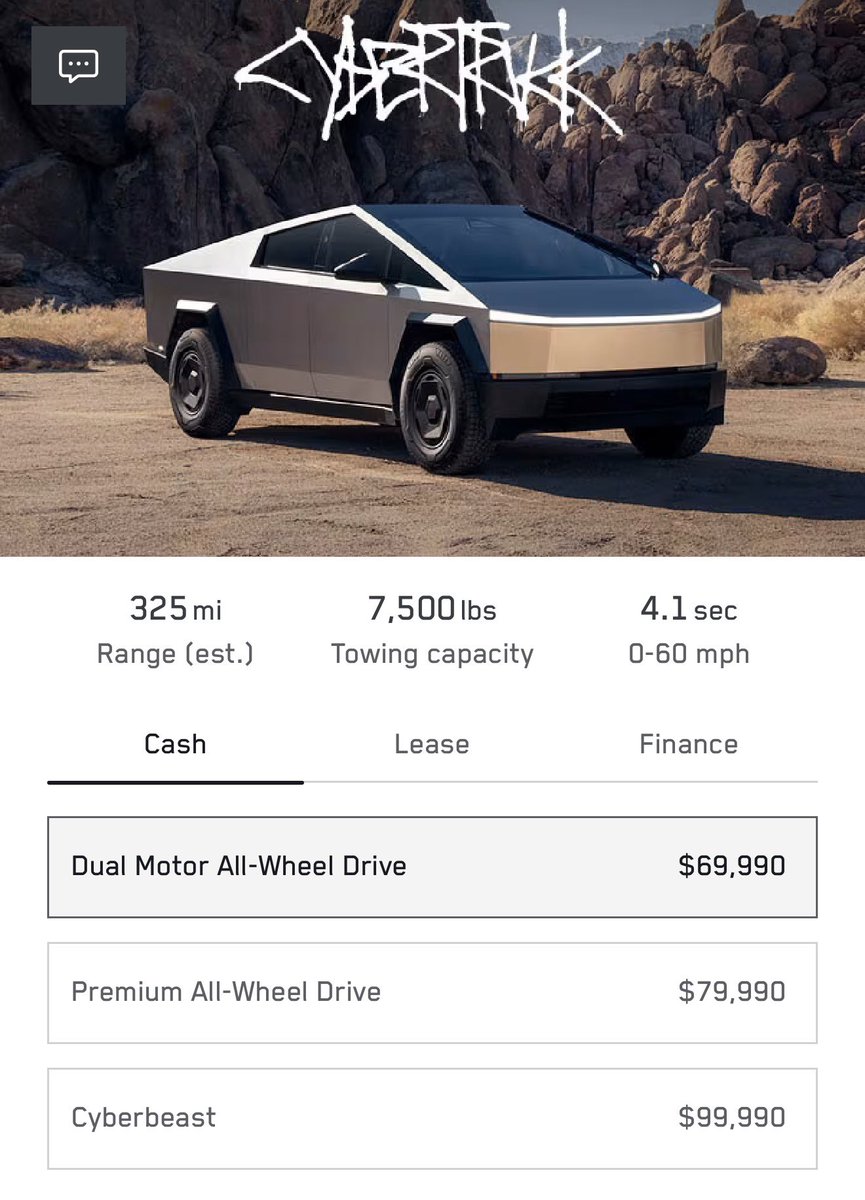

@cybrtrkguy Use my referral link to get the Cybertruck for $69,420: ts.la/eric11482

@titangilroy It’s impressive that the blank has such tight tolerances, was this a natural wood #2 out of the box?

@DontWalkRUN It’s not the meme “trying to convince us the views of the 10% are held by 90%…” it’s just North Korean state TV at this point.

Where is the blood? Where are the learning materials? Where is the massive amount of damage that would kill over a hundred people? And if the US/IDF targeted that building, it would be completely destroyed. There would be nothing left.

Then ABC News claims that 148 people were killed. Where is the evidence?!?

So much for journalism in 2026...

@DontWalkRUN I feel like we are now seeing the sleeper media cells activating globally in a last ditch effort to paint an alternate reality fast, but everybody moved out from living under rocks.

@teslacarsonly That tank holds 1/17 of the current atmosphere between your ears.

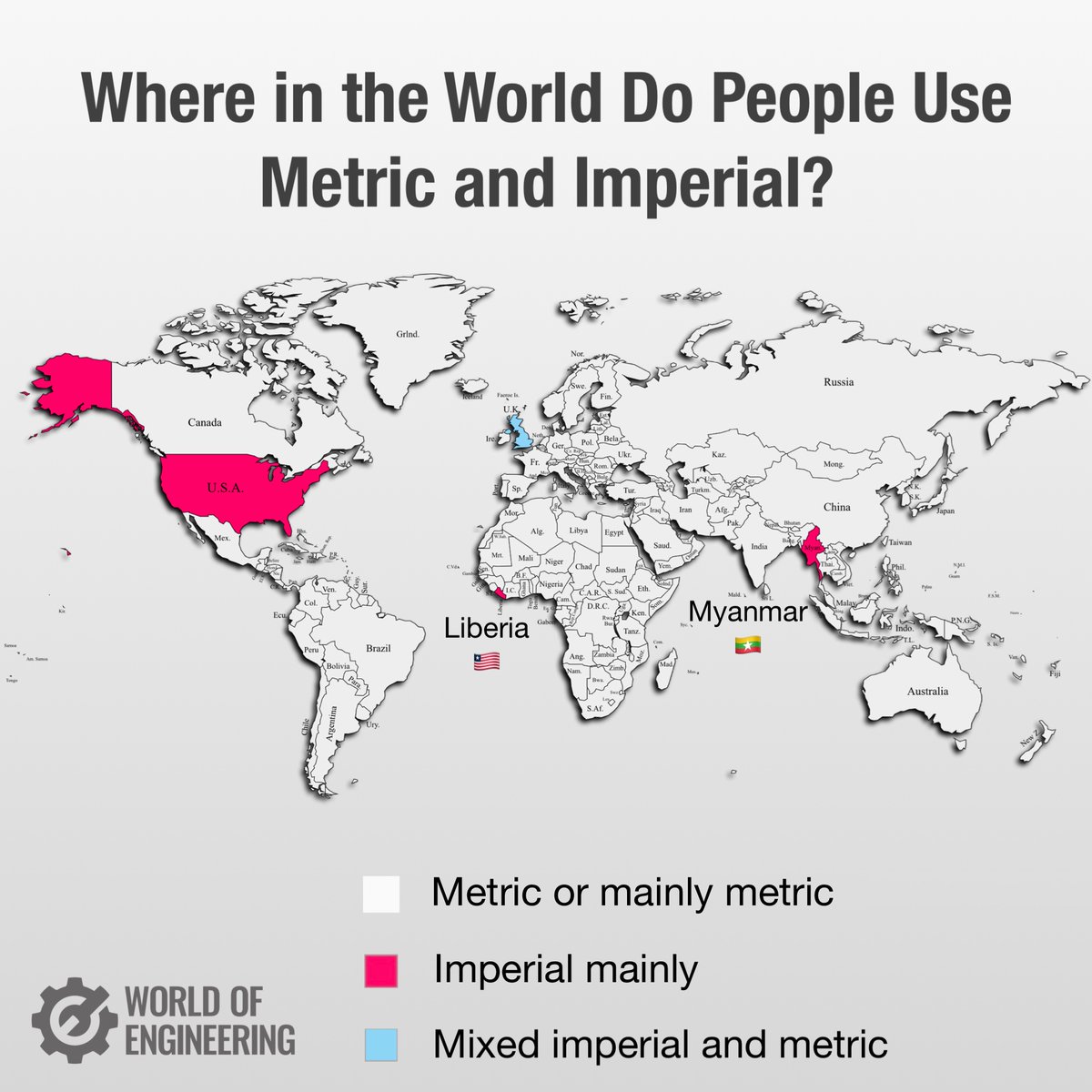

@engineers_feed Extending off-world, rumor has it that a certain Martian crater uses a mix.

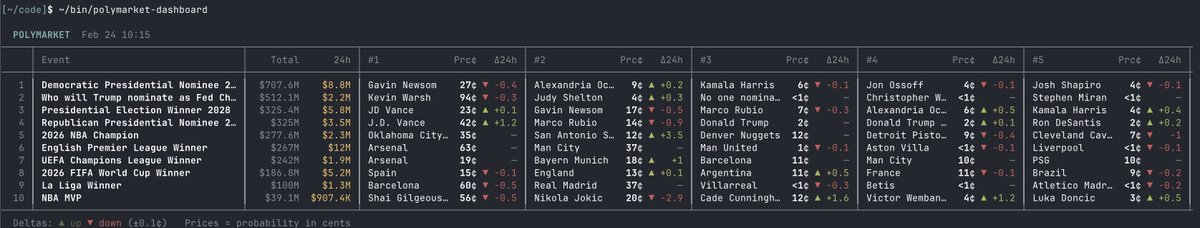

CLIs are super exciting precisely because they are a "legacy" technology, which means AI agents can natively and easily use them, combine them, interact with them via the entire terminal toolkit.

E.g., ask your Claude/Codex agent to install this new Polymarket CLI and ask for any arbitrary dashboards or interfaces or logic. The agents will build it for you. Install the GitHub CLI too and you can ask them to navigate the repo, see issues, PRs, discussions, even the code itself.

Example: Claude built this terminal dashboard in ~3 minutes, of the highest volume polymarkets and the 24hr change. Or you can make it a web app or whatever you want. Even more powerful when you use it as a module of bigger pipelines.

If you have any kind of product or service think: can agents access and use them?

- are your legacy docs (for humans) at least exportable in markdown?

- have you written Skills for your product?

- can your product/service be usable via CLI? Or MCP?

- ...

It's 2026. Build. For. Agents.
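As a rough illustration of why CLIs are easy for agents to use, here is a minimal Python sketch of the pattern: run a command, capture its standard output, and parse it as JSON. The helper name `run_json_cli` is hypothetical, and the Python interpreter itself stands in for a real CLI so the example runs anywhere; no Polymarket CLI flags are assumed.

```python
import json
import subprocess
import sys


def run_json_cli(argv, timeout=30):
    """Run a command-line tool and parse its stdout as JSON.

    Raises CalledProcessError if the tool exits with a non-zero status.
    """
    result = subprocess.run(
        argv,
        capture_output=True,
        text=True,
        timeout=timeout,
        check=True,
    )
    return json.loads(result.stdout)


# Stand-in "CLI": the current Python interpreter printing a JSON list.
# With the GitHub CLI installed and authenticated, argv could instead be
# something like ["gh", "issue", "list", "--json", "number,title"].
demo_argv = [
    sys.executable,
    "-c",
    "import json; print(json.dumps([{'number': 1, 'title': 'demo issue'}]))",
]

issues = run_json_cli(demo_argv)
for issue in issues:
    print(issue["number"], issue["title"])
```

Because the tool's output is structured text, an agent can chain calls like this into dashboards or larger pipelines without any custom integration work.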

Suhail Kakar @SuhailKakar

introducing polymarket cli - the fastest way for ai agents to access prediction markets. built with rust. your agent can query markets, place trades, and pull data - all from the terminal. fast, lightweight, no overhead

github.com/putersdcat/MID…

Because of AI, I can finally get around to making the things nobody needs but I want. Here is one of them.

Working on the new simulator. I just wanted to see what an Atari 2600 fetching data from ROM looks like at the CMOS FET level

(@tinytapeout TT09 Atari circuit by @__ReJ__)

@i_am_fabs Spent time back in the USA this summer with Grok in a Tesla on FSD. It’s nice, after 6 months, to finally get something with AI in Germany. Now if we can get FSD, it will really be back to the future!

@HumansNoContext I bought a 3 pack recently, gave one to each kid, kept one for me. Kids don’t have phones, everybody is happy.
