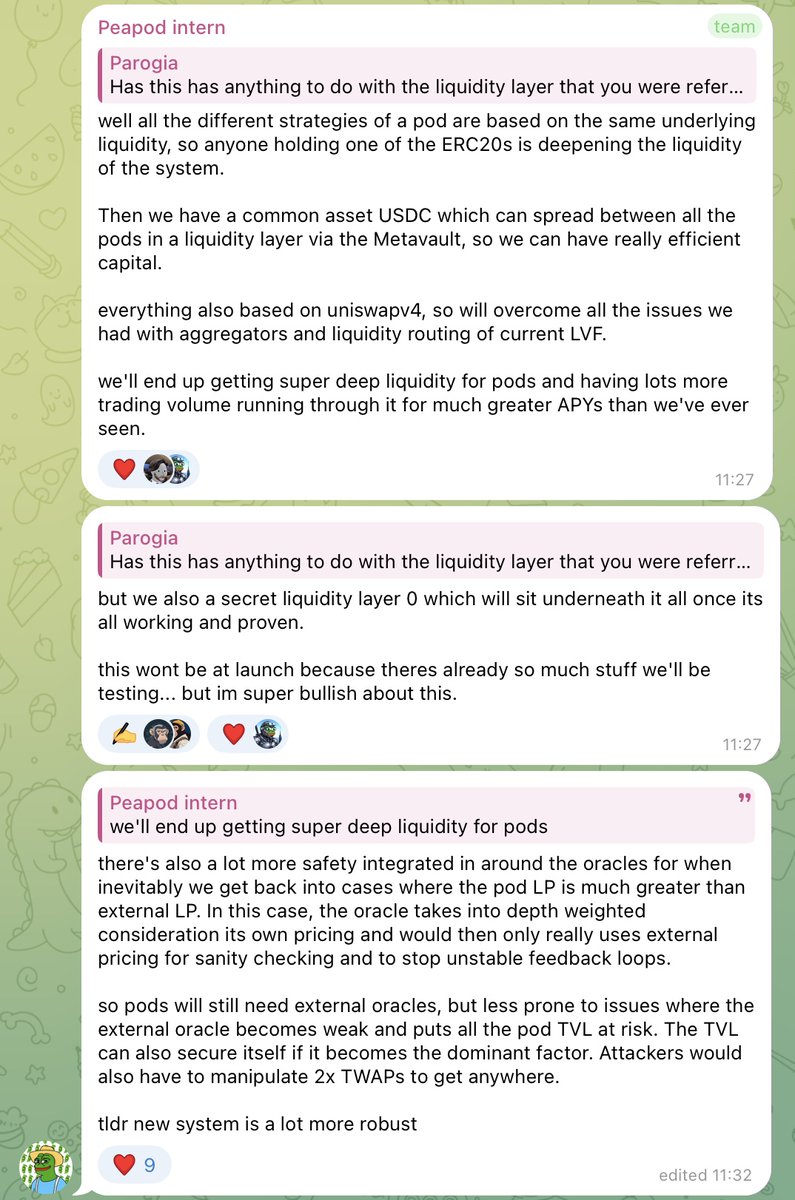

While degens rotate from token to token, a few teams are quietly setting new standards. One of them? @AlvaraProtocol. They’re not just launching a token; they’re building a new layer for fund creation onchain. 🧵👇

🔍 What is $ALVA really about?
💥 Who’s building this?
📦 So how does it work?
🔐 How do they keep things safe?
🌍 What about RWAs?
💸 Why hold $ALVA?
🧠 But what if someone forks ERC-7621?
🔮 What’s coming next?
📣 Final note
🧵 TL;DR

🔍 What is $ALVA really about?

Think: baskets for multi-asset fund management, but onchain. Sounds simple... until you realize they wrote an entire Ethereum standard, ERC-7621, just to make it work. This ERC standard was developed in collaboration with the Ethereum Foundation, and the Alvara team is still actively in contact with them.

At a high level, it lets anyone create a multi-asset portfolio, tokenize it, and share it in just a few clicks. But the real innovation is how composable and flexible that portfolio becomes once it’s minted. It’s not a vault. It’s not a wrapper. It’s programmable, tradable, and liquid. You’re not just buying into a fund; you’re buying into the future of asset management onchain.

💥 Who’s building this?

The protocol is led by co-founders Callum Mitchell-Clark and Dominic Ryder. Dom came from traditional fund management and saw the friction of trying to deploy strategies in DeFi. Callum was already deep in smart contract design, focused on how asset management should work onchain.

But this isn’t just two founders building in isolation. It’s a full team:
– Deon Dreyer (COO) keeps operations running smoothly, making sure timelines, workflows, and product launches stay on track.
– Joey van Etten (BD Lead) drives partnerships. Whether it’s RWAs or cross-chain plays, Joey’s likely behind the scenes making it happen.
– Mike Ryder (Research Lead) dives into tokenomics and onchain fund models to keep things robust and forward-looking.
– Max Green (Marketing Lead) bridges the tech and the narrative, shaping how ERC-7621 and Alvara’s vision get communicated.
– And the heavy lifting? That’s Troon Technologies, a dedicated dev team (15 engineers) building the core infrastructure: Basket Factory, DEX, governance, and more.

📦 So how does it work?

Each "Basket Token" (ERC-7621) is like a tokenized portfolio. You choose the underlying assets (ETH, LINK, RWA tokens, etc.). You set the allocations. You mint a BTS, and that becomes an ERC-721 that tracks ownership.

There’s also a secondary set of tokens, BTS LP tokens, that represent shares in the basket, kind of like shares in a fund or vault. These can be split, traded, or used for incentives.

What sets this apart is how non-custodial and programmable it is. The fund doesn’t live in someone’s wallet; it lives onchain. You can rebalance it. You can sell it. You can govern it.

🔐 How do they keep things safe?

This part matters. Because if anyone can launch a basket, how do you prevent spam, rugs, or black swans? Here’s what they’ve put in place:
– Asset filtering: Illiquid or sketchy tokens are excluded at the smart contract level.
– User behavior: If a basket is junk, users won’t mint into it because there’s no incentive to.
– Emergency Stables function: In case of market chaos, fund managers can convert all of a basket’s assets (except ALVA) to stablecoins via a hardcoded trigger. It’s one-click, all-assets, immediate.
– KYC support: For RWA integration, they’re working with partners that support verifiable, regulated custody.

There’s no perfect solution, but it’s not being left to vibes either.

🌍 What about RWAs?

Honestly didn’t expect this part to be so far along. They’re working with real RWA protocols like LandX (tokenized farmland) and EstateX (real estate) to plug directly into the basket system. That means you’ll soon be able to mint baskets with exposure to tokenized farmland, real estate, and more. Not just as a gimmick, but as an investable, rebalancing, tradable portfolio.
Details like verification layers, custody protocols, and asset auditing are being finalized, but they’ve clearly thought it through and have partnerships forming behind the scenes.

💸 Why hold $ALVA?

This is where things get reflexive. Every basket minted through Alvara must include 5% ALVA. That ALVA is pulled from the market and removed from circulation. Not burned, but removed from liquidity. So the more baskets get created, the more demand pressure is applied to the token.

On top of that, you can stake ALVA → lock it into veALVA, and direct emissions to specific baskets via gauge voting.

🧠 But what if someone forks ERC-7621?

If someone skips the 5% ALVA inclusion by deploying their own implementation of ERC-7621, they might bypass the token, but they also disconnect from Alvara’s tooling: the leaderboard, the DEX, the staking emissions, the marketplace. So adoption of the standard still benefits Alvara. And the strongest gravity will pull toward the original ecosystem. First mover advantage, built-in.

🔮 What’s coming next?

With the Quill audit already finished and Certik in the final 10%, Alvara’s getting close to unleashing mainnet. The launch will happen in three phases, and while the full strategy is still under wraps, a few hints have started to surface. Just this Monday, they teased a full rebrand coming Wednesday.

Oh, and they’ve brought in Hy.pe, one of the top crypto marketing agencies in the game (same crew that worked with Sui, Avalanche, zkSync). Now they’re helping Alvara shape the story, find the right audience, and execute a serious go-to-market strategy. And it’s not just vibes: there’s over $500K in stables behind the campaign, with whispers of extra $ALVA being thrown into the mix.

The best part? The big campaigns haven’t even started yet.
📣 Final note: I interviewed one of the core devs

We got into things you won’t find in any docs, like how managers might bypass ALVA, what actually triggers the emergency stables function, and how they plan to vet RWAs before they hit chain. I pushed on pre-launch risks, security assumptions, even the real mechanics behind adoption.

Some of those answers? You’ll spot them scattered across this thread. Quietly, that convo was the edge I needed to actually understand what they’re building. They’re aware of the challenges. But they’re also shipping and trying to stay clear of the noise. And I would encourage anyone to hop on their Telegram and start learning today.

🧵 TL;DR

$ALVA / @AlvaraProtocol
– A new standard for onchain funds (ERC-7621)
– Mainnet around the corner
– Real-world asset integration coming
– Incentives tied to actual product use
– Serious team, serious stack
– Undervalued given the scope
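
For anyone who wants the basket mechanics from "So how does it work?" and "Why hold $ALVA?" in concrete form, here is a rough off-chain sketch in Python. It is not the ERC-7621 interface or Alvara's contract code. The names `Basket`, `validate_basket`, and `alva_required` are made up for illustration, weights are assumed to be expressed in basis points, and the 5% ALVA rule is treated as a minimum, which is an assumption on my part.

```python
from dataclasses import dataclass

BPS_TOTAL = 10_000   # 100% expressed in basis points (assumed convention)
MIN_ALVA_BPS = 500   # the 5% ALVA inclusion, treated here as a minimum


@dataclass(frozen=True)
class Basket:
    """Hypothetical off-chain model of a basket; not the ERC-7621 interface."""
    weights_bps: dict[str, int]  # token symbol -> target weight in basis points


def validate_basket(basket: Basket) -> list[str]:
    """Return a list of problems; an empty list means the basket passes."""
    problems = []
    if any(w <= 0 for w in basket.weights_bps.values()):
        problems.append("every weight must be positive")
    if sum(basket.weights_bps.values()) != BPS_TOTAL:
        problems.append("weights must sum to 100%")
    if basket.weights_bps.get("ALVA", 0) < MIN_ALVA_BPS:
        problems.append("basket must hold at least 5% ALVA")
    return problems


def alva_required(deposit_value: float, basket: Basket) -> float:
    """Value of ALVA a deposit of `deposit_value` would need to acquire."""
    return deposit_value * basket.weights_bps.get("ALVA", 0) / BPS_TOTAL
```

Under these assumptions, a basket of 60% ETH, 35% LINK, and 5% ALVA passes validation, and a 1,000 deposit into it would route 50 of value into ALVA. That per-mint pull is the demand pressure the thread describes.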