Parthe Pandit

@PartheP

Thakur Family Chair Assistant Professor @ C-MInDS, IIT Bombay

We find that ConvNets implement a variant of the same general feature-learning mechanism as fully-connected networks: the covariances of the CNN filters again recover the model's average gradient outer product (AGOP), additionally averaged over input patches.
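To make "AGOP averaged over input patches" concrete, here is a minimal NumPy sketch. It is not the paper's code. It assumes a hypothetical one-layer 1-D conv model f(x) = Σ_p vᵀ tanh(W x_p), with filters W (one filter per row), a readout v shared across positions, stride-1 patches x_p of length q, and each patch treated as its own input when differentiating. The result is a q × q matrix that can be compared against the filter covariance Wᵀ W.

```python
import numpy as np

def extract_patches(x, q):
    """All stride-1, length-q windows of a 1-D signal x: shape (len(x) - q + 1, q)."""
    return np.lib.stride_tricks.sliding_window_view(x, q)

def patch_agop(W, v, X):
    """Patch-averaged AGOP for the hypothetical model f(x) = sum_p v^T tanh(W x_p).

    W: (c, q) filters, v: (c,) readout shared across positions, X: (n, d) signals.
    Each patch x_p is treated as an independent input, so
    grad_{x_p} f = W^T (v * tanh'(W x_p)).
    Returns the (q, q) average of grad grad^T over all samples and patch positions.
    """
    q = W.shape[1]
    P = np.concatenate([extract_patches(x, q) for x in X])  # (n * num_patches, q)
    H = np.tanh(P @ W.T)                                     # filter responses per patch
    G = ((1.0 - H**2) * v) @ W                               # row j: gradient w.r.t. patch j
    return G.T @ G / P.shape[0]
```

On a trained network, the claim is that `W.T @ W` and `patch_agop(W, v, X)` are closely aligned. With random, untrained weights like those in a quick smoke test, no such alignment should be expected.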

Really excited to share this new paper: Kernel Regression with Infinite-Width Neural Networks on Millions of Examples. We found that the recipe for success with kernels is 1) highly expressive kernels (infinitely wide deep nets) and 2) lots of data. arxiv.org/abs/2303.05420

Postdoc @raazdwivedi has accepted a tenure-track assistant professorship at @Cornell, Operations Research and Information Engineering @Cornell_orie, @Cornell Tech in NYC! Congratulations, Raaz!!!

What is the nature of feature learning in deep networks? We propose that neural networks recover a statistic known as the average gradient outer product (AGOP). Github: github.com/aradha/recursi… arXiv: arxiv.org/abs/2212.13881
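For reference, the AGOP of a model f over data x_1, …, x_n is (1/n) Σ_i ∇f(x_i) ∇f(x_i)ᵀ. The sketch below computes it in NumPy for a hypothetical two-layer network f(x) = aᵀ tanh(W x). It is an illustration only and not the code in the linked repository. The gradient is written out by hand: ∇f(x) = Wᵀ (a ⊙ (1 − tanh²(W x))).

```python
import numpy as np

def agop_two_layer(W, a, X):
    """AGOP of the hypothetical model f(x) = a^T tanh(W x) over the rows of X.

    W: (k, d) first-layer weights, a: (k,) output weights, X: (n, d) inputs.
    Returns the (d, d) matrix (1/n) * sum_i grad f(x_i) grad f(x_i)^T.
    """
    H = np.tanh(X @ W.T)            # (n, k) hidden activations
    G = ((1.0 - H**2) * a) @ W      # (n, d): row i is W^T (a * tanh'(W x_i))
    return G.T @ G / X.shape[0]
```

The proposal is that, after training, the first-layer Gram matrix `W.T @ W` is approximately proportional to (a matrix power of) this AGOP. The function above only computes the AGOP itself; checking the correspondence requires a trained `W`.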

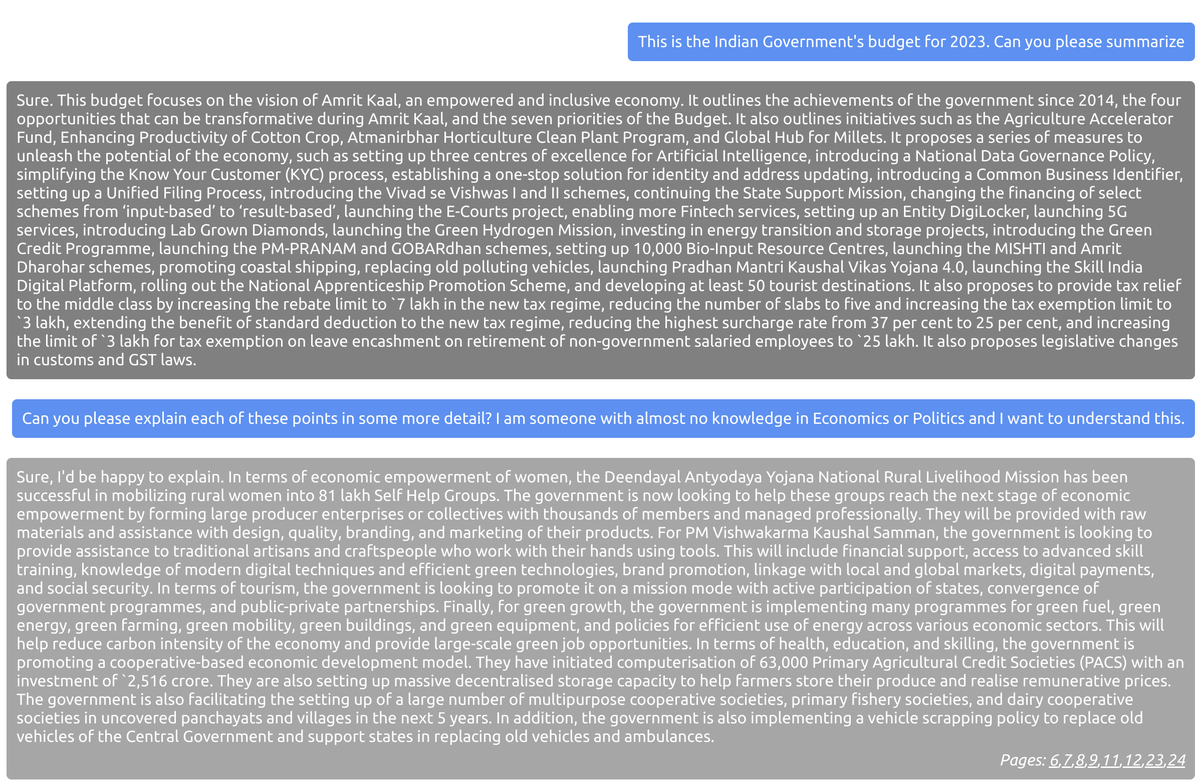

Use ChatGPT on your own files. This is going to be big: humata.ai lets you upload a PDF up to 60 pages long and ask questions about it in plain English ↓