Liu Liu

@liuliu

Maintains https://t.co/VoCwlJ9Eq0 / https://t.co/bMI9arVwcR / https://t.co/2agmCPOZ2t. Wrote iOS app Snapchat (2014-2020). Founded Facebook Videos with others (2013). Sometimes writes at https://t.co/Gyt4J9Z9Tv

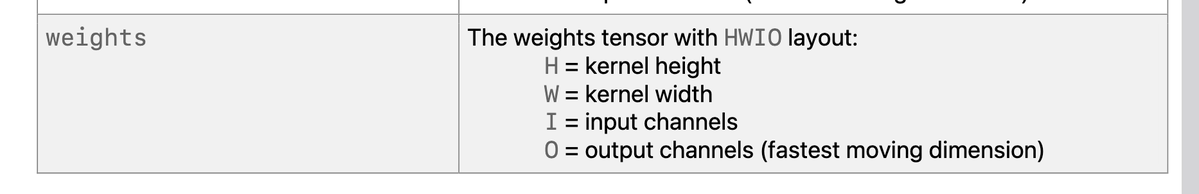

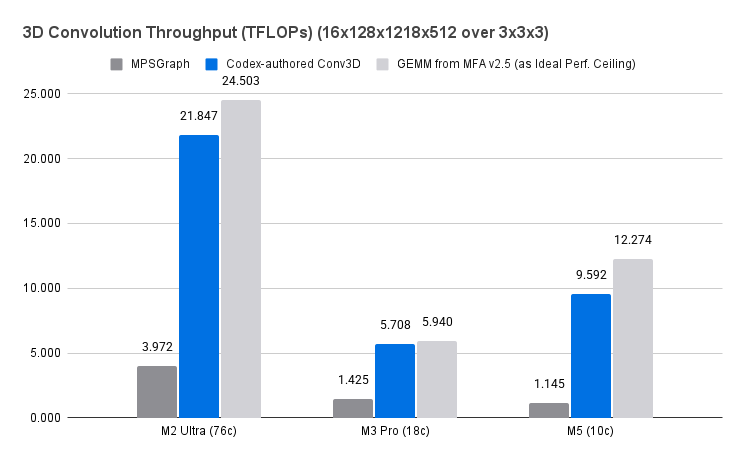

Have a hunch that the Conv3D in MPSGraph is not well-maintained. BRB.

I know there is some overlap between open source and anti-AI activists, but I have a hard time reconciling it. My million+ open source LOC were always intended as a gift to the world. Yes, I would make arguments about how it would strengthen our communities, and how the GPL would prevent outright exploitation by our competitors, but those were to allay the fears of my partners so that they would allow me to make the gift. AI training on the code magnifies the value of the gift. I am enthusiastic about it! Some people do look at open source as a tool for social change, career advancement, or reputation building, but those are all downstream of the gift.