Julen Urain

@robotgradient

Robotics Tinkerer. RS @Amazon FAR Prev: @META (FAIR), @DFKI, @TUDarmstadt https://t.co/RQpq7Prbln X https://t.co/umZQeDjJv4

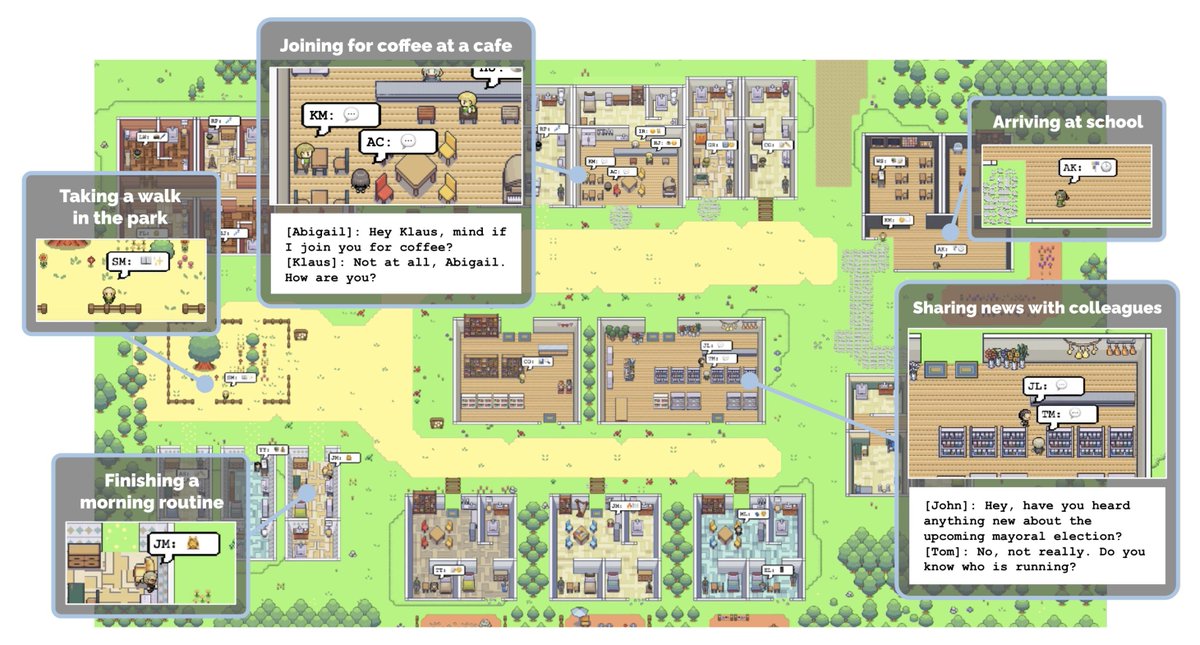

- Project website: dreamdojo-world.github.io
- Paper: arxiv.org/abs/2602.06949
- Code repo and model ckpts: github.com/NVIDIA/DreamDo…

This is a huge team effort at NVIDIA. All credit goes to the wonderful teams who poured their hearts into it!

In my recent blog post, I argue that "vision" is only well-defined as part of perception-action loops, and that the conventional view of computer vision, which maps imagery to intermediate representations (3D, flow, segmentation...), is about to go away. vincentsitzmann.com/blog/bitter_le…

okay, actually yes

Step inside Project Genie: our experimental research prototype that lets you create, edit, and explore virtual worlds. 🌎

World generation is a bottleneck for robotics. We’re exploring how generative 3D worlds can reduce manual simulation setup and enable broader, more realistic evaluation 🧵

Dexterous manipulation by directly observing humans, a dream in AI for decades, is hard due to visual and embodiment gaps. With simple yet powerful hardware (Aria 2 glasses 👓) and our new work AINA 🪞, we are now one significant step closer to achieving this dream.