Kaifeng Lyu

@vfleaking

Assistant Professor @ Tsinghua University

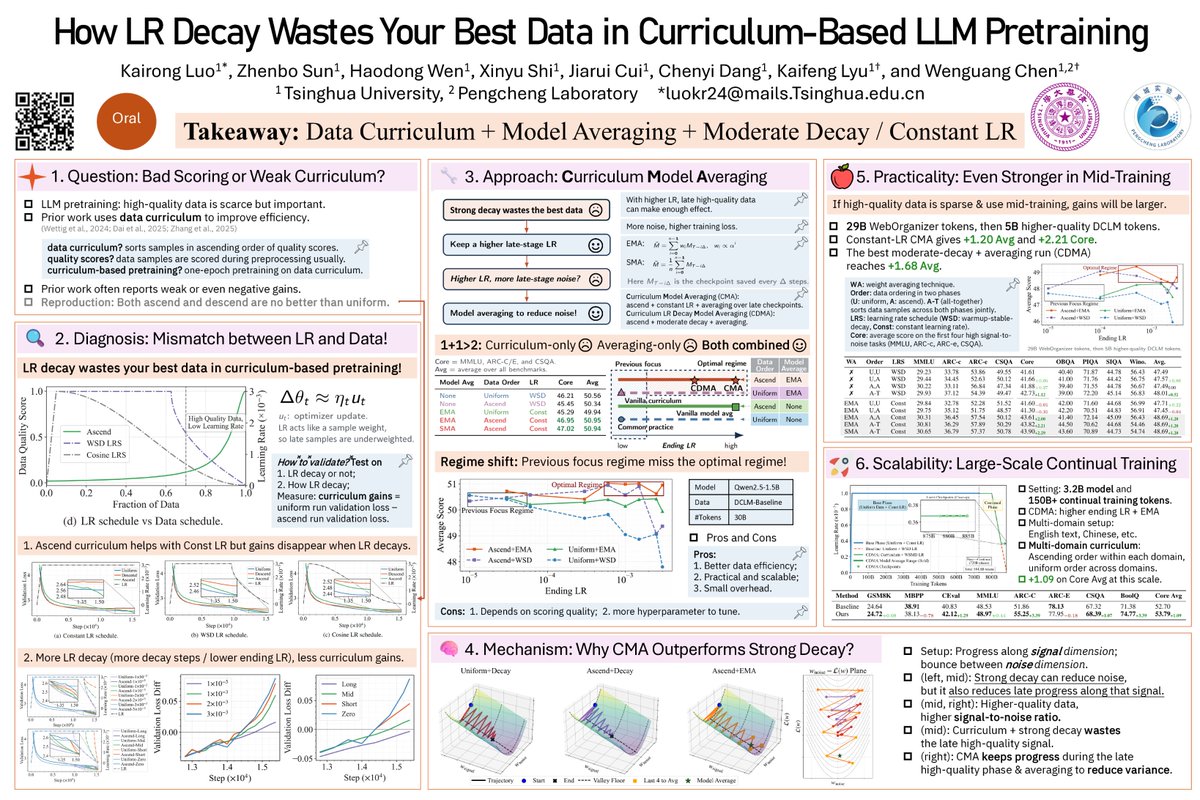

✈️ Heading to ICLR 🇧🇷 Apr 22–27. Come to our oral on Fri, Apr 24 (10:30 AM–12:00 PM, Room 202 A/B) or find me at our poster (3:15 PM–5:45 PM, P3-#521). We study why LR decay can hurt curriculum-based LLM pretraining — and how to fix it. Happy to chat!

(1/n) Introducing Hyperball — an optimizer wrapper that keeps weight & update norm constant and lets you control the effective (angular) step size directly. Result: sustained speedups across scales + strong hyperparameter transfer.

Excited to share our #NeurIPS2025 spotlight! LLMs train on massive web scrapes + small knowledge-dense datasets. But how much knowledge is learned from this small proportion? Unlike linear scaling for single domains, we find that data mixing induces phase transitions. (1/3)

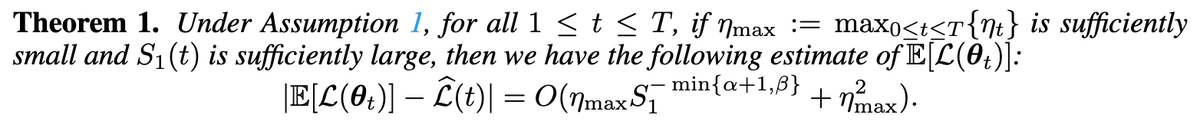

Adam prefers a different minimizer than SGD (exemplified below), but how? 🤔 Our NeurIPS 2025 Paper: Based on our Slow SDE approximation of Adam, we show that under label noise Adam implicitly minimizes tr(Diag(H)^½), whereas prior works showed that SGD minimizes tr(H). 🧵1/n
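
To make the two quantities concrete: tr(H) is the sum of the Hessian's diagonal entries, while tr(Diag(H)^½) is the sum of their square roots. The sketch below uses two made-up 2×2 Hessians (illustrative numbers only, an assumption for this example and not taken from the paper) to show that the two measures can rank a pair of minimizers in opposite order.

```python
import math


def trace(H):
    """tr(H): sum of the diagonal entries of a square matrix."""
    return sum(H[i][i] for i in range(len(H)))


def trace_sqrt_diag(H):
    """tr(Diag(H)^(1/2)): sum of the square roots of the diagonal entries."""
    return sum(math.sqrt(H[i][i]) for i in range(len(H)))


# Hypothetical Hessians at two different minimizers (illustrative values only).
H_sharp_one_axis = [[4.0, 0.0], [0.0, 0.0]]  # all curvature on one coordinate
H_spread_out = [[1.5, 0.0], [0.0, 1.5]]      # curvature shared across coordinates

for name, H in [("one sharp axis", H_sharp_one_axis), ("spread out", H_spread_out)]:
    print(f"{name}: tr(H) = {trace(H):.3f}, "
          f"tr(Diag(H)^1/2) = {trace_sqrt_diag(H):.3f}")
```

In this toy case tr(H) is smaller for the spread-out Hessian (3 vs. 4), while tr(Diag(H)^½) is smaller for the one concentrated on a single coordinate (2 vs. about 2.449), so a method that implicitly minimizes one quantity may favor a different minimizer than a method that minimizes the other.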

I've discovered a truly marvelous idea for building AGI, but Twitter's space limit won't let me explain it! Damn! 😫
Introducing ✏️PENCIL, a new LLM reasoning paradigm that generates and erases thoughts, enabling longer and deeper thinking with shorter context. #ICML2025 🧵1/n
🤯 Theoretically, ✏️PENCIL is Turing-complete with optimal space and time complexity, and thus can solve arbitrary computable problems efficiently. This is something fundamentally impossible for ✒️CoT.
This post is based on the paper “PENCIL: Long thoughts with short memory” accepted in ICML 2025, a joint work with Nathan Srebro, David McAllester, and @zhiyuanli_
Paper: arxiv.org/pdf/2503.14337
Github: github.com/chr26195/PENCIL
Expand thread for full details ⬇️

Check out our new paper! We explore the representation gap between RNNs and Transformers. Theory: CoT improves RNNs but is insufficient to close the gap. Improving the capability of retrieving information from context is the key (e.g. +RAG / +1 attention). arxiv.org/abs/2402.18510

📢 Come meet us at #ICLR2025! We'll be presenting our Multi-Power Law, a new approach to predicting full pretraining loss curves across LR schedules, during the poster session:
🗓 Friday, April 25
🕒 3:00 PM – 5:30 PM CST
📍 Hall 3 + Hall 2B, Poster #237
We look forward to your feedback!

Thrilled to know that our paper, `Safety Alignment Should be Made More Than Just a Few Tokens Deep`, received the ICLR 2025 Outstanding Paper Award. We sincerely thank the ICLR committee for awarding one of this year's Outstanding Paper Awards to AI Safety / Adversarial ML.
Special thanks go to the reviewers and area chairs for their strong support and recommendations. Throughout the rebuttal period, the reviewers remained deeply engaged, raising thoughtful questions that helped enhance the rigor of our experiments and manuscript. I am also profoundly grateful to my collaborators (@PandaAshwinee @vfleaking @infoxiao @sroy_subhrajit @abeirami) for their joint efforts and my advisors (@prateekmittal_ @PeterHndrsn) for their invaluable guidance and support.
On a personal note, I also defended my PhD at Princeton in February and joined OpenAI last month, where I will continue working on AI safety and adversarial robustness. I'm looking forward to catching up with old friends and meeting new friends around the Bay!
------
Below are some of my reflections and thoughts on our awarded paper:
Adversarial robustness has been an ongoing topic since the early rise of deep learning in 2013 (arxiv.org/abs/1312.6199). Over the years, we've observed the community swing from pessimism, epitomized by Nicholas Carlini's adaptive attacks (ieeexplore.ieee.org/abstract/docum…) systematically dismantling various defenses and fostering the sentiment "adversarial examples are hard", to skepticism, as adversarial examples appeared to have limited impact on practical AI applications for a while, prompting the notion "adversarial examples are not even important."
With the emergence of ChatGPT at the end of 2022, deep learning entered a new era towards AGI, shifting AI safety from theoretical speculation to mainstream practical concern. This is also when adversarial robustness started to get more attention again.
For example, following our 2023 demonstrations that adversarial examples pose fundamental threats to AI safety alignment (arxiv.org/abs/2306.13213, arxiv.org/abs/2306.15447, arxiv.org/pdf/2307.15043), adversarial examples reemerged as the "Sword of Damocles" hanging over AI safety (memorably illustrated by Zico Kolter at ICML 2023 in Hawaii, who humorously preempted his talk on the GCG attack with a Terminator slide captioned, "adversarial examples are back"). More concerningly, in the context of AI safety, disrupting safety alignment through fine-tuning is even simpler and harder to mitigate than adversarial examples (arxiv.org/abs/2310.03693, arxiv.org/abs/2310.02949, arxiv.org/abs/2404.01099, arxiv.org/abs/2412.07097, arxiv.org/abs/2502.17424).
In 2023, conducting attack research was enjoyable: simply formulating and demonstrating the existence of vulnerabilities sufficed, as the effectiveness of an attack is inherently compelling. However, in 2024, my advisors started to heavily push me toward working on robustness defense, asserting that identifying problems without striving for solutions is not ambitious enough. While I wholeheartedly agreed, I was acutely aware of the profound challenge in achieving genuine robustness.
After a lot of struggle, we eventually developed this paper. Initially, our exploration focused on constrained supervised fine-tuning (SFT) against fine-tuning attacks. During this process, we discovered a critical bias: models exhibit substantial "first-few-tokens bias" concerning safety (here we acknowledge similar findings by arxiv.org/abs/2401.17256 and arxiv.org/abs/2312.01552, despite differences in our ultimate directions). Using this bias as a technical trick, we imposed strong constraints on the losses of only the initial tokens, relaxing constraints for later tokens. This achieved robustness with significantly lower utility regression.
Nevertheless, we soon recognized that this bias is not merely a technical trick but represents a fundamental issue. Consequently, we shifted our focus to exploring the broader implications of this phenomenon itself, ultimately shaping the current paper.
In writing this paper, I intentionally echoed the style of two seminal works: "Adversarial Examples Are Not Bugs, They Are Features" (arxiv.org/abs/1905.02175) and "Shortcut Learning in Deep Neural Networks" (arxiv.org/abs/2004.07780). The two papers deeply influenced my research style, and receiving the Outstanding Paper award at the culmination of my PhD journey, using a similar writing style, feels both fulfilling and like a tribute to these classics.
Frankly, our work still stands far from fully resolving adversarial robustness. In fact, during writing, we deliberately reduced/avoided using the term "defense," which drew some criticism that our paper reads more like a position paper. Rather, our contribution primarily provides just a simple yet concrete explanation (shallow alignment) for a broadly exploited class of vulnerabilities, enabling causal interventions on models to explore the counterfactual of shallow alignment, namely deep alignment, and demonstrating that such interventions genuinely improve robustness.
Fundamentally, our intervention underscores that model alignment must span the entire generation process rather than being confined to the first few token distributions, a principle articulated explicitly in our paper's title. This concept resonates with several other studies, such as Andy Zou et al.’s Circuit Breakers (arxiv.org/abs/2406.04313), Youliang Yuan et al.’s refusal at every position (arxiv.org/abs/2407.09121), and Yiming Zhang et al.’s backtracking (fri).
To some extent, improved robustness in reasoning models’ safety alignment (openai.com/index/trading-…) might also be related to this principle, as large-scale reinforcement learning for reasoning spontaneously enhances self-correction and recovery.
Yet, adversarial robustness remains unresolved. Adaptive attacks will continuously emerge, potentially perpetuating many cycles of a cat-and-mouse game again. Furthermore, our challenges extend beyond AI safety and jailbreak issues. As frontier models rapidly advance in agentic capabilities, we eagerly anticipate their large-scale deployment to automate numerous tasks. However, currently, robustness and prompt injection significantly hinder this vision. As AI increasingly manages critical workloads and computational systems, robustness failures could pose severe systemic security risks.
Finally, we again extend our sincere appreciation to all friends in the AI safety and AdvML research communities for their ongoing support and encouragement. Let’s continue working together to advance the research on AI safety and adversarial machine learning.

Our recent paper shows: 1. Current LLM safety alignment is only a few tokens deep. 2. Deepening the safety alignment can make it more robust against multiple jailbreak attacks. 3. Protecting initial token positions can make the alignment more robust against fine-tuning attacks.

Congrats to @Abhishek_034 on receiving an Apple Scholars in AIML fellowship! 🎉🍎 The fellowship supports grad students doing innovative research in machine learning and artificial intelligence. Panigrahi is a PhD student advised by @prfsanjeevarora. bit.ly/3Rm8DVD

🔍How does pretraining loss evolve under different LR schedules? 🌟Meet our Multi-Power Law: predicts the full loss curve for various schedules! 🌟Accurate enough to optimize LR schedules directly. 🌟Result? A WSD-like schedule that outperforms the rest! 🔥Accepted at #ICLR2025