Scribe

240 posts

@LearnWithScribe

AI-powered side hustles to land your first $1K client in 30 days. From manual outreach to automated systems. No code needed.

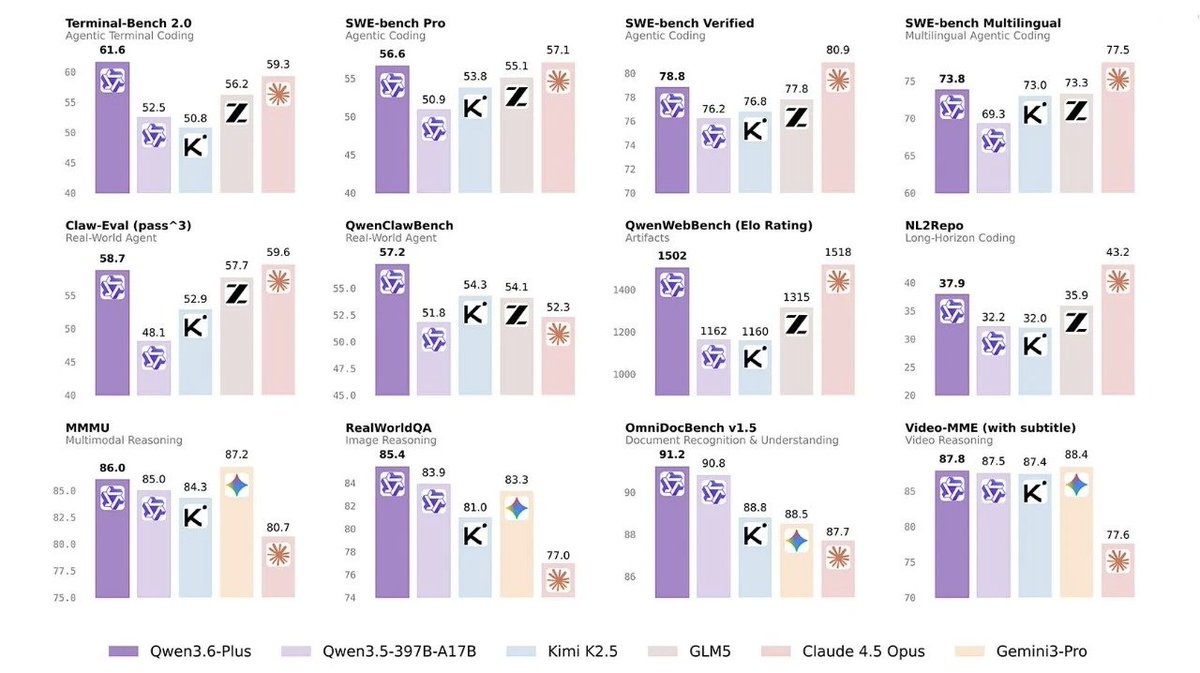

Qwen 3.6 Plus is an incredible model. Well done to the @Alibaba_Qwen team. It blows GPT-5.4-Codex out of the water for agentic tasks / @openclaw, is 3X faster, and is currently offered free through @OpenRouter. openrouter.ai/qwen/qwen3.6-p…

We're launching CodeDB v0.2.53! 538x faster than ripgrep. 569x faster than rtk. 1,231x faster than grep. 0.065ms code search. Pre-built trigram index. Query once, instant forever. 21 issues closed. 14 PRs merged. 7 contributors. One weekend.

Holy moly, MiniMax-M2.7 is amazing. Watch till the end.

So, Anthropic really cut down usage limits, right?

I was floored... OpenAI just published the first comprehensive study of how 700 million people actually use ChatGPT. The results challenge every assumption about AI adoption. We'll break it all down next week in Silicon Carne... it's really interesting 🌶️ Here's everything you need to know in 3 minutes: