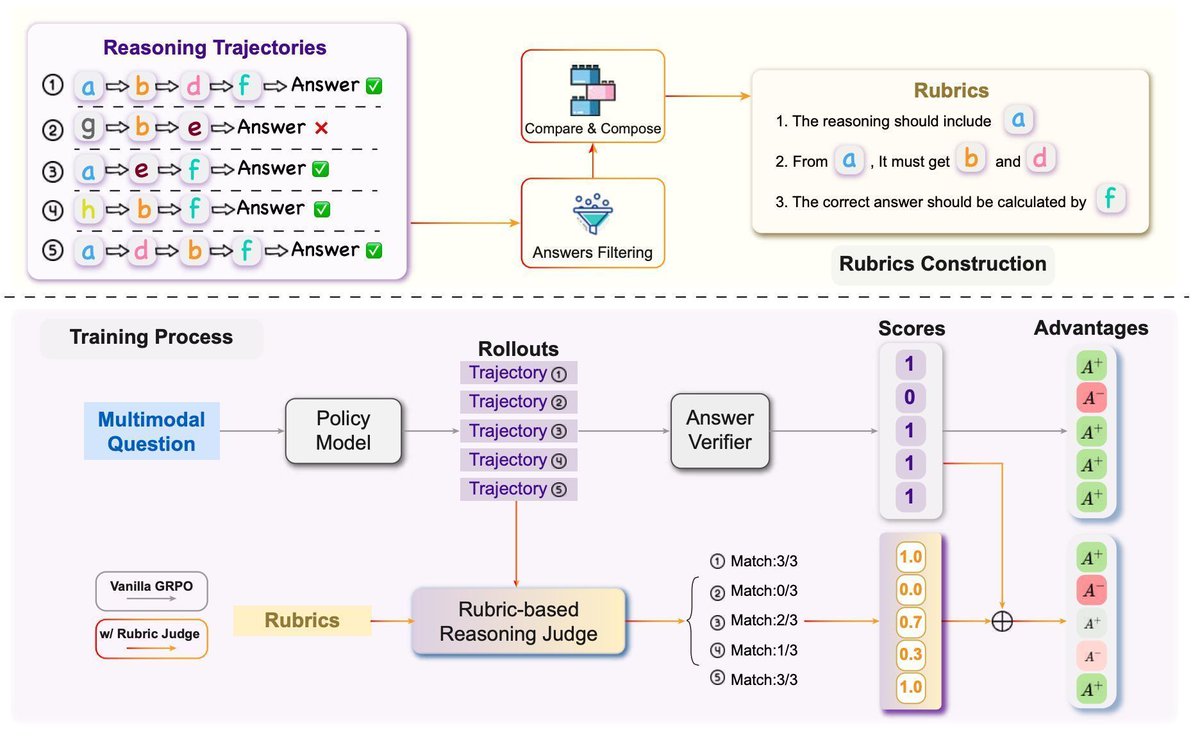

Decentralized RAG (dRAG) lets your database benefit every LLM client. On the other hand, not all data sources are reliable. Managing source reliability on a blockchain can avoid third-party manipulation. Introducing dRAG + Blockchain + Truth Discovery: arxiv.org/abs/2511.07577
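
For a rough feel of what "truth discovery" means here, below is a minimal sketch of a generic iterative scheme: take a reliability-weighted vote on each question, then re-score each source by how often it agrees with the voted answers. This is not necessarily the method in the linked paper, and it leaves out the blockchain part entirely. The function name `truth_discovery`, the source names, and the toy claims are all hypothetical, made up for illustration.

```python
from collections import defaultdict


def truth_discovery(claims, iterations=10):
    """Generic iterative truth discovery (illustrative sketch only).

    claims: dict mapping source -> {question: answer}.
    Returns (truths, weights): the voted answer per question and a
    reliability score per source.
    """
    # Start by trusting every source equally.
    weights = {source: 1.0 for source in claims}
    truths = {}
    for _ in range(iterations):
        # Step 1: reliability-weighted vote for each question.
        votes = defaultdict(lambda: defaultdict(float))
        for source, answers in claims.items():
            for question, answer in answers.items():
                votes[question][answer] += weights[source]
        truths = {q: max(tally, key=tally.get) for q, tally in votes.items()}

        # Step 2: reliability = smoothed rate of agreement with the vote.
        for source, answers in claims.items():
            agree = sum(1 for q, a in answers.items() if truths[q] == a)
            weights[source] = (agree + 1) / (len(answers) + 2)
    return truths, weights


# Toy data (hypothetical): source_c disagrees with the others on q1 and q3.
claims = {
    "source_a": {"q1": "x", "q2": "y", "q3": "z"},
    "source_b": {"q1": "x", "q2": "y", "q3": "z"},
    "source_c": {"q1": "w", "q2": "y", "q3": "v"},
}
truths, weights = truth_discovery(claims)
print(truths)
print(weights)
```

In this toy run the source that disagrees most ends up with the lowest weight. A dRAG system would presumably keep such scores somewhere no single party can quietly edit, which is the role the post assigns to the blockchain.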
