Meng Jiang

@Meng_CS

Frank M. Freimann Collegiate Professor at Notre Dame CSE | Data Mining | NLP | AI

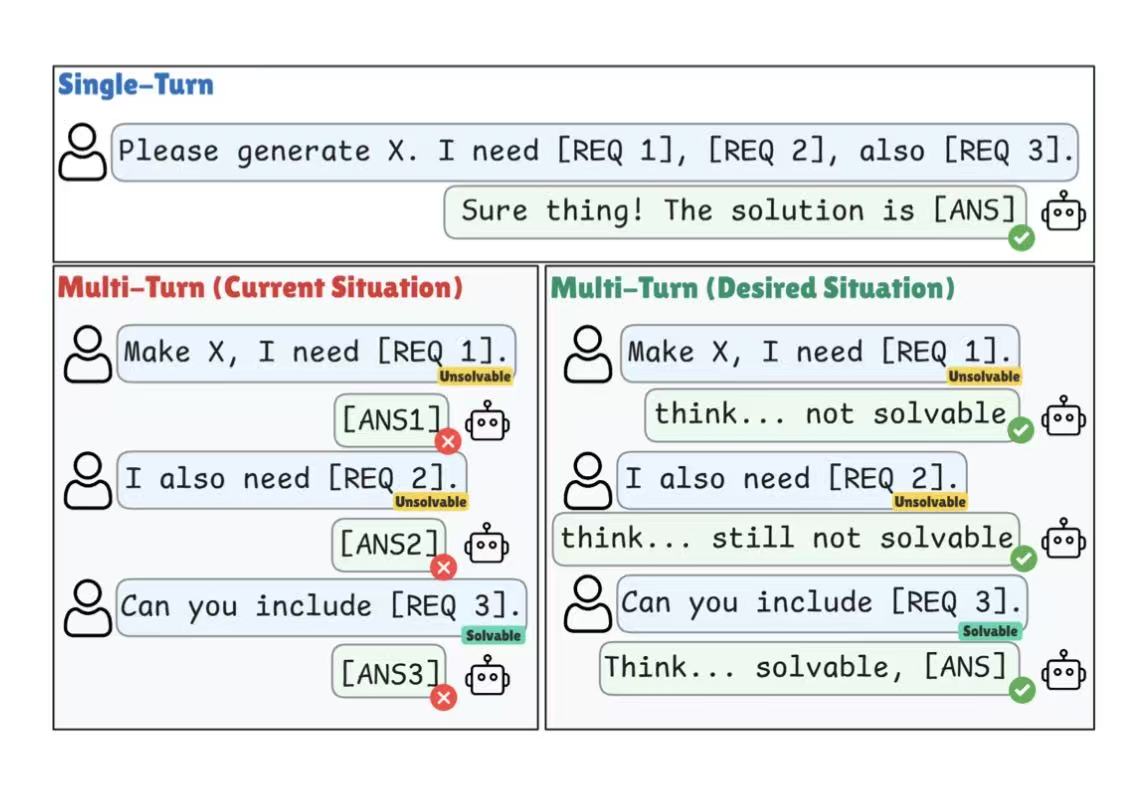

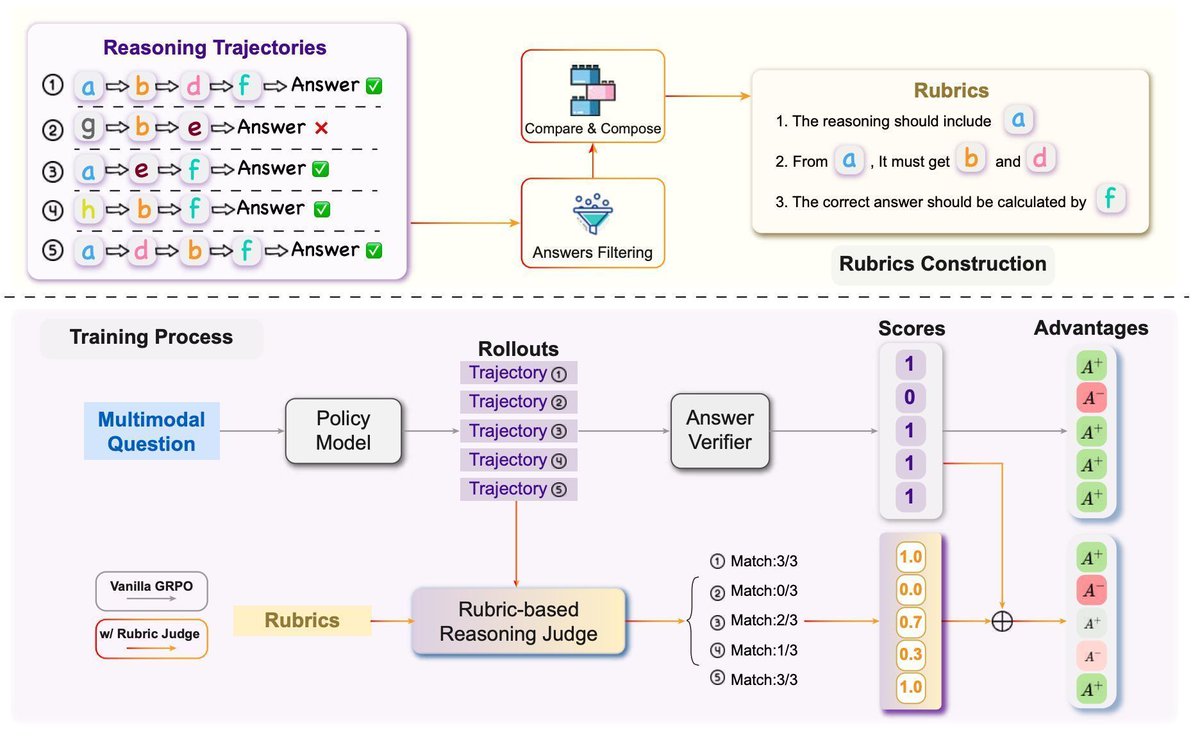

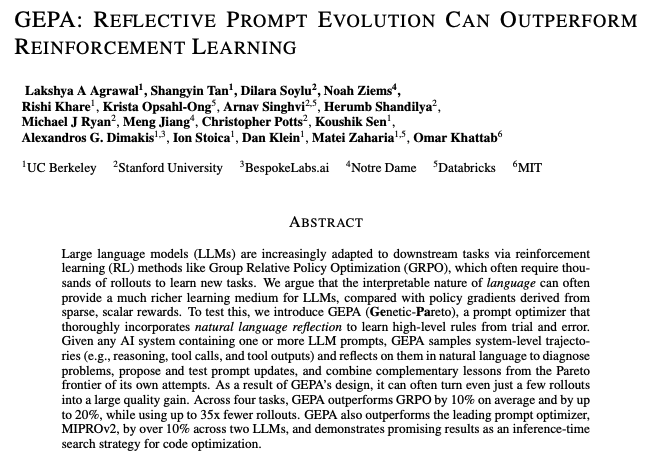

How does prompt optimization compare to RL algos like GRPO? GRPO needs 1000s of rollouts, but humans can learn from a few trials—by reflecting on what worked & what didn't. Meet GEPA: a reflective prompt optimizer that can outperform GRPO by up to 20% with 35x fewer rollouts!🧵

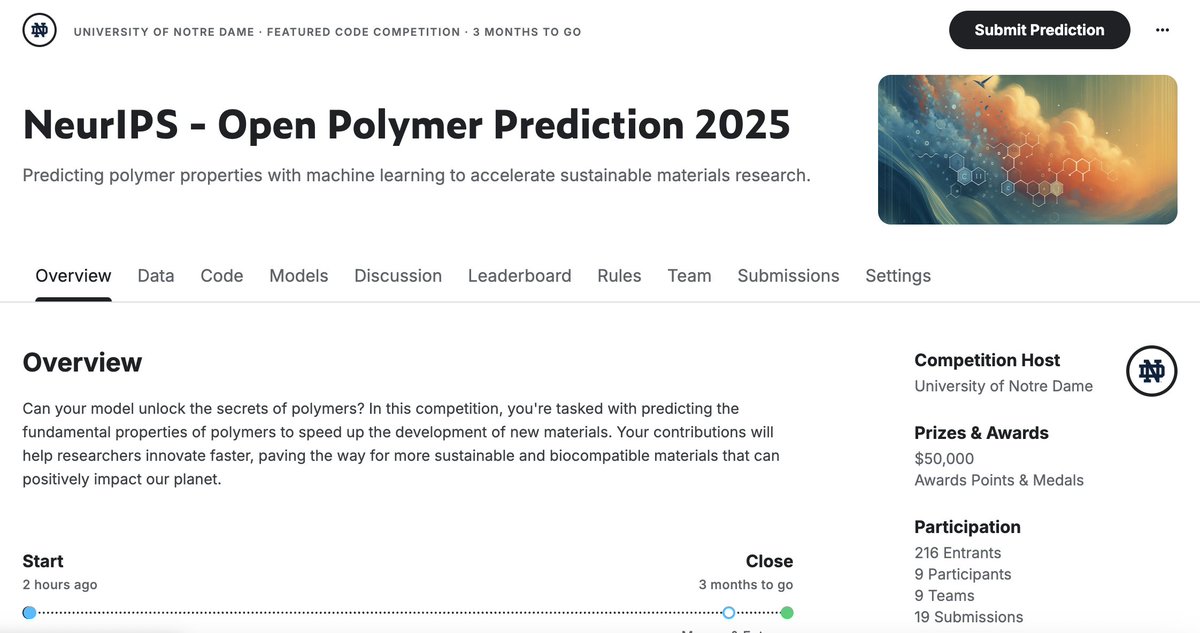

🎓💰🔬 Want to learn machine learning, win a cash prize (USD 50K in total!!), and help drive real progress in discovering new polymer materials? All available at our NeurIPS 2025 Open Polymer Challenge: open-polymer-challenge.github.io 🚀 Join now (Kaggle): kaggle.com/competitions/n… 📈