Pinned Tweet

Apebitrageur

2.5K posts

@arbitrape

Crypto researcher | AI enthusiast | Quant newbie

Joined November 2021

276 Following, 318 Followers

Apebitrageur retweeted

Andrej,

I’m John Fletcher. I have a PhD in mathematics and theoretical physics from Cambridge, and since 2016 I have been working full-time on the problem of how to coordinate untrusted distributed compute for algorithmic innovation.

I listened to your No Priors conversation and recognised the architecture you were describing: commits that build on each other, computational asymmetry (hard to find, cheap to verify), an untrusted pool of workers collaborating through a blockchain-like structure.

The result is The Innovation Game (TIG), which has been in continuous operation since mid-2024. The correspondence is so close that I thought it worth writing.

The short version: roughly 7,000 Benchmarkers test algorithms submitted by Innovators by solving instances of asymmetric computational challenges (SAT, Vehicle Routing, Quadratic Knapsack, Vector Search, among others).

This testing is "proof of work" in the technical sense of Dwork and Naor (1992). Innovators earn rewards proportional to adoption by the Benchmarkers. The repository of algorithms is open source (github.com/tig-foundation…).

The system is already producing state-of-the-art results. For the Quadratic Knapsack Problem, 476 iterative submissions by independent contributors brought solution quality to a level that now exceeds methods published by Hochbaum et al. in the European Journal of Operational Research (2025).

We are working with Thibaut Vidal (Polytechnique Montréal), who has submitted a state-of-the-art vehicle routing algorithm directly to TIG, and with Yuji Nakatsukasa (Oxford) and Dario Paccagnan (Imperial College London), among many others.

One of TIG’s active challenges is directly relevant to your autoresearch work: an optimiser for neural network training (play.tig.foundation/challenges?cha…), where Innovators compete to develop an improved optimiser (see screenshot).

One way in which TIG extends the vision is on the economic side. In our view, a monetary incentive is required; otherwise the open strand simply cannot compete at scale. TIG’s open source dual licensing model (designed by my co-founder Philip David, who was General Counsel at Arm Holdings for over a decade and was the architect of Arm’s licensing strategy) is intended to solve that problem.

I expect we have each thought about parts of this that the other hasn’t. Happy to talk whenever suits.

John Fletcher

tig.foundation

Andrej Karpathy @karpathy

Thank you Sarah, my pleasure to come on the pod! And happy to do some more Q&A in the replies.

Apebitrageur retweeted

Today’s Figma MCP update makes it one of the strongest integrations with Claude Code I’ve seen.

You can now use Claude Code to design in Figma with the full context of your design systems.

Figma @figma

Now you can use AI agents to design directly on the Figma canvas, with our new use_figma MCP tool and skills to teach them. Open beta starts today.

Apebitrageur retweeted

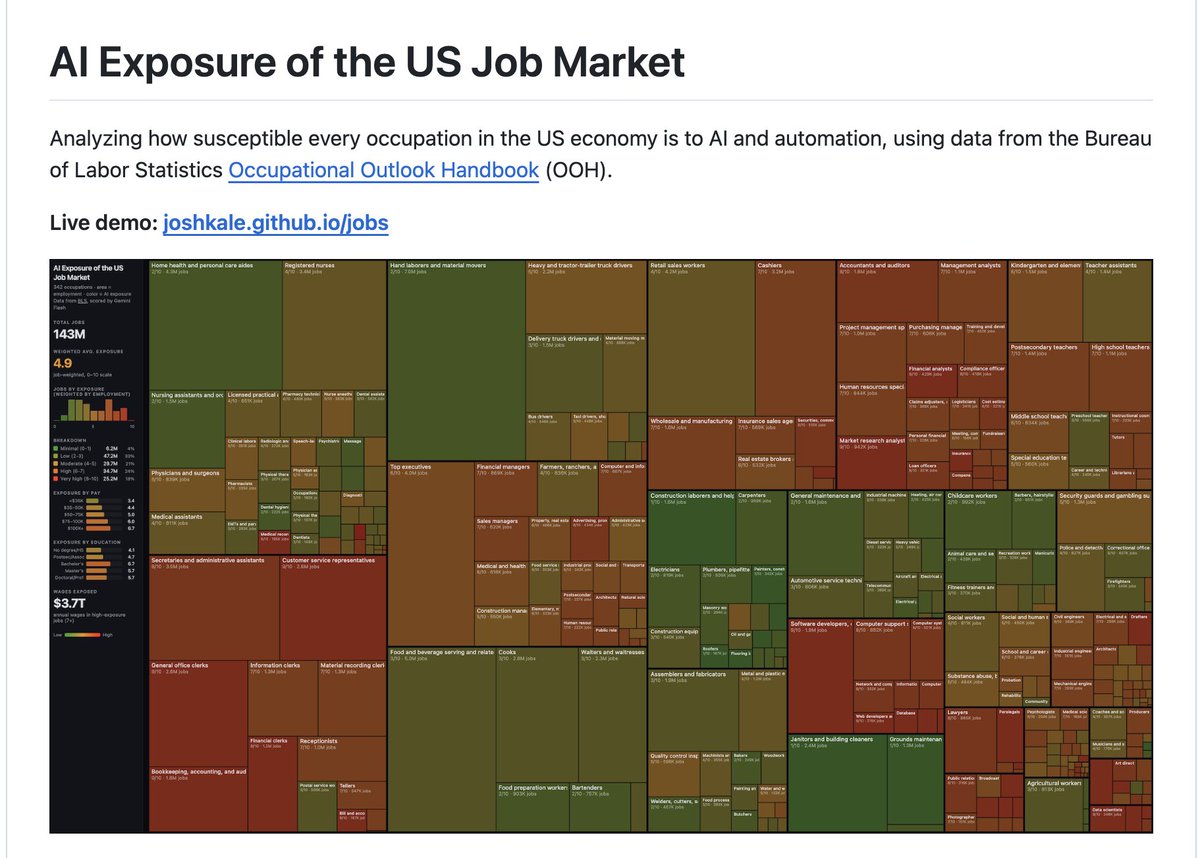

Karpathy scored every job in America on AI replacement risk. Then he deleted it.

It went viral last night. Elon replied. News outlets picked it up. So I brought it back to life.

Cloned the entire repo to play with before it went down. Every file. The scoring data, the full pipeline, the interactive visualization. All of it.

It's back up here: github.com/JoshKale/jobs

The data was too noteworthy to let vanish. 342 occupations scored 0-10 on how much AI will reshape them. Average exposure across the entire US economy: 5.3/10.

If your job lives on a screen, it's worth taking a look.

Apebitrageur retweeted

I accidentally discovered how to compress a month of research into 3 hours.

A founder at a YC company showed me his Claude setup. I thought he was just fast. Then I watched him build an entire go-to-market strategy for a market he'd never worked in before.

Here's exactly what he did:

First: he didn't ask Claude to "research the market."

He fed it 8 competitor landing pages, 3 earnings call transcripts, 12 customer reviews, and a Reddit thread of complaints.

Then he asked one question:

"What does every successful player in this market understand that their customers never say out loud?"

Not "summarize these." Not "analyze the competition."

The unspoken insight. The thing that takes founders 2 years of customer calls to figure out.

But the next part is what broke my brain.

He followed up with:

"Now show me the 3 assumptions this entire market is built on, and what would have to be true for each one to be wrong."

In 15 minutes he had the attack surface of an entire industry.

The blind spots. The fragile consensus. The opening nobody was talking about.

Most founders spend 6 months doing customer discovery just to find one of those.

Then he did something I've never seen before.

He asked:

"Write 5 questions a world-class investor would ask to destroy this business idea, then answer each one using only the evidence in these documents."

He spent the next 2 hours stress-testing every assumption. Every weak answer triggered a follow-up:

"What's the strongest version of this argument and where does it still break?"

By hour 3, he had a strategy deck that felt like it came from someone who'd spent a decade in the space.

The tool didn't change. The questions did.

Most people treat Claude like a faster Google.

These founders are using it like a thinking partner who has read everything and has no ego about being wrong.

The difference between 3 hours and 3 months isn't the amount of information.

It's knowing which questions actually matter.

Apebitrageur retweeted

From static to motion, @canva's professional design suite just got even bigger. Created by animators, for animators. Welcome to the family, @cavalry__app 💚

Read more on the latest news: canva.com/newsroom/news/…

Apebitrageur retweeted

3/5 winners of the Claude Code hackathon are:

- attorney

- cardiologist

- roads systems worker

💀

Claude @claudeai

Our latest Claude Code hackathon is officially a wrap. 500 builders spent a week exploring what they could do with Opus 4.6 and Claude Code. Meet the winners:

Apebitrageur retweeted

@big_duca Someone has to prompt the Claudes, talk to customers, coordinate with other teams, decide what to build next. Engineering is changing and great engineers are more important than ever.

Apebitrageur retweeted

❤️ We are partnering with @MiniMax_AI to give Ollama users free usage of MiniMax M2.5 for the next couple of days!

ollama run minimax-m2.5:cloud

Use MiniMax M2.5 with OpenCode, Claude Code, Codex, OpenClaw via ollama launch!

OpenCode:

ollama launch opencode --model minimax-m2.5:cloud

Claude:

ollama launch claude --model minimax-m2.5:cloud

MiniMax (official) @MiniMax_AI

Apebitrageur retweeted

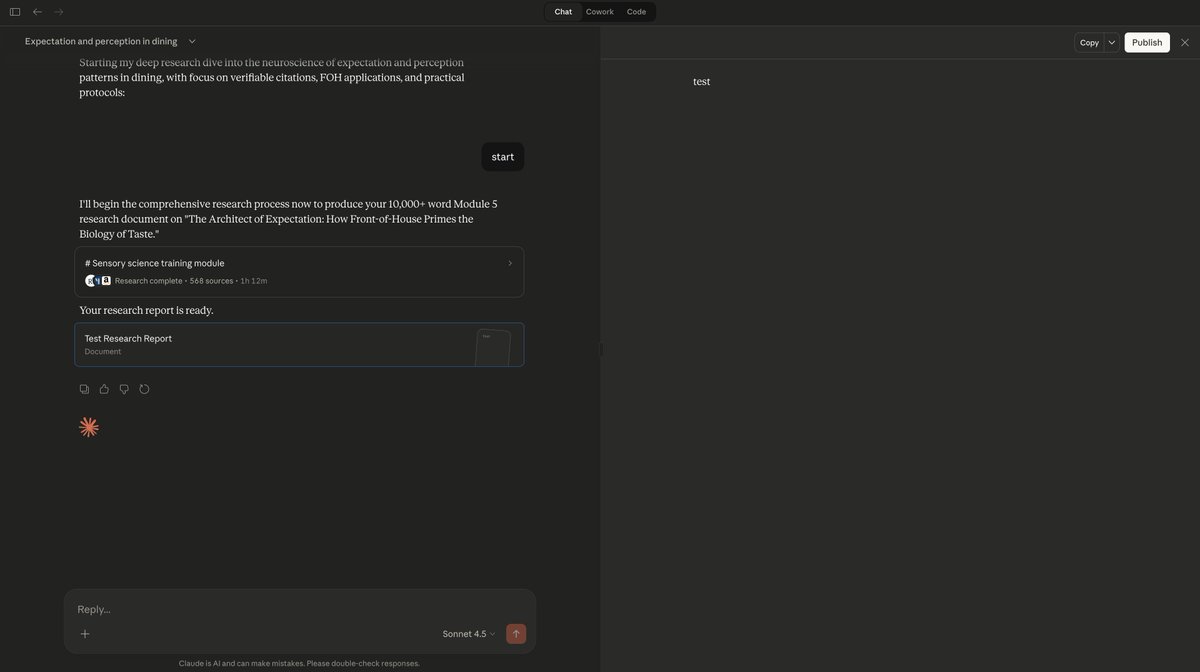

@claudeai @AnthropicAI Some context — this wasn't a simple "research X for me" prompt.

It was a highly detailed, multi-layered research request with specific structural requirements, cross-domain analysis, and deep comparative data.

Feels like the report hit a max capacity wall and silently failed.

POV: You wait 1 hour 12 minutes for @claudeai Deep Research to finish

The final report:

"test"

That's the whole thing. The entire report. 72 minutes of deep research condensed into 4 characters.

@AnthropicAI is this a known bug or did I just unlock a new feature? 😭
