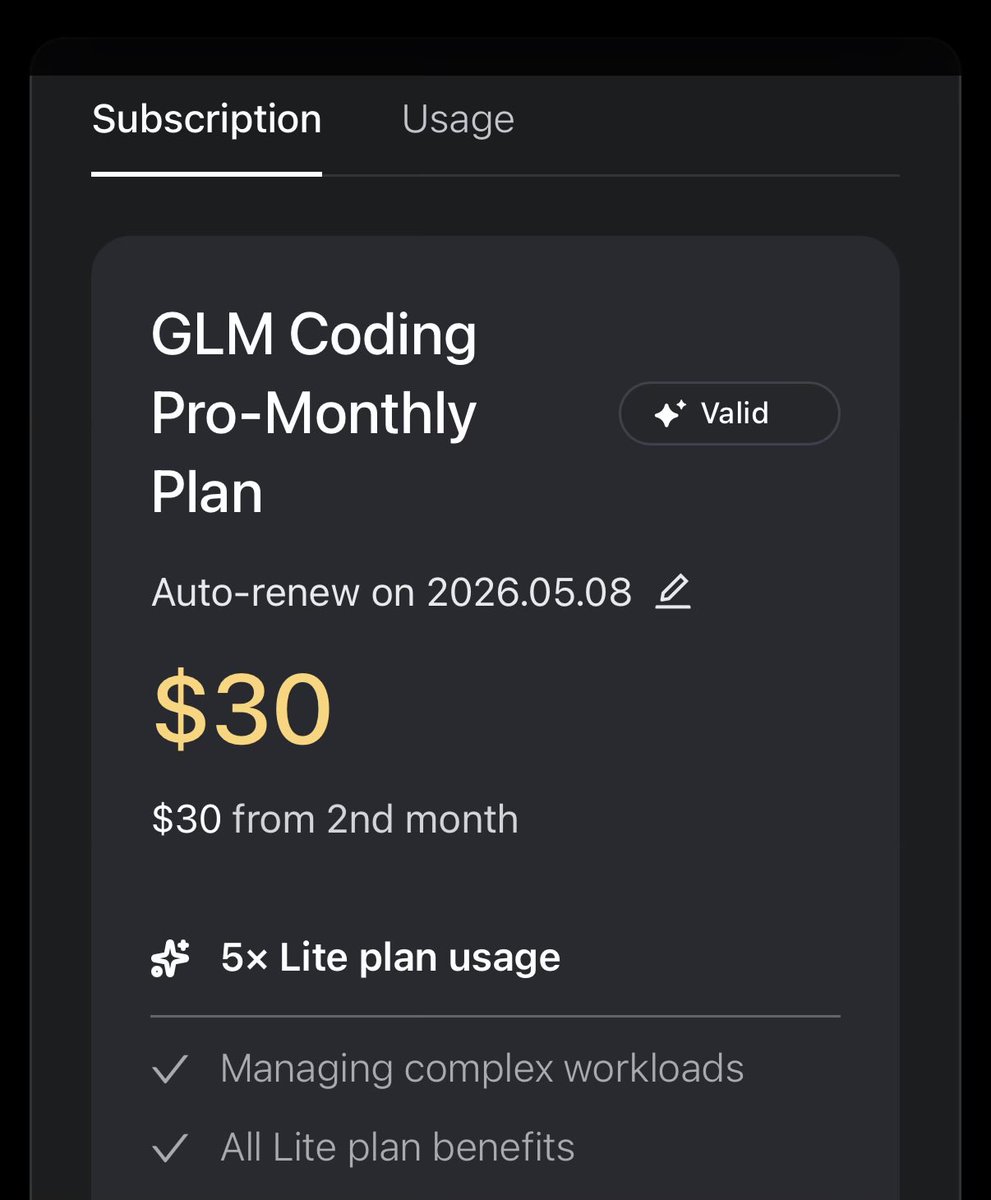

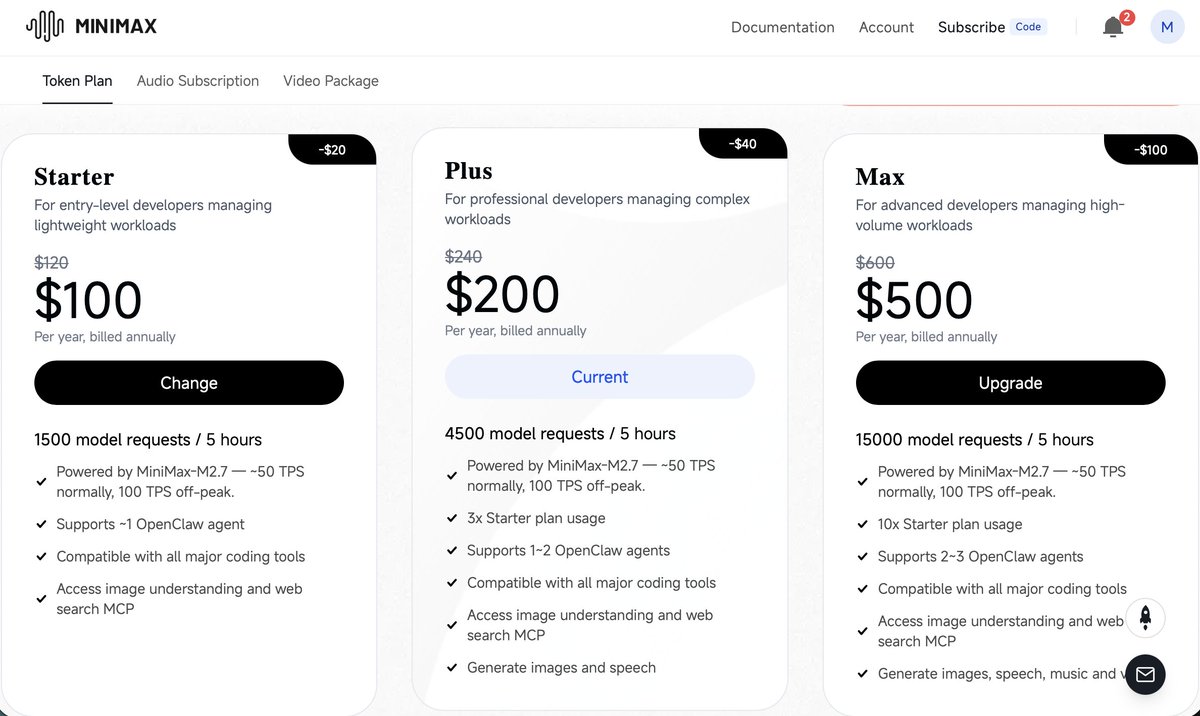

OK, that was fast. GLM 5.1 was the best model in terms of quality and price, for just a few days. 🙈 At this price it's still a good option, but instead of $72 I would rather pay $100 for Codex, since its models are more reliable. For GLM, go for OpenCode GO at $5. 4400 requests per month is not bad for playing around with it. It's slow, but it works. If you like it, go with Lite. The king of value is still MiniMax 2.7.