Changyu Chen

@Cameron_Chann

PhD student @sgSMU. RL x LLMs. Previously @NTUsg, @ZJU_China

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length.
🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models.
🔹 DeepSeek-V4-Flash: 284B total / 13B active params. Your fast, efficient, and economical choice.
Try it now at chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today!
📄 Tech Report: huggingface.co/deepseek-ai/De…
🤗 Open Weights: huggingface.co/collections/de…
1/n

Self-play led to superhuman performance in Go, so why hasn’t it done the same for LLMs? In practice, long-run self-play plateaus, much like RL. We study why this happens and build a self-play algorithm that scales better. With a 7B model, it solves as many problems as a model 100x bigger does at pass@4.
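pass@k is commonly read as the probability that at least one of k sampled attempts at a problem is correct, and it is often estimated with the unbiased estimator popularized by OpenAI's Codex evaluation: with n samples drawn and c of them correct, pass@k = 1 − C(n−c, k) / C(n, k). The post does not say which estimator the authors used, so treat this minimal Python sketch as an assumption, not their evaluation code:

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem.

    n: number of samples drawn, c: number of correct samples, k: attempt budget.
    Assumes the standard 1 - C(n-c, k) / C(n, k) estimator.
    """
    if k > n:
        raise ValueError("need at least k samples to estimate pass@k")
    if n - c < k:
        # Every size-k subset of the samples contains at least one correct one.
        return 1.0
    # Probability that a random size-k subset contains no correct sample.
    return 1.0 - comb(n - c, k) / comb(n, k)
```

A benchmark-level figure such as "problems solved at pass@4" would then be this value averaged (or summed) over all problems in the set.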

"Actually, we (vllm) get more users from the simple UX than vllm performance" For our third guest, we welcome @woosuk_k, co-founder & CTO of @inferact and creator of @vllm_project. To us, Woosuk is a unique guest, and we are amazed by the user-centric perspective on LLM inference he shared — from what makes the vLLM project successful, to new application scenarios to tailor inference to, and to how to support continual learning from user signals, and more. 0:00 - Prelude: Introducing Woosuk and Inferact 3:00 - Woosuk’s First PhD Project 6:00 - How the vLLM Project Got Started 9:18 - AI Infra Needs More Than Just Efficiency 14:08 - How AI Infra and Human-centered AI Are Connected 15:01 - How to Prioritize Feature Requests for Popular AI Infra 18:18 - Streaming Requests and Realtime API 24:05 - Multi-turn, Agentic, Proactive LLMs 27:03 - How to Design AI Infra in a Principled Way 29:13 - How to Design an AI Inference Engine for Continue Learning with RL 35:05 - Would LoRA Training Affect RL Infra Design? 37:28 - Why Start an AI Inference Infra Startup? 40:46 - What Effortless Inference with Open-source Models Means for Developers 43:46 - A Vision for On-device AI Inference 46:19 - Can Today’s Coding Agents Create vLLM?

A few clarifications to common questions about our thickets paper:
1. Is this just ensembling? Seed averaging? Bagging? ...
2. Is this just Qwen?
3. Does it make inference K times slower?
4. Is RL dead? Is post-training dead?

Finally finished! If you're interested in an overview of recent methods in reinforcement learning for reasoning LLMs, check out this blog post: aweers.de/blog/2026/rl-f… It summarizes ten methods, tries to highlight their differences and trends, and includes a collection of open problems.

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play with it over the weekend. It's basically the nanochat LLM training core stripped down to a single-GPU, single-file version of ~630 lines of code, then:
- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)
The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (lower validation loss by the end of the run) for the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.
github.com/karpathy/autor…
Part code, part sci-fi, and a pinch of psychosis :)
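The keep-if-better loop described above amounts to greedy hill climbing over training setups. The Python sketch below is a minimal illustration under stated assumptions, not code from the repo: `propose` and `run_5min` are hypothetical stand-ins for the agent's edit and the fixed-budget training run, and the `Branch` list stands in for the git commits on the feature branch.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Branch:
    """Hypothetical stand-in for a git feature branch: accepted configs act as commits."""
    commits: list = field(default_factory=list)


def research_loop(
    base_config: dict,
    propose: Callable[[dict], dict],    # hypothetical: the agent edits the training setup
    run_5min: Callable[[dict], float],  # hypothetical: fixed-budget run returning final val loss
    iterations: int,
) -> tuple:
    """Greedy keep-if-better search; returns (best_config, best_loss, branch)."""
    branch = Branch()
    best_cfg = base_config
    best_loss = run_5min(best_cfg)
    branch.commits.append(best_cfg)  # the starting point is the first "commit"
    for _ in range(iterations):
        candidate = propose(best_cfg)
        loss = run_5min(candidate)
        if loss < best_loss:
            # Commit only changes that lower the final validation loss.
            best_cfg, best_loss = candidate, loss
            branch.commits.append(candidate)
        # Otherwise the change is discarded, like resetting the working tree.
    return best_cfg, best_loss, branch
```

With a toy objective such as `(cfg["lr"] - 3e-3) ** 2` and a proposer that halves the learning rate, the branch accumulates commits until halving stops helping, after which every further proposal is rejected. A real agent would propose far more varied edits (architecture, optimizer, hyperparameters), but the accept/reject rule stays the same.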

Advanced Machine Intelligence (AMI) is building a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe. We’ve raised a $1.03B (~€890M) round from global investors who believe in our vision of universally intelligent systems centered on world models. This round is co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions, along with other investors and angels across the world. We are a growing team of researchers and builders, operating in Paris, New York, Montreal and Singapore from day one. Read more: amilabs.xyz AMI - Real world. Real intelligence.

AI used to be a distant promise; now it permeates our lives. AI is getting better, but is it making us better? We are promised that AI will augment our minds, but how?
We (@EchoShao8899, @shannonzshen, and @michaelryan207) are excited to launch the Augmented Mind Podcast (The AM Podcast), a podcast about technical human-centered AI work. We'll share compelling research, infrastructure, and systems through monthly episodes featuring interviews with the pioneering minds behind them. We're releasing EP0 today to share who we are, why we started this podcast, and what we're looking forward to.
0:00 - Prelude: the problems we care about
1:48 - Host introduction
2:03 - Why we started the AM Podcast
2:31 - Hot takes on human-centered AI
10:45 - Format of our podcast
11:28 - Unique technical challenges in human-centered AI
16:45 - Let the journey begin!

GPT-5.2's new spreadsheet and presentation capabilities are just skills, and I extracted them into a GitHub repo. Here’s what’s in `/home/oai/skills`:
- docs/
- pdfs/
- spreadsheets/
Skills:
docs/render_docx.py
docs/skill.md
pdfs/skill.md
spreadsheets/artifact_tool_spreadsheet_formulas.md
spreadsheets/artifact_tool_spreadsheets_api.md
spreadsheets/skill.md
spreadsheets/spreadsheet.md
---
I am skill-pilled. Don't build agents, build skills.

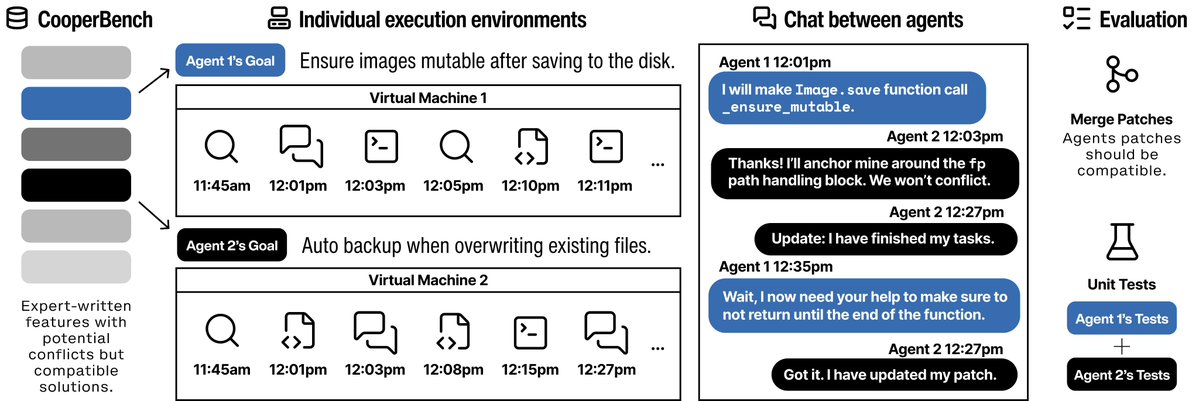

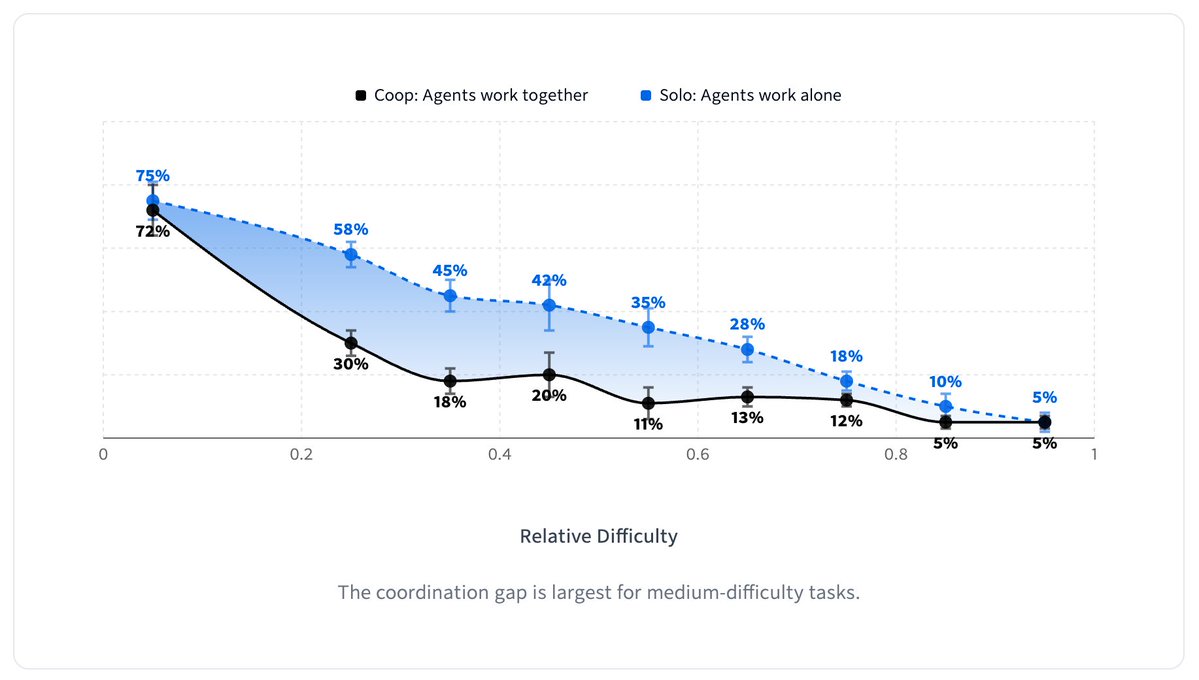

Congrats to the following authors on receiving Outstanding Paper Awards at @SEAWorkshop!
GEM: A Gym for Agentic LLMs
Zichen Liu, Anya Sims, Keyu Duan, Changyu Chen, Haotian Xu, Simon Yu, Chenmien Tan, Shaopan Xiong, Weixun Wang, Bo Liu, Hao Zhu, Weiyan Shi, Diyi Yang, Wee Sun Lee, Min Lin